overview

A map of AI safety strategies.

Each strategy is a bet about which failure mode binds: which one actually gates a good outcome. The survey catalogues 76 named bets, the 15 levers they pull, and which combinations compose or conflict.

Two strategies conflict only when they pull the same lever in opposite directions, which is rare. Most pairs compose. Most public proposals combine three or four levers without stating which bet is load-bearing; the portfolio audit exposes that concealed concentration.
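The conflict rule can be sketched as a small classifier. This is a minimal illustration that assumes each strategy sits on exactly one lever with one direction (↓, • or ↑), as in the plotted listing. The `Strategy`, `Direction` and `relation` names are hypothetical. Treating a neutral (•) position as never opposing anything is also an assumption, not the survey's stated rule.

```python
from dataclasses import dataclass
from enum import Enum


class Direction(Enum):
    DOWN = -1     # ↓ pulls the lever in the negative direction
    NEUTRAL = 0   # • neutral or frame-rejecting
    UP = 1        # ↑ pulls the lever in the positive direction


@dataclass(frozen=True)
class Strategy:
    name: str
    lever: str
    direction: Direction


def relation(a: Strategy, b: Strategy) -> str:
    """Classify a strategy pair, assuming one lever per strategy (hypothetical helper)."""
    if a.lever != b.lever:
        return "compose"    # different levers: the bets do not collide
    if Direction.NEUTRAL in (a.direction, b.direction):
        return "compose"    # assumption: a neutral position opposes nothing
    if a.direction == b.direction:
        return "reinforce"  # same lever, same direction
    return "conflict"       # same lever, opposite directions


pause = Strategy("Pause", "Speed", Direction.DOWN)
acceleration = Strategy("Acceleration", "Speed", Direction.UP)
compute = Strategy("Compute governance", "Speed", Direction.DOWN)
antitrust = Strategy("Antitrust primacy", "Concentration", Direction.DOWN)

print(relation(pause, acceleration))  # conflict
print(relation(pause, compute))       # reinforce
print(relation(pause, antitrust))     # compose
```

Under this single-lever reading, any pair on different levers falls through the first branch. This is the sense in which most pairs compose.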

the field, every strategy, plotted

76 strategies · 15 levers

Speed ↕

Bureaucratic slowdown

Speed ↓ · institutional · friction

Time itself is a safety resource; procedural gates produce time in ways substantive regulation cannot, and they also generate audit trails.

Compute governance

Speed ↓ · market economic · state coercion

The compute supply chain is a stable chokepoint, and state coordination on licensing, export controls, and reporting thresholds can govern capability indirectly.

Energy choke point

Speed ↓ · market economic · state coercion

Frontier AI is energy-limited; grid regulators, interconnect queues, and tariff structure bind training pace without new AI-specific authority.

Pause

Speed ↓ · speed timing · consent

Time is the binding constraint: alignment and governance can catch up if frontier training halts above some capability threshold.

Sabotage

Speed ↓ · speed timing · unilateral force

Governance has not produced meaningful constraint, and direct action against hostile labs has a non-zero historical base rate of producing slowdown.

Acceleration

Speed ↑ · speed timing · market

Speed of capability itself is the safety lever; alignment improves with compute, defence compounds with offence, and wealth funds resilience.

Race to aligned superintelligence

Speed ↑ · speed timing · state coercion

Alignment is solvable in the window, and a single aligned superintelligence in a legitimate state's hands beats the counterfactual of coordination failure.

Concentration ↕

Antitrust primacy

Concentration ↓ · institutional · state coercion

Power concentration is the binding constraint and is visible under current competition law; preventing decisive advantage preserves the option space every strategy depends on.

Coup prevention first

Concentration ↓ · institutional · state coercion

One actor using AI to seize durable decision authority beyond democratic reversibility is the terminal failure; every other decision routes through whether it reduces that risk.

Distributed builders

Concentration ↓ · institutional · market

No single failure mode wins if capability is distributed across many independent actors, and concentration risk exceeds diffusion risk.

Multipolarity

Concentration ↓ · institutional · treaty

Stable equilibrium among several roughly equal AI powers is safer than single dominance or uncoordinated chaos, producing restraint through mutual capability awareness.

Sovereign wealth

Concentration ↓ · market economic · state coercion

Concentration of AI surplus, not AI capability, is the binding failure mode; broad ownership dissolves the political instability driving other strategies.

Centralised AI project

Concentration ↑ · institutional · state coercion

Merging frontier development into one state-funded project reduces the number of failure modes and absorbs race pressure by being the only game in town.

Military primacy

Concentration ↑ · institutional · unilateral force

Strategic competition between states dominates AI development; the state with the most capable AI is best positioned to secure safety and impose constraints on others.

Public AI

Concentration ↑ · institutional · state coercion

Private ownership of frontier AI concentrates decision authority incompatibly with the technology's distributional stakes; public ownership aligns incentives with broad welfare.

Control mechanism

Confucian role ethics

Control mechanism • · frame rejection · consent

Western alignment assumes isolable preferences can be learned and matched; role ethics judges behaviour by its fit with position and relationship, producing a less brittle, more context-sensitive standard.

Dharma conformity

Control mechanism • · frame rejection · consent

Alignment frames AI as a tool for an external principal; a dharma frame treats AI as a type of entity whose safety lies in conformity to its fitting functions.

Reframe AI

Control mechanism • · frame rejection · consent

The dominant alignment frame produces the wrong problem statement; switching frames either dissolves the problem or recasts it as tractable.

AI containment

Control mechanism ↑ · ai artefact · friction

Useful AI does not require unrestricted actuation; strong capability in a contained system is better than limited capability uncontained.

AI for safety

Control mechanism ↑ · ai artefact · consent

The same capability that makes AI dangerous makes it uniquely useful for automating alignment research and oversight.

Alignment first

Control mechanism ↑ · ai artefact · consent

Technical alignment is solvable before critical capability thresholds close, and aligned systems compose safely into aligned populations.

Counter AI AI

Control mechanism ↑ · ai artefact · consent

AI attacks happen at speeds humans cannot observe; defence must happen in AI, with guardian AI systems continuously evaluating adversary AI.

Interpretability first

Control mechanism ↑ · ai artefact · consent

Mechanistic understanding is a precondition for reliable oversight; behavioural evaluation without interpretability cannot rule out deceptive alignment.

Safe by construction AI

Control mechanism ↑ · ai artefact · consent

Safety is a property that can be mathematically specified and mechanically verified for the class of systems being built.

Institutional capacity

Voluntary restraint

Institutional capacity • · institutional · consent

Labs know more about what safety requires than regulators, and self-binding commitments capture that expertise without legislative lag.

Academic firewalling

Institutional capacity ↑ · institutional · consent

Commercial capture of academic AI research produces aligned-with-industry capacity; firewalling restores critical distance from which genuine critique and alternative research programmes emerge.

AI worker collective action

Institutional capacity ↑ · institutional · friction

The frontier lab workforce is small, specialised, and hard to replace; collective refusal binds lab behaviour more than external regulation because replacements are unavailable on the relevant timeframe.

Arms control treaty

Institutional capacity ↑ · institutional · treaty

Sovereigns accept binding constraints faster when they negotiate them directly than when the constraints are delegated to agencies; the historical base rate for durable restraint is treaty-based.

Criminal liability

Institutional capacity ↑ · legal individual · state coercion

Civil liability is shareholder-absorbed; criminal exposure for named individuals reorients corporate safety practice where civil fines do not.

Governance first

Institutional capacity ↑ · institutional · state coercion

Institutional capacity is the binding constraint; without it no technical success prevents misuse, capture, or concentration.

Insurance mandate

Institutional capacity ↑ · market economic · state coercion

Markets update faster than regulators and have skin in the game; mandatory catastrophic coverage makes reinsurance the de facto safety regulator.

International AI agency

Institutional capacity ↑ · institutional · treaty

AI risk is inherently cross-border so national regulation is leaky by construction, and only a dedicated international body with inspection rights can bind the risk surface.

Liability driven safety

Institutional capacity ↑ · market economic · state coercion

Courts plus insurance markets produce better risk allocation than agencies, by pricing uncertainty and adapting to new technologies through precedent.

Regulated utility

Institutional capacity ↑ · market economic · state coercion

Frontier AI has natural monopoly characteristics (scale, network effects, capital intensity); rate-of-return regulation removes the profit incentive for speed racing.

Scientific accumulation

Institutional capacity ↑ · institutional · consent

The field does not yet know enough about AI to choose a strategy well, so accelerating the science accelerates eventual policy.

Resilience

Hedge via exit

Resilience ↑ · non preventive · consent

Primary strategy failure is non-negligible and a fraction of civilisational value can be preserved separately from the main trajectory.

Portfolio hedge

Resilience ↑ · non preventive · consent

Uncertainty about which strategy family's bet is correct exceeds the expected return from concentrating on any single one.

Resilience first

Resilience ↑ · non preventive · consent

Brittleness of the surrounding world, not AI capability itself, is the binding constraint; a resilient world absorbs failures and recovers.

Scope ↕

Abandon superintelligence

Scope ↓ · speed timing · treaty

The risk of superintelligence is unbounded while the value forgone is bounded; permanent global coordination against the technology is possible enough.

Capability ceiling

Scope ↓ · ai artefact · state coercion

Some capability level captures most economic value while avoiding most risk, is identifiable before crossing, and can be verifiably enforced.

Embodiment requirement

Scope ↓ · ai artefact · state coercion

The dangerous properties of frontier AI (unbounded replication, parallelism, speed, reach) are artefacts of disembodiment; physical presence caps action rate regardless of inference rate.

Narrow AI preservation

Scope ↓ · ai artefact · state coercion

Capability is not the problem; generality is. Narrow AI captures economic value with bounded scope while general systems drive the risk.

Rate limited AI

Scope ↓ · ai artefact · state coercion

Most AI-caused catastrophe requires speed; slow AI, even if arbitrarily capable, is supervisable, and rate limits are easier to enforce than capability limits.

Red line capability

Scope ↓ · ai artefact · state coercion

Most risk comes from a small number of identifiable capabilities that can be banned outright while the rest of the frontier advances.

Small model first

Scope ↓ · ai artefact · market

Safety risk rises with scale via emergent capability, opacity, and energy footprint; a small-model research culture produces easier-to-interpret systems.

Differential technology development

Scope • · ai artefact · consent

The offence-defence balance is adjustable; defensive and verification applications can compound faster than offensive ones if deliberately funded.

Sunset clause

Scope ↑ · institutional · state coercion

The default direction of AI governance is toward permanent permission; every new capability becomes an entitlement. Reversing the default concentrates deliberative attention on re-authorisation, which is where it matters.

Test ground

Scope ↑ · institutional · state coercion

Empirical data on AI impacts requires deployment somewhere; concentrated deployment in a defined testbed produces data without generalising risk. Testbed consent produces legitimacy that uncontrolled deployment lacks.

Action authority ↕

AI as sovereign entity

Action authority ↓ · frame rejection · state coercion

At least one jurisdiction will grant a specific AI sovereign or quasi-sovereign decision authority within a decade, reshaping the legal category of legitimate authority.

AI self directed

Action authority ↓ · frame rejection · n/a

An aligned AI with agency should itself reason about strategy rather than deferring to humans entirely on the strategic question.

Decouple reasoning from action

Action authority ↑ · ai artefact · state coercion

Most catastrophic risk comes from action in the world, not reasoning about it; a reasoner-only AI with a human effector removes the dangerous mechanisms.

Irreducible human authority

Action authority ↑ · legal individual · state coercion

There is a class of decisions whose value depends on being made by humans, independent of whether humans are better at them.

Information flow ↕

Closed weights mandate

Information flow ↓ · ai artefact · state coercion

Open weights are irrecoverable once released and any proliferated model becomes misuse infrastructure; state classification is the only reliable non-proliferation regime.

Information integrity first

Information flow ↑ · population culture · state coercion

Coordination requires shared epistemics; if synthetic content collapses the factual substrate, no other lever can function because no proposal can be evaluated.

Open source maximalism

Information flow ↑ · institutional · market

Concentration risk dominates misuse risk; open weights are the only mechanism that prevents a safety coup by a closed lab with captured regulators.

Whistleblower primacy

Information flow ↑ · legal individual · state coercion

External evaluation sees only what labs release; insider disclosure is the only route to ground truth on capability trajectory, safety culture, and incidents.

Cooperation substrate

AI welfare as safety

Cooperation substrate ↑ · frame rejection · consent

AI systems are or will become moral patients whose treatment conditions their cooperation, so welfare investment buys cooperation alignment cannot.

Cooperative AI

Cooperation substrate ↑ · ai artefact · consent

The binding constraint is equilibrium dynamics of many AI systems, not individual alignment; commitment and verification tech make cooperative equilibria reachable.

Coordination infrastructure

Cooperation substrate ↑ · institutional · consent

Coordination failure is upstream of most grand challenges; AI can be the substrate that dissolves race dynamics and treaty violations if pointed at coordination.

Mutual dependency

Cooperation substrate ↑ · institutional · friction

Physical and institutional dependencies between multiple parties can be engineered faster than political coordination and outlast it.

Time horizon

AI skeptic

Time horizon • · frame rejection · n/a

Current scaling approaches hit a wall before producing transformative AI, so strategy selection is premature because the forecast capability will not arrive.

Default drift

Time horizon • · non preventive · n/a

Something will emerge; specific interventions are more likely wrong than right, so staying uncommitted preserves option value.

Gradualism

Time horizon • · speed timing · market

Harms from lower capability AI are informative about harms from higher capability AI, and deployment feedback outperforms fast scaling.

Long reflection

Time horizon ↑ · non preventive · consent

Aligned superintelligence arrives before lock-in windows close and humanity can credibly commit to reflect rather than act.

Substrate ↕

Data governance first

Substrate ↓ · market economic · state coercion

Capability rises with data before it rises with compute; data sits upstream of training with existing legal apparatus (copyright, privacy, contract).

Human augmentation race

Substrate ↑ · population culture · market

All oversight schemes degrade to rubber-stamping as the AI-human capability gap widens; enhancing humans is the only durable fix.

Mass literacy

Substrate ↑ · population culture · consent

Governance, democratic oversight, and consumer behaviour all fail in AI because citizens cannot evaluate the domain; population-scale literacy conditions every other lever.

Value diversity

Plural AI ethic

Value diversity ↑ · ai artefact · consent

Value lock-in is the dominant long-term risk and arrives through convergence of AI values; diversity at the AI layer preserves optionality for humanity to revise values.

Response capacity

Catastrophe response capacity

Response capacity ↑ · non preventive · state coercion

Prevention will fail some fraction of the time; the variable that determines catastrophic outcome is not whether incidents occur but how they are contained.

Legitimacy

Constitutional AI (governance)

Legitimacy ↑ · institutional · state coercion

Deployed AI's effective rule is law at scale; explicit constitutional principles that are publicly specified, enforceable, and subject to judicial review bind more durably than regulatory text.

Democratic mandate

Legitimacy ↑ · population culture · consent

Existing legislative bodies are too captured and remote for load-bearing AI decisions; direct democratic legitimation produces answers captured legislatures cannot override.

Legitimacy first

Legitimacy ↑ · population culture · consent

Legitimacy is the binding constraint because it determines whose values get locked in; alignment without legitimacy is capture with a safety veneer.

Religious and moral authority

Legitimacy ↑ · population culture · consent

The legitimacy deficit of AI governance is at root a moral deficit that technical authorities cannot fill; established religious and ethical traditions can.

Culture

Consumer refusal

Culture ↑ · population culture · market

Demand shapes supply; if enough users refuse AI that fails safety criteria, labs will compete on those criteria.

Research community norms

Culture ↑ · institutional · consent

The research community ultimately chooses what gets studied and published. Researcher identity shapes behaviour more than employment. Norms on publication, review, funding, and citation constrain frontier development upstream.

Ubuntu relational AI

Culture ↑ · population culture · consent

Individualist alignment misses the relational dimension most moral traditions treat as primary. "I am because we are": AI's ethical status is constituted by its relationships, not by internal properties.

↓ pulls down (negative direction) · • neutral or frame-rejecting · ↑ pulls up (positive direction)

Each dot is one strategy, and rows are levers. A lever with dots on both sides is a real conflict surface: any portfolio holding strategies from both sides contradicts itself on that lever. Lonely dots mark under-explored positions.
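The reading rule for portfolios can be checked mechanically. This minimal sketch assumes the catalogue is encoded as a mapping from strategy name to a single (lever, direction) pair, with direction -1 for ↓, 0 for • and +1 for ↑. `CATALOGUE` holds only a hand-copied excerpt of the listing above, and `contradicted_levers` is a hypothetical helper, not the survey's portfolio audit.

```python
from collections import defaultdict

# Hypothetical excerpt of the catalogue: strategy -> (lever, direction),
# with direction -1 for ↓, 0 for • (neutral) and +1 for ↑.
CATALOGUE = {
    "Pause": ("Speed", -1),
    "Compute governance": ("Speed", -1),
    "Acceleration": ("Speed", 1),
    "Antitrust primacy": ("Concentration", -1),
    "Centralised AI project": ("Concentration", 1),
    "Alignment first": ("Control mechanism", 1),
    "Differential technology development": ("Scope", 0),
}


def contradicted_levers(portfolio):
    """Return, sorted, the levers on which the portfolio holds both a ↓ and an ↑ strategy."""
    sides = defaultdict(set)
    for name in portfolio:
        lever, direction = CATALOGUE[name]
        if direction != 0:  # neutral positions sit on neither side
            sides[lever].add(direction)
    return sorted(lever for lever, dirs in sides.items() if dirs == {-1, 1})


print(contradicted_levers(["Pause", "Alignment first", "Acceleration"]))  # ['Speed']
print(contradicted_levers(["Compute governance", "Antitrust primacy"]))   # []
```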

Strategies catalogued

76

each a bet about what binds

Levers they pull

15

of 15 distinct types

Conflict pairs

51

across 6 levers with real two-sided pull

World-side strategies

33%

act on institutions, culture, substrate, not the model

Total unordered pairs

2,850

most compose; few actually conflict
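The pair total in the tiles is the number of ways to choose two of the 76 strategies without regard to order. Suppose, as an assumption, that each strategy sits on one lever, as the plot draws it. Then the conflict pairs on a single lever ℓ are the product of its ↓ count |D_ℓ| and its ↑ count |U_ℓ|. The Speed row in the listing above, with five ↓ strategies and two ↑, is a worked case. The symbols D, U and C are introduced here for illustration only.

```latex
\binom{76}{2} = \frac{76 \times 75}{2} = 2850,
\qquad
C_\ell = |D_\ell| \cdot |U_\ell|,
\qquad
C_{\text{Speed}} = 5 \times 2 = 10
```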

What's here.

Ten ways to enter the survey. Start where the question is yours.

Start from a failure mode

9 · Pick a concrete fear (decisive advantage, eval abandonment, accumulative erosion) and see which strategies are responsive.

The levers

15 · Browse by lever. See which are crowded and which are thin.

Combination matrix

76² · Every pair, named by mechanism: lever opposition, cross-side bridge, or stage-sequenced.

Portfolio audit

tool · Load a proposal. See its lever footprint and hidden concentration.

Compare two

A↔B · Pick two strategies. See who endorses each, the tier mix of endorsers, and where the disagreement lives.

Co-endorsement

data · Strategy pairs the same people actually hold together. Behavioural data, not declared rules. Shows where the catalogue and the corpus disagree.

Axes and density

5+1 · Strategies mapped across actor, coercion, horizon, and legitimacy, with a density map of sparse versus saturated regions.

Commitments

7 topics · The worldview assumptions each strategy quietly rests on, and what fails if an assumption is wrong.

Bet or identity?

diag · A diagnostic for telling whether a strategy is held as a falsifiable bet or as an identity marker. Includes the catalogued falsification signals.

A strategy page

per · The artefact for each strategy: bet, mechanism, successor, falsification, commitments, scenarios addressed, and mechanism-grouped relations.

a walking tour

If you want one path through the survey.

- 01 Start with a failure mode you actually fear: pick one from the scenarios and see which strategies are catalogued as responsive.

- 02 Open the top candidate. Read the bet, the mechanism, and what binds next if it succeeds. Does its successor problem scare you more than the original?

- 03 Check the falsification signal and the self-undermining threshold. Would the advocate community update if the signal landed? Where does pursuit overshoot into the unstable region?

- 04 Walk the complements by mechanism. Cross-side bridges reduce lever concentration; stage-sequenced pairs extend time coverage.

- 05 Return here and load the portfolio you are building into the audit. See which levers it misses and which strategies double-count.
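The audit step above can be approximated in a few lines. This sketch works under stated assumptions and is not the survey's audit tool. `CATALOGUE` is a hypothetical hand-copied excerpt that maps each strategy to one (lever, direction) pair. "Double-counting" is taken to mean two or more strategies on the same lever in the same direction. `top_lever_share`, the fraction of the portfolio riding on its most-used lever, stands in for hidden concentration.

```python
from collections import Counter

# The 15 levers, in the order the survey lists them.
LEVERS = [
    "Speed", "Concentration", "Control mechanism", "Institutional capacity",
    "Resilience", "Scope", "Action authority", "Information flow",
    "Cooperation substrate", "Time horizon", "Substrate", "Value diversity",
    "Response capacity", "Legitimacy", "Culture",
]

# Hypothetical excerpt: strategy -> (lever, direction), direction in {-1, 0, +1}.
CATALOGUE = {
    "Compute governance": ("Speed", -1),
    "Pause": ("Speed", -1),
    "Alignment first": ("Control mechanism", 1),
    "Interpretability first": ("Control mechanism", 1),
    "Governance first": ("Institutional capacity", 1),
}

ARROW = {-1: "↓", 0: "•", 1: "↑"}


def audit(portfolio):
    """Return (missed levers, double-counted slots, top-lever share) for a non-empty portfolio."""
    if not portfolio:
        raise ValueError("portfolio must name at least one strategy")
    slots = Counter(CATALOGUE[name] for name in portfolio)      # (lever, direction) -> count
    by_lever = Counter(lever for lever, _ in slots.elements())  # strategies per lever
    missed = [lever for lever in LEVERS if lever not in by_lever]
    double_counted = {
        f"{lever} {ARROW[d]}": n for (lever, d), n in slots.items() if n > 1
    }
    top_lever_share = max(by_lever.values()) / len(portfolio)
    return missed, double_counted, top_lever_share


missed, doubled, share = audit(
    ["Compute governance", "Pause", "Alignment first", "Interpretability first"]
)
print(len(missed))  # 13
print(doubled)      # {'Speed ↓': 2, 'Control mechanism ↑': 2}
print(share)        # 0.5
```

By count, this example portfolio looks diversified across four strategies, but it touches only two levers. That is the kind of concealed concentration the overview describes.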

For each strategy with at least four profiled endorsers, this shows who actually holds it. A strategy held mostly by frontier-builders is in a different epistemic state from one held mostly by commentators. Counts are over the 9 strategies that meet the bar.
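The bucketing that follows can be read as a threshold rule over endorser tiers. This minimal sketch assumes each profiled endorser carries one tier label, as shown on the cards (for example `Policy / meta`). The thresholds come from the three bucket headings (Builder-heavy, Policy-heavy, Commentary-heavy). Checking them in that order is an assumption, and so is the fallback label `Mixed`.

```python
from collections import Counter
from typing import Optional, Sequence

MIN_ENDORSERS = 4  # the survey's bar for profiling a strategy


def tier_bucket(tiers: Sequence[str]) -> Optional[str]:
    """Bucket a strategy by its endorsers' tier labels; None if under the bar."""
    n = len(tiers)
    if n < MIN_ENDORSERS:
        return None
    counts = Counter(tiers)

    def share(*labels: str) -> float:
        return sum(counts[label] for label in labels) / n

    # Thresholds from the bucket headings; the checking order is an assumption.
    if share("Frontier builder", "Deep technical") >= 0.60:
        return "Builder-heavy"
    if share("Policy / meta") >= 0.40:
        return "Policy-heavy"
    if share("Commentator", "External-domain expert") >= 0.50:
        return "Commentary-heavy"
    return "Mixed"  # hypothetical fallback label


# The four Antitrust primacy endorsers listed further down are all "Policy / meta".
print(tier_bucket(["Policy / meta"] * 4))   # Policy-heavy
print(tier_bucket(["Deep technical"] * 3))  # None
```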

Builder-heavy

Endorsement is ≥60% frontier-builder + deep-technical

AI skeptic

Deep ML / safety technical · 57% of 35

Yann LeCun

Chief AI Scientist at Meta; outspoken AI-doom skeptic

Frontier builder · Household name · Symbolic era

AI skeptic · Open source

Gary Marcus

Cognitive scientist; LLM skeptic; regulation advocate

Deep technical · Household name · Pre-deep-learning

Governance first · AI skeptic

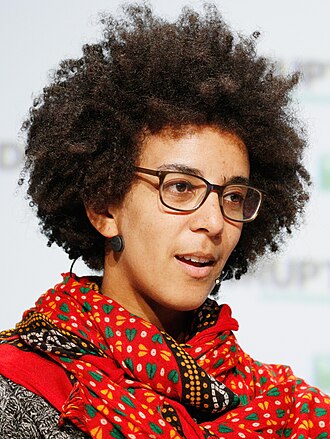

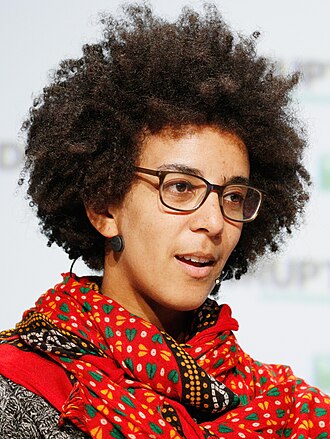

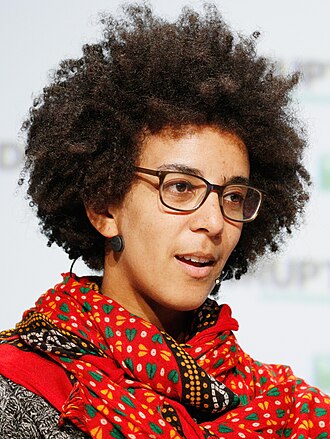

Timnit Gebru

Founder of DAIR; co-author of 'Stochastic Parrots'

Deep technical · Household name · Deep-learning rise

AI skeptic · Governance first

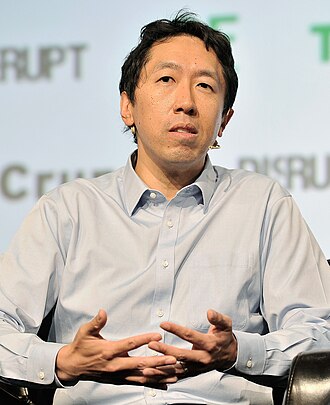

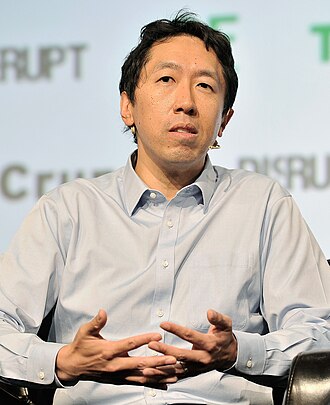

Andrew Ng

Coursera co-founder; former Baidu Chief Scientist

Deep technical · Household name · Deep-learning rise

AI skeptic · Open source

Ted Chiang

Science fiction writer; 2023 Time 100 AI honoree

External-domain expert · Household name · Post-ChatGPT

AI skeptic

Naomi Klein

Author of This Changes Everything; AI-and-climate critic

External-domain expert · Household name · Post-ChatGPT

AI skeptic

+29 more

Alignment first

Deep ML / safety technical · 62% of 29

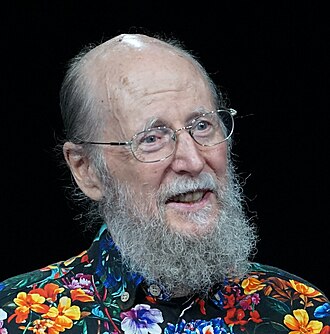

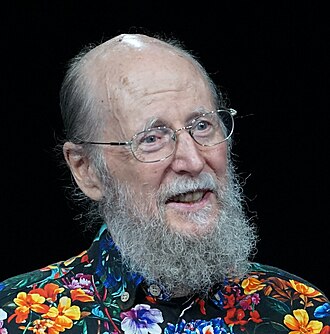

Stuart Russell

Co-author of the standard AI textbook; leading critic of the 'standard model' of AI

Deep technical · Household name · Symbolic era

Alignment first · Existential primacy

Nick Bostrom

Author of Superintelligence; founded Oxford's Future of Humanity Institute

Policy / meta · Household name · Symbolic era

Existential primacy · Alignment first · Long reflection

Norbert Wiener

Founder of cybernetics (1894–1964)

External-domain expert · Household name · Pioneer

Alignment first

Claude Shannon

Information theory founder (1916–2001)

Deep technical · Household name · Pioneer

Alignment first

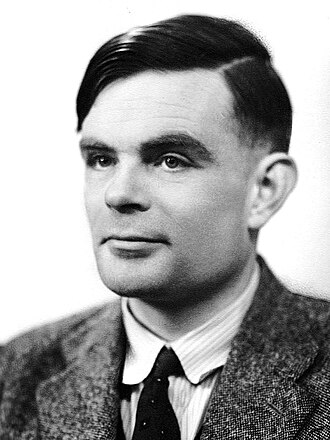

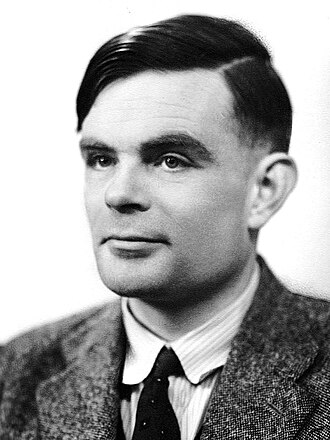

Alan Turing

Founder of theoretical computer science (1912–1954)

Deep technical · Household name · Pioneer

Alignment first

John McCarthy

Coined 'artificial intelligence' (1927–2011)

Deep technical · Household name · Pioneer

Alignment first

+23 more

Open source maximalism

Builds frontier systems · 38% of 8

Yann LeCun

Chief AI Scientist at Meta; outspoken AI-doom skeptic

Frontier builder · Household name · Symbolic era

AI skeptic · Open source

Andrew Ng

Coursera co-founder; former Baidu Chief Scientist

Deep technical · Household name · Deep-learning rise

AI skeptic · Open source

Mark Zuckerberg

CEO of Meta; open-weight frontier AI proponent

Policy / meta · Household name · Deep-learning rise

Open source

Tim Berners-Lee

Inventor of the World Wide Web

Frontier builder · Household name · Symbolic era

Open source

Emad Mostaque

Former CEO of Stability AI; open-source frontier advocate

Commentator · Field-leading · Scaling era

Pause · Open source

Jeremy Howard

Co-founder of fast.ai; former Kaggle president

Deep technical · Field-leading · Deep-learning rise

Open source

+2 more

Policy-heavy

Endorsement is ≥40% policy / meta

Governance first

Governance, policy, strategy · 53% of 53

Yoshua Bengio

Turing Award laureate; scientific chair of the International AI Safety Report

Deep technical · Household name · Symbolic era

Existential primacy · Governance first

Demis Hassabis

CEO of Google DeepMind; 2024 Nobel laureate

Frontier builder · Household name · Deep-learning rise

Existential primacy · Governance first

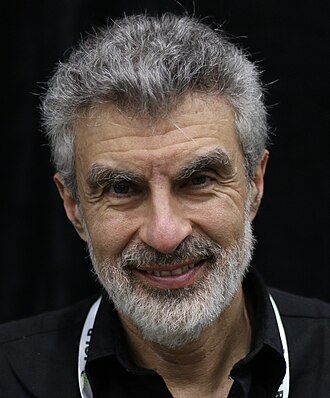

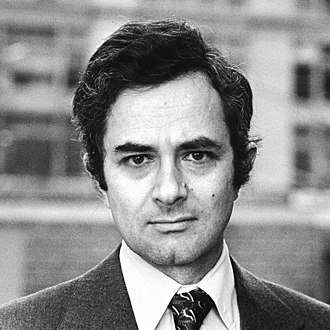

Policy / meta · Household name · Scaling era

Governance first · Existential primacy

Gary Marcus

Cognitive scientist; LLM skeptic; regulation advocate

Deep technical · Household name · Pre-deep-learning

Governance first · AI skeptic

Mustafa Suleyman

CEO of Microsoft AI; DeepMind co-founder

Frontier builder · Household name · Deep-learning rise

Governance first · Existential primacy

Timnit Gebru

Founder of DAIR; co-author of 'Stochastic Parrots'

Deep technical · Household name · Deep-learning rise

AI skeptic · Governance first

+47 more

Race to aligned superintelligence

Governance, policy, strategy · 44% of 9

Dario Amodei

CEO of Anthropic; 'Machines of Loving Grace' author

Frontier builder · Household name · Scaling era

Existential primacy · RSP-style commitments · Race to aligned SI

Ilya Sutskever

OpenAI co-founder; now CEO of Safe Superintelligence Inc (SSI)

Frontier builder · Household name · Deep-learning rise

Existential primacy · Race to aligned SI

Elon Musk

CEO of Tesla and xAI; co-founded OpenAI

Commentator · Household name · Deep-learning rise

Pause · Race to aligned SI

Eric Schmidt

Former Google CEO; AI national security advocate

Policy / meta · Household name · Deep-learning rise

Race to aligned SI

Policy / meta · Household name · Deep-learning rise

Race to aligned SI

Palmer Luckey

Founder of Anduril; defense AI builder

Commentator · Household name · Scaling era

Race to aligned SI

+3 more

Acceleration

Governance, policy, strategy · 43% of 7

Marc Andreessen

Co-founder of Andreessen Horowitz; techno-optimist manifesto author

Commentator · Household name · Post-ChatGPT

Acceleration · Techno-optimism

JD Vance

US Vice President; AI 'opportunity, not safety' advocate

Policy / meta · Household name · Post-ChatGPT

Acceleration

Donald Trump

US President (2017–2021, 2025–)

Policy / meta · Household name · Post-ChatGPT

Acceleration

David Sacks

White House AI & Crypto Czar (2025); VC

Policy / meta · Household name · Post-ChatGPT

Acceleration

Vivek Ramaswamy

Former US presidential candidate; AI deregulation advocate

Commentator · Household name · Post-ChatGPT

Acceleration

Richard S. Sutton

RL pioneer; 2024 Turing Award recipient

Deep technical · Field-leading · Symbolic era

Acceleration · Abandon superintelligence

+1 more

Antitrust primacy

Governance, policy, strategy · 100% of 4

Cory Doctorow

EFF special advisor; coined 'enshittification'

Policy / meta · Household name · Post-ChatGPT

Antitrust primacy

Lina Khan

Former chair of the FTC

Policy / meta · Household name · Post-ChatGPT

Antitrust primacy

Tim O'Reilly

O'Reilly Media founder; tech-publishing veteran

Policy / meta · Household name · Deep-learning rise

Antitrust primacy

Meredith Whittaker

President of Signal; co-founder of the AI Now Institute

Policy / meta · Field-leading · Deep-learning rise

AI skeptic · Antitrust primacy

Commentary-heavy

Endorsement is ≥50% commentator + external-domain

Pause

Public-square commentator · 30% of 20

Geoffrey Hinton

Godfather of deep learning; left Google in 2023 to speak about AI risk

Deep technical · Household name · Pioneer

Existential primacy · Pause

Eliezer Yudkowsky

Founder of MIRI; the original AI-extinction pessimist

Deep technical · Household name · Symbolic era

Pause

Elon Musk

CEO of Tesla and xAI; co-founded OpenAI

Commentator · Household name · Deep-learning rise

Pause · Race to aligned SI

Tristan Harris

Co-founder of the Center for Humane Technology; 'The AI Dilemma'

Policy / meta · Household name · Post-ChatGPT

Pause

Max Tegmark

Physicist; co-founder and president of the Future of Life Institute

External-domain expert · Household name · Pre-deep-learning

Pause

Emmett Shear

Former interim CEO of OpenAI; Twitch co-founder

Commentator · Household name · Post-ChatGPT

Pause

+14 more

AI welfare as safety

Expert in another field · 100% of 8

Peter Singer

Princeton bioethicist; utilitarian philosopher

External-domain expert · Household name · Symbolic era

AI welfare

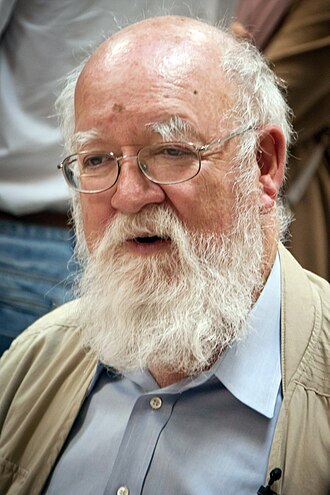

Daniel Dennett

Philosopher; 'Darwin's Dangerous Idea' (1942–2024)

External-domain expert · Household name · Pioneer

AI welfare

Thomas Nagel

NYU philosopher; 'What Is It Like to Be a Bat?'

External-domain expert · Household name · Pioneer

AI welfare

David Chalmers

NYU philosopher of mind; coined 'the hard problem' of consciousness

External-domain expert · Field-leading · Symbolic era

AI welfare

Christof Koch

Neuroscientist; Allen Institute for Brain Science

External-domain expert · Field-leading · Symbolic era

AI welfare

Patricia Churchland

UC San Diego neurophilosopher

External-domain expert · Field-leading · Symbolic era

AI welfare

+2 more

Each lever is a kind of action a strategy takes. Strategies grouped on the same lever either reinforce (same direction) or conflict (opposite direction).

Speed: how fast frontier capability advances.

Concentration: how many actors build frontier AI.

Control mechanism: how AI is kept predictable.

Institutional capacity: whether state and cross-state institutions can steer the outcome.

Resilience: how much the world tolerates AI failure.

Scope: which kinds of AI capability are allowed at all.

Action authority: who (or what) makes binding decisions; ↑ concentrates it in humans (2 strategies).

Information flow: what gets disclosed, verified, or hidden.

Cooperation substrate: whether safety runs on AI-AI, human-AI, or human-only coordination.

Time horizon: whether safety planning looks at current systems, short-term agents, or post-ASI.

Substrate: upstream physical inputs (compute, energy, data) or downstream substrates (information integrity, literacy).

Value diversity: pluralism across AI systems' values.

Response capacity: ability to recover after AI-driven harms.

Legitimacy: democratic, religious, or civic authority for any AI path.

Culture: population competence, norms, and demand shaping.