Information flow ↑ · institutional

Open source maximalism

Concentration risk dominates misuse risk; open weights are the only mechanism that prevents a safety coup by a closed lab with captured regulators.

Mechanism

Require open weights and open source at the frontier, letting any sufficiently resourced actor replicate or audit systems.

If it succeeds: what binds next

Everyone has frontier weights. The problem becomes whose defence stands up to whose offence: the offence-defence balance becomes the binding constraint.

A strategy that produces a worse next problem than the one it solved has not done durable work.

Falsification signal

An openly released model produces a verified harm in a domain where defender access does not bound the risk.

A strategy held without a falsification signal is not strategy; it is affiliation. Continued support after this signal lands is identity, not bet. See the identity diagnostic.

Self-undermining threshold

overshoot risk: When capabilities exceed defender throughput.

The offence-defence symmetry holds only where defender access bounds the risk. Outside that domain open release is a one-way ratchet.

Every strategy has a stable region where it reinforces itself and an unstable region where pursuit defeats it. The threshold between them is usually narrower than advocates acknowledge.

People on the record

37 people on the record. Profiled figures appear first, with their tier in small caps. Each face links to the person and their full quote record. Tag: open-source-maximalism.

expertise mix · 8 profiled

recognition mix

A strategy whose endorsement skews to commentators or external-domain experts is in a different epistemic state from one endorsed mostly by frontier-builders. The mix is read carefully across both axes; see the board for criteria. Counts are over the 8 profiled people on this strategy (29 unprofiled excluded).

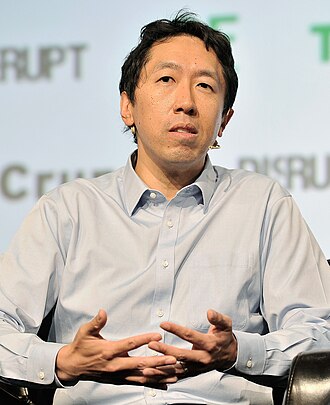

Andrew Ng

Deep ML / safety technical · Mass-public recognition

Emad Mostaque

Public-square commentator · Known across the AI/safety field

Jeremy Howard

Deep ML / safety technical · Known across the AI/safety field

Joëlle Pineau

Deep ML / safety technical · Known across the AI/safety field

Mark Zuckerberg

Governance, policy, strategy · Mass-public recognition

Stella Biderman

Builds frontier systems · Known across the AI/safety field

Tim Berners-Lee

Builds frontier systems · Mass-public recognition

Yann LeCun

Builds frontier systems · Mass-public recognition

Ada Rose Cannon

W3C web standards advocate; AR/VR engineer

Ali Farhadi

Allen Institute for AI CEO

Ali Ghodsi

Databricks co-founder and CEO

Anjney Midha

Andreessen Horowitz general partner; AI investor

Arthur Mensch

CEO of Mistral AI; French frontier-model founder

Ce Zhang

ETH Zürich → University of Chicago; ML systems

Clément Delangue

CEO of Hugging Face; open-source AI advocate

Colin Raffel

University of Toronto; Hugging Face; T5 author

Illia Polosukhin

NEAR Protocol co-founder; Transformer co-author

Lewis Tunstall

Hugging Face; LLM post-training

Liang Wenfeng

Founder of DeepSeek; Chinese frontier AI

Luis Ceze

OctoML CEO; UW computer architecture

Martin Casado

Andreessen Horowitz general partner; infrastructure investor

Matei Zaharia

Databricks CTO and co-founder; Apache Spark creator

Mike Lewis

Meta FAIR; BART, Llama 2 lead

Nathan Lambert

Allen Institute for AI; 'Interconnects' newsletter

Nick Clegg

Former Meta President of Global Affairs (2018–2025)

Nigel Shadbolt

Oxford / Open Data Institute co-founder

Omar Khattab

Stanford / Databricks; DSPy creator

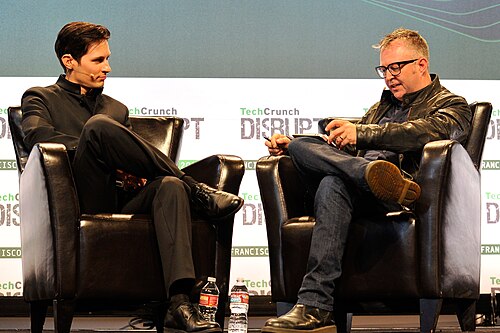

Pavel Durov

Telegram founder; arrested in France in 2024

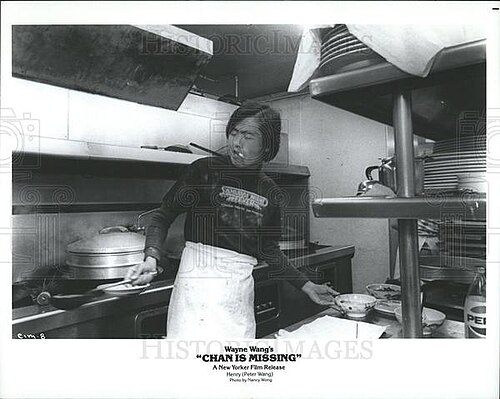

Peter Wang

Co-founder of Anaconda; scientific Python and AI

Robin Rombach

Black Forest Labs co-founder; Stable Diffusion lead

Sasha Rush

Cornell Tech professor; Hugging Face research scientist

Sebastian Raschka

Lightning AI; ML educator and author

Soumith Chintala

PyTorch creator; Meta AI

Tianqi Chen

CMU professor; XGBoost and TVM creator

Tim Dettmers

Efficient-training and quantization researcher

Vukosi Marivate

University of Pretoria; African NLP / Masakhane

1 more on the record. See the full tag page: open-source-maximalism

Coordinates

Conflicts, grouped by mechanism

3 conflicts

Frame opposition

incompatible premises: The strategies accept different premises about what AI is or what the binding problem is. They conflict not on lever choice but on the frame that makes lever choice sensible.

Lever opposition

same lever, opposite pull: The pair's primary lever is the same; they pull it in opposite directions. A portfolio containing both is internally incoherent on that lever.

Complements, grouped by mechanism

4 complements

Same-side diversification

same side, different lever: Both act on the same side (AI or world) but pull distinct levers. They cover several failure modes on that side while leaving the other side uncovered.

Adjacent bet

different levers, loosely coupled: Different levers, different directions of action. They reinforce only via the general principle that covering more bets dominates covering fewer.

Same-lever reinforce

same lever, same pull, different mechanism: Both strategies pull the same lever in the same direction by different means. They stack: doing both amplifies the pull, at the cost of double-counting in portfolio audits.

Same-lever twins

1 twin

Both use the same lever in the same direction. Usually redundant inside a portfolio: each dollar or effort unit only buys one lever pull, even if two strategies are named.

Axis position

Source note: Open source maximalism strategy.md