strategy tag

Open source.

Release weights widely; transparency beats closed safety

also known as: open weights

stated endorsers: 37 · no opposers yet

profiled endorsers: 8 · 248 on the board total

endorser mean p(doom): 25% · n=2 · median 25%

quotes by endorsers: 37 · just for this tag

principal voices

Highest-recognition profiled endorsers, with ties broken by quote count. Inclusion is not endorsement of the position; it is recognition of who the discourse turns to when the bet is debated.

Yann LeCun

Yann LeCunHousehold name

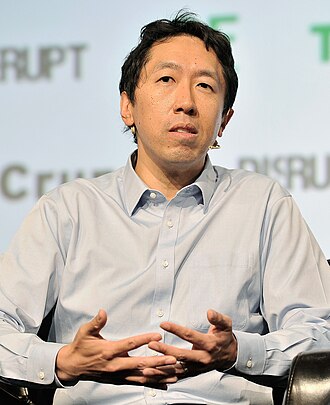

Andrew Ng

Andrew NgHousehold name

Mark Zuckerberg

Mark ZuckerbergHousehold name

Tim Berners-Lee

Tim Berners-LeeHousehold name

Emad Mostaque

Emad MostaqueField-leading

where the endorsers sit on the board

8 of 248 profiled · 3% of the board

| expertise ↓ · recognition → | Household name | Field-leading | Established | Emerging |
|---|---|---|---|---|
| Frontier builder | · | · | | |
| Deep technical | · | · | | |
| Applied technical | · | · | · | · |
| Policy / meta | · | · | · | |
| External-domain expert | · | · | · | · |
| Commentator | · | · | · | |

Each face is one profiled person. Cell shade intensifies with endorser density. Faces with × are profiled opposers, same tier, opposite position. Empty cells mark tier combinations the field has not produced for this bet.

also held by these endorsers

What other strategies the same people endorse. Behavioural signal of compatibility, not a declared rule. A high share means the two positions are routinely held together.

Compare this list to the declared relations matrix. Where they differ, the data reveals a pairing the framework doesn't name yet. The global co-endorsement view ranks all pairs.
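One plausible way to read the "share" figure is as the fraction of this tag's endorsers who also endorse another tag. The sketch below assumes that definition; the function name `co_endorsement_shares`, the people, and the tags other than this one are hypothetical placeholders, not data from the board or its actual method.

```python
from collections import Counter


def co_endorsement_shares(endorsements, tag):
    """Return {other_tag: share of `tag` endorsers who also endorse other_tag}.

    `endorsements` maps a person to the set of strategy tags they endorse.
    This is an assumed definition of "share", not the board's documented one.
    """
    endorsers = [person for person, tags in endorsements.items() if tag in tags]
    if not endorsers:
        return {}
    counts = Counter()
    for person in endorsers:
        # Count every other tag this endorser also holds.
        counts.update(endorsements[person] - {tag})
    return {other: n / len(endorsers) for other, n in counts.items()}


# Hypothetical sample: three endorsers of "open-source", one non-endorser.
sample = {
    "person_a": {"open-source", "tag-x"},
    "person_b": {"open-source", "tag-x", "tag-y"},
    "person_c": {"open-source"},
    "person_d": {"tag-y"},
}

shares = co_endorsement_shares(sample, "open-source")
for other, share in sorted(shares.items(), key=lambda kv: -kv[1]):
    print(f"{other}: {share:.0%}")
```

Under this reading the share is asymmetric: it is normalized by the endorsers of the tag on this page, so the same pair can show a different share when viewed from the other tag's page.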

Tier mix counts only endorsers (endorses, mixed, conditional, evolved-toward).

expertise mix of endorsers · 8 profiled of 37

recognition mix of endorsers

vintage mix · n=8 of 8 profiled with era assigned

Vintage is the era when this person's AI worldview formed, pioneer through post-ChatGPT. A bet held mostly by post-ChatGPT entrants is in a different epistemic state from one held by pre-deep-learning veterans.

People on the record.

37

Ada Rose Cannon

W3C web standards advocate; AR/VR engineer

Argues open standards are the structural antidote to AI-driven platform consolidation.

If the open web doesn't have an answer to AI, AI will swallow the open web.

Ali Farhadi

Allen Institute for AI CEO

Argues fully open frontier models, including data and training recipes, are necessary for the field's scientific integrity and for democratizing access to advanced AI.

We released OLMo with everything: weights, training data, training code, evaluation suites. Open-source AI cannot be a marketing word, it has to be all the way down.

Ali Ghodsi

Databricks co-founder and CEO

Argues every enterprise will need to build custom AI on its own data; closed APIs are a temporary architecture and the durable form is open-source models trained against private data.

The future of enterprise AI is custom models trained on the company's own data. That requires open foundation models you can adapt, not closed APIs you have to depend on.

Andrew Ng

Coursera co-founder; former Baidu Chief Scientist

Supports open-source models and has warned against regulatory capture that would lock them out.

Bad actors can use open source models; good actors can use them too. The real risk is if regulation effectively bans open source.

Anjney Midha

Andreessen Horowitz general partner; AI investor

Argues open-weight foundation models are the principal path to keeping frontier AI from being captured by a small number of incumbents; supports portfolio companies building on open weights.

The open-source AI ecosystem is the only credible counterweight to closed-frontier capture. Backing it is an act of structural diversification of the AI economy.

Arthur Mensch

CEO of Mistral AI; French frontier-model founder

Argues open-weight frontier models are both a safety benefit and a sovereignty imperative for Europe.

Open models are the only way to ensure that AI development remains a shared enterprise, not an oligopoly.

Ce Zhang

ETH Zürich → University of Chicago; ML systems

Argues distributed and open ML infrastructure is necessary to keep frontier capabilities accessible to academic research and small organizations; co-founded Together AI on this thesis.

Open and decentralized infrastructure for foundation models is the only way to make sure academic research and small companies can keep contributing to the frontier.

Clément Delangue

CEO of Hugging Face; open-source AI advocate

Argues open-source AI gives civil society, academia, and governments a counterbalance to frontier-lab concentration.

“Open science and open source create a safer path for development of the technology by giving civil society, nonprofits, academia and policymakers the capabilities they need to counterbalance the power of big private companies.”

Context: Testimony to the US House Science, Space, and Technology Committee.

Colin Raffel

University of Toronto; Hugging Face; T5 author

Argues open-source ML research (datasets, weights, training code) is the principle that lets science around foundation models actually accumulate; closed releases break that mechanism.

“We propose a unified framework that converts every text-based language problem into a text-to-text format. The simple objective lets us study transfer learning systematically.”

Emad Mostaque

Former CEO of Stability AI; open-source frontier advocate

Built Stability AI on open-weight principles.

Open models are a public good.

Illia Polosukhin

NEAR Protocol co-founder; Transformer co-author

Argues open and decentralized infrastructure is the natural counterweight to corporate AI concentration; founded NEAR partly on this thesis.

Centralized AI poses risks no single regulator can solve. We need open infrastructure that lets users own their data and models.

Jeremy Howard

Co-founder of fast.ai; former Kaggle president

Argues open-weight AI is necessary for safety, research, and access.

We need open research and open models. The closed-lab approach is a danger to civil society.

Joëlle Pineau

Cohere Chief AI Officer; former Meta VP of AI Research

Long-time public face of Meta's open-source AI strategy. Argues open research accelerates the field.

“Science advances more quickly when we share our knowledge. When we put all of our knowledge in common, we push that boundary faster.”

Lewis Tunstall

Hugging Face; LLM post-training

Argues open post-training tooling is a precondition for the open-source AI ecosystem to keep pace with frontier labs; built TRL and DPO/PPO scaling primitives toward this end.

If RLHF is opaque and proprietary, the open-source ecosystem is locked out of the most important phase of model development. Building open post-training tooling is the answer.

Liang Wenfeng

Founder of DeepSeek; Chinese frontier AI

Released DeepSeek R1 and V3 as open-weight models with cost-efficient training. The releases reset cost expectations for Chinese frontier AI.

Open-weight models are the path to China's leadership in AI.

Luis Ceze

OctoML CEO; UW computer architecture

Argues open-source compiler-and-runtime ecosystems (TVM, MLIR) are the technical foundation for diversified AI hardware.

Open compilers like TVM are the only path to a diverse AI hardware ecosystem.

Mark Zuckerberg

CEO of Meta; open-weight frontier AI proponent

Argues open-weight models are essential and that the long-term risks of closed concentration exceed the near-term risks of open weights.

I think most developers want to use open-source AI. I think most of the AI ecosystem is going to be built on open-source models.

Martin Casado

Andreessen Horowitz general partner; infrastructure investor

Argues open-source AI is essential for both market structure and safety; framed regulatory capture by frontier labs as the principal AI risk to oppose.

Regulating AI right now means regulating math. Open source is the antidote to the regulatory capture being attempted by the largest labs.

Matei Zaharia

Databricks CTO and co-founder; Apache Spark creator

Argues open and customizable foundation models, deployed on enterprise data, are how AI actually creates value at scale; closed APIs alone are insufficient.

The biggest value of generative AI for most businesses comes from training and customizing models on their own data, in their own infrastructure. That requires open foundation models.

Mike Lewis

Meta FAIR; BART, Llama 2 lead

Co-architect of Meta's open-weight Llama family; argues open foundation models accelerate research and broaden the safety discussion beyond the frontier labs.

“BART pretrains by corrupting text and learning a model to reconstruct the original text. The denoising objective generalises across many language tasks.”

Nathan Lambert

Allen Institute for AI; 'Interconnects' newsletter

Argues open post-training is a prerequisite to credible AI safety claims; the closed nature of frontier RLHF makes external evaluation impossible.

We can't talk seriously about AI safety while the post-training of frontier models is opaque to outsiders. Open-source post-training is the precondition for everything else.

Nick Clegg

Former Meta President of Global Affairs (2018–2025)

Argued during his Meta tenure that open-weight models are the right answer to concerns about AI concentration; positioned Llama's release as a structural counterweight to closed labs.

Open-source AI is the path to keeping innovation accessible and trustworthy. The alternative, a small number of opaque models controlling the future of the technology, is the worse outcome for both users and societies.

Nigel Shadbolt

Oxford / Open Data Institute co-founder

Argues open data and open infrastructure are the prerequisites for AI that benefits society broadly rather than entrenching incumbent platforms.

Open data is the raw material of an AI future that is more equitable. Without it, AI development concentrates in whoever already controls the largest private datasets.

Omar Khattab

Stanford / Databricks; DSPy creator

Argues programmatic LLM pipelines, not prompt engineering, are how production AI systems should be built; this requires open infrastructure to be fully practical.

DSPy lets you treat LLM calls as differentiable building blocks, optimized end-to-end against a metric. The shift is from prompt-tuning by hand to compiling LLM programs.

Pavel Durov

Telegram founder; arrested in France in 2024

Argues open and uncensored communication infrastructure is foundational to civil society and should not be sacrificed to AI-content-moderation imperatives.

Outdated laws should not be used to force platforms to police speech automatically. The principle that providers of communication tools are not responsible for individual user actions has to survive the AI era.

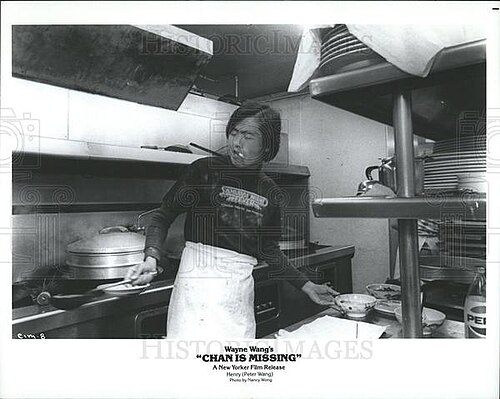

Peter Wang

Co-founder of Anaconda; scientific Python and AI

Argues open-source infrastructure is a public good; AI companies building on top of it should contribute back.

The AI revolution runs on open-source that nobody is funding sustainably.

Robin Rombach

Black Forest Labs co-founder; Stable Diffusion lead

Argues open-weight image-generation models, not just open APIs, are necessary for the field to keep developing safety properties externally; co-built Black Forest Labs on this thesis.

Latent diffusion models compress the image generation problem into a learned latent space, making high-resolution generation accessible to consumer hardware.

Sasha Rush

Cornell Tech professor; Hugging Face research scientist

Champions open replication of frontier research; argues open replication and tutorials are how the field grows.

Open replication is the lifeblood of an honest research field.

Sebastian Raschka

Lightning AI; ML educator and author

Argues open educational materials and open-source ML tooling produce the most resilient version of the field; an industry that depends entirely on closed APIs has lost its capacity to teach itself.

Open-source tooling and open educational materials are how the ML field reproduces itself. We can't outsource that to a few frontier labs without losing something important.

Soumith Chintala

PyTorch creator; Meta AI

Built the most-used open-source deep-learning framework; advocates open infrastructure as the foundation of AI research.

Open infrastructure is what allowed AI research to scale. It is what allows new entrants to compete.

Stella Biderman

Executive Director of EleutherAI

Argues open research and open models are essential; the field cannot be governed if only a handful of closed labs can audit models.

Science doesn't work in secret. Open research is how safety work gets done.

Tianqi Chen

CMU professor; XGBoost and TVM creator

Built foundational open-source AI tools used industry-wide. Argues the AI ecosystem depends on open infrastructure.

Open infrastructure is what allowed many actors to build AI. Closing it now would be a fundamental mistake.

Tim Berners-Lee

Inventor of the World Wide Web

Argues decentralised data architecture (Solid pods) is the structural response to AI and platform exploitation.

“The web has evolved into an engine of inequity and division; swayed by powerful forces who use it for their own agendas.”

Tim Dettmers

Efficient-training and quantization researcher

Develops efficiency techniques that broaden access to frontier-scale training.

If only frontier labs can run frontier models, then only frontier labs will shape what they do.

Vukosi Marivate

University of Pretoria; African NLP / Masakhane

Argues open and community-led NLP is the only credible path for African languages; built Masakhane as a distributed research network independent of frontier labs.

Frontier-lab tokenizers and pretraining mixtures barely cover African languages. The community has had to build its own datasets, evaluations, and benchmarks, often from scratch.

Wes McKinney

Pandas creator; Posit/RStudio data infrastructure

Argues open-source data infrastructure is the foundation of AI; without open data tooling, AI access is gated by industry.

Open data infrastructure is the precondition for democratised AI work.

Yann LeCun

Chief AI Scientist at Meta; outspoken AI-doom skeptic

Has pushed Meta toward releasing Llama models with open weights and argues open weights are necessary for diverse, safe AI.

The smartest among us do not want to dominate the others. The idea that because a system is intelligent, it wants to take control is completely false.