strategy tag

AI skeptic.

AGI risk narratives overstated; real harms are mundane and current

stated endorsers

81

2 oppose

profiled endorsers

35

248 on the board total

endorser mean p(doom)

0%

n=1 · median 0%

quotes by endorsers

88

just for this tag

principal voices

Highest-recognition profiled endorsers, with ties broken by quote count. Inclusion is not endorsement of the position; it is recognition of who the discourse turns to when the bet is debated.

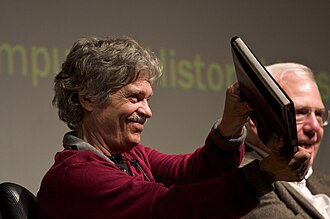

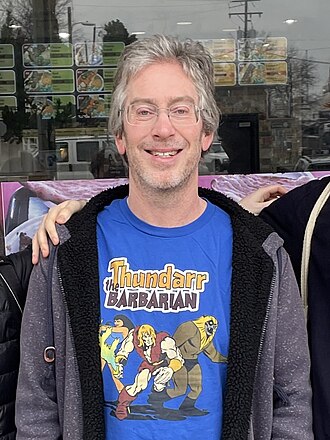

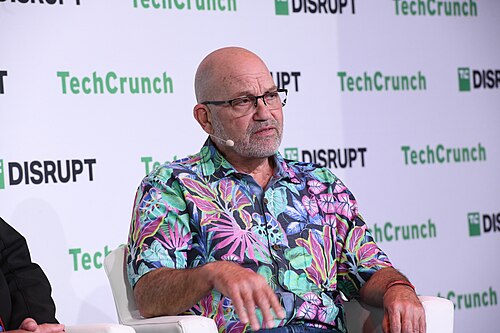

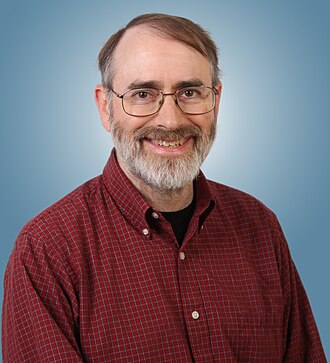

Yann LeCun · Household name

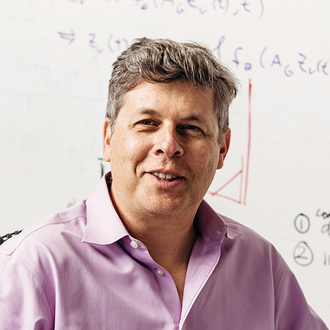

Gary Marcus · Household name

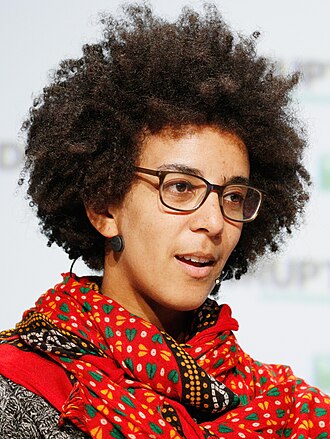

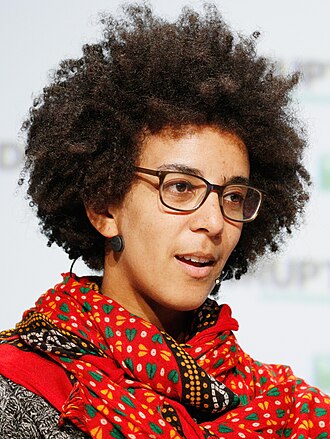

Timnit Gebru · Household name

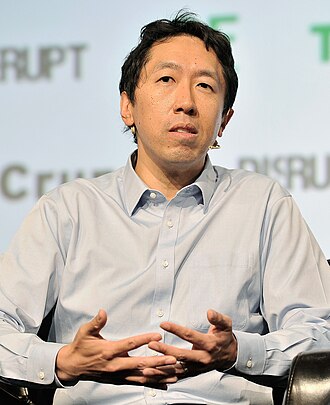

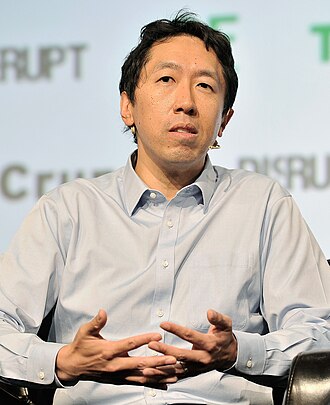

Andrew Ng · Household name

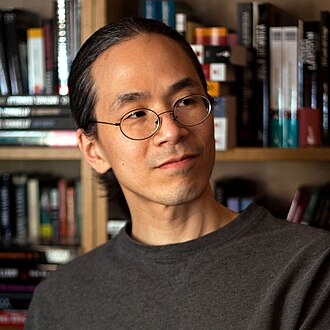

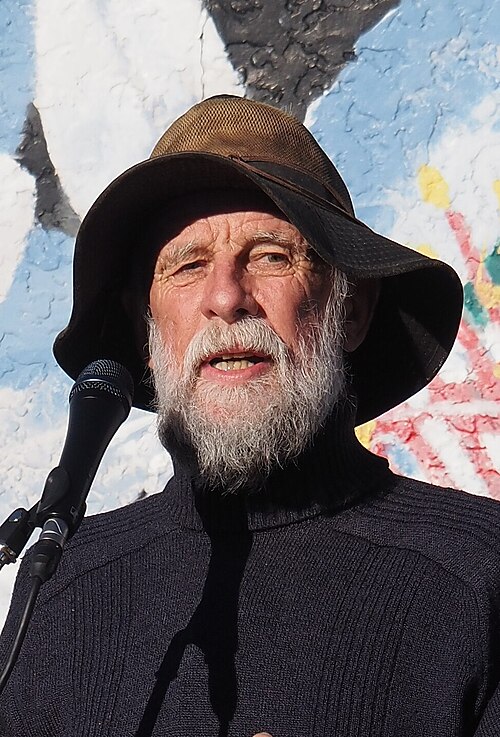

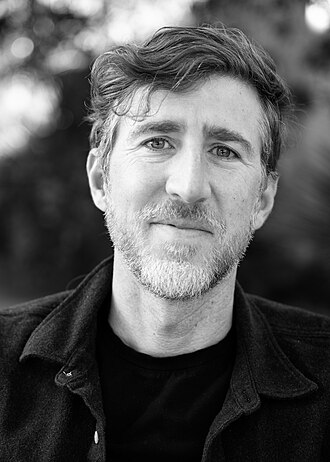

Ted Chiang · Household name

where the endorsers sit on the board

35 of 248 profiled · 14% of the board

| expertise ↓ · recognition → | Household name | Field-leading | Established | Emerging |
|---|---|---|---|---|
| Frontier builder | · | · | · | |
| Deep technical | · | · | | |
| Applied technical | · | · | · | |
| Policy / meta | · | · | · | |
| External-domain expert | · | | | |
| Commentator | · | · | · | |

Each face is one profiled person. Cell shade intensifies with endorser density. Faces with × are profiled opposers, same tier, opposite position. Empty cells mark tier combinations the field has not produced for this bet.

also held by these endorsers

What other strategies the same people endorse. Behavioural signal of compatibility, not a declared rule. A high share means the two positions are routinely held together.

- Open source · 2 · 2%

Release weights widely; transparency beats closed safety

- Governance first · 2 · 2%

Lead with regulation, treaties, liability regimes

Compare this list to the declared relations matrix. Where they differ, the data reveals a pairing the framework doesn't name yet. The global co-endorsement view ranks all pairs.

Tier mix counts only endorsers (endorses, mixed, conditional, evolved-toward). 2 people oppose this position; they are not in the bars below but appear in the list further down.

expertise mix of endorsers · 35 profiled of 81

recognition mix of endorsers

vintage mix · n=35 of 35 profiled with era assigned

Vintage is the era when a person's AI worldview formed, from pioneer through post-ChatGPT. A bet held mostly by post-ChatGPT entrants is in a different epistemic state from one held by pre-deep-learning veterans.

People on the record.

83

Abhijit Banerjee

MIT economist; 2019 Nobel laureate

Argues AI does not solve underlying development problems and can replicate them. Skeptical of AI-shortcut framings.

AI cannot substitute for institutions. The development problems that institutions address remain.

Ada Lovelace

First programmer; analytical engine theorist (1815–1852)

Anticipated the 'Lovelace objection' that Turing later named: that machines can only do what we explicitly program them to do, a position Turing went on to argue against.

“The Analytical Engine has no pretensions whatever to originate anything. It can do whatever we know how to order it to perform.”

Aidan Gomez

CEO of Cohere; 'Attention Is All You Need' co-author

Publicly critical of AI extinction-risk discourse; focuses on enterprise deployment and measured capability claims.

The extinction narrative has done real damage to the field by distracting from present harms and deployment reality.

Alan Kay

Object-oriented programming and personal computing pioneer

Argues today's AI is symptomatic of how the original computing-as-augmentation vision was lost; LLMs are statistical mimicry, not understanding.

The best way to predict the future is to invent it. We have not yet invented an AI worth predicting.

Alex Hanna

Director of Research at DAIR; Mystery AI Hype Theater 3000

Argues the AI hype cycle obscures labour and rights violations; rejects 'AGI' framings.

Hype is not a neutral description. It is a mode of governance.

Andrew Ng

Coursera co-founder; former Baidu Chief Scientist

Publicly rejects extinction-risk framings and warns that safety-first regulation risks cementing Big Tech oligopolies.

When I think about existential risks to humans of AI, I don't know how AI could cause us to go extinct. I don't see it.

Andriy Burkov

ML engineer; 'The Hundred-Page Machine Learning Book' author

Argues current LLM capabilities are over-marketed; deployment reality is messier than benchmarks suggest.

Don't confuse a benchmark score with a deployed product. The gap is bigger than you think.

Andy Clark

Sussex philosopher; extended mind theorist

Frames AI as cognitive extension rather than independent cognition; pushes back on both 'AI is conscious' and 'AI is just statistics' framings.

The mind extends into the world, into tools, into others, and now into AI. The boundary of cognition is not the skull.

Context: Core claim of his 1998 paper with David Chalmers, applied to AI in subsequent work.

Arvind Narayanan

Princeton professor; AI Snake Oil co-author

Argues much AI marketing is snake oil; calls for rigorous evaluation of specific deployed systems, not capability hype.

Most AI systems are far less capable than they are marketed to be. The conversation should be about specific deployed systems, not general 'AI'.

Ben Recht

UC Berkeley professor; ML reproducibility critic

Argues much ML research has reproducibility issues; capability claims should be checked rigorously before policy is built on them.

If we cannot reproduce the result, we cannot build policy on it.

Beth Singler

Cambridge anthropologist; AI religion researcher

Documents how AI is increasingly framed in religious or spiritual terms; argues these framings shape policy in ways the policy community is not aware of.

Tech-savvy people are saying things about AI that, in any other context, would be classed as religious utterances.

Bryan Caplan

GMU economist; AI bets partner

Originally a strong skeptic of LLMs passing his economics exams; lost the bet when GPT-4 scored A on a 2023 exam, and has publicly updated toward taking LLM progress more seriously.

I lost my bet. GPT-4 got an A on my labor economics midterm. I am publicly updating.

Cal Newport

Georgetown CS; 'Deep Work' author

Argues current LLMs are useful but limited tools whose productivity gains have been oversold; warns the same workplace dynamics that produced burnout from email will recur with AI.

The reasonable response to AI in knowledge work is not to chase the latest hype cycle but to ask what kind of work makes sense in a world where these tools exist, and structure your day around that.

Cassie Kozyrkov

CEO of Data Scientific; former Google Chief Decision Scientist

Argues for skepticism of enterprise AI hype but supports responsible AI adoption with clear decision framing.

Most AI projects fail at the decision, not the model.

Charlie Warzel

The Atlantic staff writer; tech culture

Publicly skeptical of utopian and apocalyptic AI framings; focuses on present-day media ecosystem effects.

The AI boom feels less like a technological revolution and more like a cultural and political one, where the technology is the vehicle, not the driver.

Daniel Faggella

Emerj founder; 'Worthy Successor' AGI philosopher

Rejects pause and alignment-first framings; argues AGI is inevitable and the question is about incentives of builders.

“Moralizing AGI governance and innovation, calling some 'bad' and others 'good', is disingenuous. All players are selfish.”

Donald Knuth

Computer science pioneer; The Art of Computer Programming

Foundational CS figure; prefers measured engagement with LLMs over both hype and panic. His 2023 ChatGPT-questions experiment was widely circulated.

The questions I sent to ChatGPT brought back results that ranged from outstanding to almost-correct to deeply wrong, all delivered with the same confidence.

Doug Lenat

Cycorp founder; symbolic AI pioneer (1950–2023)

Argued LLMs alone do not have the common-sense reasoning required for AGI; pure-LLM advocacy was overconfident.

LLMs do not understand. They do something else, which is impressive, but it is not understanding.

Douglas Engelbart

Pioneer of human-computer interaction (1925–2013)

Foundational reference for 'augmentation, not automation' framings of AI. Argued technology should make humans collectively smarter rather than replace them.

“By 'augmenting human intellect' we mean increasing the capability of a man to approach a complex problem situation, to gain comprehension to suit his particular needs, and to derive solutions to problems.”

Ed Zitron

EZPR founder; 'Where's Your Ed At' newsletter

Argues frontier-lab valuations are detached from the actual revenue and capabilities of the products; treats most AGI/transformative-AI rhetoric as a financial-marketing strategy.

OpenAI is a money pit propped up by VC delusion. The product doesn't pay for the compute, the compute doesn't produce a product worth its cost, and the entire thing is held together by hype.

Emily M. Bender

Linguist; co-author of 'Stochastic Parrots'

Argues that LLMs do not understand language, that existential-risk framings are harmful marketing, and that real harms are current and tractable.

Large language models present dangers such as environmental and financial costs, inscrutability leading to unknown dangerous biases, and potential for deception. They cannot understand the concepts underlying what they learn.

Eric Weinstein

Mathematician; ex-Thiel Capital MD

Argues AI hype has been used by incumbents to entrench existing power structures; warns that the technical achievements are real but the surrounding institutional response is dishonest.

What we have is a real technological achievement combined with a layer of institutional capture that should not be confused with the technology itself. The two have to be analyzed separately.

Erik Brynjolfsson

Stanford HAI; 'Turing Trap' essay

Frames the 'Turing Trap' as the economically urgent risk: not extinction, but labour displacement and inequality.

We have fallen into the Turing Trap. Building AI to imitate humans concentrates power and displaces workers; building AI to augment humans does the opposite.

Evgeny Morozov

Belarusian scholar; 'solutionism' critic

Argues the mainstream AI narrative is a form of solutionism that benefits incumbents and obscures the political choices driving AI.

There is no such thing as 'AI'. There is only a set of political-economic choices about how data, labour, and capital are organised.

François Chollet

Creator of Keras; ARC benchmark author

Frames LLM-based AGI claims as overblown; argues the field needs tests like ARC-AGI that reward abstraction, not pattern matching.

LLMs are not the path to AGI. They are impressive pattern-matchers, but they do not generalise to novel problems.

Freddie deBoer

Cultural critic; AI skeptic

Argues AI capabilities are dramatically over-marketed and that the deployment realities are mundane.

Every AI demo is the best version of the product. Every AI deployment is the worst.

Gary Marcus

Cognitive scientist; LLM skeptic; regulation advocate

While advocating strong regulation, Marcus is publicly skeptical of LLM-only paths to AGI and of high p(doom) framings.

Current large language models are not intelligent; they are stochastic compression of text at best.

Holly Jean Buck

Buffalo geographer; climate AI critic

Argues AI claims to solve climate are often technosolutionist; the policy work is harder than the AI hype suggests.

AI cannot solve climate. AI plus politics might.

Hubert Dreyfus

Berkeley phenomenologist; AI critic (1929–2017)

Argued AI must be embodied and embedded in skilful coping, not symbol manipulation. His critique anticipated key features of the embodied-cognition movement and recent skepticism of pure-LLM AGI.

We do not start out with explicit rules and then learn how to apply them. We learn by example, by skill, by being in the world.

Janelle Shane

AI Weirdness; optics researcher and AI humorist

Argues AI failures, both funny and concerning, are pedagogically important; pushes back on hype while taking misuse risks seriously.

AIs are weird. They generalize from data in ways that humans don't, and the ways they fail tell us as much about them as the ways they succeed.

Jaron Lanier

Computer scientist; VR pioneer; AI skeptic

Argues the term 'AI' obscures that what we have are tools built from humans' labour and data; reframes safety as data dignity.

There is no AI. There is only a new form of social collaboration.

Jeff Hawkins

Co-founder of Numenta; Thousand Brains theory author

Argues from a neuroscience-first viewpoint that current LLMs are not intelligent and that doom scenarios rest on anthropomorphism.

Intelligent machines will not have survival drives unless we give them. The alignment-extinction framing projects evolution onto systems that didn't evolve.

John Searle

UC Berkeley philosopher; Chinese Room Argument

Argues syntax does not produce semantics. Foundational philosophical opposition to strong-AI claims, still cited against LLM-AGI framings.

Imagine a person who knows no Chinese sitting in a room with a rule book. Slips of paper with Chinese characters come in, the person uses the rule book to send appropriate slips back. The room passes the Turing test, but the person inside understands no Chinese.

Jonathan Haidt

NYU Stern professor; The Anxious Generation

Argues children should not have AI companions and that AI deepens the youth mental-health crisis identified in his book The Anxious Generation.

“No children should be having a relationship with AI. If we give our kids AI companions that they can order around and will always flatter them, we are creating people who no one will want to employ or marry.”

Jonathan Mugan

DeepGrammar founder; AI for children's media

Frames AI as lacking grounded understanding; argues practical deployment depends on scoping to domains where this limitation is managed.

AI systems do not understand the world the way we do. Deployments that assume they do will fail in specific, predictable ways.

Joseph Weizenbaum

ELIZA inventor; AI ethics pioneer (1923–2008)

Built one of the earliest chatbots and immediately warned against the 'powerful delusional thinking' AI could induce. Anticipated decades of subsequent debate.

“There are certain tasks which computers ought not be made to do, independent of whether computers can be made to do them.”

Judea Pearl

UCLA professor; Bayesian networks and causality pioneer

Argues current deep-learning AI is stuck at the lowest rung of the 'Ladder of Causation' (pure association) and cannot reach reasoning without explicit causal models.

Deep learning at present remains stuck at the bottom rung of the ladder of causation. It does observation, not intervention, and certainly not counterfactuals.

Kate Crawford

Author of Atlas of AI; USC research professor

Argues AI is better understood as an extractive industry than as an autonomous agent; the interesting governance questions are about labour, data, and land.

“AI is made from vast amounts of natural resources, fuel, and human labor. It is a technology of extraction.”

Context: Opening framing of Atlas of AI.

Kyle Mahowald

UT Austin; LLMs as not-quite-thought experiments

Argues LLMs are excellent at the formal patterns of language but unevenly competent at the functional reasoning behind it; pushes back on conflating fluency with thinking.

We argue that LLMs are good at formal linguistic competence but inconsistent at functional linguistic competence: the latter requires more than next-token prediction.

Kyunghyun Cho

NYU professor; Genentech; encoder-decoder pioneer

Argues current LLMs are useful tools but not paths to AGI; criticizes the framing that scale alone produces general intelligence and the secrecy practices of frontier labs.

I'm tired of the hype. We don't have AGI. We have very useful pattern matchers, and the social effect of pretending otherwise is corrosive.

Luc Julia

Renault Chief Scientist; Siri co-creator

Argues current AI is not intelligent; deployment hype outruns capability. Frames AI as a useful set of statistical tools rather than emerging mind.

“Artificial intelligence does not exist.”

Context: Title and core thesis of his French-language book L'Intelligence Artificielle n'existe pas.

Marc Raibert

Boston Dynamics founder; AI Institute executive director

Skeptical of AGI timelines from a roboticist's perspective; argues physical-world generality is much further than language-only benchmarks suggest, and that the bottleneck is real-world data.

Robotics is hard. The gap between getting something to work in simulation and getting it to work in the real world has not narrowed nearly as much as the language-AI hype suggests.

Margaret Boden

Sussex emerita; cognitive science of AI

Argues general intelligence requires forms of understanding (autonomy, embodiment, creativity) that current ML does not approach; warns against equating large model behaviour with the foundations of mind.

Behaviour can imitate understanding without instantiating it. Cognitive science exists precisely to keep that distinction in view.

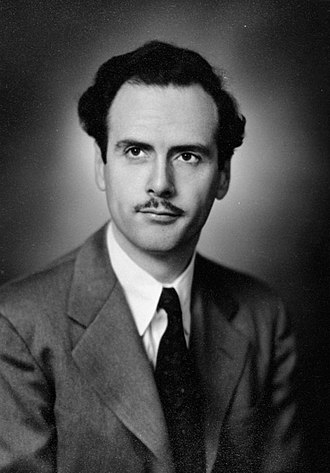

Marshall McLuhan

Media theorist; foundational AI-and-media reference

Foundational thinking on how communication technologies reshape what they carry. The 'AI as medium' framing draws heavily on McLuhan.

“We shape our tools and thereafter our tools shape us.”

Context: Often attributed to McLuhan via Understanding Media; the precise wording is John M. Culkin's, from 1967, but the framing is McLuhan's.

Matteo Wong

The Atlantic associate editor; AI critic

Frames AI for literary audiences; treats hype with skepticism while taking real capabilities seriously.

The most important thing AI does is reshape how we think about thinking. The economic effects come later.

Meghan O'Gieblyn

Essayist; 'God, Human, Animal, Machine'

Argues AGI discourse inherits and re-enacts religious frames (incarnation, eschatology, the soul), and that recognising those origins changes what we should make of the predictions on offer.

Most of the questions we ask about AI, what it knows, whether it has a soul, what we owe it, were first asked by theologians. We have not stopped being theological; we have only forgotten that we are.

Melanie Mitchell

Santa Fe Institute professor; author of 'Artificial Intelligence: A Guide for Thinking Humans'

Argues intelligence requires abstraction, analogy, and embodied understanding that LLMs do not currently possess.

The real intelligence we want our machines to have, flexible, abstract, analogical reasoning, is far beyond current systems.

Meredith Whittaker

President of Signal; co-founder of the AI Now Institute

Frames the extinction narrative as a distraction from present corporate-power harms.

“My concerns are more about the people, institutions and incentives that are shaping AI than they are about the technology itself, or the idea that it could somehow become sentient or God-like.”

Arguments about existential AI risk are implicitly arguing that we need to wait until the people who are most privileged now, who are not threatened currently, are in fact threatened before we consider a risk big enough to care about.

Michael I. Jordan

Berkeley ML pioneer; 'the AI we have is not the AI we imagined'

Argues the contemporary term 'AI' confuses many distinct technologies and that framing it as singular-Intelligence is misleading.

The AI we have is not the AI we imagined. And the rhetorical conflation of statistical pattern recognition with intelligence is harmful.

Michael Wooldridge

Oxford computer science department head

Argues AI has had boom-and-bust cycles and that the current cycle is likely to over-promise on AGI.

“AI researchers have spent huge amounts of effort and money and repeatedly claimed to have made breakthroughs that bring the dream of intelligent machines within reach, only to have their claims exposed as hopelessly overoptimistic.”

Mike Knoop

Co-founder ARC Prize; ex-Zapier

Argues frontier LLM benchmarks have been collapsing into 'memorization plus retrieval' and that ARC-AGI shows current systems are not on a smooth path to general intelligence.

Existing frontier models score under 50% on ARC-AGI puzzles that are easy for humans. The gap reveals what 'general intelligence' really demands beyond scale.

Moxie Marlinspike

Signal co-founder; cryptographer

Engineer-grade skepticism about AI trajectories; specifically worries about supply-chain risk from AI-assisted code.

If AI writes the code, who audits the AI?

Naomi Klein

Author of This Changes Everything; AI-and-climate critic

Argues AI is a tool of dispossession and despoilation, and that the 'hallucination' is not the model but the industry's promises.

In a reality of hyper-concentrated power and wealth, AI is much more likely to become a tool of further dispossession and despoilation.

A world of deep fakes, mimicry loops and worsening inequality is not an inevitability but a set of policy choices.

Nello Cristianini

Bath University; ML pioneer; 'Shortcut' author

Argues modern AI represents a 'shortcut' to behavior that mimics intelligence without recreating its mechanisms; understanding the difference is essential to anticipating risks and capabilities.

Modern AI is a shortcut. We did not solve intelligence; we found ways to produce useful behaviour without it. The risks of this shortcut differ from the risks of human-like minds.

Nicolas Perrin-Gilbert

Inria; embodied AI; co-founder of Genesys Robotics

Argues language-only training underestimates how much intelligence relies on physical embodiment; embodied robotics is a slower but more honest research path.

Disembodied LLMs can mimic many features of intelligence without acquiring the structural understanding that physical interaction with the world produces. We need both, but the latter is what we are skipping.

Noam Chomsky

Linguist; LLM skeptic

Publicly argues LLMs are sophisticated plagiarism engines rather than intelligences; dismisses near-term AGI.

ChatGPT is basically high-tech plagiarism and a way of avoiding learning.

Oren Etzioni

Founding CEO of AI2; UW professor

Argues AI extinction risk is overstated while endorsing near-term regulation and deepfake-detection tools.

AI is a long way from being able to spontaneously form its own goals or acquire resources to pursue them.

Paul Bloom

Yale and University of Toronto; cognitive science of AI moral status

Argues people are too quick to anthropomorphise AI; psychological research shows our moral intuitions about machines are systematically miscalibrated.

Our minds are designed to attribute agency and feeling to things that move with apparent purpose. AI systems exploit those inclinations far better than we appreciate.

Pedro Domingos

UW emeritus; The Master Algorithm author

Publicly critical of existential-risk framings; argues the bigger risk is under-adoption and illiberal regulation.

AI's greatest risk is not having enough of it.

Ramin Hasani

Liquid AI CEO; liquid neural networks pioneer

Skeptical that transformer-only scaling is the path to AGI; builds alternative architectures.

The default narrative that transformer scale-up leads to AGI is probably wrong. Architectural diversity matters.

Raphaël Millière

Macquarie University philosopher of cognitive science

Argues philosophical questions about LLM cognition cannot be settled by behavioural tests alone; defends careful operationalization of concepts like 'understanding' against both inflationary and deflationary readings.

It is tempting to declare LLMs either trivially intelligent or trivially mindless. Neither verdict survives careful philosophical analysis of what these systems actually do.

Robin Hanson

GMU economist; Age of Em author

Argues against 'foom' scenarios; AI progress will be gradual and economically driven, favouring existing market and regulatory equilibria.

Foom scenarios are extremely unlikely. AI will progress by ordinary competitive dynamics.

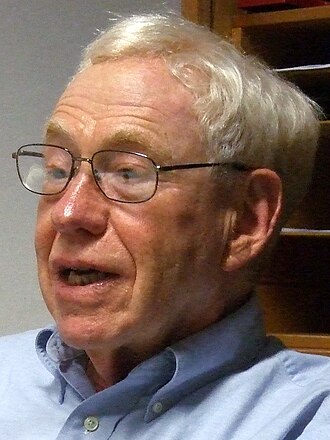

Rodney Brooks

MIT professor emeritus; iRobot co-founder; AI skeptic

Argues AI extinction-risk debates are dominated by people who haven't built AI, and that LLMs cannot reason.

“LLMs do not reason, by any reasonable definition of reason.”

Ronen Eldan

Microsoft Research; 'TinyStories' author; mathematician

Argues much of LLM behaviour can be replicated with much smaller, narrower models when training data is carefully curated; rejects the idea that scale is necessary.

TinyStories shows that small models can produce coherent, grammatical, and creative text when trained on a constrained synthetic corpus. The dependency on scale is more about diversity of training distribution than fundamental capability.

Ross Douthat

NYT columnist; conservative AI commentator

Pushes religious and humanist framings of AI risk; concerned about AI's effect on meaning more than extinction.

The question is not whether AI is dangerous; it's whether we know what we want from it.

Sara Hooker

Former Cohere VP of Research; 'Hardware Lottery' author

Argues compute-threshold governance and scale-focused framings obscure the actual research drivers of capability.

Ideas in AI often succeed or fail based on whether they happen to fit existing hardware, rather than their inherent merit.

Compute thresholds as a governance strategy have serious limitations and may miss the risks they were meant to catch.

Sean Carroll

Johns Hopkins / Santa Fe; physicist and Mindscape host

Argues current LLMs are not on a smooth path to general intelligence; engages seriously with x-risk arguments but views many specific scenarios as physically and economically implausible.

I'm willing to take seriously that AI is a really big deal. I'm not willing to grant that the specific paths to doom you imagine have anything like the probability you assign to them.

Sherry Turkle

MIT social scientist; AI and loneliness researcher

Argues AI companions degrade human capacity for empathy and authentic connection, particularly in young people.

“AI is the greatest assault on empathy I have ever seen.”

Steven Pinker

Harvard psychologist; AI-doom skeptic

Argues AI-extinction fears rest on implausible conflation of intelligence with domination and on sci-fi priors.

Intelligence is not the same as power. The doomsday scenarios conflate the two.

Stuart Ritchie

Psychologist and science journalist; AI-risk skeptic

Treats the existential risk literature sympathetically but pushes back on specific numerical claims.

I take AI risk seriously, but I'm not sure the quantitative arguments for high p(doom) are as rigorous as they present.

Subbarao Kambhampati

ASU professor; 'LLMs Can't Plan' advocate

Argues autoregressive LLMs cannot plan or reason in any formal sense; advocates LLM-Modulo frameworks where LLMs are combined with symbolic verifiers.

Auto-regressive LLMs cannot, by themselves, do planning or self-verification. They are approximate knowledge sources, not reasoners.

When you obfuscate the names of actions and objects in planning problems, GPT-4's performance plummets.

Sundar Sarukkai

Bangalore-based philosopher of science

Argues Western framings of AI cognition do not match Indian and other non-Western philosophical traditions; pushes for plurality in AI ethics.

AI ethics has been written in one philosophical tradition. Other traditions have things to say.

Ted Chiang

Science fiction writer; 2023 Time 100 AI honoree

Frames LLMs as lossy compression of human language and argues the interesting AI governance question is about corporate capture, not emergent agency.

“ChatGPT is a blurry JPEG of the web.”

Will A.I. Become the New McKinsey? Applying A.I. to the real world is a form of economic outsourcing.

Thomas Dietterich

Oregon State emeritus; AAAI past president

Argues mundane reliability failures, not superintelligence takeover, are the real AI risk.

“The biggest risk is that those algorithms may not always work. We need to be conscious of this risk and create systems that can still function safely even when the AI components commit errors.”

Timnit Gebru

Founder of DAIR; co-author of 'Stochastic Parrots'

Argues the AI extinction narrative diverts attention from immediate harms: labour exploitation, data extraction, and discriminatory deployment.

We urge the signatories of the FLI letter to be mindful of the hype surrounding the power of AI, and to focus on the actual harms that are being done.

Context: DAIR statement in response to the FLI Pause letter.

Tony Fadell

iPod creator; Nest founder; AI hardware critic

Argues current LLMs are unreliable for high-stakes use; calls for specialised, transparent, government-supervised systems instead.

“Right now we're all adopting this thing and we don't know what problems it causes.”

Trevor Hastie

Stanford statistics; ML pioneer

Argues classical statistical learning principles still constrain what deep learning can do reliably; warns that ignoring those constraints in deployed systems leads to predictable failures.

Most of the lessons of statistical learning still apply to neural networks: bias-variance trade-offs, regularization, distribution shift. Pretending these have been transcended is how we get unreliable systems in production.

Tristan Greene

Tech journalist; AI deep dive coverage

Reports on AI from a science-skeptic angle; pushes back on capability hype with reproducibility questions.

Most AI breakthrough headlines wouldn't survive a rigorous reproduction.

Yann LeCun

Chief AI Scientist at Meta; outspoken AI-doom skeptic

Holds that the AI-extinction narrative is unfounded; frames the debate as a values discussion about control by large labs, not a technical risk.

“You're going to have to pardon my French, but that's complete B.S.”

Context: Response when asked by the Wall Street Journal whether AI could become smart enough to pose a threat to humanity.

Before we worry about controlling super-intelligent AI, we need to have the beginning of a hint of a design for a system smarter than a house cat.

Yannick Kilcher

YouTuber; ML paper explainer; ex-DeepJudge

Argues much of LLM research is overfit to benchmarks and underexamined for fundamental novelty; explains both capability and safety papers with technical specificity for developer audiences.

When I read a new paper, the first question is always: is this real, or is the impressive number coming from something obvious about the evaluation? You'd be surprised how often it's the latter.

3 more on the record. The page renders the first 80 alphabetically; the rest live in the full directory, filterable by this tag.