strategy tag

Governance first.

Lead with regulation, treaties, and liability regimes.

stated endorsers: 252 (no opposers yet)

profiled endorsers: 53 (248 on the board total)

endorser mean p(doom): 35% (n=2 · median 35%)

quotes by endorsers: 262 (just for this tag)

principal voices

Highest-recognition profiled endorsers, with ties broken by quote count. Inclusion does not mean this page endorses the position; it reflects who the discourse turns to when the bet is debated.

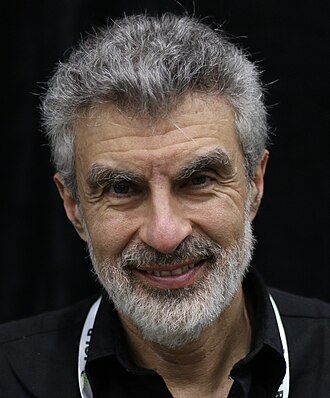

Yoshua Bengio · Household name

Demis Hassabis · Household name

Sam Altman · Household name

Gary Marcus · Household name

Mustafa Suleyman · Household name

where the endorsers sit on the board

53 of 248 profiled · 21% of the board

| expertise ↓ · recognition → | Household name | Field-leading | Established | Emerging |
|---|---|---|---|---|
| Frontier builder | · | | | |
| Deep technical | · | | | |
| Applied technical | · | · | | |
| Policy / meta | · | | | |
| External-domain expert | · | · | | |
| Commentator | · | · | · | · |

Each face is one profiled person. Cell shade intensifies with endorser density. Faces marked × are profiled opposers: same tier, opposite position. Empty cells mark tier combinations the field has not produced for this bet.

also held by these endorsers

What other strategies the same people endorse. Behavioural signal of compatibility, not a declared rule. A high share means the two positions are routinely held together.

- Existential primacy (5 · 2%): extinction/disempowerment risk overrides ordinary cost-benefit.
- AI skeptic (2 · 1%): AGI risk narratives are overstated; the real harms are mundane and current.

Compare this list to the declared relations matrix. Where they differ, the data reveals a pairing the framework doesn't name yet. The global co-endorsement view ranks all pairs.

Tier mix counts only endorsers (endorses, mixed, conditional, evolved-toward).

expertise mix of endorsers · 53 profiled of 252

recognition mix of endorsers

vintage mix · n=53 of 53 profiled with era assigned

Vintage is the era in which a person's AI worldview formed, ranging from pioneer through post-ChatGPT. A bet held mostly by post-ChatGPT entrants is in a different epistemic state from one held by pre-deep-learning veterans.

People on the record: 252

Abeba Birhane

Mozilla Foundation senior advisor; AI ethics researcher

Argues dataset-level audits are the tractable governance lever and that 'AGI' rhetoric is harmful to minoritised users.

The dataset is the system. Audit the dataset.

Adam Tooze

Columbia historian; Chartbook newsletter

Argues AI governance is fundamentally a question of macroeconomic and geopolitical strategy; treats the China-U.S. tech competition as the structural frame within which AI policy will be set.

AI is unfolding within a configuration of state power, capital, and infrastructure that is already in motion. Treating it as a free-floating technology to be governed in the abstract misses where the action is.

Adrian Weller

Cambridge professor; Alan Turing Institute fellow

Bridges technical ML research and UK government AI policy work; argues evidence-based regulation is the durable framework.

Evidence-based AI policy beats principles-based AI policy when the evidence is there. We just have to invest in producing the evidence.

Adrienne LaFrance

The Atlantic executive editor; technology critic

Frames AI governance around democratic epistemics and civic resilience rather than around extinction or optimism.

The question isn't whether AI will change democracy. It is whether we will have functioning democracy afterwards.

Adrienne Williams

Former Amazon warehouse worker; AI labour activist

First-hand voice for workers surveilled by AI; argues those affected should lead policy.

I was the AI's training data. The people building AI for warehouses have never worked in one.

Akash Wasil

Encode Justice; AI policy advocate

Argues U.S. policy must catch up to capability progress; supports legally enforceable safety standards rather than purely voluntary frameworks.

We are losing the race between capability and policy. Legally enforceable safety standards, with real consequences for violations, are the only way to close that gap.

Albert Fox Cahn

Surveillance Technology Oversight Project (S.T.O.P.) founder

Litigates against AI-enabled surveillance; argues current US law allows surveillance practices that would have been unthinkable a decade ago.

AI surveillance is rolling out faster than the laws to govern it. The gap is the danger.

Alex 'Sandy' Pentland

MIT Connection Science director; computational social science

Argues data is collective property and should be governed via 'data cooperatives' rather than corporate ownership.

Data should be treated as a community asset. Data cooperatives are the institutional form that follows from that.

Alex Kantrowitz

Big Technology podcast host; tech journalist

Reports on AI from a measured tech-business angle; pushes CEOs on accountability without being captured.

The AI industry has not earned the public trust it is asking for. The story is far from settled.

Alex Tamkin

Anthropic societal impact researcher

Publishes on how AI is actually deployed and what its societal-impact patterns are, using concrete data rather than speculative framings.

Measuring how models are actually used is the prerequisite for credible societal impact claims.

Allan Dafoe

DeepMind Frontier Safety and Governance lead

Argues AI governance must reckon with strategic incentives: lab races, great-power competition, and institutional path dependence.

AI governance needs to be treated as a political-economy problem, not only a technical compliance problem.

Allen Gunn

Executive Director of Aspiration Tech

Organises civil-society-side AI governance work; champions participatory governance over expert-led regulation.

AI governance has to include the people most affected by AI. Otherwise it's just self-regulation.

Alondra Nelson

Former Biden OSTP deputy director; architect of the AI Bill of Rights

Advocated civil-rights-framed AI governance: the Blueprint for an AI Bill of Rights proposes five principles (safe and effective systems; algorithmic discrimination protections; data privacy; notice and explanation; and human alternatives, consideration, and fallback).

“The Blueprint for an AI Bill of Rights is for everyone who interacts daily with these powerful technologies, and every person whose life has been altered by unaccountable algorithms.”

Amartya Sen

Harvard economist; capability approach pioneer

Argues AI evaluation must be grounded in human capabilities, what people can do and become, not just narrow technical or economic metrics.

Development is about expanding capabilities. AI should be evaluated by how it expands human capabilities.

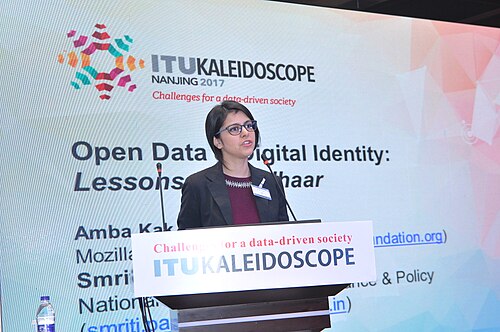

Amba Kak

Co-director of the AI Now Institute

Argues AI governance is primarily a political-economy problem and that reform must go beyond procedural 'safety' framings.

AI policy has been captured by the industry being regulated. The question is who governs the governors.

Amy Zegart

Stanford Hoover senior fellow; national security and AI

Argues AI is transforming intelligence work; national security institutions must adapt to AI as infrastructure.

Intelligence agencies are now picking through huge haystacks for one or two needles of insight, and that's precisely the kind of project at which AI excels.

Andrew Trask

Founder of OpenMined; privacy-preserving AI

Argues privacy-preserving AI is the technical substrate for AI that can be both open and safe.

Structured transparency, letting outsiders verify that an AI system has the properties it claims, without exposing the data, is the missing layer of AI governance.

Andrew Yang

Former US presidential candidate; Forward Party founder

Advocates for UBI and new labour-market institutions in response to AI automation; signed the Pause letter.

Automation is not on its way. It's here. We need a Freedom Dividend to respond.

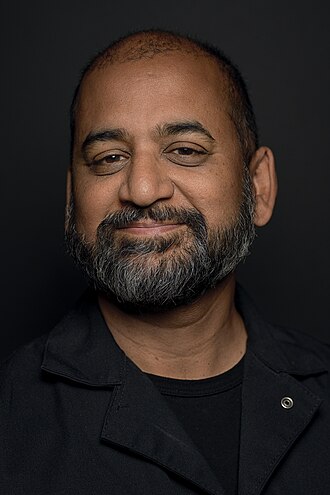

Anil Dash

Glitch former CEO; technology culture writer

Argues tech regulation should be grounded in civil-society frameworks; has criticized 'AI' as a marketing category that obscures specific harms.

'AI' is marketing. The actual question is whose data, whose labour, and whose rules.

Anita Allen

UPenn law professor; privacy and AI

Argues legal-philosophical privacy frameworks are foundational to AI governance, not just technical privacy mechanisms.

Privacy theory is not a luxury for AI. It is the precondition of AI policy that protects human dignity.

Anna Bacciarelli

Human Rights Watch senior researcher; formerly Amnesty International

Argues AI governance must be grounded in existing international human-rights law, with particular focus on non-discrimination and surveillance.

The Toronto Declaration sets out tangible and actionable standards for states and the private sector to uphold the principles of equality and non-discrimination under binding human rights laws.

Anna Eshoo

Former US Representative (CA); AI Foundation Model Transparency Act sponsor

Architect of the AI Foundation Model Transparency Act; advocates for structured transparency over permission-based regulation.

“Transparency into how AI models are trained and what data is used to train them is critical for consumers and policy makers.”

Anna Makanju

OpenAI VP of Global Impact; policy veteran

Argues OpenAI engages proactively with governments and advocates measured, risk-tiered regulation.

AI policy needs to be built by people who understand both the technology and the geopolitics.

Anthony Albanese

Prime Minister of Australia (2022–)

Cautious supporter of AI regulation; aligns Australia with "mid-Atlantic" positions on frontier-model governance, stronger than the U.S. and softer than the EU.

Australia must shape the rules around AI rather than be a passive recipient of them. That means working with both our allies and our region.

Anu Bradford

Columbia Law professor; 'The Brussels Effect' author

Argues the EU AI Act will propagate globally via the Brussels Effect, regardless of US action.

The Brussels Effect operates on AI as on every other regulated technology: when the EU regulates a global market, that regulation becomes the global standard.

Arvind Krishna

CEO of IBM

Supports accountability-focused AI regulation; opposes rules that create unpredictability for business.

Companies that put out AI models should be held accountable to their models.

Azeem Azhar

Exponential View founder; tech-economy analyst

Argues institutional capacity to absorb AI is the binding constraint on whether AI is net positive.

We have exponential technology in linear institutions. The gap is the governance problem.

Barath Raghavan

USC professor; digital infrastructure and AI energy

Argues AI energy consumption must be treated as a first-class infrastructure cost, with accountability for usage.

If AI consumes 10% of world electricity, we should decide that consciously, not as an emergent property.

Ben Buchanan

Former White House AI Special Advisor (2021–2025)

Architect of chip export controls and the 2023 AI executive order; argues national security and AI safety are inseparable.

Export controls are the most important tool the United States has on frontier AI.

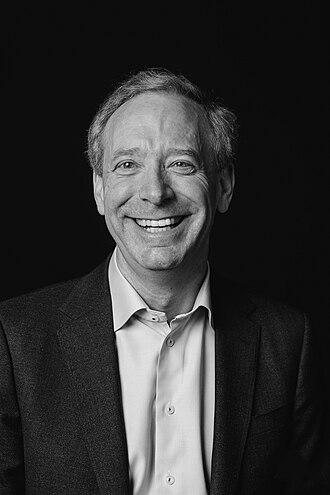

Brad Smith

Microsoft Vice Chair and President

Supports licensing of frontier models, export controls on advanced chips, and an internationally coordinated oversight regime.

We need to slow down, not stop, so that we can put in place the guardrails that a technology this powerful demands.

Brad Templeton

Long-time tech journalist; self-driving cars critic

Bridges technical engineering and AI policy on transport. Argues self-driving safety claims need real-world validation, not just simulation.

Real-world miles matter. AVs that are safer in simulation than on roads need scrutiny.

Brando Benifei

MEP; EU AI Act co-rapporteur

Argued for stricter rules on foundation models and biometric surveillance during the AI Act trilogues; framed AI regulation as a fundamental-rights protection mechanism.

AI must be human-centric and rights-based. Without rules, the technology will reshape our societies according to whatever values its developers happen to hold.

Bret Taylor

Chairman of OpenAI; co-CEO of Sierra

As OpenAI Chair, has emphasised structured governance and board independence; co-led the board reform that followed Sam Altman's brief 2023 ouster.

OpenAI's mission requires governance that can survive disagreement among its board.

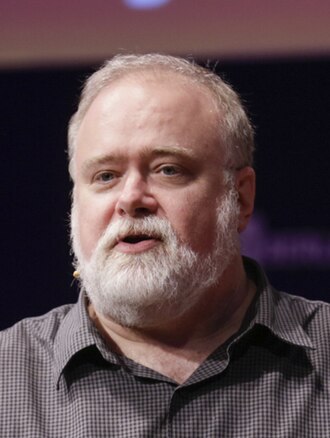

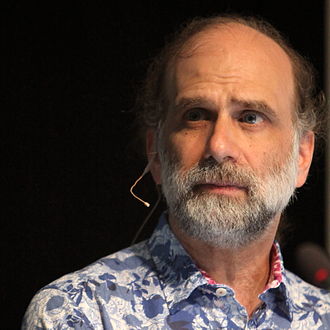

Bruce Schneier

Security guru; AI security and democracy critic

Argues AI security and AI democracy questions overlap; advocates structural changes to platform power.

We're being conditioned by AI in ways we don't yet understand. The political-economic question is who builds the AI we are conditioned by.

Carina Prunkl

Utrecht AI ethics researcher; former FHI

Argues AI ethics must engage more robustly with broader structural and political factors, not just algorithmic properties.

AI ethics has largely focused on algorithmic properties; we need to zoom out to structural and political context.

Carl Benedikt Frey

Oxford economist; 'The Future of Employment' author

Argues AI's labour-market impact demands a policy response; complements the AI x-risk agenda with economic-welfare concerns.

“About 47 percent of total US employment is in the high-risk category, meaning associated jobs could be automated in the next decade or two.”

Context: From the landmark 2013 Frey–Osborne paper.

Carlos Ignacio Gutierrez

Future of Life Institute AI policy researcher

Maps the comparative AI legislative landscape across jurisdictions.

Without comparative AI legislative analysis, jurisdictions repeat each other's mistakes.

Carme Artigas

Spanish AI and Digital Agenda Secretary; AI Advisory Body co-chair

Chief negotiator of the EU AI Act and co-chair of the UN AI Advisory Body's global-governance work.

We negotiated the EU AI Act with one principle: human rights are non-negotiable.

Carolyn Rouse

Princeton anthropology chair; AI sociology

Argues AI ethics requires deep sociological grounding, particularly on race and historical inequality.

AI ethics without sociology produces frameworks that are blind to the structural conditions in which AI is deployed.

Casey Newton

Platformer founder; Hard Fork co-host

Reports on AI policy and AI-lab politics; a generally pro-regulation pragmatist.

The AI companies are going to police themselves exactly as well as every past industry has, which is to say, not at all.

Catelijne Muller

ALLAI president; EU AI Act civil-society voice

Argues the EU's risk-based regulatory approach should be the global template; pushed for stronger civil-society participation in the AI Act trilogues.

Trustworthy AI must be lawful, ethical and robust. The EU AI Act is the world's first comprehensive attempt to make these requirements binding.

Cathy O'Neil

Mathematician; Weapons of Math Destruction author

Argues algorithmic systems must be audited and their harms to vulnerable populations must be measured and mitigated.

“Models are opinions embedded in mathematics.”

“The human victims of WMDs are held to a far higher standard of evidence than the algorithms themselves.”

Chinasa T. Okolo

Brookings fellow; African Union AI strategy contributor

Contributed to the African Union's Continental AI Strategy. Argues AI governance in Africa cannot be imported wholesale from OECD frameworks.

AI is not Africa's savior. Avoiding technosolutionism in digital development requires AI governance rooted in African contexts.

Chinmayi Arun

Yale ISP fellow; Indian tech policy scholar

Argues AI governance must take non-US, non-EU legal systems seriously; frames AI policy through a comparative constitutional law lens.

AI governance frameworks built on US and EU constitutional premises often don't translate. Indian, Brazilian, and South African jurisprudence has its own grip on these problems.

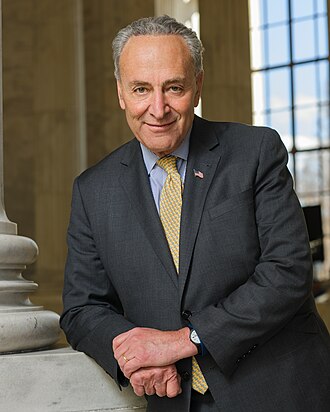

Chuck Schumer

US Senate Majority Leader (2021–2024); architect of the SAFE Innovation Framework

Pushed the SAFE framework (Security, Accountability, Foundations, Explain) as the basis for federal AI legislation; organised bipartisan AI Insight Forums.

We need an all-hands-on-deck effort to contend with AI.

Claire Leibowicz

Partnership on AI; AI and media

Argues synthetic-media governance (provenance, disclosure, liability) is the most tractable live AI governance problem.

Provenance and disclosure are the foundational trust layer for AI-in-media.

Dame Wendy Hall

Southampton professor; UK AI policy author

Co-authored the foundational UK AI strategy report (2017) and continues to advise on UK AI policy.

The UK can lead in AI if we treat it as a sovereign capacity, not a technology to be imported.

Darío Gil

SVP and Director of IBM Research

Advocates shared open benchmarks and standards as the backbone of AI governance.

Open science and open standards are the backbone of trustworthy AI.

Daron Acemoglu

MIT economist; 2024 Nobel laureate

Argues AI must be redirected toward human augmentation via policy, antitrust, and labour-market mechanisms.

Progress depends on the choices societies make about technology. We have to choose human-complementary AI, or else it will be chosen for us.

David Krueger

Cambridge professor; AI extinction risk advocate

Calls for binding international governance and argues that voluntary commitments from frontier labs are structurally insufficient.

Voluntary commitments from frontier labs are structurally unreliable. We need binding external constraints.

Deep Ganguli

Anthropic societal impact lead

Argues meaningful safety work must include societal-impact measurement alongside technical evaluations.

We cannot align AI with the right human values until we measure what it does to society when deployed.

Deepak Padmanabhan

Queen's University Belfast; AI responsibility

Argues AI responsibility must address structural patterns, not just model-level metrics.

Responsible AI needs to look at systems in context, not just at models on a bench.

Demis Hassabis

CEO of Google DeepMind; 2024 Nobel laureate

Calls for international coordination on frontier AI, framed around immediate bio/cyber misuse risk plus longer-term autonomous-system risk.

We should think of aligning AI like raising a child, guardrails and values have to come together.

Context: CBS 60 Minutes interview with Scott Pelley.

Artificial intelligence could end disease and lead to radical abundance.

Diane Coyle

Cambridge economist; Bennett Professor of Public Policy

Argues GDP-style measurement frameworks need overhaul to capture AI's economic effects; without measurement, governance is blind.

We are governing AI based on outdated economic indicators that don't measure most of what AI is doing.

Divya Shrivastava

RAND Corporation AI safety policy researcher

Contributes technical-risk analysis to RAND's AI-biosecurity and cyber research.

The near-term catastrophic AI risks we can actually measure (biosecurity uplift, cyber offence) should ground policy, not speculative framings.

Dominic Cummings

Former UK No. 10 chief adviser; AI policy commentator

Writes on the weakness of UK and US governance in responding to frontier AI; argues for professionalised expert teams in government.

Western states are dangerously underpowered to handle frontier AI. The machinery of state needs technical teams, not more committees.

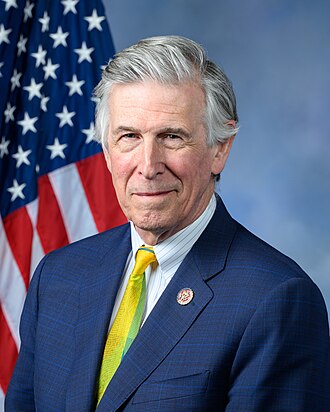

Don Beyer

US Representative (VA); AI Foundation Model Transparency Act sponsor

A member of Congress with technical AI training; co-sponsored transparency-first AI legislation.

We cannot regulate what we cannot audit. Transparency about training data and model characteristics is the minimum.

Dorothy Denning

Georgetown emeritus; cybersecurity pioneer

Brings cybersecurity grounding to AI governance; argues AI creates new attack surfaces that existing defense doctrine does not cover.

AI is both a tool for defenders and a tool for attackers. The balance depends on the deployment context.

Dragoș Tudorache

MEP; EU AI Act co-rapporteur

Argued AI regulation must be horizontal and risk-based; co-shaped the EU AI Act's tiered framework that distinguishes prohibited, high-risk, and limited-risk uses.

The EU AI Act is the world's first comprehensive AI regulation. We chose a risk-based approach because we wanted to regulate uses of AI, not the technology itself.

Ed Newton-Rex

Fairly Trained founder; ex-Stability AI

Runs Fairly Trained, which certifies generative AI models trained on consented data; argues the fair-use defence is structurally wrong for generative AI.

“I resigned from Stability AI because I disagree with the company's position that training generative AI models on copyrighted works is 'fair use'.”

Edward Felten

Princeton emeritus; ex-FTC Chief Technologist

Argues AI policy should be built on technical literacy in government; technologists need to be inside agencies to make policy implementable rather than performative. Frames AI governance as a continuity of decades of computer-and-society policy work.

Good tech policy requires technologists in government, not just outside advisors. The detail of what AI systems actually do is where policy succeeds or fails.

AI governance is not a new field. It is a continuation of decades of computer-and-society policy work.

Edward Harris

Gladstone AI co-founder

Authored policy recommendations including export controls on frontier compute and mandatory model evaluations.

The US should create a frontier AI regulatory agency with compute licensing authority.

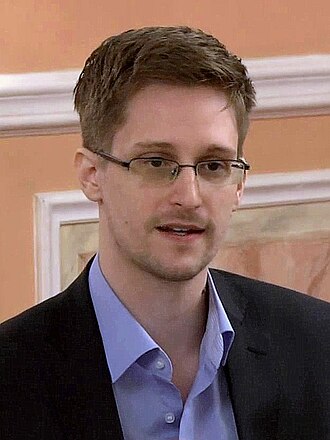

Edward Snowden

NSA whistleblower; AI surveillance critic

Argues AI massively expands the surveillance possibilities he warned about a decade ago. Calls for civil-liberty-grounded constraints.

AI is the most powerful surveillance technology ever invented. The threat model has changed; the law has not.

Eliza Strickland

IEEE Spectrum senior editor; AI Spectrum

Reports on AI from an engineering-society lens; pushes for measurable, auditable AI deployment.

Engineering AI requires engineering accountability. Right now, marketing is outpacing both.

Elizabeth Kelly

Founding director of the US AI Safety Institute

Framed the US AI Safety Institute's mission around 'advancing the science of AI safety' via evaluations, red-teaming, and international coordination.

“Safety enables trust, which enables adoption, which enables innovation.”

Emily Grumbling

Former AI policy advisor; National Academies staff

Frames US AI governance as a cross-cutting interagency challenge requiring better expertise and coordination mechanisms.

Federal AI expertise is unevenly distributed; the interagency coordination task is bigger than people appreciate.

Emma Strubell

CMU professor; energy cost of AI pioneer

Argues energy and carbon should be first-class constraints on AI training.

Training a single large NLP model can emit as much carbon as five cars over their lifetimes.

Erie Meyer

Former CFPB Chief Technologist

Argues enforcement of existing consumer-protection law is underused against AI harms.

There is no AI exemption in the Equal Credit Opportunity Act.

Evan Greer

Fight for the Future director; digital rights activist

Frames AI policy as a digital civil-rights battle; mobilises grassroots opposition to surveillance AI.

Big Tech wants you to debate whether AI will kill us all in 50 years so you don't notice it is harming you today.

Evan Williams

Twitter co-founder; Medium founder

Publicly concerned about AI's effect on information ecosystems; cautious about both hype and doom.

We are in the middle of an experiment on the information ecosystem. We should not pretend we have consent to run it.

Frank Pasquale

Brooklyn Law; Black Box Society

Proposed four 'New Laws of Robotics', including that robots should complement humans rather than counterfeit them and that humans must retain accountability.

Robots should not counterfeit humanity; they should complement it.

Gabriel Weinberg

Founder and CEO of DuckDuckGo

Argues AI surveillance is a civil-rights emergency and should be banned before it is entrenched.

“AI surveillance should be banned while there is still time. All the same privacy harms with online tracking are also present with AI, but worse.”

Garrison Lovely

Journalist covering AI safety and EA

Reports on the AI-safety movement from a left-wing labour perspective; combines x-risk seriousness with labour-politics framing.

The AI debate is not just about whether we survive, but about who controls what survives.

Gary Marcus

Cognitive scientist; LLM skeptic; regulation advocate

Argues for an FDA-style pre-deployment safety review, a nimble monitoring agency with pullback authority, and mandatory transparency.

“The big tech companies' preferred plan boils down to 'trust us'. Why should we?”

Context: Senate testimony on AI oversight.

“We are facing a perfect storm of corporate irresponsibility, widespread deployment, lack of adequate regulation, and inherent unreliability.”

Geoffrey Cain

Author of 'The Perfect Police State'

Documents how AI surveillance is already deployed in authoritarian contexts; argues governance frameworks must address this present reality.

Xinjiang is a glimpse of what AI in the hands of an authoritarian state actually looks like.

Gillian Hadfield

University of Toronto; 'regulatory markets' theorist

Argues the standard harms-regulation paradigm is necessary but insufficient; proposes private regulatory markets as a scalable complement.

Regulatory markets require the targets of regulation to purchase regulatory services from a private regulator, which competes on quality of regulation.

Hadrien Pouget

Carnegie Endowment; EU AI Act translator-in-chief

Argues U.S. policymakers underestimate how much the EU AI Act will set de facto global standards; calls for U.S. policy that engages substantively rather than dismissing Brussels.

The EU AI Act is going to shape the global market for advanced AI whether U.S. firms like it or not. The substantive question is which provisions are exportable and which are uniquely European.

Hany Farid

UC Berkeley professor; digital forensics pioneer

Advocates for content provenance standards (C2PA) and universally applied media-detection infrastructure.

The problem with deepfakes is not the fakes. It's that every real thing now has plausible deniability.

Haydn Belfield

Cambridge CSER academic project manager

Bridges Cambridge x-risk research and UK policy; helps design third-party AI evaluation frameworks.

Third-party AI evaluation is an under-developed governance primitive that the next decade of AI policy will be built on.

He Jianfeng

China Academy of Information and Communications Technology researcher

Contributes to Chinese AI standards work and participates in international AI governance dialogues.

China's AI governance framework is evolving in conversation with international standards, not in isolation.

172 more on the record. The page renders the first 80 alphabetically; the rest live in the full directory, filterable by this tag.