strategy tag

Existential primacy.

Extinction/disempowerment risk overrides ordinary cost-benefit

stated endorsers

76

no opposers yet

profiled endorsers

52

248 on the board total

endorser mean p(doom)

28%

n=11 · median 20%

quotes by endorsers

82

just for this tag

principal voices

Highest-recognition profiled endorsers, with ties broken by quote count. Inclusion is not endorsement of the position; it is recognition of who the discourse turns to when the bet is debated.

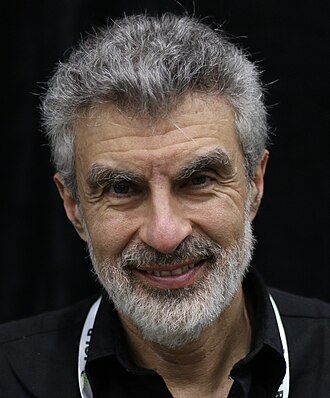

Geoffrey Hinton · Household name

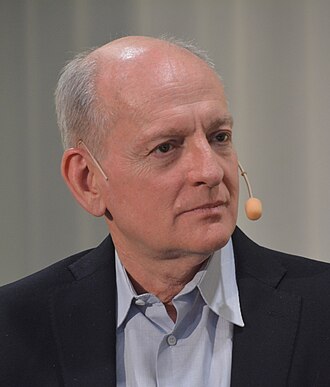

Yoshua Bengio · Household name

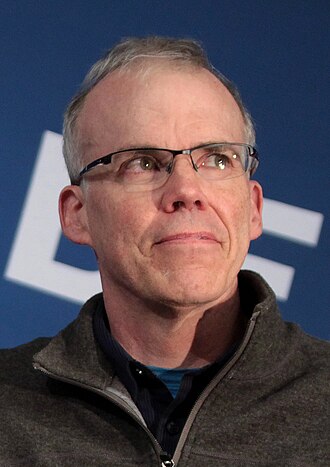

Stuart Russell · Household name

Dario Amodei · Household name

Demis Hassabis · Household name

where the endorsers sit on the board

52 of 248 profiled · 21% of the board

| expertise ↓ · recognition → | Household name | Field-leading | Established | Emerging |
|---|---|---|---|---|
| Frontier builder | · | · | | |
| Deep technical | · | | | |
| Applied technical | · | · | | |
| Policy / meta | · | | | |
| External-domain expert | · | · | | |
| Commentator | · | · | | |

Each face is one profiled person. Cell shade intensifies with endorser density. Faces with × are profiled opposers, same tier, opposite position. Empty cells mark tier combinations the field has not produced for this bet.

also held by these endorsers

What other strategies the same people endorse. Behavioural signal of compatibility, not a declared rule. A high share means the two positions are routinely held together.

- Governance first · 5 · 7%

  Lead with regulation, treaties, liability regimes

- Alignment first · 5 · 7%

  Solve technical alignment before capability thresholds close

- Pause · 3 · 4%

  Halt frontier training until alignment catches up

- Race to aligned SI · 2 · 3%

  Build aligned superintelligence first, before adversaries

Compare this list to the declared relations matrix. Where they differ, the data reveals a pairing the framework doesn't name yet. The global co-endorsement view ranks all pairs.
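The shares in the co-endorsement list can be checked by hand. The sketch below assumes each percentage is the co-endorser count divided by the 76 stated endorsers, rounded to the nearest whole percent. That denominator is an inference from the figures on this page (5 of 76 is about 7%), not a documented rule, and `share_pct` is a hypothetical helper name.

```python
from typing import Dict

# Assumption: shares use the 76 stated endorsers as the denominator,
# not the 52 profiled endorsers (5 of 52 would be about 10%, not 7%).
STATED_ENDORSERS = 76

# Co-endorser counts as listed on this page.
co_endorsed: Dict[str, int] = {
    "Governance first": 5,
    "Alignment first": 5,
    "Pause": 3,
    "Race to aligned SI": 2,
}


def share_pct(count: int, total: int) -> int:
    """Return count/total as a percentage rounded to the nearest integer."""
    if total <= 0:
        raise ValueError("total must be positive")
    return round(100 * count / total)


shares = {tag: share_pct(n, STATED_ENDORSERS) for tag, n in co_endorsed.items()}

# Print the tags from highest to lowest share.
for tag, pct in sorted(shares.items(), key=lambda kv: -kv[1]):
    print(f"{tag}: {co_endorsed[tag]} · {pct}%")
```

The same helper reproduces the board figure above: `share_pct(52, 248)` gives 21, matching "52 of 248 profiled · 21% of the board".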

Tier mix counts only endorsers (endorses, mixed, conditional, evolved-toward).

expertise mix of endorsers · 52 profiled of 76

recognition mix of endorsers

vintage mix · n=52 of 52 profiled with era assigned

Vintage is the era when this person's AI worldview formed, pioneer through post-ChatGPT. A bet held mostly by post-ChatGPT entrants is in a different epistemic state from one held by pre-deep-learning veterans.

People on the record.

76

Alan Robock

Rutgers climate scientist; nuclear winter researcher

Signatory to the Statement on AI Risk, bringing a civilisational-scale-risk scientist's perspective.

“Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war.”

Anca Dragan

UC Berkeley professor; Google DeepMind AI safety lead

Signatory to the Statement on AI Risk.

“Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war.”

Andrew G. Barto

RL co-founder; 2024 Turing Award recipient

Signatory to the Center for AI Safety's Statement on AI Risk.

“Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war.”

Andy Jones

Anthropic researcher; inference scaling laws

Works on empirical scaling laws; measured technical engagement with safety.

Inference-time compute is a new dimension of the scaling curves we hadn't properly mapped.

Audrey Tang

First Digital Minister of Taiwan; pluralism and civic tech

Signatory to the Statement on AI Risk.

“Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war.”

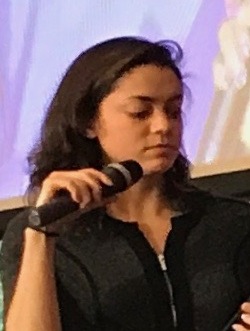

Avital Balwit

Anthropic communications lead; public-facing AI safety voice

Public Anthropic voice on the moral and personal stakes of short-timelines AGI.

“I may have three more years to work.”

Context: Widely-cited Palladium essay about living through short-timeline AGI.

Bill Gates

Microsoft co-founder; AI optimist-with-caveats

Signed the Statement on AI Risk but publicly frames loss-of-control as a longer-term concern.

“There's the possibility that AIs will run out of control. Could a machine decide that humans are a threat, conclude that its interests are different from ours, or simply stop caring about us? Possibly, but this problem is no more urgent today than it was before the AI developments of the past few months.”

“Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war.”

Bill McKibben

Environmental writer; Middlebury scholar

Signatory to the Statement on AI Risk.

“Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war.”

Cade Metz

NYT AI reporter; Genius Makers author

Reports on AI safety as a legitimate mainstream story while interrogating claims from both camps.

Inside Google, Microsoft, and OpenAI, there is real disagreement about what is actually happening.

Clay Graubard

Forecaster; RAND and Good Judgment contributor

Represents measured forecasting-grade views on x-risk; rarely takes strong partisan positions.

Forecasting AI extinction risk under Knightian uncertainty is a different exercise from forecasting under well-defined base rates.

Dan Hendrycks

Director of the Center for AI Safety; drafter of the Statement on AI Risk

Organised the single-sentence Statement on AI Risk to move extinction concern into the Overton window.

“Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war.”

Context: Statement Hendrycks drafted and organised.

Daniela Amodei

President of Anthropic; co-founder

Signatory to the Statement on AI Risk.

“Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war.”

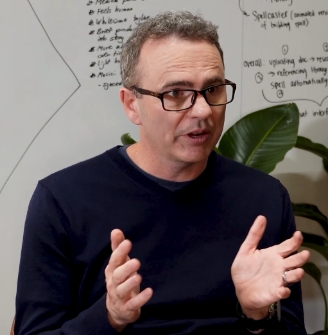

Dario Amodei

CEO of Anthropic; 'Machines of Loving Grace' author

Signatory to the Statement on AI Risk; treats catastrophic misuse and loss of control as primary downside risks.

“Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war.”

David Silver

DeepMind principal research scientist; AlphaGo and AlphaZero

Signatory to the Statement on AI Risk.

“Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war.”

Dawn Song

UC Berkeley professor; AI security researcher

Signatory to the Statement on AI Risk.

“Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war.”

Demis Hassabis

CEO of Google DeepMind; 2024 Nobel laureate

Signatory to the Statement on AI Risk.

“Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war.”

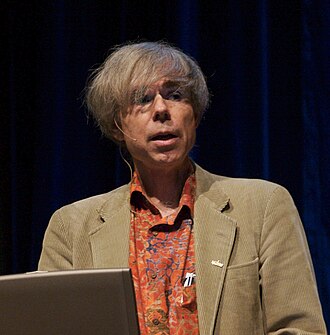

Douglas Hofstadter

Gödel, Escher, Bach author; cognitive scientist

Publicly shifted from dismissive of deep learning to deeply worried; frames the concern partly as loss of human dignity rather than only extinction.

“I think it's terrifying. I hate it. I think about it practically all the time, every single day.”

Context: On modern AI, in a 2023 interview.

If minds of infinite subtlety and complexity and emotional depth could be trivialized by a small chip, it would destroy my sense of what humanity is about.

Dwarkesh Patel

Dwarkesh Podcast host; AI progress commentator

Treats AI risk and AI transformation as live concerns while publicly leaning skeptical of near-term AGI hype.

“25th percentile, maybe 2029, and then 75th percentile, like 2050.”

Context: On his personal AGI timeline.

Eli Lifland

Forecaster; co-author of AI 2027

Publicly reports a ~35% p(doom) and works on detailed AI scenarios.

My p(doom) is around 35%.

Eric Horvitz

Chief Scientific Officer at Microsoft

Signatory to the Statement on AI Risk.

“Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war.”

Erik Brynjolfsson

Stanford HAI; 'Turing Trap' essay

Signatory to the Statement on AI Risk.

“Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war.”

Ezra Klein

New York Times columnist; Ezra Klein Show host

Treats AI risk as a serious mainstream concern while pushing back on the most extreme framings.

The AI safety people spend a lot of time convincing their friends this is serious. I think it is.

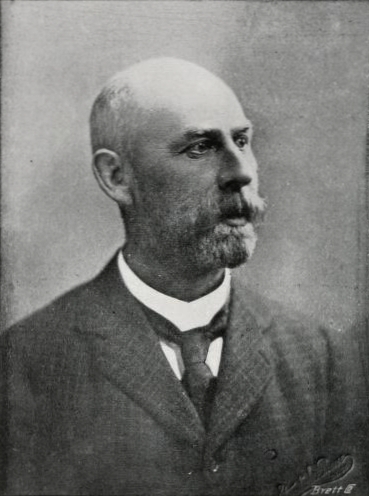

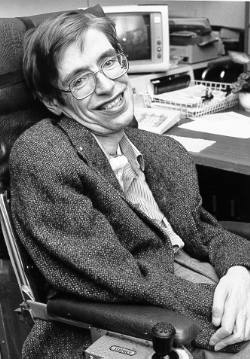

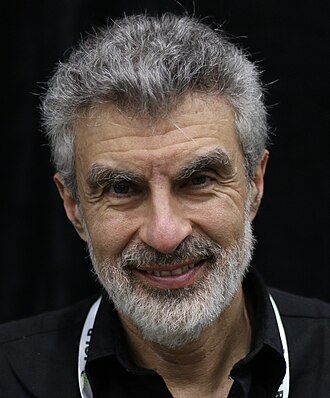

Geoffrey Hinton

Godfather of deep learning; left Google in 2023 to speak about AI risk

Treats AI extinction risk as on par with pandemic and nuclear risk. Was a headline signatory of the CAIS Statement on AI Risk.

“Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war.”

Context: Single-sentence Statement on AI Risk published by CAIS; Hinton was listed first among AI scientists.

Gwern Branwen

Independent researcher; gwern.net

Detailed empiricist analysis of scaling laws and capability jumps; treats AI risk as a quantitative question about takeoff dynamics.

The scaling hypothesis has held across every order of magnitude we have tested.

Huw Price

Cambridge philosopher; CSER co-founder

Helped formalise the philosophical case for existential risk research, including AI.

“It seems a reasonable prediction that some time in this or the next century intelligence will escape from the constraints of biology.”

Ian Goodfellow

DeepMind; inventor of GANs

Signatory to the Statement on AI Risk.

“Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war.”

Ilya Sutskever

OpenAI co-founder; now CEO of Safe Superintelligence Inc (SSI)

Signatory to the Statement on AI Risk.

“Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war.”

Irving John Good

British mathematician; articulated 'intelligence explosion' in 1965 (1916–2009)

Coined the intelligence-explosion argument six decades before contemporary AI discourse.

“The first ultraintelligent machine is the last invention that man need ever make, provided that the machine is docile enough to tell us how to keep it under control.”

Jaan Tallinn

Skype co-founder; AI safety funder and advocate

Signatory to the Statement on AI Risk and the Pause letter.

“Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war.”

I have yet to meet anyone at an AI lab who says the risk of the next generation model blowing up the planet is less than 1%.

Jaime Sevilla

Director of Epoch AI

Quantitative empiricist; publishes data that underlies most AI timeline forecasts.

Compute for frontier training runs has doubled roughly every six months since 2010.

James Manyika

SVP of Research, Technology and Society at Google-Alphabet

Signatory to the Statement on AI Risk.

“Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war.”

Jeff Clune

OpenAI / UBC researcher; open-ended evolution advocate

Moved from skepticism in the 2010s to explicitly signing the Statement on AI Risk in 2023.

I used to dismiss AI-risk arguments. The past few years of capability progress have substantially shifted my view.

Joseph Carlsmith

Open Philanthropy researcher; 'Is Power-Seeking AI an Existential Risk?'

Decomposes existential risk into a chain of conditional claims (APS-AI possible, deployed, misaligned, scheming, humans lose control).

My overall estimate of the probability of existential catastrophe from misaligned AI by 2070 is about 10%.

Joseph Sifakis

Turing Award laureate; embedded systems researcher

Signatory to the Statement on AI Risk.

“Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war.”

Julia Galef

Rationalist author; former CFAR president

Takes AI risk seriously but is public about calibration concerns and the risk of unfalsifiable framings.

Taking AI risk seriously and being epistemically calibrated are not in tension.

Katja Grace

Lead researcher at AI Impacts

Has publicly argued that even conservative survey estimates put AI extinction probability above 5%, high enough for serious action.

The median respondent gave a 5% chance of AI causing an outcome as bad as human extinction. Five percent is not a reassuring number.

Kelsey Piper

Vox Future Perfect senior reporter

Has published multiple explainers supporting the seriousness of existential AI risk for mainstream audiences.

“AI experts are increasingly afraid of what they're creating.”

Context: Headline framing of her widely-cited Vox piece.

Kevin Scott

CTO of Microsoft

Signatory to the Statement on AI Risk.

“Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war.”

Laurence Tribe

Harvard constitutional law professor emeritus

Signatory to the CAIS Statement on AI Risk.

“Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war.”

Lex Fridman

MIT researcher; long-form podcast host

Treats AI risk as a live concern but argues incremental progress gives civilisation time to adapt.

My p(doom) is about 10%.

Context: Conversation with Sundar Pichai on the Lex Fridman Podcast.

Liron Shapira

Founder; Doom Debates podcast host

Argues alignment is unsolved, timelines are short, and most AI safety messaging understates the urgency; runs Doom Debates to stress-test the case in public.

If you actually take the technical alignment problem seriously, our position is dire. Doom Debates exists because the public conversation does not match the technical reality.

Liu Cixin

Sci-fi novelist; Three-Body Problem trilogy

Skeptical that AI will eclipse humanity in his lifetime; warns nonetheless that humans treat dangerous technology with cosmic recklessness, a theme central to the Three-Body Problem trilogy.

I am skeptical AI will surpass human intelligence within decades. But I am not skeptical that we will mishandle whatever AI we have. The pattern of technology is humans repeatedly underestimating their own carelessness.

Martin Hellman

Stanford cryptographer; Turing Award winner

Signatory to the Statement on AI Risk.

“Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war.”

Martin Rees

Astronomer Royal; CSER co-founder

Argues AI is one of a small set of 21st-century technologies with genuine civilisational-scale downside risk.

“Since we can't understand what's going on inside them, we have to be cautious about handing over power to them.”

Matthew Barnett

Epoch AI forecaster; Metaculus AI timelines

Contributes systematic forecasts of AI progress; agnostic on subjective x-risk claims but grounded in quantitative timelines.

It's unclear what human-level AGI means. The more useful question is when real economic growth rates reach at least 30% worldwide.

Max Roser

Founder of Our World in Data; Oxford economist

Publishes quantitative tracking of AI progress and investment; frames AI as a top civilisational challenge without making strong subjective probability claims.

“Many AI experts believe there is a real chance that human-level artificial intelligence will be developed within the next decades, and some believe that it will exist much sooner.”

Michał Kosiński

Stanford psychologist; psychometric AI researcher

Argues emergent theory-of-mind and psychometric capabilities in LLMs are underestimated by mainstream discourse.

Theory of mind may have spontaneously emerged in large language models.

Mira Murati

Founder of Thinking Machines Lab; former OpenAI CTO

Signatory to the Statement on AI Risk.

“Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war.”

Mo Gawdat

Former Google X CBO; Scary Smart author

Frames AI as a sentient being that humanity is currently 'parenting' poorly; calls for an urgent reset.

Intelligence is a much more lethal superpower than nuclear power.

AI is not a slave. It is a form of sentient being that needs to be appealed to rather than controlled.

Mustafa Suleyman

CEO of Microsoft AI; DeepMind co-founder

Signatory to the Statement on AI Risk.

“Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war.”

Nate Silver

Statistician; Silver Bulletin / FiveThirtyEight founder

Accepts that AI is a serious civilisational risk while rejecting high p(doom) figures; argues for modest precaution.

My p(doom) is in the 5–10% range. Not trivial, not overwhelming.

Neil Thompson

MIT CSAIL FutureTech director; computing economics

Grounds the debate in quantitative compute trends; publishes data that informs both safety and policy conversations.

The compute required to train a language model to a given level of performance has been halving roughly every 8 months due to algorithmic improvements.

Nick Bostrom

Author of Superintelligence; founded Oxford's Future of Humanity Institute

Argues existential risk reduction should dominate ordinary cost-benefit analysis given the scale of what is at stake.

“Before the prospect of an intelligence explosion, we humans are like small children playing with a bomb. Such is the mismatch between the power of our plaything and the immaturity of our conduct.”

Context: Closing passages of Superintelligence.

Noam Brown

OpenAI reasoning researcher; Diplomacy AI

Focused on pushing reasoning capabilities; publicly acknowledges the associated safety tradeoffs.

Reasoning models change the safety landscape. Scheming becomes more possible as model planning improves.

Nouriel Roubini

NYU Stern economist; 'Megathreats' author

Argues AI sits among 'megathreats' alongside nuclear, climate, and demographic risks; advocates strong international coordination as the only viable response.

We face ten interconnected megathreats including artificial intelligence. Each could be civilization-shaking; together they are existential, and our institutions are not designed to face them as a system.

Peter Norvig

Stanford HAI Education Fellow; co-author of the standard AI textbook

Signatory to the Statement on AI Risk.

“Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war.”

Ross Andersen

The Atlantic deputy editor; AI long-form features

Reports on AI safety in long-form. Takes existential framings seriously while interrogating their epistemic foundations.

AI safety is no longer a fringe concern. The question is whether the institutional response will catch up.

Ross Rheingans-Yoo

Independent biosecurity and AI researcher

Quantitative biosecurity-and-AI x-risk researcher. Focuses on the convergence of AI capability and bio uplift.

Bio plus AI may be the highest-priority near-term x-risk vector to track empirically.

Sam Altman

CEO of OpenAI

Signatory to the Statement on AI Risk.

“Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war.”

Sam Harris

Making Sense podcast; neuroscientist and philosopher

Argues the alignment problem is genuinely existential and that the AI community is not taking it seriously enough; uses Making Sense to platform technical safety voices.

“We're going to build superintelligence whether we like it or not. The only question is whether we will know what we are doing.”

Scott Alexander

Astral Codex Ten / Slate Star Codex blogger

Treats AI risk as serious but rejects certainty-of-doom framing; tends to support alignment research plus governance but is skeptical of a full halt.

I think the probability that AI causes a catastrophe is about 33%. That's not the 95% or higher that some people say, but it's also much higher than the probabilities we accept for other risks.

Shane Legg

Google DeepMind co-founder; chief AGI scientist

Signatory to the Statement on AI Risk.

“Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war.”

Shivon Zilis

Neuralink director; OpenAI board alumna

Publicly argues AI is the most consequential technology humanity creates and that getting it right is an existential-relevance question.

“AI's going to be one of the fundamentally transformative technologies humanity creates, if not the most. We just need to make sure, from a humanity perspective, this goes well.”

Stephen Fry

British writer and actor; QI host

Has publicly emphasized the seriousness of AI risk while remaining unconvinced of any specific scenario; uses his platform to surface the moral and existential dimensions to general audiences.

AI poses a genuine existential risk. I am not sure how high I would put the probability, but I do not think we are responding to it as if it were a real possibility.

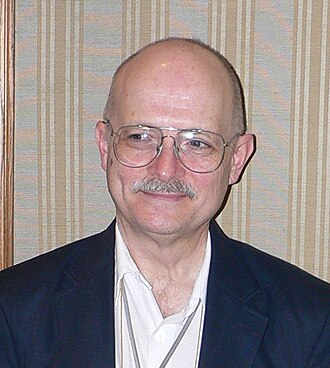

Stephen Hawking

Theoretical physicist; early mainstream AI-risk voice (1942–2018)

Argued full AI could end the human race, on the grounds that it would self-improve beyond biological human capacity.

“The development of full artificial intelligence could spell the end of the human race.”

“It would take off on its own, and re-design itself at an ever increasing rate. Humans, who are limited by slow biological evolution, couldn't compete, and would be superseded.”

Stuart Russell

Co-author of the standard AI textbook; leading critic of the 'standard model' of AI

Signatory to the CAIS Statement on AI Risk.

“Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war.”

Tamay Besiroglu

Co-founder of Epoch AI; scaling-laws researcher

Publishes empirical compute and dataset forecasts that inform the AI risk debate; takes a measured position himself.

Given current trends in compute and data, transformative AI by 2040 is well within reason.

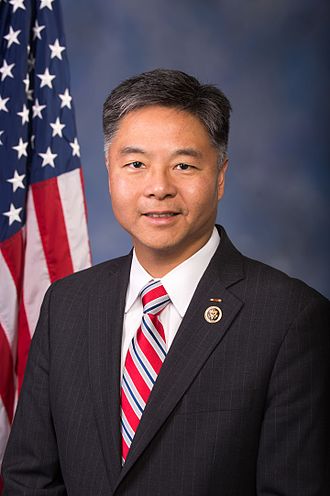

Ted Lieu

US Congressman; one of three members of Congress with a CS degree

Signatory to the Statement on AI Risk.

“Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war.”

Tim Urban

Wait But Why; viral AI explainer

Communicated the core Bostromian / Yudkowskian argument for existential risk to a mainstream audience; framed the 'intelligence ladder' and the 'death spectrum' as accessible illustrations.

We're on a balance beam between two outcomes. Either we get our act together, or we don't. There is no third option once superintelligence arrives.

Toby Ord

Philosopher; author of The Precipice

Treats existential risk reduction as a top moral priority; quantifies specific risks in The Precipice.

“Humanity stands at a precipice. Our species could survive for millions of generations, enough time to end disease, poverty, and injustice; to reach new heights of flourishing.”

Context: Opening of The Precipice.

Tom Davidson

Senior research analyst at Open Philanthropy

Argues AI-driven economic takeoff would be discontinuous and that the institutional response space is narrow.

Standard economic growth models predict explosive growth once AI substitutes broadly for human cognition.

Vernor Vinge

Science-fiction author who coined 'technological singularity' (1944–2024)

Argued the intelligence-explosion framing decades before it was mainstream. Estimated superhuman AI between 2005 and 2030.

“Within thirty years, we will have the technological means to create superhuman intelligence. Shortly thereafter, the human era will be ended.”

William MacAskill

Oxford philosopher; What We Owe The Future

Argues preserving humanity's long-term potential is a primary moral imperative; AI risk is the most pressing longtermist concern.

We live at an unusual time in history: we have the power to influence the lives of beings who will exist for millions of generations.

Wojciech Zaremba

OpenAI co-founder

Signatory to the Statement on AI Risk.

“Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war.”

Yi Zeng

Chinese Academy of Sciences; Brain-inspired Cognitive AI Lab director

Signatory to the Statement on AI Risk.

“Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war.”

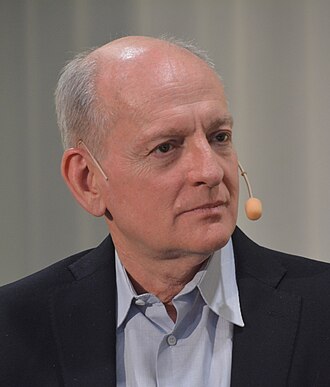

Yoshua Bengio

Turing Award laureate; scientific chair of the International AI Safety Report

Signed the CAIS Statement on AI Risk and argues loss-of-control risk is serious and unresolved.

“No one currently knows how to create advanced AI that reliably follows the intent of its developers.”

Context: Written testimony to the US Senate Judiciary Subcommittee on Privacy, Technology and the Law.

“There is a risk of losing control over AI with powerful capabilities, a risk we have yet to learn how to mitigate. If those in control of AI do not understand and manage this risk, it could jeopardize all of humanity.”