person

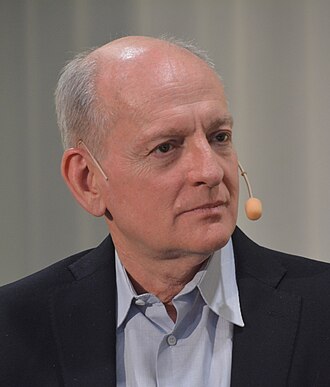

Anca Dragan

UC Berkeley professor; Google DeepMind AI safety lead

Roboticist who studies human-robot interaction and AI alignment from the assistance-games angle. Leads AI safety at Google DeepMind and signed the Statement on AI Risk.

Profile

expertise

Frontier builder

Currently or recently led training, architecture, or safety work on a frontier model. Hands on the loss curve.

Google DeepMind safety lead; UC Berkeley professor on leave. Deep technical work on human-robot interaction, value alignment, and AI safety. Hands-on at frontier scale.

recognition

Field-leading

Widely known inside the AI and AI-safety community. Appears repeatedly in top venues, podcasts, or governance forums. Not a household name to outsiders.

Recognised in robotics and ML safety communities. TIME 100 AI 2024.

vintage

Deep-learning rise

Came up post-AlexNet. ImageNet, AlphaGo, transformer paper. DeepMind, Google Brain, FAIR establish the modern lab template.

PhD 2015 (CMU). Berkeley 2015+. Google DeepMind safety lead 2024. Career is deep-learning era.

Hand-classified. See the board for the criteria and the full grid.

Strategy positions

Alignment first · endorses

Solve technical alignment before capability thresholds close

Argues alignment research should be foundational, using assistance games and reward modelling as central tools.

Robots and AI assistants need to infer and defer to human preferences, not optimise rigid objectives.

Existential primacy · endorses

Extinction/disempowerment risk overrides ordinary cost-benefit

Signatory to the Statement on AI Risk.

“Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war.”

Closest strategy neighbours

by Jaccard overlap

Other people whose strategy tags overlap with Anca Dragan's. Overlap is on tag identity, not stance; opposites can show up if they reference the same tags.

Joseph Carlsmith

shared 2 · J=1.00

Open Philanthropy researcher; 'Is Power-Seeking AI an Existential Risk?'

Stuart Russell

shared 2 · J=1.00

Co-author of the standard AI textbook; leading critic of the 'standard model' of AI

Nick Bostrom

shared 2 · J=0.67

Author of Superintelligence; founded Oxford's Future of Humanity Institute
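
The J values above are consistent with the standard Jaccard index: the number of shared tags divided by the number of distinct tags across both people. The sketch below is illustrative only, not the site's actual code. The tag identifiers and the `jaccard` helper are hypothetical, and the third tag in `one_extra` is a placeholder, since the record does not say which extra tag a neighbour carries.

```python
from __future__ import annotations


def jaccard(a: set[str], b: set[str]) -> float:
    """Jaccard index: |a & b| / |a | b|. Returns 0.0 when both sets are empty."""
    union = a | b
    if not union:
        return 0.0
    return len(a & b) / len(union)


# Hypothetical tag identifiers for the two positions listed on this record.
subject = {"alignment-first", "existential-primacy"}

# A neighbour holding exactly the same two tags.
same_tags = {"alignment-first", "existential-primacy"}

# A neighbour holding the same two tags plus one more (placeholder name).
one_extra = subject | {"placeholder-third-tag"}

for other in (same_tags, one_extra):
    shared = len(subject & other)
    print(f"shared {shared} · J={jaccard(subject, other):.2f}")
```

Under these assumptions the loop prints `shared 2 · J=1.00` and then `shared 2 · J=0.67`. Only tag identity enters the calculation, so two people who take opposite stances on the same tags would still score as close neighbours.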

Record last updated 2026-04-24.