person

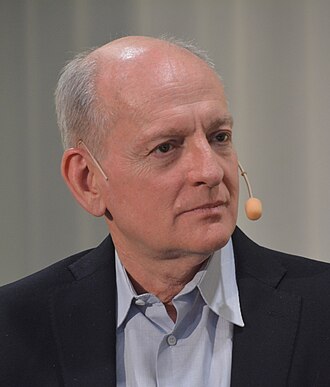

Joseph Carlsmith

Open Philanthropy researcher; 'Is Power-Seeking AI an Existential Risk?'

Philosopher and senior research analyst at Open Philanthropy whose 2021 report on power-seeking AI produced the most cited quantitative decomposition of the existential AI risk argument.

Profile

expertise

Policy / meta

Specialises in AI policy, regulation, governance, philanthropy, or movement strategy. Reads the technical literature but does not produce it.

Senior researcher at Open Philanthropy. Long essays on AI takeover risk and decision theory. Philosopher; not a technical ML researcher.

recognition

Field-leading

Widely known inside the AI and AI-safety community. Appears repeatedly in top venues, podcasts, or governance forums. Not a household name to outsiders.

Recognised in safety/EA circles. His blog, Hands and Cities, is widely read.

vintage

Scaling era

Worldview formed during GPT-2/3, scaling laws, Anthropic's founding. Pre-ChatGPT but post-deep-learning. The 'scale is all you need' debate is live.

Senior researcher at Open Philanthropy from the late 2010s. His long essays on AI takeover and decision theory matured in the scaling era.

Hand-classified. See the board for the criteria and the full grid.

p(doom)

- 10% · 2022-06-23

Definition used: Probability of existential catastrophe from misaligned AI by 2070.

- 10–50% · 2022

Definition used: Existential catastrophe from misaligned power-seeking AI by 2070; revised range.

Is Power-Seeking AI an Existential Risk? · Open Philanthropy

Strategy positions

Existential primacy · endorses

Extinction/disempowerment risk overrides ordinary cost-benefit

Decomposes existential risk into a chain of conditional claims (APS-AI possible, deployed, misaligned, scheming, humans lose control).

My overall estimate of the probability of existential catastrophe from misaligned AI by 2070 is about 10%.
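A chain of conditional claims like the one described above yields an overall estimate by multiplying the steps together, each probability being conditional on all earlier steps holding. The sketch below illustrates only that arithmetic. The step labels paraphrase this record's summary, and every number is a hypothetical placeholder, not a figure taken from the report.

```python
from math import prod

# Hypothetical placeholder probabilities, NOT figures from the report.
# Each value is read as conditional on every earlier step holding.
chain = [
    ("APS-AI is possible", 0.8),
    ("it is deployed", 0.8),
    ("it is misaligned", 0.5),
    ("it schemes", 0.6),
    ("humans lose control", 0.5),
]


def chain_probability(steps):
    """Multiply the conditional probabilities along the chain."""
    return prod(p for _, p in steps)


overall = chain_probability(chain)
print(f"{overall:.3f}")  # prints 0.096
```

Because the result is a product, lowering any single step lowers the overall estimate proportionally, which is why this kind of decomposition makes it easy to see where two forecasters disagree.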

Alignment first · endorses

Solve technical alignment before capability thresholds close

Argues misaligned power-seeking AI is a substantial existential risk this century and the case requires only weak premises about agentic capabilities.

My current estimate is that the probability of existential catastrophe from power-seeking, misaligned AI by 2070 is more than 10%.

Context: Updated estimate after the original report; revised upward.

Closest strategy neighbours

by Jaccard overlap

Other people whose strategy tags overlap with Joseph Carlsmith's. Overlap is on tag identity, not stance; opposites can show up if they reference the same tags.

Stuart Russell

shared 2 · J=1.00

Co-author of the standard AI textbook; leading critic of the 'standard model' of AI

Nick Bostrom

shared 2 · J=0.67

Author of Superintelligence; founded Oxford's Future of Humanity Institute
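The J values above are consistent with the standard Jaccard index, the size of the intersection of two tag sets divided by the size of their union. The sketch below assumes that definition. The tag identifiers and the third tag are hypothetical, chosen only so that the scores match the ones listed (two shared tags giving 1.00 and 0.67).

```python
def jaccard(a: set[str], b: set[str]) -> float:
    """Jaccard index: |a ∩ b| / |a ∪ b| (0.0 if both sets are empty)."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


# Hypothetical tag identifiers; the site's real tag names are not shown.
carlsmith = {"existential-primacy", "alignment-first"}
same_two_tags = {"existential-primacy", "alignment-first"}
one_extra_tag = {"existential-primacy", "alignment-first", "hypothetical-third-tag"}

print(f"{jaccard(carlsmith, same_two_tags):.2f}")  # prints 1.00
print(f"{jaccard(carlsmith, one_extra_tag):.2f}")  # prints 0.67
```

Since the score compares tag identity only, two people who take opposite stances on the same two tags would still score 1.00.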

Record last updated 2026-04-25.