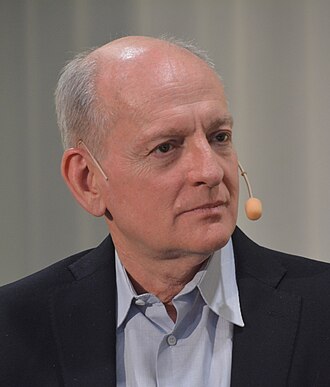

Andrew G. Barto

RL co-founder; 2024 Turing Award recipient

UMass Amherst emeritus professor and co-developer of reinforcement learning with Richard Sutton. Co-recipient of the 2024 ACM Turing Award.

Profile

expertise

Deep technical

Sustained peer-reviewed contribution to ML, alignment, interpretability, or safety techniques. Could review a frontier paper.

UMass Amherst emeritus. Co-author with Sutton of the standard RL textbook. Turing Award 2024 for foundational RL contributions.

recognition

Field-leading

Widely known inside the AI and AI-safety community. Appears repeatedly in top venues, podcasts, or governance forums. Not a household name to outsiders.

Recognised universally in ML. Less press visibility than Sutton.

vintage

Symbolic era

Career started in the GOFAI / expert-systems / early-rationalist period. Vinge's 1993 Singularity, MIRI founded 2000, Bostrom and Yudkowsky writing.

RL textbook with Sutton 1998; UMass through 2010s. Career anchored in pre-deep-learning RL.

Hand-classified. See the board for the criteria and the full grid.

Strategy positions

Alignment first · mixed

Solve technical alignment before capability thresholds close

On accepting the Turing Award, expressed concern that companies are using RL methods to build powerful AI systems without sufficient regard to safety; framed scaling RL agents in the world as a serious societal risk.

Releasing software to millions of people without safeguards is not engineering. It would never fly in any other engineering field.

Existential primacy · endorses

Extinction/disempowerment risk overrides ordinary cost-benefit

Signatory to the Center for AI Safety's Statement on AI Risk.

“Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war.”

Closest strategy neighbours

by Jaccard overlap

Other people whose strategy tags overlap with Andrew G. Barto's. Overlap is on tag identity, not stance; opposites can show up if they reference the same tags.

Joseph Carlsmith

shared 2 · J=1.00

Open Philanthropy researcher; 'Is Power-Seeking AI an Existential Risk?'

Stuart Russell

shared 2 · J=1.00

Co-author of the standard AI textbook; leading critic of the 'standard model' of AI

Nick Bostrom

shared 2 · J=0.67

Author of Superintelligence; founded Oxford's Future of Humanity Institute
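The J values above can be read as the Jaccard index of two tag sets: shared tags divided by the total number of distinct tags across both people. The following is a minimal sketch of that calculation, not the site's actual implementation. The tag identifiers, the `person_a` / `person_b` records, and the alphabetical tie-break are all hypothetical; only the two tags listed on this page are taken from the record, and stance is ignored because the overlap is described as being on tag identity.

```python
def jaccard(a: set, b: set) -> float:
    """Jaccard index |A & B| / |A | B|; defined here as 0.0 when both sets are empty."""
    union = a | b
    if not union:
        return 0.0
    return len(a & b) / len(union)


def closest(target: set, others: dict) -> list:
    """Return (name, shared_count, J) rows for people sharing at least one tag.

    Sorted by J descending; ties broken alphabetically by name (an assumption).
    """
    rows = [(name, len(target & tags), jaccard(target, tags))
            for name, tags in others.items()]
    rows = [row for row in rows if row[1] > 0]
    return sorted(rows, key=lambda row: (-row[2], row[0]))


# Tag identifiers are illustrative slugs for the two strategy positions on this page.
barto = {"alignment-first", "existential-primacy"}

# Hypothetical records: one with the same two tags, one with a third, unrelated tag.
others = {
    "person_a": {"alignment-first", "existential-primacy"},
    "person_b": {"alignment-first", "existential-primacy", "hypothetical-third-tag"},
    "person_c": {"hypothetical-third-tag"},
}

for name, shared, j in closest(barto, others):
    print(f"{name}: shared {shared} · J={j:.2f}")
```

Under these assumptions, a neighbour with exactly the same two tags scores 2/2 = 1.00, and a neighbour with both tags plus one other scores 2/3, which rounds to 0.67. Both results are consistent with the "shared 2" rows listed above, though the neighbours' actual tag sets are not shown on this page.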

Record last updated 2026-04-25.