
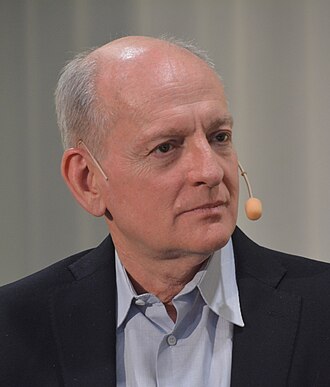

Nick Bostrom

Author of Superintelligence; founded Oxford's Future of Humanity Institute

Philosopher whose 2014 book Superintelligence made 'existential risk from AI' legible to mainstream audiences and policymakers. Frames the problem as a control problem requiring pre-committed solutions before we create superhuman systems.

Profile

expertise

Policy / meta

Specialises in AI policy, regulation, governance, philanthropy, or movement strategy. Reads the technical literature but does not produce it.

Founded Oxford's Future of Humanity Institute. Superintelligence (2014) is a foundational text on existential risk from AI. Philosopher; not a technical researcher.

recognition

Household name

Has name recognition outside the AI/CS community: coverage in the mainstream press, a Wikipedia page in many languages, a published bestseller, or a position the lay public knows.

Superintelligence was a NYT bestseller endorsed by Musk and Gates. Wikipedia entries in 30+ languages.

vintage

Symbolic era

Career started in the GOFAI / expert-systems / early-rationalist period: Vinge's 1993 Singularity essay, MIRI's founding in 2000, and early writing by Bostrom and Yudkowsky.

Founded Oxford's FHI in 2005. Superintelligence (2014) was largely written before deep learning came to dominate the field; his concept of AI is system-agnostic.

Hand-classified. See the board for the criteria and the full grid.

Strategy positions

Existential primacy · endorses

Extinction/disempowerment risk overrides ordinary cost-benefit reasoning.

Argues that existential-risk reduction should dominate ordinary cost-benefit analysis, given the scale of what is at stake.

“Before the prospect of an intelligence explosion, we humans are like small children playing with a bomb. Such is the mismatch between the power of our plaything and the immaturity of our conduct.”

Context: Closing passages of Superintelligence.

Alignment first · endorses

Solve technical alignment before capability thresholds are crossed.

Articulated the 'control problem': a superintelligence must have human-beneficial goals built in, because attempting to control a more capable agent after the fact is expected to fail.

“The fate of the gorillas now depends more on us humans than on the gorillas themselves; so too the fate of our species then would come to depend on the actions of the machine superintelligence.”

Long reflection · endorses

Use post-AGI stability for extended moral deliberation before locking in values.

His 2024 book Deep Utopia explores what happens after superintelligence solves all practical problems: the 'post-instrumental' condition.

“If we extrapolate this internal directionality to its logical terminus, we arrive at a condition in which we can accomplish everything with no effort. Superintelligence could whisk us the rest of the way.”

Closest strategy neighbours

By Jaccard overlap

Other people whose strategy tags overlap with Nick Bostrom's. Overlap is on tag identity, not stance; people with opposite stances can show up if they reference the same tags.

Joseph Carlsmith

shared 2 · J=0.67

Open Philanthropy researcher; 'Is Power-Seeking AI an Existential Risk?'

Stuart Russell

shared 2 · J=0.67

Co-author of the standard AI textbook; leading critic of the 'standard model' of AI
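The J values above are consistent with the standard Jaccard index, J = |A ∩ B| / |A ∪ B|, taken over sets of tag names. The Python sketch below assumes the three tags listed under Strategy positions are Nick Bostrom's complete tag set. The snake_case tag identifiers and the neighbour's tag set are hypothetical, chosen only so that two tags are shared. Stances such as "endorses" are deliberately left out of the sets, matching the note that overlap is on tag identity, not stance.

```python
def jaccard(a: set[str], b: set[str]) -> float:
    """Jaccard index |A ∩ B| / |A ∪ B| over two sets of tag names."""
    union = a | b
    if not union:
        # Assumed convention: two empty tag sets count as no overlap.
        return 0.0
    return len(a & b) / len(union)


# Hypothetical identifiers for the three strategy tags listed above.
bostrom = {"existential_primacy", "alignment_first", "long_reflection"}
# Hypothetical neighbour: two tags shared with Bostrom, none of its own.
neighbour = {"existential_primacy", "alignment_first"}

shared = bostrom & neighbour
j = jaccard(bostrom, neighbour)
print(f"shared {len(shared)} · J={j:.2f}")  # shared 2 · J=0.67
```

Under that assumption, "shared 2 · J=0.67" implies a union of three tags, so each listed neighbour would carry no tags outside Bostrom's three. A neighbour with one extra tag would instead score 2/4 = 0.50.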

Record last updated 2026-04-25.