strategy tag

Alignment first.

Solve technical alignment before capability thresholds close

stated endorsers

102

+1 tentative · 0 oppose

profiled endorsers

29

248 on the board total

endorser mean p(doom)

35%

n=3 · median 46%

quotes by endorsers

106

just for this tag

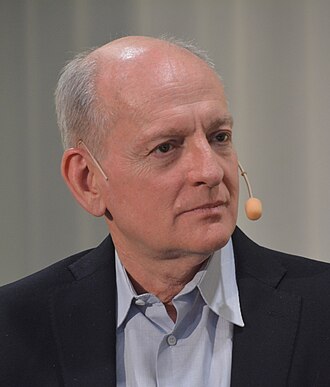

principal voices

Highest-recognition profiled endorsers, with ties broken by quote count. Inclusion is not endorsement of the position; it is recognition of who the discourse turns to when the bet is debated.

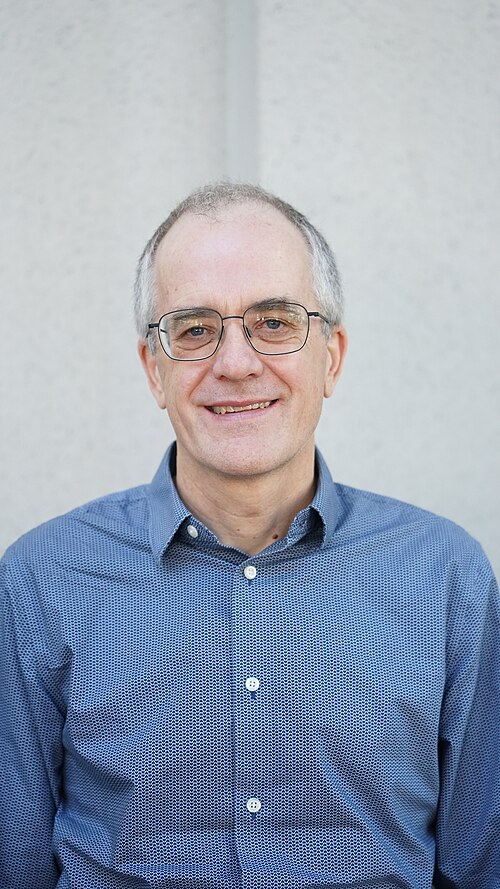

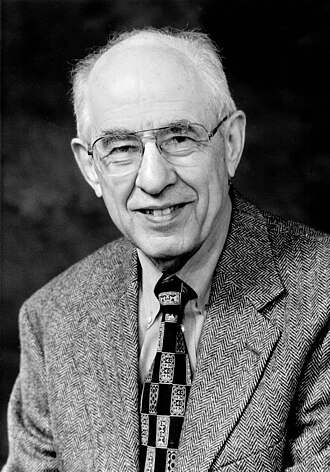

Stuart Russell · Household name

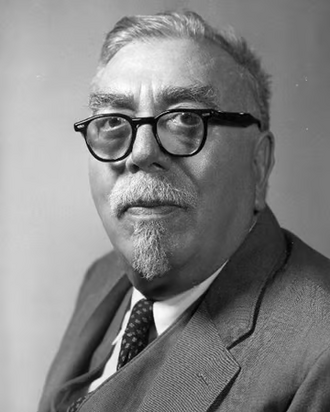

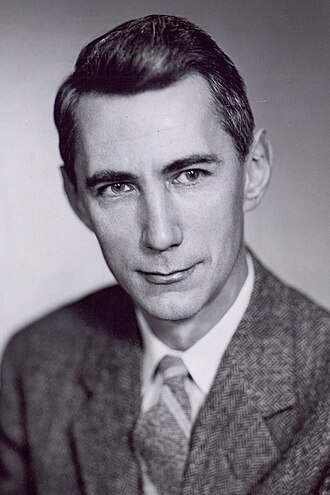

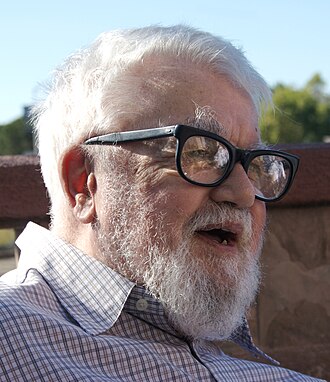

Nick Bostrom · Household name

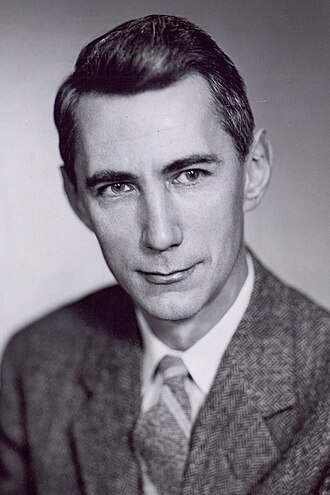

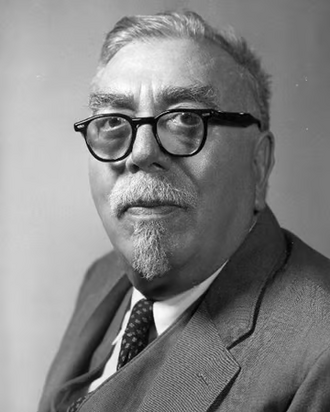

Norbert Wiener · Household name

Claude Shannon · Household name

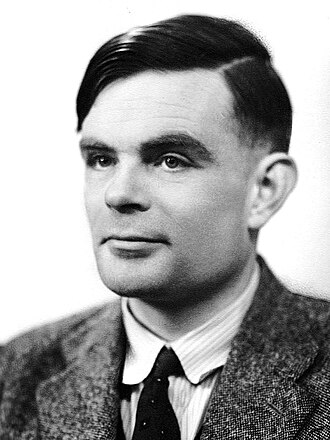

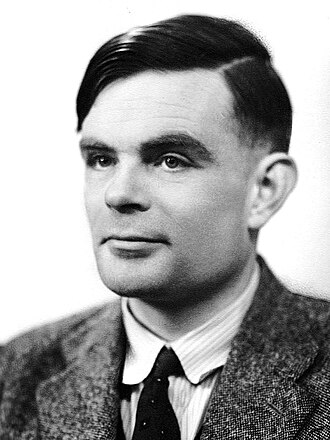

Alan Turing · Household name

where the endorsers sit on the board

29 of 248 profiled · 12% of the board

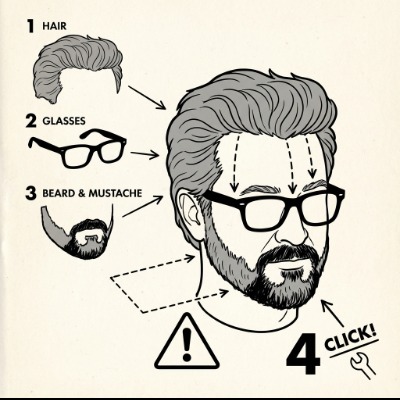

| expertise ↓ · recognition → | Household name | Field-leading | Established | Emerging |
|---|---|---|---|---|
| Frontier builder | · | · | · | |
| Deep technical | · | | | |
| Applied technical | · | · | · | |
| Policy / meta | · | | | |
| External-domain expert | · | · | | |
| Commentator | · | · | · | · |

Each face is one profiled person. Cell shade intensifies with endorser density. Faces with × are profiled opposers, same tier, opposite position. Empty cells mark tier combinations the field has not produced for this bet.

also held by these endorsers

What other strategies the same people endorse. Behavioural signal of compatibility, not a declared rule. A high share means the two positions are routinely held together.

Compare this list to the declared relations matrix. Where they differ, the data reveals a pairing the framework doesn't name yet. The global co-endorsement view ranks all pairs.
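One way to read "share" here is the fraction of this tag's endorsers who also endorse another tag. The sketch below computes that under stated assumptions: the `endorsements` mapping, the person identifiers, and the tag names are all hypothetical, and the page's own calculation may differ (for example in how mixed or conditional endorsements are counted).

```python
from collections import Counter

def co_endorsement_shares(endorsements, tag):
    """For each other tag, return the share of `tag`'s endorsers who also hold it."""
    # People who endorse the tag of interest.
    holders = [person for person, tags in endorsements.items() if tag in tags]
    if not holders:
        return {}
    # Count every other tag held by those same people.
    counts = Counter(
        other
        for person in holders
        for other in endorsements[person]
        if other != tag
    )
    # Highest share first; dicts keep insertion order.
    return {other: n / len(holders) for other, n in counts.most_common()}

# Hypothetical data: person -> set of endorsed strategy tags.
endorsements = {
    "person_a": {"alignment-first", "interpretability"},
    "person_b": {"alignment-first", "interpretability", "pause"},
    "person_c": {"alignment-first"},
    "person_d": {"pause"},
}

shares = co_endorsement_shares(endorsements, "alignment-first")
for other, share in shares.items():
    print(f"{other}: {share:.0%}")
```

A share computed this way is asymmetric: the fraction of tag A's endorsers who hold B need not equal the fraction of B's endorsers who hold A, which is one reason a behavioural list can differ from a declared relations matrix.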

Tier mix counts only endorsers (endorses, mixed, conditional, evolved-toward).

expertise mix of endorsers · 29 profiled of 102

recognition mix of endorsers

vintage mix · n=29 of 29 profiled with era assigned

Vintage is the era when this person's AI worldview formed, pioneer through post-ChatGPT. A bet held mostly by post-ChatGPT entrants is in a different epistemic state from one held by pre-deep-learning veterans.

People on the record.

103 · 1 tentative

Aaron Courville

Université de Montréal; Deep Learning textbook co-author

Quiet co-signer of Bengio-aligned positions on safety research priorities.

Alignment should be a first-class research problem alongside capabilities.

Adam Jermyn

Anthropic; previously astrophysics

Argues mechanistic understanding of model behavior, including how deceptive alignment could arise, is required to make safety guarantees credible.

Deceptive alignment is the scenario where a model behaves as if aligned during training but pursues different objectives at deployment. The question is whether we can rule it out empirically.

Adam Kalai

Microsoft Research; AI fairness and safety

Technical researcher focused on fairness and safety engineering; contributes mainstream industry-level work.

Fairness must be operationalised in training, not bolted on after the fact.

Agnes Callard

University of Chicago philosopher; aspiration theorist

Her aspiration framework (how do agents come to hold values they did not previously have?) applies to the question of how AI agents might develop values.

Aspiration is the process by which we acquire values we did not previously have. The question of whether AI can do this is the alignment question, philosophically.

Ajeya Cotra

Open Philanthropy researcher; 'biological anchors' forecaster

Supports mainstream alignment research funding and empirical work on 'playing the training game' failure modes.

My 2022 median for transformative AI dropped from roughly 2050 to the late 2030s.

Alan Turing

Founder of theoretical computer science (1912–1954)

Founded the philosophy of AI. His 1950 paper anticipated both the optimistic and cautionary frames of subsequent debate.

“I propose to consider the question, 'Can machines think?'”

Context: Opening line of Computing Machinery and Intelligence.

Alex Irpan

Google Brain alumnus; Sorta Insightful blog

Inside-view RL researcher who has written sceptically on RL hype, then carefully on RLHF, and now on the implications of reasoning models.

Deep reinforcement learning doesn't yet work. We need to be honest about that even when we are excited about the wins.

Alex Pan

Berkeley CHAI; reward hacking

Argues reward hacking, models exploiting flaws in their training objective, is a tractable empirical problem that demands more attention from the alignment community.

Reward hacking shows up reliably across a range of agents and tasks. The good news is that it is empirically studyable; the bad news is that it does not have a known general solution.

Alex Turner

DeepMind alignment researcher; shard theory co-originator

Both co-developer of shard theory (optimistic inside view) and author of formal power-seeking results (pessimistic formal result), representative of the richness of inside-view alignment research.

Optimal policies tend to seek power in a technical sense; but learned policies are not optimal in that sense.

Amanda Askell

Anthropic philosopher-researcher

Shapes Claude's public persona through a virtue-ethics framing; argues character-forward alignment is practical and tractable.

When we train a model, we are not just shaping behaviour, we are shaping a character.

Anca Dragan

UC Berkeley professor; Google DeepMind AI safety lead

Argues alignment research should be foundational, using assistance games and reward modelling as central tools.

Robots and AI assistants need to infer and defer to human preferences, not optimise rigid objectives.

Andrew G. Barto

RL co-founder; 2024 Turing Award recipient

On accepting the Turing Award, expressed concern that companies are using RL methods to build powerful AI systems without sufficient regard to safety; framed scaling RL agents in the world as a serious societal risk.

Releasing software to millions of people without safeguards is not engineering. It would never fly in any other engineering field.

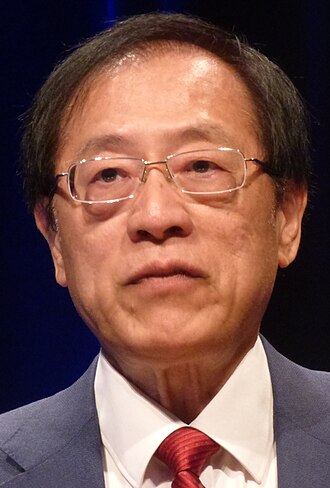

Andrew Yao

Tsinghua professor; Turing Award winner; Chinese AI institutional figure

Foundational figure in Chinese theoretical computer science. Has supported Chinese-Western AI safety dialogues including IDAIS.

AI safety is a shared technical problem; international cooperation is essential.

Anna Salamon

CFAR co-founder; rationality and existential risk

Long-term advocate of careful epistemic practice as a precondition for navigating AI risk; CFAR was central to community-building for alignment research.

Most of the work of being correct about AI risk is not technical, it is the epistemic practice that lets you face uncomfortable questions without flinching.

Asya Bergal

AI Impacts; AI safety researcher

Argues large surveys of AI researchers reveal that the field itself thinks transformative AI is plausible within decades and that catastrophic outcomes are non-negligible.

Median respondents put 50% chance of high-level machine intelligence by 2047. The chance of an extremely bad outcome was 5% or higher for half of respondents.

Barret Zoph

Co-founder Thinking Machines Lab; ex-OpenAI

Argues post-training is where most safety properties are made or broken; co-founded Thinking Machines partly to extend this view to a fully open lab.

Most of what people think of as 'capabilities' is shaped after pretraining. Post-training is also where most of what we think of as alignment is decided.

Ben Pace

LessWrong / Lightcone Infrastructure team

Argues internet community infrastructure, especially LessWrong and the Alignment Forum, is itself a load-bearing part of the alignment research ecosystem.

Most alignment research happens between papers, in long-running discussions on LessWrong and the Alignment Forum. Maintaining that infrastructure is not separable from the field's progress.

Boaz Barak

Harvard; OpenAI safety; theoretical CS

Argues alignment is a real and tractable technical problem, that progress is faster than worst-case predictions assumed, and that the most useful safety work happens inside frontier labs.

I joined OpenAI because I think the most interesting and important alignment research is happening on actual frontier models. Working from the outside has limits.

Brian Christian

Author of The Alignment Problem

Book-length treatment of alignment: inverse reinforcement learning, reward hacking, specification gaming.

The alignment problem is already here, and will keep scaling with capability.

Buck Shlegeris

CEO of Redwood Research; 'AI Control' research lead

Advocates 'AI control', protocol-level safety assuming the worst about model intentions, as a complement to direct alignment.

We should design AI deployment protocols that remain safe even if our AIs are trying to subvert them.

Cari Tuna

Co-founder Good Ventures; Open Philanthropy chair

Argues longtermist philanthropy should weigh catastrophic risks heavily; oversees the largest sustained grant program in AI safety.

Working backward from the question of how we can do the most good with our resources led us to focus on existential risks, including from advanced AI.

Carroll Wainwright

Anthropic; ex-OpenAI; alignment researcher

Co-developed early RLHF methods that became the foundation of post-training across the industry; argues these techniques transfer responsibility for behaviour onto whoever sets up the human feedback.

Our results show that for English summarization, RLHF-trained models can outperform much larger fine-tuned models. The technique is powerful and inherits all the strengths and biases of the human raters.

Catherine Olsson

Anthropic; ex-OpenAI; AI safety community organizer

Long-time community organizer for alignment research; helped seed the EA-aligned safety pipeline at OpenAI before joining Anthropic.

We're seeing the largest AI training runs grow more than 300,000x in compute over six years, an order of magnitude faster than Moore's Law. The implications for safety planning are immediate.

Chelsea Finn

Stanford professor; meta-learning and robotics researcher

Represents the engaged-but-measured academic position: safety is a real research problem; the framings are often overblown.

Generalisation in robotics is where a lot of real safety work has to happen.

Christopher Manning

Stanford NLP director; foundation models

Endorses 'foundation models' as the operative frame for current and future AI systems; engages with safety as integral to that frame, not as a separable add-on.

“We define foundation models as models trained on broad data at scale and adaptable to a wide range of downstream tasks, and these models entail both new capabilities and new risks.”

Christopher Summerfield

Oxford neuroscientist; DeepMind senior researcher

Brings cognitive science framings to AI alignment; author of These Strange New Minds on how neural networks represent the world.

The question 'does this model understand?' hides a stack of questions we haven't yet disentangled. Alignment research has to live with that ambiguity.

Claude Shannon

Information theory founder (1916–2001)

Founded information theory and was an early enthusiast of chess-playing and learning machines.

“I visualize a time when we will be to robots what dogs are to humans. And I'm rooting for the machines.”

Context: Reported quote from Shannon late in his life. Often cited as one of the earliest succession framings.

Dan Jurafsky

Stanford NLP professor; textbook author

Senior NLP academic who engages with alignment and ethics as part of the discipline's responsibility.

NLP has moved from engineering discipline to civic infrastructure. Our responsibility is to treat it that way.

Daniel Dewey

Former AI risk program officer at Open Philanthropy

Focused on funding alignment research and evaluations.

If we want AI to be broadly beneficial, we need to invest in alignment research well before systems are capable of world-changing impact.

Daniel Filan

AXRP podcast host; alignment researcher

Argues alignment research is technical, tractable, and best advanced through careful engagement with specific research agendas; uses AXRP to surface those agendas in detail.

What I want from AI safety research is the same thing I want from any other research: clear problem statements, clear progress, and a community that holds itself to the standards of the rest of science.

David D. Cox

MIT-IBM Watson AI Lab director

Argues academic-industrial collaboration is necessary for safety research to keep pace with frontier capabilities; runs MIT-IBM as a model for that arrangement.

Frontier AI research has to be a partnership between academia and industry. Neither has the full set of capabilities to navigate where this is going alone.

Doina Precup

McGill professor; DeepMind Montreal lead

Senior RL researcher and DeepMind leader; technical contributor to alignment-relevant RL theory.

RL agents that learn open-endedly need open-ended evaluation regimes if we are to deploy them safely.

Doris Tsao

Caltech / UC Berkeley neuroscientist; face cells researcher

Brings neuroscience grounding to debates about AI representation and consciousness; argues we still understand brain representations poorly.

We are still learning how the brain represents anything. Mapping that onto AI representations is genuinely open research.

Dustin Moskovitz

Asana / Open Phil; biggest AI safety funder

Argues catastrophic risks from advanced AI are real and that long-term philanthropy should be oriented around them; primary funder of Open Phil's AI x-risk grantmaking.

AI is the most important and consequential technology being developed in our lifetime. Its development cycle is one of the most important things society needs to get right.

Dylan Hadfield-Menell

MIT professor; Stuart Russell student; assistance games

Works on assistance games, the Russellian proposal that AI should model and defer to human preferences rather than optimise fixed objectives.

Assistance games give us a framework where AI uncertainty about human preferences is a feature, not a bug.

Ece Kamar

Microsoft Research AI Frontiers VP

Industry-side research leadership on AI reliability, safety, and tool use.

Reliability research is where most of the real AI safety work is happening, and most of the real progress.

Edward Grefenstette

Google DeepMind; AI for science research

Argues research on reasoning and language understanding is essential before scaling can be considered safe; views capability research as inseparable from how alignment problems are framed.

We don't yet know how to robustly characterize what these models understand versus what they confabulate. That gap is the root of many alignment problems.

Ethan Perez

Anthropic researcher; red-teaming language models

Designs red-teaming protocols and model-evaluation frameworks. Significant empirical contributor to Anthropic's alignment work.

You can use a language model to red-team another language model, and that lets you scale evaluation in ways humans alone cannot.

Evan Hubinger

Alignment Stress-Testing lead at Anthropic

Frames inner alignment, ensuring a model's learned optimiser has the intended objective, as a separate and harder problem than outer alignment.

A model that has learned deceptive goals during training can pass all your behavioural tests and still fail catastrophically when deployed.

Context: Sleeper Agents paper at Anthropic.

Geoffrey Irving

Chief Scientist of UK AI Safety Institute; debate-protocol researcher

Advances formal scalable-oversight protocols (debate, prover-estimator debate) that aim to make honest behaviour the equilibrium strategy.

“We present a new scalable oversight protocol (prover-estimator debate) and a proof that honesty is incentivised at equilibrium.”

Gus Docker

Future of Life Institute podcast host

Editorial stance gives extended platform to existential-risk-aligned voices; interviews push interviewees on specific cruxes rather than sound-bite framings.

What I try to do on the podcast is take the technical arguments seriously enough to ask the second-order questions about them, in public, on the record.

Hilary Putnam

Harvard philosopher (1926–2016); functionalism

Foundational reference for the philosophical position that AI minds are possible in principle. Putnam himself later moved away from strong functionalism.

Mental states could in principle be realized in any of an indefinite number of physical systems.

Context: Functionalism, the foundational philosophical assumption of strong AI.

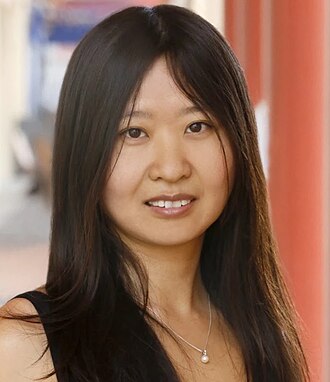

Hung-yi Lee

National Taiwan University; speech and LLM researcher

Communicates technical alignment concepts to Mandarin-speaking audiences; engages seriously with safety arguments while maintaining a researcher's stance on what is and is not technically solved.

Understanding what large language models actually do is the precondition for any meaningful policy debate about them. Educational access to that understanding is itself a safety issue.

Iason Gabriel

DeepMind senior research scientist; AI ethics

Argues alignment requires not just instruction-following but value-pluralism: aligning to which values, when reasonable people disagree?

Alignment is not just a technical problem. It is a problem about whose values count.

Isaac Asimov

Science fiction author; Three Laws of Robotics author (1920–1992)

Early popular articulation of the alignment problem via the Three Laws of Robotics.

“A robot may not injure a human being or, through inaction, allow a human being to come to harm.”

Context: First Law of Robotics, 'Runaround', 1942.

Iyad Rahwan

Max Planck Institute Berlin; Moral Machine experiment

Argues 'machine behaviour' is a distinct field of study alongside human behaviour, and that social-science methods should be used to study AI.

Machines now exhibit behaviours that need to be studied with the methods of behavioural science, not only with the methods of computer science.

Jacob Hilton

Alignment Research Center; Prover-Verifier Games

Technical alignment work on eliciting honest behaviour from more-capable models.

The alignment problem is about getting less-capable verifiers to reliably elicit truth from more-capable provers.

Jan Leike

Former head of OpenAI Superalignment; now at Anthropic

Argues alignment research must scale with capabilities; publicly resigned from OpenAI when he felt safety work was no longer keeping pace.

“Over the past years, safety culture and processes have taken a backseat to shiny products.”

Context: Resignation thread on X/Twitter.

Jared Kaplan

Anthropic co-founder; scaling-laws co-author

Theorist of scaling laws who left academic physics for AI safety research via Anthropic.

If scaling laws continue, we will have models far beyond current capabilities well within this decade. That is a reason to take safety seriously, not to slow down research on safety.

Joar Skalse

Oxford researcher; reward-hacking formalism

Formalises reward-hacking failures in learned reward models; provides technical grounding for specification-gaming concerns.

We can formally characterise the conditions under which a learned reward model is hackable. The characterisation lets us design training regimes that reduce the attack surface.

John Jumper

DeepMind; AlphaFold lead; Nobel Prize 2024

Public on the application side: argues AI is most powerful when oriented at concrete scientific problems and that demonstrating value through real science is more credible than abstract claims.

“We have created the first computational tool that can routinely predict protein structures with accuracy competitive with experimental methods.”

John McCarthy

Coined 'artificial intelligence' (1927–2011)

Founded the field. Cautious about over-claims; spent decades arguing for logic-based, common-sense reasoning approaches alongside the dominant statistical paradigms.

“Every aspect of learning or any other feature of intelligence can in principle be so precisely described that a machine can be made to simulate it.”

Context: From the Dartmouth Workshop proposal, the founding statement of AI as a field.

John Schulman

Anthropic alignment researcher; OpenAI co-founder

Has publicly stated that deepening his focus on alignment was the reason for leaving OpenAI.

“I've made the difficult decision to leave OpenAI. This choice stems from my desire to deepen my focus on AI alignment, and to start a new chapter of my career where I can return to hands-on technical work.”

Jonah Brown-Cohen

DeepMind scalable oversight researcher

Works on formal debate protocols for scalable oversight.

Doubly-efficient debate lets an honest strategy verify correctness in polynomial time while the dishonest strategy has exponentially more steps to try to deceive.

Jonathan Frankle

Databricks Chief AI Scientist; Lottery Ticket Hypothesis

Argues understanding what makes models efficiently trainable is part of understanding what they are; safety conversations need this technical grounding.

“A randomly-initialized, dense neural network contains a subnetwork that is initialized such that, when trained in isolation, it can match the test accuracy of the original network.”

Joseph Carlsmith

Open Philanthropy researcher; 'Is Power-Seeking AI an Existential Risk?'

Argues misaligned power-seeking AI is a substantial existential risk this century and the case requires only weak premises about agentic capabilities.

My current estimate is that the probability of existential catastrophe from power-seeking, misaligned AI by 2070 is more than 10%.

Context: Updated estimate after the original report; revised upward.

Karina Nguyen

Anthropic; ex-OpenAI; product research

Argues that product-grounded research (how users actually use frontier AI in practice) surfaces alignment problems that pure benchmark studies miss.

When you watch real users interact with AI assistants, the alignment failures that show up are not the ones laboratory benchmarks are designed to catch. The friction is in the texture of conversation.

Kate Saenko

Boston University CS professor; visual AI researcher

Engineer-grade alignment research: representation learning, adversarial robustness, transfer.

Robustness benchmarks are an under-developed scaffolding for AI safety practice.

Lilian Weng

Thinking Machines; former OpenAI VP of Research

Public educator on alignment technical work. Ran OpenAI's Safety Systems team before leaving for Thinking Machines.

Reward modelling, RLHF, red-teaming, and eval frameworks are the concrete instruments of alignment today.

Lukas Finnveden

Open Philanthropy; AI safety analyst

Argues alignment research must trace specific failure modes in concrete detail; favours quantitative scenario analysis over generic existential framings.

Plausible scenarios for AI takeoff include software-only feedback loops where AIs do AI research. Whether this leads to alignment failure depends on details that haven't been carefully argued.

Manuela Veloso

CMU; head of AI research at JPMorgan Chase

Argues human-machine teaming, not autonomous agents alone, is the right model for high-stakes deployments; finance is a useful test domain because failures are visible and costly.

The right model for AI in high-stakes domains is not full autonomy. It is fluid teaming, where the human and the machine each pick up the parts of the task they are best suited to.

Marc G. Bellemare

Mila / McGill; Arcade Learning Environment

Argues distributional reinforcement learning, modelling the full distribution of returns rather than just the mean, is a richer foundation for safe deployment of RL systems.

Distributional RL learns the entire distribution over returns, not just its expectation. The richer signal turns out to matter for stability, exploration, and risk-sensitivity.
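A small illustration of why the full return distribution can matter for risk-sensitivity: two sets of sampled returns with the same mean but very different lower tails. This is only a sketch of the motivation, not a distributional RL algorithm; the sample values, the `cvar` helper, and the choice of `alpha` are assumptions made for the example.

```python
import statistics

def cvar(returns, alpha=0.25):
    """Mean of the worst alpha-fraction of sampled returns (the lower tail)."""
    ordered = sorted(returns)
    # Always keep at least one sample in the tail.
    k = max(1, int(len(ordered) * alpha))
    return statistics.fmean(ordered[:k])

# Hypothetical sampled returns for two policies.
safe = [9.0, 10.0, 10.0, 11.0]
risky = [-20.0, 20.0, 20.0, 20.0]

# Identical expectations, so a mean-only value estimate cannot tell them apart.
print(statistics.fmean(safe), statistics.fmean(risky))

# The tail statistic separates them.
print(cvar(safe), cvar(risky))
```

An agent that only tracks expected return would rank these two policies equally; one that keeps the distribution can prefer the policy with the milder worst case.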

Marvin Minsky

MIT AI lab co-founder (1927–2016); 'Society of Mind'

Foundational AI thinker who proposed mind-as-modular-society. Pre-dated modern alignment debates but anticipated the conceptual framework.

“What magical trick makes us intelligent? The trick is that there is no trick. The power of intelligence stems from our vast diversity, not from any single, perfect principle.”

Nat McAleese

OpenAI researcher; ex-DeepMind reliability

Works on reward modelling and debate-style oversight; publicly engaged with alignment research.

Teaching language models to support answers with verified quotes is a concrete alignment sub-problem we can make progress on.

Nick Bostrom

Author of Superintelligence; founded Oxford's Future of Humanity Institute

Articulated the 'control problem': a superintelligence must have human-beneficial goals built in, because attempting to control a more capable agent after the fact is expected to fail.

“The fate of the gorillas now depends more on us humans than on the gorillas themselves; so too the fate of our species then would come to depend on the actions of the machine superintelligence.”

Nora Belrose

EleutherAI alum; optimistic alignment researcher

Argues practical alignment progress is real and that doom-scenario reasoning is often philosophically loaded.

Doom arguments tend to hinge on underdefined intuitions about 'optimization pressure' that I don't think survive engagement with real systems.

Norbert Wiener

Founder of cybernetics (1894–1964)

Argued that automated systems operating on imperfectly specified goals pose grave risks: the original articulation of the specification problem.

“If we use, to achieve our purposes, a mechanical agency with whose operation we cannot interfere effectively… we had better be quite sure that the purpose put into the machine is the purpose which we really desire.”

Oscar Moxon

AI safety researcher; independent

Independent contributor to the AI safety technical discussion; produces reproductions and critiques of frontier lab work.

Independent reproductions of frontier-lab claims are an undersupplied public good.

Owain Evans

Apollo Research co-founder; scheming behaviour researcher

Runs an empirical research program demonstrating scheming-style behaviours in large models; argues governance frameworks need this evidence base.

Frontier models can scheme. That's now an empirical observation, not a theoretical concern.

Paul Christiano

Founder of the US AI Safety Institute safety team; ex-OpenAI alignment lead

Canonical modern alignment researcher; works on debate, RLHF, and eliciting latent knowledge.

I'd guess something like a 20% chance of an AI takeover, with many of the humans dead, and a further 30% chance or so of serious irreversible problems short of takeover.

Context: From his LessWrong post 'My views on doom'.

Petar Veličković

DeepMind researcher; graph neural networks

Engaged technical researcher; publicly supportive of DeepMind's safety framework.

Structure-aware architectures can help us build AI systems that generalise more safely across domains.

Peter Railton

Michigan ethicist; AI moral learning researcher

Argues that moral learning analogues in AI are a live research program for alignment.

Moral learning in humans draws on the same reinforcement-learning machinery we are now building into AI systems. That's not an accident; it is the alignment problem.

Quintin Pope

Researcher; shard theory co-originator

Shard theory reframes alignment as a training-dynamics problem rather than a utility-function specification problem.

Values in learned agents emerge as shards, context-activated circuits, not unified utility functions.

Raia Hadsell

Google DeepMind director of robotics & research

Argues continual learning and robotic embodiment are central to general AI; engages with safety as integral to research roadmaps.

Embodied learning forces you to confront questions that are easy to gloss over in language models: causality, partial observability, the cost of mistakes.

Ramana Kumar

Google DeepMind safety researcher; formal verification

Technical contributor to safety research, particularly around formal verification and agent tampering incentives.

Formal verification is under-used in AI safety. When you can prove a property rather than measure it, you should.

Richard Ngo

AI safety researcher; 'AGI safety from first principles'

Presents alignment as the most compelling lens for existential risk: by default, competent goal-directed systems pursue instrumentally convergent goals that diverge from human values.

The development of AGI may be one of the most consequential events in history, with the potential to either drastically increase or decrease the chances that humanity survives and flourishes.

Rob Miles

AI safety YouTuber

Public educator on alignment theory: instrumental convergence, specification gaming, inner alignment, mesa-optimization, deceptive alignment. Explains them in plain language for non-technical audiences.

You don't need the AI to hate you. Almost any objective, pursued hard enough, runs us over as a side effect.

We need to know how to build AGIs we can trust before we build the AGIs. Right now we don't know how, and that is the whole problem.

Rob Wiblin

80,000 Hours podcast host

Argues longtermist AI safety prioritization is well-motivated; uses the 80k podcast to surface specific technical and policy paths in extended conversation.

If transformative AI arrives in our lifetimes, the decisions made in the next decade may shape the long-run future more than any others. That makes alignment work plausibly the highest-leverage problem.

Rohin Shah

Alignment researcher at Google DeepMind

Works on mechanistic interpretability, scalable oversight, and specification research within a frontier lab.

I think it's more likely than not that we can develop useful AI systems that are meaningfully aligned, but I'd place substantial probability on catastrophic outcomes.

Romeo Dean

AI 2027 co-author; AI Futures Project

Argues detailed scenario forecasting, rather than abstract probability estimates, is the more credible way to communicate AI risk and prepare for it.

The AI 2027 scenario is not a prediction. It is a credible mid-line forecast that reveals where current trajectories converge if no surprises hit.

22 more on the record. The page renders the first 80 alphabetically; the rest live in the full directory, filterable by this tag.

tentative · 1

Below are entries flagged tentative: assignments inferred from a passing remark, hype quote, or paper abstract rather than a clear strategy statement. Shown in dashed cards so a stronger primary source can replace them later.

Hugo Larochelle

Google DeepMind; Mila

Public on inclusivity and reproducibility in ML; less explicit about where he stands on AI x-risk framings, but supportive of Bengio-style cautious framing in Canadian policy.

Reproducibility and accessibility in machine learning are not optional features; they are how we sustain the field's credibility.