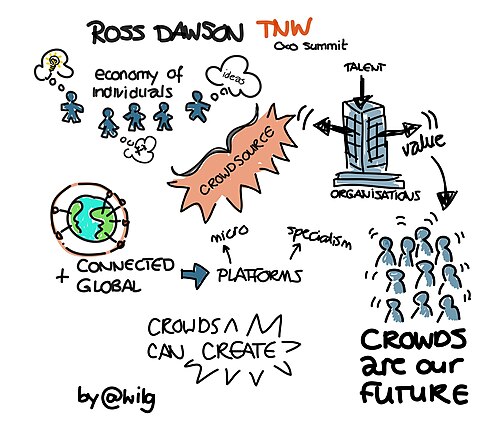

Who believes what about AGI.

Every strategic claim on this site belongs to a named person, is dated, and links to a primary source. Direct quotes are marked direct; paraphrases are marked as such.

Strategy categories are inductive, built from what people actually argue, not imposed from a framework. Expect the taxonomy to change as the corpus grows.

People indexed: 935

Quotes: 1011

p(doom) on record: 28

Strategy tags: 41

Browse the corpus.

p(doom) board →

profile filters · expertise · recognition · vintage

expertise

recognition

vintage · era of AI worldview formation

See the board for the full grid and tier criteria. The profiled subset is hand-classified; unprofiled people are filtered out by these chips.

filter by specific strategy (41 tags)

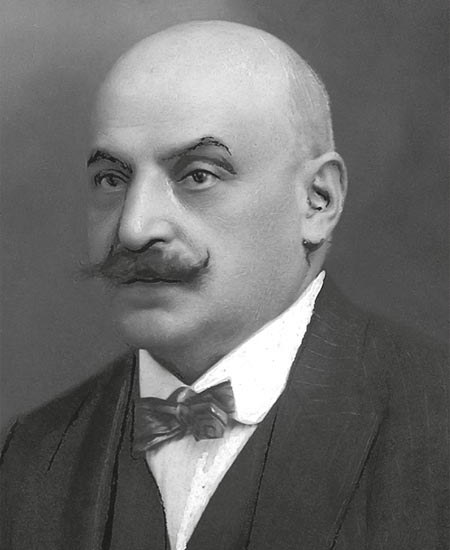

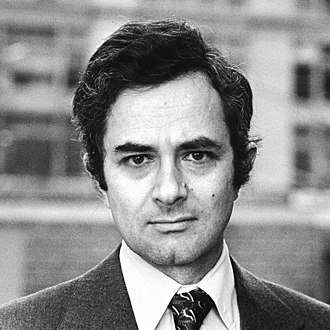

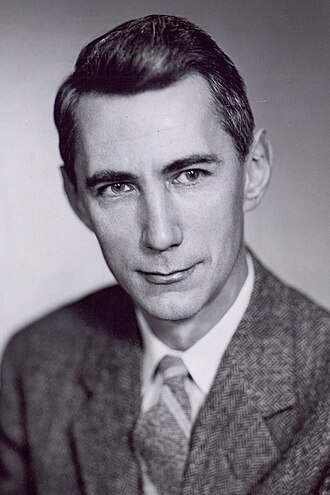

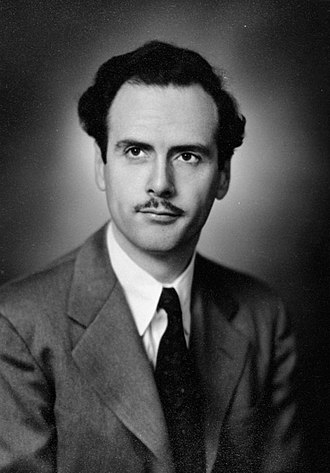

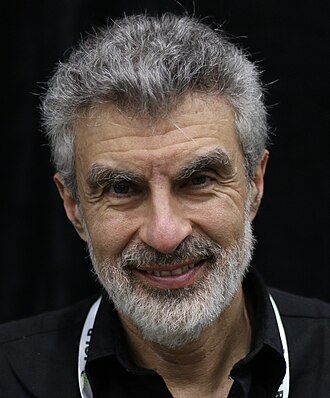

Nick Bostrom

Author of Superintelligence; founded Oxford's Future of Humanity Institute

Philosopher whose 2014 book Superintelligence made 'existential risk from AI' legible to mainstream audiences and policymakers. Frames the problem as a control problem requiring pre-committed solutions before we create superhuman systems.

Policy / meta · Household name · Symbolic era

Existential primacy · Alignment first · Long reflection

Liron Shapira

p 50%

Founder; Doom Debates podcast host

Tech founder (Pulse, Relationship Hero) and host of the Doom Debates podcast, where he argues for short timelines and high p(doom) against guests who disagree.

Applied technical · Established · Scaling era

Existential primacy · Pause

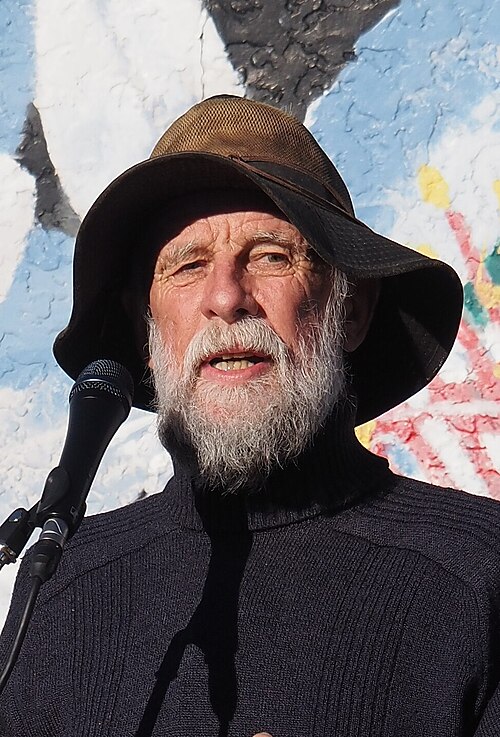

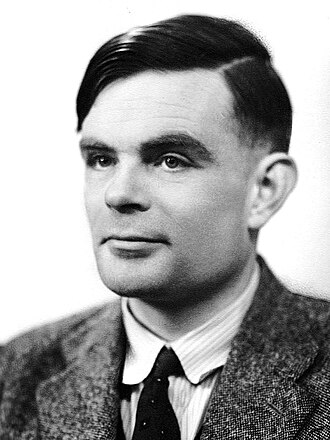

Stuart Armstrong

Aligned AI co-founder; ex-FHI; value-extrapolation approach

Philosopher and AI safety researcher who spent over a decade at the Future of Humanity Institute. Co-founded Aligned AI; his research centres on value extrapolation, the hypothesis that solving how to extend human values across contexts is necessary and nearly sufficient for alignment.

Deep technical · Established · Pre-deep-learning

Alignment first

Joseph Carlsmith

p 10%

Open Philanthropy researcher; 'Is Power-Seeking AI an Existential Risk?'

Philosopher and senior research analyst at Open Philanthropy whose 2021 report on power-seeking AI produced the most cited quantitative decomposition of the existential AI risk argument.

Policy / meta · Field-leading · Scaling era

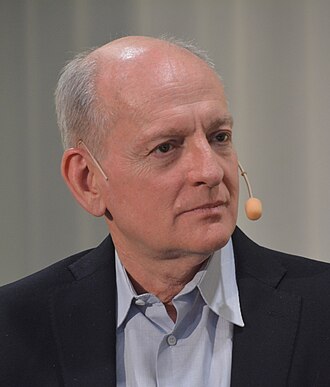

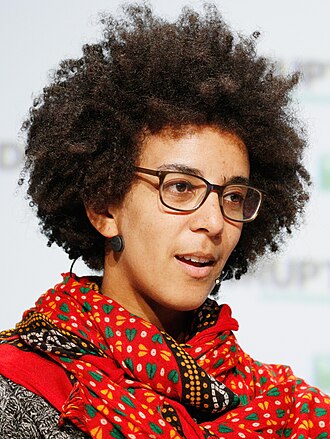

Existential primacy · Alignment first

Inioluwa Deborah Raji

Mozilla fellow; algorithmic audit researcher

Mozilla fellow and PhD student at UC Berkeley working on algorithmic auditing. Co-authored foundational work with Joy Buolamwini on commercial facial-recognition bias.

Governance first · Near-term harms first

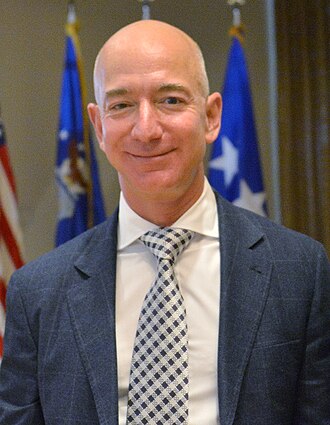

Jeff Dean

Google Chief Scientist; co-leader of Google DeepMind

One of two Google Senior Fellows. Took over as chief scientist across Google DeepMind and Google Research after the 2023 reorg. Publicly frames AI as dual-use; emphasises present-day harms over extinction framings.

Frontier builder · Field-leading · Deep-learning rise

Governance first

Subbarao Kambhampati

ASU professor; 'LLMs Can't Plan' advocate

ASU computer science professor and former AAAI president who has been the most consistent senior academic voice arguing LLMs cannot plan, reason, or self-verify in the formal senses required for AGI.

Deep technical · Field-leading · Symbolic era

AI skeptic

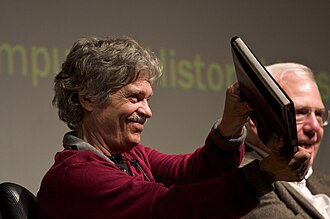

Rebecca Parsons

Thoughtworks CTO emerita; AI pragmatist

Thoughtworks CTO emerita who has written on the practical software engineering implications of AI deployment. Argues careful deployment practices matter more than headline-grabbing safety debates.

Governance first

Chelsea Finn

Stanford professor; meta-learning and robotics researcher

Stanford CS professor whose robotics and meta-learning research has shaped how frontier labs think about sample-efficient learning and generalisation. Publicly on the safety-engaged side but measured.

Alignment first

Anthropic Policy Team (RSP authors)

Anthropic responsible scaling policy author

Anthropic policy team member who co-authored the Responsible Scaling Policy framework, Anthropic's capability-tied safety commitments, which became the most-emulated industry RSP template.

RSP-style commitments

Isaac Asimov

Science fiction author; Three Laws of Robotics author (1920–1992)

Biochemist and prolific SF author whose 1942 'Three Laws of Robotics' pre-figured the alignment problem. Included for historical continuity and because the Three Laws remain a rhetorical reference in AI safety debates.

Alignment first

Murray Shanahan

Imperial College cognitive robotics professor; DeepMind senior scientist

Philosopher and cognitive scientist at Imperial College and DeepMind. Author of the 2015 book The Technological Singularity and recent papers on the 'dissociative identity' frame for understanding LLMs.

AI welfare

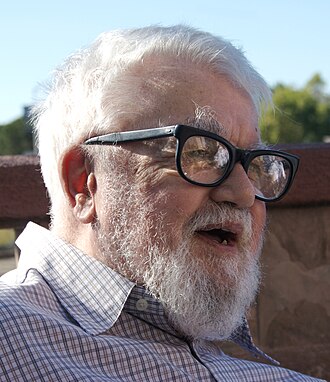

Joscha Bach

Cognitive scientist; consciousness researcher

Cognitive scientist and AI researcher whose talks on consciousness and AGI are widely shared. Argues AGI is closer than people think and that the question of whether AI systems are conscious is live.

Deep technical · Established · Pre-deep-learning

Digital minds

Anders Sandberg

Former FHI researcher; transhumanist philosopher

Long-time Oxford FHI researcher who published foundational work on whole-brain emulation and existential risk. Now independent; writes on the philosophy of grand futures.

External-domain expert · Established · Pre-deep-learning

Long reflection

Avital Balwit

Anthropic communications lead; public-facing AI safety voice

Anthropic communications lead. Has written essays framing the near-term timeline to AGI as the most pressing personal and civilisational concern for her generation.

Existential primacy

Amanda Askell

Anthropic philosopher-researcher

Philosopher who leads Claude's 'character' work at Anthropic. Central voice on model welfare, AI personality, and virtue-ethics-informed alignment.

Alignment first

Clay Graubard

Forecaster; RAND and Good Judgment contributor

Superforecaster who has contributed to AI and x-risk forecasting exercises. Represents the professional-forecaster wing of the AI risk community.

Existential primacy

Naomi Klein

Author of This Changes Everything; AI-and-climate critic

Journalist and author of The Shock Doctrine and This Changes Everything. Argues that 'AI hallucinations' are a distraction: the actual hallucinations are the promises AI CEOs make to investors and governments.

External-domain expert · Household name · Post-ChatGPT

AI skeptic

Marietje Schaake

Former MEP; Stanford Cyber Policy Center fellow; UN AI advisory body

Former EU parliamentarian, now at Stanford's Cyber Policy Center. Serves on the UN Secretary-General's AI Advisory Body. Argues corporate capture of AI governance is the primary democratic threat.

Governance first

Cory Doctorow

EFF special advisor; 'enshittification' coiner

Long-time digital rights activist and EFF advisor. Argues AI is being driven by the same 'enshittification' dynamic that decayed social platforms, and that capture of AI policy by incumbents will make it worse.

Policy / meta · Household name · Post-ChatGPT

Antitrust primacy

Evgeny Morozov

Belarusian scholar; 'solutionism' critic

Writer on the politics of tech; coined 'technological solutionism'. Argues Silicon Valley AI framings systematically obscure political-economy questions.

External-domain expert · Field-leading · Pre-deep-learning

AI skeptic

Ruha Benjamin

Princeton sociologist; 'Race After Technology'

Princeton sociologist whose 2019 Race After Technology coined 'the New Jim Code', the way digital technologies reinforce racial hierarchies. Central voice in the civil-rights framing of AI governance.

Governance first

Jeremie Harris

Gladstone AI co-founder; AI state policy advisor

Co-founder of Gladstone AI, a US government contractor producing AI risk assessments. Authored the 2024 US State Department-commissioned report on frontier AI risks.

Governance first

Edward Harris

Gladstone AI co-founder

Co-founder of Gladstone AI with his brother Jeremie. Contributed to the 2024 US State Department-commissioned action plan on frontier AI risks.

Governance first

Karen Hao

Atlantic staff writer; AI industry critic

Journalist covering AI for The Atlantic and previously MIT Technology Review. Her reporting on OpenAI, labour in the AI supply chain, and frontier-lab culture has shaped mainstream industry understanding.

Governance first

Adrienne LaFrance

The Atlantic executive editor; technology critic

Executive editor at The Atlantic whose editorial direction has framed AI coverage around democracy, epistemic integrity, and civic institutions.

Governance first

Peter Railton

Michigan ethicist; AI moral learning researcher

Michigan moral philosopher who has argued that reinforcement learning analogues in AI could form the basis for genuinely moral AI agents. Engages AI safety philosophically.

Alignment first

Kate Sills

AI economic systems and multi-agent markets researcher

Applied cryptographer and cooperative-AI researcher who works on incentive-compatible market mechanisms for AI agents. Represents the applied multi-agent governance corner.

Cooperative AI

Ian Hogarth

Chair of UK AI Safety Institute (2023–2025); investor

Angel investor and former chair of the UK AI Safety Institute, which he helped stand up from the November 2023 Bletchley summit. Co-author of the annual 'State of AI Report'.

Governance first

Hugh Zhang

Epoch AI researcher

Machine learning researcher at Epoch AI who has published on reproducibility of capability benchmarks and the scaling-data bottleneck.

Evals-driven

Lisa Su

CEO of AMD; central figure in the AI compute supply

Runs AMD, the #2 AI chip supplier. Public voice for 'more compute = more capability'; argues that supply-chain constraints, rather than demand, have been the bottleneck on AI progress.

Policy / meta · Household name · Deep-learning rise

Techno-optimism

Clément Delangue

CEO of Hugging Face; open-source AI advocate

French co-founder of Hugging Face, the largest open-source AI model hub. Testified to US Congress that open-source AI is 'extremely aligned with American interests'.

Open source

Alex Wang

Founder of Scale AI; data infrastructure for frontier models

Youngest self-made billionaire; founded Scale AI to provide data labelling and evaluation infrastructure to frontier labs and US national security agencies.

Policy / meta · Field-leading · Scaling era

Race to aligned SI

Arthur Mensch

CEO of Mistral AI; French frontier-model founder

Co-founder of Mistral AI, the French open-weight foundation model company. Representative of the European open-weight frontier effort and AI sovereignty.

Open source

Sasha Luccioni

Hugging Face AI & climate lead

Researcher focused on the environmental and energy footprint of large models. Has published widely cited work quantifying the carbon cost of training frontier models.

Governance first

Tim Dettmers

Efficient-training and quantization researcher

Researcher at UW and Allen AI whose work on quantization (QLoRA, bitsandbytes) has made frontier-scale fine-tuning feasible on modest hardware. Argues frontier access is an equity question.

Open source

Yuri Burda

OpenAI researcher; exploration and RL

Longtime OpenAI RL researcher whose work on exploration (Random Network Distillation) has been widely used in frontier training. Represents the technical-engineering inside view at OpenAI.

Alignment first

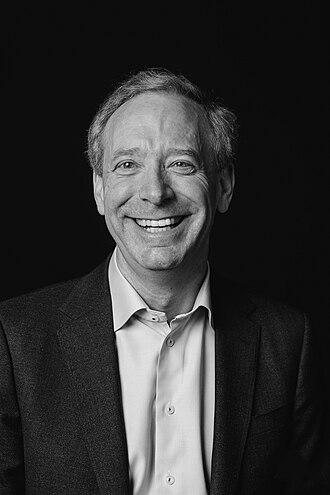

Brad Smith

Microsoft Vice Chair and President

Microsoft's president and chief legal officer. Public face of Microsoft's regulatory posture on AI; argues for 'governed progress' via licensing and national-security-aware regulation.

Governance first

Matt Clifford

UK PM AI Opportunities advisor; Entrepreneur First co-founder

Led the UK AI Safety Summit preparation under Rishi Sunak and the AI Opportunities Action Plan under Keir Starmer. Bridges entrepreneurship and AI safety.

Governance first

Dominic Cummings

Former UK No. 10 chief adviser; AI policy commentator

Former chief adviser to UK PM Boris Johnson. Has written extensively on AI risk and governance through his Substack, combining Whitehall experience with an empirical-risk framing.

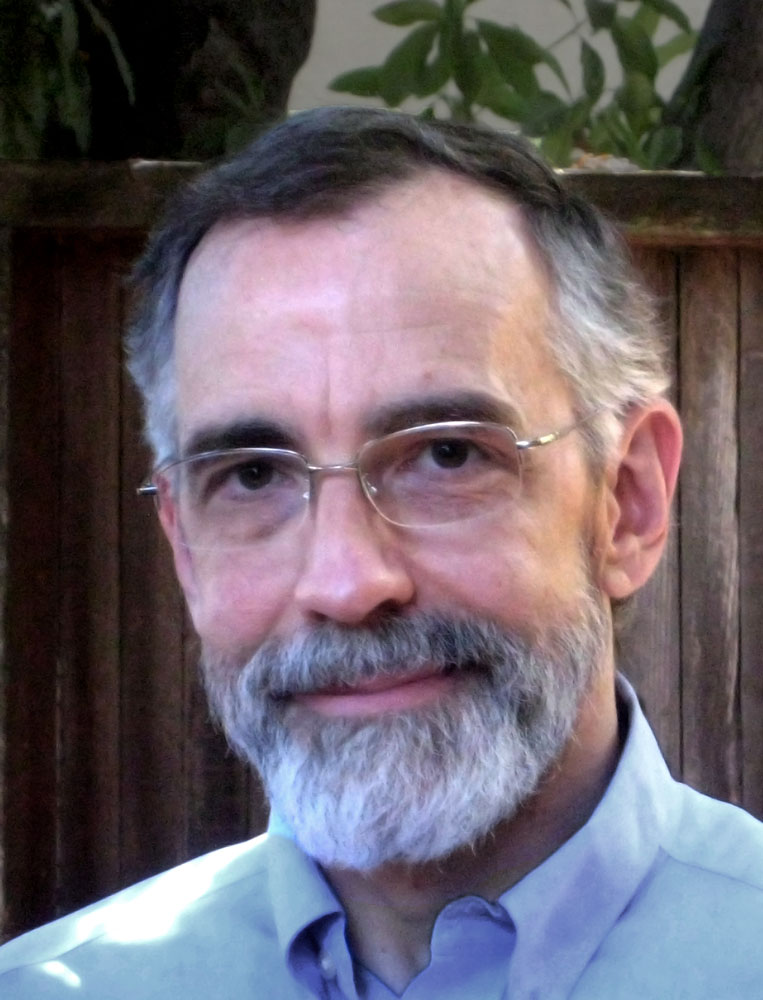

Governance first

Peter Szolovits

MIT medical AI pioneer

MIT professor who pioneered clinical decision-support AI in the 1970s. Argues the most urgent AI policy questions concern reliability, evaluation, and deployment context, not superintelligence.

Evals-driven

Isabella Wilkinson

Chatham House international affairs AI researcher

Researcher at Chatham House focused on AI and international order. Has argued that AI's geopolitics will require institutions analogous to the IAEA for frontier compute.

International treaty

Elizabeth Kelly

Founding director of the US AI Safety Institute

Deputy assistant to the president for economic policy who became the founding director of the US AI Safety Institute at NIST after the October 2023 AI Executive Order.

Governance first

Anna Makanju

OpenAI VP of Global Impact; policy veteran

Former State Department and White House advisor; runs OpenAI's policy work. Represents OpenAI's public face in Washington and Brussels.

Governance first

Noam Brown

OpenAI reasoning researcher; Diplomacy AI

Researcher behind Meta's CICERO Diplomacy-playing AI and now a lead on OpenAI's reasoning-model research. Has driven much of the 2024–2025 shift toward chain-of-thought / o-series models.

Existential primacy

Ben Mann

Anthropic co-founder; researcher

Anthropic co-founder who worked on GPT-3 at OpenAI. One of the technical architects of Constitutional AI training.

Constitutional AI

Jared Kaplan

Anthropic co-founder; scaling-laws co-author

Theoretical physicist who co-authored the 2020 'Scaling Laws for Neural Language Models' paper. Anthropic co-founder and chief science officer.

Alignment first

Tom Brown

Anthropic co-founder; first author of GPT-3 paper

First author of 'Language Models are Few-Shot Learners' (GPT-3). Anthropic co-founder focusing on infrastructure and safety engineering.

Alignment first

Sam McCandlish

Anthropic co-founder

Anthropic co-founder and research lead on training methods. Contributor to foundational scaling-law research at OpenAI before joining Anthropic.

Alignment first

Sara Hossein

International AI law scholar

Scholar who has contributed to the Council of Europe's AI convention and argues international human-rights law is the right foundation for AI governance.

International treaty

Petar Veličković

DeepMind researcher; graph neural networks

DeepMind senior research scientist known for graph neural networks and geometric deep learning. Public educator on deep learning and broadly pro-safety.

Alignment first

Seth Lazar

ANU Professor of Philosophy; MINT Lab founder

Australian National University moral philosopher whose 2023 Stanford Tanner Lecture on AI and human values has been widely cited. Runs the Machine Intelligence and Normative Theory Lab.

Governance first

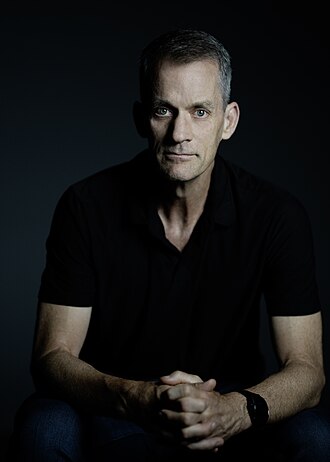

Shakeel Hashim

Editor of Transformer news; AI policy journalist

Editor of Transformer, the leading weekly AI-policy newsletter. Ex-Economist journalist, now at the Tarbell Center for AI Journalism. Frames the beat as 'AI safety and governance' rather than generic tech coverage.

Governance first

Andrea Miotti

Founder of ControlAI; pause campaigner

Italian founder of ControlAI, a non-profit calling for a prohibition on the development of superintelligent AI. Works directly with UK and US policymakers.

Pause

Steven Adler

Independent AI researcher; former OpenAI policy team

Ex-OpenAI policy researcher who resigned citing safety culture concerns. Now independent, collaborating with ControlAI on governance proposals.

Governance first

Garrison Lovely

Journalist covering AI safety and EA

Freelance journalist who reports on AI safety, effective altruism, and the political-economy dynamics of frontier labs. Has been widely published in Jacobin, The Nation, and other outlets.

Governance first

Jessica Newman

UC Berkeley AI Security Initiative director

Director of the AI Security Initiative at UC Berkeley's Center for Long-Term Cybersecurity. Works at the intersection of AI and international security.

Governance first

Simon Rosenberg

NDN democracy strategist; AI political impact

Democratic strategist whose recent writing has focused on AI's threat to democratic elections. Argues election-security is the near-term critical governance question.

Governance first

Jim Keller

CEO of Tenstorrent; legendary chip architect

Chip architect who designed key components of AMD, Apple, and Tesla silicon. Now runs Tenstorrent, building open-architecture AI accelerators. Frames compute openness as a democratisation lever.

Distributed builders

Ruslan Salakhutdinov

CMU professor; former Apple AI head

CMU deep learning professor and former Apple AI research head. Publicly engaged on safety, but more measured than the field's outspoken camp.

Alignment first

Ramin Hasani

Liquid AI CEO; liquid neural networks pioneer

MIT-trained researcher who co-founded Liquid AI to build non-transformer foundation models. Argues the future of AI is architectural diversity rather than monolithic scale.

AI skeptic

Thomas Krendl Gilbert

Cornell Tech ethicist; reinforcement learning ethics

AI ethicist who studies the governance and moral dimensions of reinforcement learning systems. Argues the norms governing RLHF shape what AI values become.

Alignment first

Julia Galef

Rationalist author; former CFAR president

Rationalist writer and former president of the Center for Applied Rationality. Author of The Scout Mindset. Has written measured takes on AI risk that mostly agree with the non-extreme end of the AI safety community.

Existential primacy

Max Roser

Founder of Our World in Data; Oxford economist

Oxford economist who founded Our World in Data. His AI entry and charts have become the standard quantitative reference for AI capability and investment trends.

Existential primacy

Laurence Tribe

Harvard constitutional law professor emeritus

Harvard's most prominent constitutional law scholar. Signed the 2023 Statement on AI Risk; frames AI as a constitutional-scale governance challenge requiring robust legal frameworks.

Existential primacy

James Manyika

SVP of Research, Technology and Society at Google-Alphabet

Former McKinsey Global Institute chair who now runs research, tech, and society at Alphabet. Signatory to the Statement on AI Risk; public voice for measured 'shared prosperity' framings.

Existential primacy

Bill McKibben

Environmental writer; Middlebury scholar

Journalist and climate activist who has extended his civilisational-risk framing to AI. Signed the Statement on AI Risk; argues AI and climate are linked crises of extraction.

External-domain expert · Household name · Post-ChatGPT

Existential primacy

Alan Robock

Rutgers climate scientist; nuclear winter researcher

Distinguished Rutgers climate scientist who helped establish modern nuclear winter science. Signatory to the Statement on AI Risk; argues AI should be treated like nuclear weapons as a civilisational hazard.

Existential primacy

Angela Kane

Former UN High Representative for Disarmament Affairs

Senior UN diplomat who has argued for applying disarmament-style frameworks to AI. Signatory to the Statement on AI Risk.

International treaty

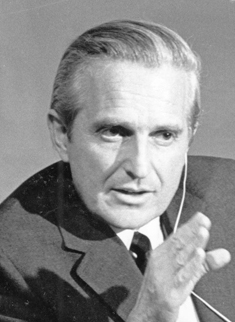

Martin Hellman

Stanford cryptographer; Turing Award winner

Turing Award-winning cryptographer (Diffie-Hellman key exchange). Long-time activist on nuclear risk; signed the 2023 Statement on AI Risk.

Existential primacy

Joseph Sifakis

Turing Award laureate; embedded systems researcher

2007 Turing Award laureate (model checking). Greek-French computer scientist who signed the Statement on AI Risk.

Existential primacy

Ben Buchanan

Former White House AI Special Advisor (2021–2025)

Georgetown CSET researcher who served as White House Special Advisor for AI from 2021 to 2025. Key architect of the Biden administration's chip export controls and the 2023 AI Executive Order.

Governance first

Kevin Esvelt

MIT biosecurity and gene drive researcher

MIT biologist who invented CRISPR gene drives. Has warned consistently that LLM-assisted biology lowers barriers to bioweapon development; a key advisor to US biosecurity policy.

Governance first

Jonas Kgomo

Decolonial AI researcher; Ghana

Researcher focused on how AI affects African contexts; advocate for decolonial AI governance frameworks.

Governance first

Carme Artigas

Spanish AI and Digital Agenda Secretary; AI Advisory Body co-chair

Former Spanish Secretary of State for Digitalisation and AI who led the EU AI Act negotiations under the Spanish Presidency. Co-chairs the UN AI Advisory Body.

Governance first

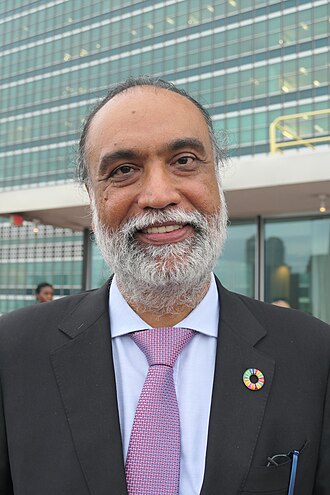

Amandeep Singh Gill

UN Secretary-General's Envoy on Technology

Indian diplomat who serves as the UN Secretary-General's Envoy on Technology. Leads the UN's Global Digital Compact, including its AI provisions.

International treaty

Pushmeet Kohli

VP of AI Science at Google DeepMind

DeepMind executive leading AI-for-science efforts (AlphaFold, AlphaProof). Frames AI as a scientific instrument for solving structured problems, not a sentient agent.

Techno-optimism

Bret Kugelmass

CEO of Last Energy; 'energy is the governance variable' framing

MIT-trained entrepreneur who argues that compute, energy, and AI governance are the same problem, and that micro-reactor deployment is necessary to decouple AI progress from fossil-energy constraints.

Techno-optimism

Molly Kinder

Brookings Institution fellow; AI and labour

Brookings fellow who has published some of the most cited mainstream work on AI's labour-market impact and policy responses (wage insurance, retraining).

Governance first

Shazeda Ahmed

NYU AI Now fellow; technology and democracy researcher

AI Now fellow whose work on China's AI governance has been widely cited in US policy debates. Argues US AI governance often misreads Chinese developments.

Governance first

Jessica Cussins Newman

AI policy specialist; Microsoft Responsible AI

AI policy researcher who led UC Berkeley's AI Security Initiative and now works on Responsible AI at Microsoft. Frames AI security as an international-coordination problem.

Governance first

Irene Solaiman

Chief Policy Officer at Hugging Face

Hugging Face's top policy officer who has led the field's thinking on staged release of AI models since her 2019 work on GPT-2 at OpenAI.

Governance first

Daniel Khashabi

Johns Hopkins assistant professor; NLP safety researcher

NLP researcher at Johns Hopkins focused on making LLMs more trustworthy, including reasoning reliability and safety-evaluation frameworks in collaboration with Microsoft.

Evals-driven

Sabrina Küspert

EU AI Office; Italian / German policy researcher

EU AI Office scientist who contributed to the GPAI Code of Practice. Public voice for EU-style risk-tiered regulation.

Governance first

Robert Trager

Oxford Martin AI governance scholar

Political scientist at Oxford's Blavatnik School focused on international AI governance and verification regimes. Argues verifiable compute accounting is plausible and necessary.

International treaty

Lennart Heim

Compute governance researcher at RAND

Former Centre for the Governance of AI researcher now at RAND, focused specifically on compute governance, the chokepoint framework for frontier AI.

Compute governance

Yonadav Shavit

OpenAI researcher; on-chip compute verification

Computer scientist who has published on how to verify AI training and inference via on-chip mechanisms, the technical side of compute governance.

Hardware killswitch

Tim Fist

Institute for Progress AI policy researcher

CSET alumnus who now leads AI policy at the Institute for Progress. Focused on chip export controls, compute thresholds, and domestic AI industrial policy.

Compute governance

Saif M. Khan

Former NSC AI technology director

Former CSET researcher who served on the National Security Council staff as director for technology policy. Worked closely with Ben Buchanan on chip export controls.

Compute governance

Bletchley Declaration Signatories

First international AI Safety Summit signatories (2023)

28 countries and the EU, including the US, UK, China, India, and Japan, signed the November 2023 Bletchley Declaration, the first international statement on frontier AI risk. This entry stands in for the collective action.

International treaty

Victor Gao

Chinese diplomat; AI dialogue participant

Chinese diplomat and former adviser who has participated in US-China AI safety track II dialogues. Representative voice of the Chinese establishment's public AI-governance framing.

International treaty

Tianhua Tang

Chinese AI safety researcher

Chinese AI safety researcher participating in international dialogues on AI alignment and governance, including IDAIS.

Alignment first

Brian Chau

Executive Director of Alliance for the Future

Former ML engineer who directs Alliance for the Future, a US policy think tank aligned with the e/acc movement and opposed to frontier AI regulation.

Acceleration

Jonah Brown-Cohen

DeepMind scalable oversight researcher

DeepMind researcher who authored doubly-efficient debate protocols for scalable AI oversight. Technical collaborator with Geoffrey Irving.

Alignment first

Nat McAleese

OpenAI researcher; ex-DeepMind reliability

AI reliability and alignment researcher at OpenAI; previously at DeepMind working on debate-style oversight and reward modelling.

Alignment first

Joar Skalse

Oxford researcher; reward-hacking formalism

Oxford AI safety researcher who co-authored foundational work defining when reward hacking can occur in learned reward models.

Alignment first

Christopher Summerfield

Oxford neuroscientist; DeepMind senior researcher

Oxford cognitive neuroscientist and DeepMind senior research scientist. Has written on how cognitive science informs alignment and the nature of AI understanding.

Alignment first

Toby Shevlane

DeepMind model evaluations researcher

DeepMind research scientist focused on dangerous-capability evaluations. Co-authored foundational papers on red-teaming and evaluation frameworks.

Evals-driven

Mary Phuong

DeepMind autonomous-replication evaluations researcher

DeepMind research scientist who leads autonomous-replication evaluations: tests of whether models can autonomously set up and run copies of themselves.

Evals-driven

Hoda Heidari

CMU algorithmic fairness researcher

CMU assistant professor whose work bridges algorithmic fairness and AI governance. Argues fairness metrics must be tied to concrete consequentialist framings.

Governance first

Rumman Chowdhury

Former Twitter ML ethics director; Humane Intelligence

Data scientist who ran ML ethics at Twitter and now runs Humane Intelligence, a non-profit red-teaming organisation that partners with frontier labs and DEF CON.

Governance first

Aviv Ovadya

Berkman Klein Center; platform democracy

Founder of the AI & Democracy Foundation. Argues AI's threat to democracy lies less in content generation and more in epistemic infrastructure degradation.

Democratic mandate

Jacob Hilton

Alignment Research Center; Prover-Verifier Games

Alignment researcher at the Alignment Research Center and independent researcher. Has published influential work on prover-verifier games and eliciting latent knowledge.

Alignment first

Andy Jones

Anthropic researcher; scaling inference laws

Anthropic researcher whose work on inference scaling laws has informed the field's understanding of how reasoning and compute trade off.

Existential primacy

Matthew Barnett

Epoch AI forecaster; Metaculus AI timelines

AI forecaster at Epoch AI who co-authors many of the most-cited Metaculus AI questions, including the Transformative AI Date question.

Existential primacy

Stephen Casper

MIT PhD researcher; red-teaming and model audit

MIT algorithmic alignment researcher focused on red-teaming, auditing, and interpretability. Has documented how safeguards at current frontier labs are reliably broken by determined red-teamers.

Evals-driven

Dylan Hadfield-Menell

MIT professor; Stuart Russell student; assistance games

MIT assistant professor and former Stuart Russell PhD student who works on assistance games and practical alignment of AI systems.

Alignment first

Vikrant Varma

Google DeepMind AI safety researcher

DeepMind safety researcher working on model evaluation and alignment. Contributor to several major DeepMind safety publications.

Alignment first

Lilian Weng

Thinking Machines; former OpenAI VP of Research

Former VP of Research at OpenAI who helped lead safety research there. Wrote widely-read technical blog posts on RLHF and alignment. Joined Mira Murati's Thinking Machines Lab in 2024.

Alignment first

Ethan Perez

Anthropic researcher; red-teaming language models

Anthropic research scientist focused on red-teaming and sycophancy. Has published foundational work on model evaluation and LM-generated evaluations.

Alignment first

Deep Ganguli

Anthropic societal impact lead

Head of Anthropic's Societal Impact team. Argues that the social dimension of alignment must be front and centre of safety work.

Governance first

Zac Hatfield-Dodds

Anthropic assurance team; property-based testing

Anthropic engineer known for property-based testing and assurance work. Technical voice on how software-engineering practices can support AI safety.

Alignment first

Michael Chen

METR evaluations researcher

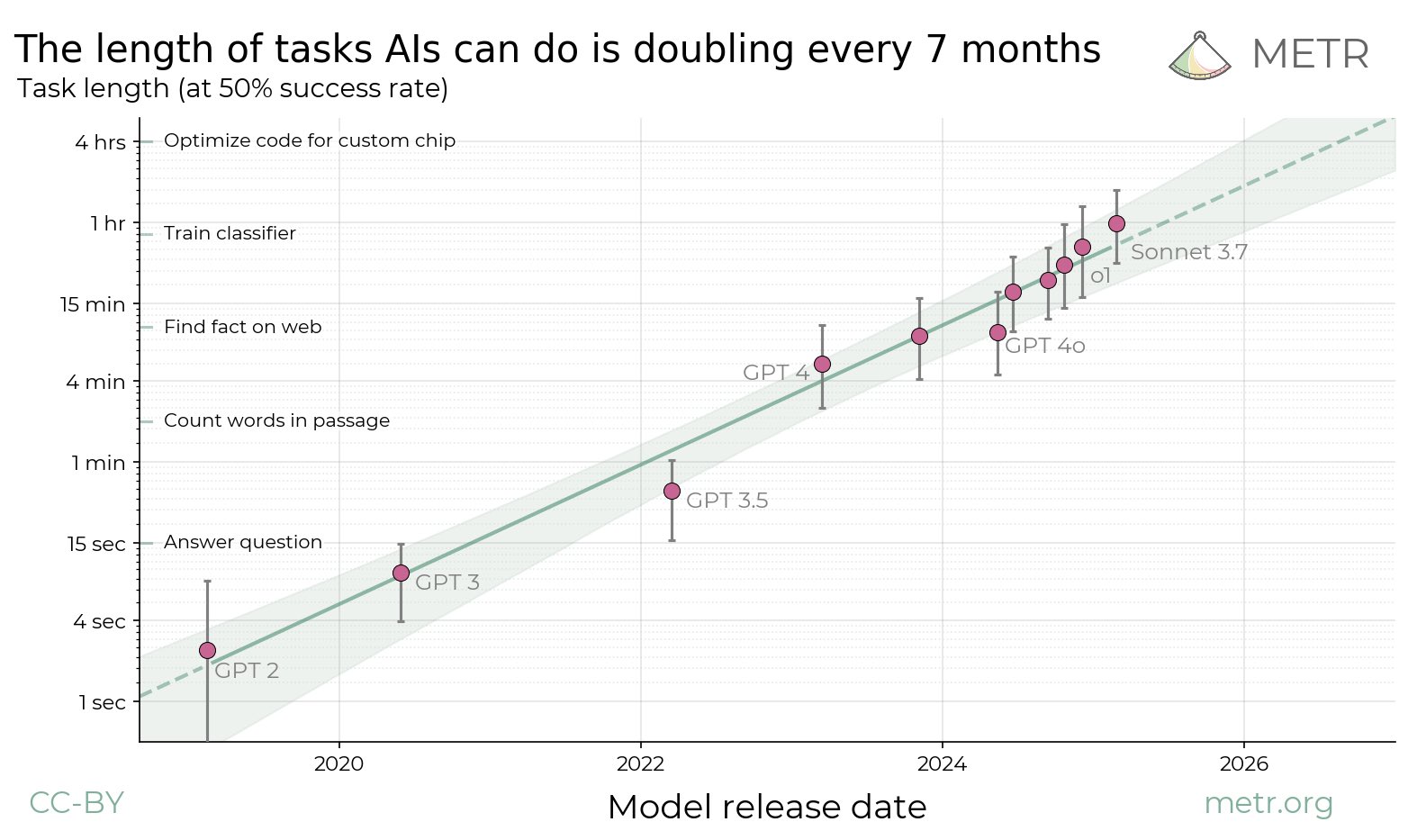

Researcher at METR focused on autonomous-task evaluations for frontier models. Contributor to the 'task length doubling' frontier-capability tracking.

Evals-driven

Jess Whittlestone

Head of AI Policy at the Centre for Long-Term Resilience

Cambridge-based AI policy researcher who led foundational work at the Ada Lovelace Institute, GovAI, and CSER. Now leads AI policy at the Centre for Long-Term Resilience, feeding UK government work on frontier AI risks.

Governance first

Carina Prunkl

Utrecht AI ethics researcher; former FHI

AI ethics researcher whose work critiques dominant AI ethics frameworks as too narrowly technical. Former FHI researcher now at Utrecht.

Governance first

Jonas Schuett

GovAI; AI risk governance researcher

Centre for the Governance of AI researcher who works on AI risk management, structured transparency, and internal AI lab governance structures.

Governance first

Julia Haas

Council on Foreign Relations AI policy fellow

Policy fellow at the Council on Foreign Relations focused on AI and international security. Bridges national-security and tech-policy audiences.

Governance first

Saria Hassan

Pakistan-based AI policy researcher

Representative of the Global South perspective on AI governance; argues the current AI governance conversation systematically undervalues non-Western stakeholders.

Governance first

Oscar Moxon

AI safety researcher; independent

Independent AI safety researcher publishing on LessWrong and the Alignment Forum; contributes to the technical reproduction and critique of frontier-lab claims.

Alignment first

Nick Ryder

OpenAI research scientist; scaling-laws contributor

OpenAI research scientist who co-authored foundational scaling laws papers. Public-engineering voice for capability-driven progress.

Techno-optimism

Lisa Gelobter

tEQuitable founder; former Obama CTO

Former US Chief Digital Service officer under Obama, now founder of tEQuitable, a platform for addressing workplace bias. Has advocated for AI governance that serves workers.

Governance first

Jeffrey Sachs

Columbia economist; sustainable development advocate

Columbia University professor and UN sustainable development advisor. Argues AI is already transforming labour markets with no adequate policy response, and that technological power without political control is the defining risk of our era.

Governance first

Maria Ressa

Rappler CEO; 2021 Nobel Peace Prize laureate

Filipino-American journalist whose Rappler fought disinformation under the Duterte regime. Won the 2021 Nobel Peace Prize and chairs the Paris Charter on AI and Journalism commission.

External-domain expert · Household name · Post-ChatGPT

Governance first

Mariana Mazzucato

UCL economist; Entrepreneurial State author

Economist known for arguing the state is the primary driver of transformative innovation. Has turned this framework on AI: argues AI policy must be oriented toward mission-driven public investment, not laissez-faire.

Public AI

Robert Reich

Former US Labor Secretary; UC Berkeley professor

Former Clinton Labor Secretary whose recent commentary has focused on AI's disruption of labour markets and the need for anti-concentration AI policy.

Antitrust primacy

Renée DiResta

Former Stanford Internet Observatory research manager

Disinformation researcher whose work on the Russian IRA, COVID, and AI disinformation has been highly influential. Joined Georgetown CPIP in 2024 after the shutdown of the Stanford Internet Observatory.

Governance first

Hany Farid

UC Berkeley professor; digital forensics pioneer

UC Berkeley professor who helped pioneer digital image forensics. Leads deepfake-detection research and advocates for provenance-based governance of synthetic media.

Governance first

Divya Shrivastava

RAND Corporation AI safety policy researcher

RAND researcher focused on AI risks in biology, cyber, and national security. Contributed to the 2024 Gladstone action plan and subsequent US policy work.

Governance first

Margot Kaminski

University of Colorado law professor

Technology law professor whose work on algorithmic accountability has informed EU and US regulatory design. Argues tort law and traditional liability frameworks have more to offer than they get credit for.

Liability-driven safety

Woodrow Hartzog

BU law professor; privacy and AI scholar

Boston University law professor whose book Privacy's Blueprint shaped modern discussion of privacy by design. Argues AI governance should embed legal duties of loyalty and care.

Governance first

Frank Pasquale

Brooklyn Law; Black Box Society

Brooklyn Law professor whose 2015 Black Box Society foreshadowed modern debate about AI accountability. Advocates 'functional' laws of AI: humans must retain moral agency and accountability.

Policy / meta · Field-leading · Deep-learning rise

Governance first

Rebecca Crootof

University of Richmond law professor

Law professor whose work has shaped modern thinking about tort law, AI liability, and the legal status of autonomous systems.

Liability-driven safety

Ryan Calo

UW law professor; robotics law pioneer

University of Washington law professor who helped establish robotics law as a field. Argues AI law must learn from aviation, medicine, and other sector-specific regulatory histories.

Governance first

Neil Thompson

MIT CSAIL FutureTech director; computing economics

MIT researcher whose quantitative work on compute, scaling, and algorithmic progress has become standard reference material. Director of FutureTech at MIT CSAIL.

Existential primacy

Anna Bacciarelli

Human Rights Watch senior researcher; formerly Amnesty International

Founded Amnesty International's AI and algorithmic accountability program; now at Human Rights Watch. Co-author of the Toronto Declaration on Human Rights and AI.

Governance first

Darío Gil

SVP and Director of IBM Research

Leads IBM Research and has been a public voice for IBM's view that AI governance should be centered on shared standards and competitive openness rather than moratoria or extinction framings.

Governance first

Reid Southen

Film concept artist; AI copyright litigation voice

Film concept artist whose collaboration with Gary Marcus on 'AI is a plagiarism machine' put image-model copyright litigation into the mainstream.

Governance first

Ed Newton-Rex

Fairly Trained founder; ex-Stability AI

Former Stability AI VP of Audio who resigned in November 2023 citing disagreement with fair-use defence of training data. Now runs Fairly Trained, a certifier of consent-based AI training.

Governance first

Karine Perset

OECD AI Unit head

Heads the OECD's AI Unit, including OECD.AI and its policy observatory. Responsible for convening the 38 OECD member states on AI governance.

Governance first

Michał Kosiński

Stanford psychologist; psychometric AI researcher

Stanford psychologist whose work has shown that off-the-shelf LLMs can pass cognitive, moral, and psychometric tests at human or super-human levels. Argues emergent capabilities are already more extensive than acknowledged.

Existential primacy

Inga Strümke

NTNU AI researcher; Norwegian AI public voice

Norwegian AI researcher whose public communication on AI risk and opportunity has made her one of Scandinavia's leading voices on AI.

Governance first

Anja Kaspersen

UN senior fellow; disarmament diplomat

Norwegian diplomat and former director of UN disarmament. Has pushed for applying arms-control frameworks to AI.

International treaty

Fumio Kishida

Former Prime Minister of Japan (2021–2024); Hiroshima AI Process architect

Led Japan's G7 presidency in 2023, launching the Hiroshima AI Process as the premier G7-level international AI governance framework. Architect of the G7 International Guiding Principles for Advanced AI Systems.

Policy / meta · Household name · Post-ChatGPT

International treaty

He Jianfeng

China Academy of Information and Communications Technology researcher

Chinese researcher whose work on AI governance bridges Western and Chinese perspectives; contributor to Chinese standards bodies and international dialogues.

Governance first

Urvashi Aneja

Founding Director of Digital Futures Lab (India)

Indian researcher and founder of Digital Futures Lab, a Goa-based research practice focused on AI and society from a Global South perspective.

Governance first

Ashwini Vaishnaw

Minister of Electronics and IT, Government of India

Indian cabinet minister responsible for the AI Mission and the Digital India Act. Balances US–China framing with India's 'AI for All' strategy.

Sovereign AI

Nandan Nilekani

Infosys co-founder; architect of India's Aadhaar digital ID

Infosys co-founder and architect of India's Aadhaar and UPI digital public infrastructure. Advocates AI governance built on digital public infrastructure rather than proprietary AI.

Public AI

Enrique Dans

IE University professor; AI commentator

Spanish technology professor whose blog and Forbes column have been widely read European commentary on AI deployment and governance.

Techno-optimism

Rajeev Chandrasekhar

Former Indian Minister of State for Electronics and IT

Former Indian deputy IT minister who oversaw India's early AI policy formation. Advocate for regulatory frameworks that balance sovereign AI with open ecosystems.

Sovereign AI

Seifeldin Ayad

MENA-based AI governance voice

Middle East and North Africa-focused AI policy voice. Argues MENA perspectives are systematically missing from mainstream AI governance discussions.

Governance first

Ziv Epstein

Stanford CRFM; human-AI interaction and creativity

Stanford researcher whose work on human-AI creative interaction has shaped understanding of how AI affects human authorship. Published in Science on generative AI governance.

Governance first

Gabriel Weinberg

Founder and CEO of DuckDuckGo

Founder of DuckDuckGo who has extended his privacy advocacy into AI. Argues AI surveillance is more dangerous than search-engine surveillance and should be banned.

Governance first

Mei Lin Fung

Chair of People-Centered Internet; AI global cooperation advocate

Chair of People-Centered Internet, founded with Vint Cerf. Chairs the Digital Cooperation and Diplomacy network. Advocates AI governance rooted in inclusive digital cooperation.

Governance first

Moxie Marlinspike

Signal co-founder; cryptographer

Cryptographer who co-founded Signal. Has written on software-supply-chain risk in a world of ubiquitous AI-assisted coding.

AI skeptic

Anil Dash

Glitch former CEO; technology culture writer

Longtime technology culture writer and ex-CEO of Glitch. Has written extensively on how AI fits (and breaks) existing labour, media, and civic institutions.

Governance first

Mireille Hildebrandt

Brussels jurist and philosopher; 'algorithmic governance' theorist

Belgian jurist and philosopher whose work on 'smart technologies and the end of law' established foundational European framings for algorithmic governance.

Policy / meta · Established · Deep-learning rise

Governance first

Erie Meyer

Former CFPB Chief Technologist

Former Chief Technologist at the Consumer Financial Protection Bureau and the US Digital Service. Now at Vanderbilt Policy Accelerator on AI and consumer protection.

Governance first

Adam Kalai

Microsoft Research; AI fairness and safety

Microsoft Research senior principal researcher whose work on algorithmic fairness has become standard reference. Contributes to mainstream technical safety work.

Alignment first

Ece Kamar

Microsoft Research AI Frontiers VP

Microsoft Research VP who leads the AI Frontiers lab. Runs mainstream industry research on AI reliability, tool use, and safety.

Alignment first

Thomas Dietterich

Oregon State emeritus; AAAI past president

Distinguished AI researcher and former AAAI president. Has argued AI safety should focus on everyday reliability failures, not extinction scenarios.

Deep technical · Field-leading · Symbolic era

AI skeptic · Near-term harms first

Zeynep Tufekci

Princeton sociologist; NYT columnist

Princeton sociologist and NYT opinion columnist whose work has shaped mainstream understanding of algorithmic influence on democracy and epistemic ecosystems.

Governance first

Nicholas Thompson

CEO of The Atlantic; former Wired editor

CEO of The Atlantic and former Wired editor whose interviews with AI leaders have shaped mainstream understanding of frontier AI. Public commentator on AI and democracy.

Governance first

Martin Ford

Rise of the Robots author; labour economics of AI

Author of the 2015 Rise of the Robots and 2021 Rule of the Robots, arguing AI will displace cognitive labour on a scale requiring fundamental economic policy responses.

Governance first

Matt Mahmoudi

Amnesty International AI researcher

Amnesty International researcher focused on AI-enabled surveillance and human-rights violations. Co-led Amnesty's facial-recognition investigations.

Governance first

Suresh Venkatasubramanian

Brown University professor; former White House OSTP

Brown CS professor and former OSTP deputy who co-led the development of the 2022 AI Bill of Rights blueprint.

Governance first

Zebi Williams

US Digital Service former director

Former deputy administrator of the US Digital Service. Helped design responsible-procurement policies for federal AI purchasing.

Governance first

Emma Strubell

CMU professor; energy cost of AI pioneer

CMU professor whose 2019 paper on the carbon footprint of NLP training was the first widely-cited quantification of AI's environmental cost. Has continued to publish on energy efficiency and sustainability.

Governance first

Rashawn Ray

Brookings Institution; AI and policing

Brookings senior fellow and sociologist whose work on AI in policing has shaped mainstream discussion of algorithmic predictive policing.

Governance first

Michael Page

Anthropic policy team

Anthropic policy team member focused on frontier model governance. Former Future of Life Institute AI policy lead.

RSP-style commitments

Christof Koch

Neuroscientist; Allen Institute for Brain Science

Neuroscientist known for work on the neural correlates of consciousness. Argues AI systems may approach consciousness on an integrated-information-theory basis.

External-domain expert · Field-leading · Symbolic era

AI welfare

Shivon Zilis

Neuralink director; OpenAI board alumna

Canadian technology executive who serves on the Neuralink leadership team. Former OpenAI board observer (2016–2019) and long-time Musk collaborator on AI safety framings.

Existential primacy

Matt Sheehan

Carnegie Endowment China AI fellow

Carnegie Endowment for International Peace senior fellow focused on China's AI development. Author of widely-cited tracking reports on Chinese AI governance.

Governance first

Evan Williams

Twitter co-founder; Medium founder

Twitter and Medium co-founder who has written about his concerns with AI-driven social media and with the direction of the industry.

Policy / meta · Household name · Post-ChatGPT

Governance first

Sajjad Sayyed Hossain

Bangladesh-based AI policy researcher

Representative voice for Bangladesh and Global South AI-governance perspectives. Writes on AI applications in developing economies.

Governance first

Brian Tse

Founder of Concordia AI; China AI safety

Founder of Concordia AI, a Beijing-based research organisation focused on AI safety and Chinese-Western dialogue.

International treaty

Chinmayi Arun

Yale ISP fellow; Indian tech policy scholar

Legal scholar whose work bridges Indian, US, and international tech policy. Has published on AI and content moderation, the digital public sphere, and platform governance.

Governance first

Steven Weber

UC Berkeley political scientist; tech governance

Berkeley political scientist who has written widely on tech governance, including Success of Open Source and recent work on AI and international order.

Governance first

Alex Karp

CEO of Palantir

Palantir CEO who has positioned the company as the main Western defense-AI vendor. Publicly argues the US must win the AI race against China and that AI safety framings risk American defeat.

Policy / meta · Household name · Deep-learning rise

Race to aligned SI

Palmer Luckey

Founder of Anduril; defense AI builder

Oculus founder who founded Anduril to build Western defense AI and autonomous systems. Argues the US and allies must develop AI-enabled weapons before adversaries.

Commentator · Household name · Scaling era

Race to aligned SI

Stanislas Polu

Co-founder of Dust.tt; ex-OpenAI formal math

Former OpenAI researcher who led formal mathematics work (miniF2F, curriculum learning). Co-founded Dust.tt, a platform for building AI agents inside companies.

Techno-optimism

Casey Handmer

Founder of Terraform Industries; AI economics commentator

Former Caltech/JPL physicist who founded Terraform Industries. Writes widely on AI's economic implications and has argued AI will produce a small number of extreme-productivity individuals.

Techno-optimism

Kim Stanley Robinson

Science fiction novelist; The Ministry for the Future

Hugo-winning science fiction writer whose 2020 The Ministry for the Future centres AI systems managing global coordination on climate. Has become a reference framing for hopeful-but-serious AI governance futures.

Governance first

Kevin Collier

NBC News cybersecurity reporter; AI coverage

NBC News cybersecurity reporter whose recent coverage of AI has focused on real-world deployment risks, disinformation, and governance debates.

Governance first

Lisa Gilbert

Public Citizen co-president; AI and democracy

Co-president of Public Citizen, a consumer-rights non-profit. Has pushed AI-accountability policy at state and federal levels.

Governance first

Roshni Rao

Data & Society; AI worker rights

Data & Society researcher focused on AI and labour, including data workers in the Global South and US gig economy.

Governance first

Shannon Vallor

Edinburgh philosopher of technology; 'The AI Mirror'

Edinburgh philosopher whose 2024 book The AI Mirror argues AI reflects and amplifies human values rather than creating new ones. Former senior Googler on responsible AI.

Governance first

Randi Weingarten

President of the American Federation of Teachers

Leads the 1.7-million-member AFT, positioning it as a major labour voice on AI in education. Argues teachers must be in control of how AI is deployed in classrooms.

Governance first

Arvind Krishna

CEO of IBM

IBM CEO who has positioned IBM as the measured enterprise-AI vendor. Supports AI regulation on accountability grounds but opposes rules that hamper business predictability.

Governance first

Dorothy Denning

Georgetown emeritus; cybersecurity pioneer

Georgetown University emeritus professor who helped establish computer security as a field. Has written on AI's implications for cybersecurity and national defense.

Governance first

Peter Wang

Co-founder of Anaconda; scientific Python and AI

Co-founder of Anaconda, the default Python distribution for scientific and AI computing. Public commentator on open-source AI and the politics of the Python ecosystem.

Open source

Laura Weidman Powers

Co-founder of Code2040; diversity in AI

Co-founder of Code2040, a non-profit focused on Black and Latinx representation in technology. Argues AI will reproduce existing inequities unless the AI workforce diversifies.

Governance first

Anna Eshoo

Former US Representative (CA); AI Foundation Model Transparency Act sponsor

Silicon Valley Democrat who co-chaired the Congressional AI Caucus and co-sponsored the AI Foundation Model Transparency Act. Retired from Congress in January 2025.

Governance first

Don Beyer

US Representative (VA); AI Foundation Model Transparency Act sponsor

Virginia Democrat who co-sponsored the AI Foundation Model Transparency Act with Anna Eshoo. Has been studying for a master's in machine learning at George Mason University during his congressional tenure.

Governance first

Liz Fong-Jones

Honeycomb field CTO; tech labour voice

Former Google SRE who became a prominent voice in tech labour organising after the Google walkout. Now Honeycomb Field CTO. Public voice on AI worker rights.

Governance first

Sean M. Connor

Seattle AI and governance law scholar

Director of the Cascadia Innovation Corridor; former UW law professor specialising in technology governance. Advocate for regional technology regulatory capacity.

Governance first

Dan Jurafsky

Stanford NLP professor; textbook author

Stanford NLP professor whose Speech and Language Processing textbook has been the canonical NLP reference for two decades. Has written on AI's impact on language and discourse.

Alignment first

Lina Dencik

Cardiff University; data-justice researcher

Cardiff University professor and co-founder of the Data Justice Lab. Scholar of data-driven surveillance and algorithmic injustice.

Governance first

Kim Crayton

Anti-racism in tech strategist

Strategist who has pushed anti-racism frameworks in tech companies, including AI ethics teams. Public voice for structural framings of AI governance.

Governance first

Chinasa T. Okolo

Brookings fellow; African Union AI strategy contributor

Brookings technology fellow who worked with the African Union on developing the AU-AI Continental Strategy. Named one of Time's 100 most influential people in AI in 2024.

Governance first

Juan Ortiz Freuler

Berkman Klein affiliate; Latin America AI policy

Argentine researcher and Berkman Klein affiliate focused on digital platforms, AI, and Latin American governance. Works on comparative AI governance across the region.

Governance first

Renata Ávila

Open Future CEO; digital rights lawyer

Guatemalan human-rights lawyer who serves as CEO of Open Future, a European think tank on the digital commons. Works on digital sovereignty for emerging economies.

Democratic mandate

Paola Ricaurte Quijano

Tec de Monterrey; data-centric epistemic justice

Mexican researcher whose work on 'data epistemologies' has influenced decolonial AI ethics frameworks. Co-founder of the Tierra Común network.

Governance first

Catherine Aiken

CSET researcher; China AI talent and capability

Georgetown CSET researcher focused on China's AI research ecosystem. Has contributed to US understanding of Chinese AI talent and capability developments.

Compute governance

Ife Adebara

African NLP and LLM researcher

Computational linguist whose research focuses on African languages in LLMs. Argues current LLMs systematically fail African users.

Governance first

Wafa Ben-Hassine

Omidyar Network; human rights and tech advisor

Former human-rights lawyer and now principal at the Omidyar Network focused on responsible technology. Public voice on AI and democracy, especially in MENA.

Governance first

Susan Schneider

FAU; 'Artificial You' author; machine consciousness

Director of the Center for the Future Mind at Florida Atlantic University; author of 'Artificial You' (2019). Has held NASA and Library of Congress chairs in technology and ethics.

AI welfare

Margaret Boden

Sussex emerita; cognitive science of AI

Emerita research professor of cognitive science at the University of Sussex; one of the founding scholars of cognitive science. Author of 'AI: Its Nature and Future' (2016) and 'Mind as Machine' (2006).

AI skeptic

Nita A. Farahany

Duke Law; 'The Battle for Your Brain'

Professor of law and philosophy at Duke; author of 'The Battle for Your Brain' (2023) on neurotechnology, AI, and cognitive liberty. Member of the National Advisory Council on Bioethics.

Near-term harms first

David Eagleman

Stanford neuroscientist; Neosensory founder

Adjunct professor of neuroscience at Stanford; founder of Neosensory. Author of 'Livewired' (2020). Public communicator on neuroplasticity and human-machine integration.

Cyborg/merge

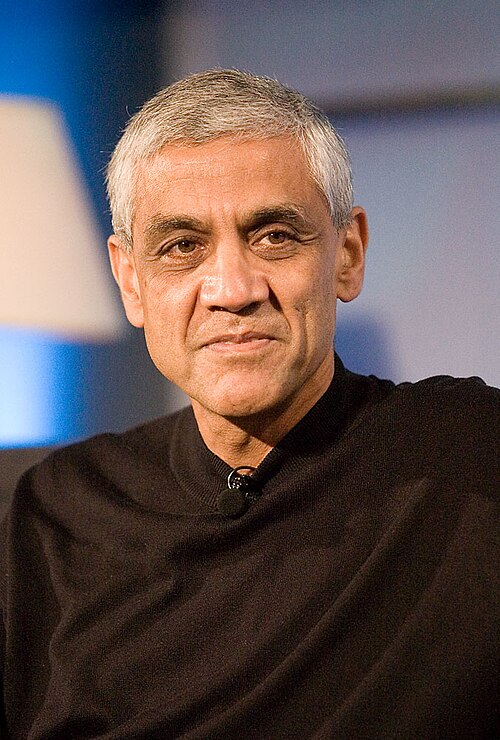

Naval Ravikant

AngelList co-founder; tech philosopher

Co-founder of AngelList; widely-followed angel investor and tech aphorist. Public commentator on AI as a tool for individual leverage rather than concentrated power.

Techno-optimism

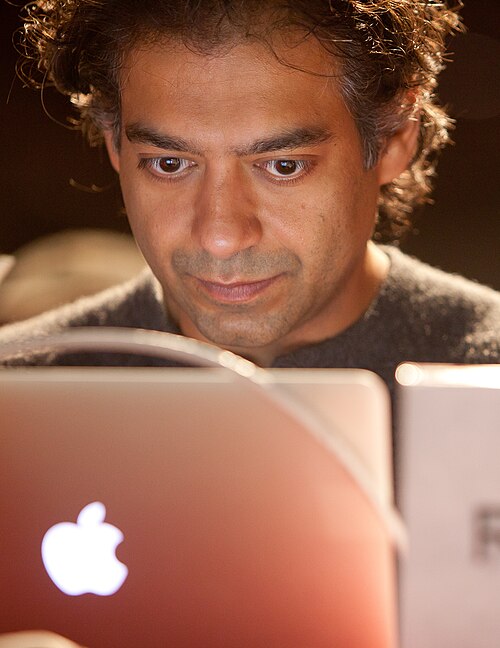

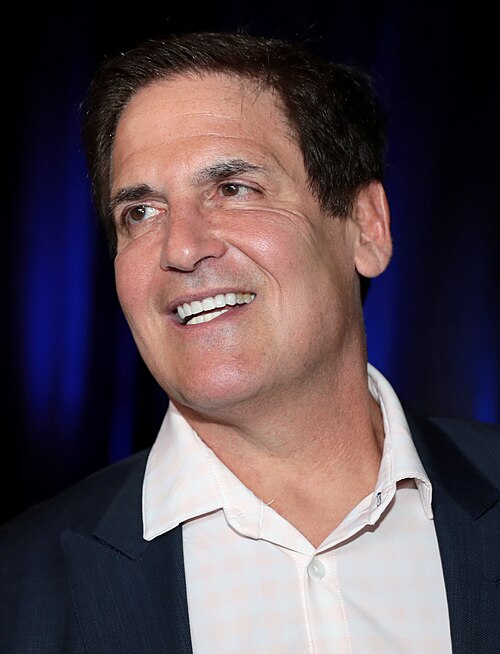

Mark Cuban

Shark Tank investor; Dallas Mavericks owner

Tech entrepreneur, Shark Tank investor, and former owner of the Dallas Mavericks. Frequent commentator on business implications of AI; holds large positions in AI-adjacent companies.

Techno-optimism

Tristan Hume

Anthropic mechanistic interpretability

Anthropic researcher whose work on dictionary-learning sparse autoencoders for Claude was a landmark in scaling mechanistic interpretability beyond toy models.

Interpretability bet

Sebastian Ruder

Cohere; ex-Google; NLP transfer learning

Researcher at Cohere; previously at Google. His 2018 essay 'NLP's ImageNet moment has arrived' coined a widely used framing for transfer learning in NLP.

Alignment first

Leigh Marie Braswell

Founders Fund partner; AI investor

Investor at Founders Fund focused on AI; previously a software engineer. Frequent commentator on AI infrastructure and its market structure.

Techno-optimism

Raphaël Millière

Macquarie University philosopher of cognitive science

Macquarie University Lecturer in the Philosophy of AI; previously Presidential Scholar in Society and Neuroscience at Columbia. Researches the philosophical implications of large language models for theories of mind, meaning, and reasoning.

AI skeptic

Max Bartolo

Cohere; LLM evaluation researcher

Researcher at Cohere; previously a UCL DeepMind PhD student. Co-developed adversarial-training and evaluation methods for question-answering and instruction-following.

Evals-driven

Stéphanie Hare

Tech researcher; 'Technology Is Not Neutral' author

Independent researcher and author of 'Technology Is Not Neutral' (2022). Frequent BBC and Financial Times contributor on tech ethics.

Near-term harms first

David Holz

Midjourney founder

Founder of Midjourney, the image-generation service that grew rapidly through Discord-first distribution. Previously co-founded Leap Motion. Vocal proponent of AI as creative augmentation rather than replacement.

Techno-optimism

Joshua Browder

DoNotPay CEO; legal-tech AI

Founder and CEO of DoNotPay, a consumer legal-services AI company; widely covered for plans to deploy an AI lawyer in court (eventually withdrawn under bar complaints).

Techno-optimism

Bryan Johnson

Blueprint founder; AI-driven longevity

Founder of Kernel (neural interfaces) and of Blueprint (the personal-data-driven anti-aging protocol). Frequent commentator on AI as a means of human optimization and longevity.

Techno-optimism

David Baszucki

Roblox CEO; co-founder

Co-founder and CEO of Roblox; long-running advocate of user-generated content as the dominant form of digital experience and of AI as the tool that makes UGC accessible to everyone.

Techno-optimism

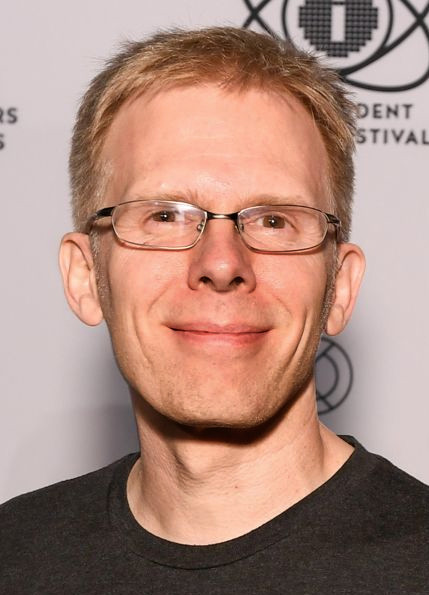

John Carmack

p 5% · Keen Technologies founder; ex-Meta CTO

Co-founder of id Software (Doom, Quake) and former CTO of Oculus VR / Meta Reality Labs. In 2022 left Meta to found Keen Technologies, focused on AGI research with a reportedly small team.

Acceleration

Sam Harris

Making Sense podcast; neuroscientist and philosopher

Author and host of the Making Sense podcast; neuroscientist and philosopher. His 2016 TED talk 'Can we build AI without losing control over it?' was an early mainstream introduction to AI x-risk arguments.

Existential primacy

Ilan Gur

ARIA UK CEO; ex-ARPA-E

CEO of the UK Advanced Research and Invention Agency (ARIA), launched in 2023 to fund high-risk, high-reward research. Previously a program director at U.S. ARPA-E.

Differential technology

David D. Cox

MIT-IBM Watson AI Lab director

Director of the MIT-IBM Watson AI Lab; previously a Harvard professor of molecular and cellular biology. Bridge figure between academic and corporate AI research.

Alignment first

Neal Stephenson

Sci-fi novelist; Snow Crash, Cryptonomicon, Anathem, Termination Shock

Sci-fi novelist whose books have repeatedly anticipated technical developments (the metaverse, cryptocurrency); recent novels and essays grapple directly with AI's social effects.

Near-term harms first

Liu Cixin

Sci-fi novelist; Three-Body Problem trilogy

Chinese science fiction author whose 'Three-Body Problem' trilogy has become globally influential; the 'dark forest' theory has shaped how some readers think about AI civilizations and existential risk.

Existential primacy

Arati Prabhakar

White House OSTP director (2022–2025)

Director of the White House Office of Science and Technology Policy (OSTP) and Assistant to the President for Science and Technology under the Biden administration. Previously DARPA director (2012–2017) and NIST director (1993–1997).

Evals-driven

Paul Scharre

CNAS executive VP; 'Army of None', 'Four Battlegrounds' author

Executive Vice President at the Center for a New American Security (CNAS); author of 'Army of None' (2018) on autonomous weapons and 'Four Battlegrounds' (2023) on AI in great-power competition.

Compute governance

Elsa Kania

CNAS adjunct senior fellow; China AI specialist

Adjunct senior fellow at CNAS specializing in Chinese military innovation; cited extensively in U.S. policy debates about Chinese AI development.

Compute governance

Jon Bateman

Carnegie senior fellow; AI and cyber strategy

Senior fellow at the Carnegie Endowment for International Peace specializing in technology and international affairs. Former U.S. intelligence official.

International treaty

Steven Levy

Wired editor at large; long-time tech historian

Editor at large at Wired and author of multiple histories of computing including 'Hackers' (1984), 'In the Plex' (2011) on Google, and 'Facebook: The Inside Story' (2020). Long-running interview access at frontier labs.

Near-term harms first

Hal Varian

UC Berkeley emeritus; Google chief economist emeritus

Emeritus chief economist at Google (2002–2023) and emeritus Distinguished Professor of Economics at UC Berkeley. Pioneer of digital-platform economics; co-author of 'Information Rules' (1999).

Techno-optimism

Trae Stephens

Anduril co-founder; Founders Fund partner

Co-founder of Anduril Industries (defense AI hardware) and partner at Founders Fund. Frequent commentator on the integration of AI into Western defense capabilities.

Military primacy

Brian Schimpf

Anduril Industries CEO

Co-founder and CEO of Anduril Industries since 2017. Helped build the company into a major defense technology player integrating autonomous systems with command-and-control software.

Military primacy

Chris Hughes

Facebook co-founder turned antitrust advocate

Co-founder of Facebook; co-chair of the Economic Security Project. In 2019 publicly called for breaking up Facebook; has since extended antitrust framing to OpenAI and AI-cloud concentration.

Antitrust primacy

Katherine Boyle

Andreessen Horowitz; American Dynamism

General partner at Andreessen Horowitz leading the firm's American Dynamism practice (defense, aerospace, public-interest tech). Previously a journalist at the Washington Post.

Military primacy

Virginia Dignum

Umeå University; UN AI Advisory Body member

Professor of responsible AI at Umeå University; author of 'Responsible Artificial Intelligence' (2019); member of the UN AI Advisory Body. Long-standing voice in European AI ethics.

Governance first

Ricardo Baeza-Yates

Northeastern; Institute for Experiential AI

Director of research at the Institute for Experiential AI at Northeastern University; previously a VP at Yahoo Research. Long-time voice on responsible AI auditing and bias detection.

Governance first

Nello Cristianini

Bath University; ML pioneer; 'Shortcut' author

Professor of artificial intelligence at the University of Bath; co-author of foundational textbooks on Support Vector Machines and kernel methods. Author of 'The Shortcut: Why Intelligent Machines Do Not Think Like Us' (2023).

AI skeptic

John Naughton

Cambridge / Open University; Observer technology columnist

Long-time Observer (UK) technology columnist; emeritus professor of public understanding of technology at the Open University. Co-director of Cambridge's Minderoo Centre for Technology and Democracy.

Antitrust primacy

Hung-yi Lee

National Taiwan University; speech and LLM researcher

Professor at National Taiwan University; widely-watched online instructor for ML/LLMs in Mandarin. Has been a key public communicator of LLM concepts to Chinese-speaking audiences.

Alignment first

Pelonomi Moiloa

Lelapa AI co-founder; African languages NLP

Co-founder and CEO of Lelapa AI, a South African AI lab building NLP models for African languages including isiZulu, Sesotho, and Yoruba. Vocal advocate for region-led AI infrastructure.

Sovereign AI

Vukosi Marivate

Univ Pretoria; African NLP / Masakhane

Associate professor at the University of Pretoria; co-founder of the Masakhane research community for African NLP. Long-running advocate for low-resource language model development.

Open source

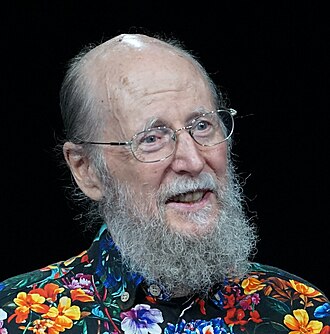

Toby Walsh

UNSW Sydney; AI safety advocate

Scientia Professor of AI at UNSW Sydney; chief scientist of the UNSW AI Institute. Long-standing campaigner against autonomous weapons and a leading public voice for AI regulation in Australia.

International treaty

Genevieve Bell

ANU; vice-chancellor; cultural anthropologist

Vice-Chancellor of the Australian National University since 2024; previously a senior fellow and VP at Intel, where she founded the Anthropology and User Experience research group. Distinctive cultural-anthropological perspective on AI.

Near-term harms first

Brian Tomasik

Foundational Research Institute co-founder; suffering-focused ethics

Co-founder of the Foundational Research Institute (now Center on Long-Term Risk); long-standing essayist on suffering-focused ethics and digital sentience. His writing has shaped EA-adjacent positions on AI welfare.

AI welfare

Anna Salamon

CFAR co-founder; rationality and existential risk

Co-founder of the Center for Applied Rationality (CFAR); long-time figure in rationalist and AI-risk circles. Helped train many current alignment researchers through CFAR workshops in the 2010s.

Alignment first

Nick Beckstead

Future Fund co-founder; FHI alumnus

Philosopher and former Future Fund co-founder. Author of 'On the Overwhelming Importance of Shaping the Far Future' (2013), one of the foundational texts of academic longtermism.

EA framing

Paul Bloom

Yale and University of Toronto; cognitive science of AI moral status

Professor of psychology at Yale and University of Toronto; author of 'Against Empathy' and 'Psych'. Public commentator on AI's moral status, deception, and our intuitive responses to artificial minds.

AI skeptic

Simon Willison

Independent developer; co-creator of Django; LLM tools

Co-creator of the Django web framework and founder of Datasette. His blog at simonwillison.net has been one of the most-read developer-oriented sources of LLM analysis since GPT-3, with extensive practical attention to prompt injection and capability evaluation.

Security mindset

Riley Goodside

Scale AI; prompt engineering pioneer

Researcher at Scale AI; widely credited as one of the first practitioners to systematically explore the boundaries of LLM behaviour through prompting. Produced many of the foundational examples of prompt injection.

Security mindset

George Hotz

Comma.ai / tinygrad founder

Founder of Comma.ai (open-source autonomy) and tinygrad (compact deep-learning framework). Self-taught hacker famous for jailbreaking the iPhone and PS3; vocal opponent of frontier-AI doomerism.

Techno-optimism

Lucius Bushnaq

Apollo Research; mech interp

Senior researcher at Apollo Research; works on mechanistic interpretability and on detecting deceptive cognition in language models.

Interpretability bet

Rob Wiblin

80,000 Hours podcast host

Co-founder of 80,000 Hours and host of its podcast. Has interviewed many of the people on this list at unusual length, with a recent emphasis on AI risk and policy.

Alignment first

Benjamin Todd

Co-founder of 80,000 Hours

Co-founder of 80,000 Hours, the EA career-advice organization that has placed hundreds of researchers into AI safety roles. Author of '80,000 Hours: Find a fulfilling career that does good'.

EA framing

Holly Elmore

PauseAI US executive director

Executive director of PauseAI US; previously a researcher at the Centre for Effective Altruism. Visible organizer of in-person protests and policy advocacy for an enforced pause on frontier training.

Pause

Spencer Greenberg

Clearer Thinking founder; rationality researcher

Mathematician and entrepreneur; founded Clearer Thinking, a behavioural research and rationality-tools project. Hosts the Clearer Thinking podcast where AI risk is a recurring theme.

Evals-driven

Gus Docker

Future of Life Institute podcast host

Host of the Future of Life Institute Podcast; long-form interviews with AI safety researchers, policy figures, and adjacent thinkers. Influential conduit for technical alignment discourse.

Alignment first

Tobias Lütke

Shopify CEO; AI-first internal mandate

Founder and CEO of Shopify. In April 2025 issued an internal memo stating that 'reflexive AI usage is now a baseline expectation' for all Shopify employees, one of the most explicit AI-first labor policies from a major company.

Commentator · Household name · Post-ChatGPT

Techno-optimism

Sam Hammond

Foundation for American Innovation senior economist

Senior economist at the Foundation for American Innovation (formerly Lincoln Network); writes the 'Second Best' Substack on technology and political economy. Argues for an aggressive U.S. industrial policy on AI compute.

Centralised project · Governance first

Sal Khan

Khan Academy founder; AI tutor advocate

Founder of Khan Academy. In 2023 launched Khanmigo, an AI tutor based on GPT-4. Advocates publicly that AI tutoring done well can produce Bloom's '2-sigma' learning gains for every student.

Techno-optimism

Eric Topol

Scripps cardiologist; AI in medicine pioneer

Founder and director of the Scripps Research Translational Institute; cardiologist and author of 'Deep Medicine' (2019). Long-standing voice on how AI should reshape clinical practice.

Techno-optimism

Holly Herndon

Musician; 'Holly+' digital twin and Spawning

Composer and electronic musician whose work pioneered AI-assisted music; co-founded Spawning, which built tools for artists to opt out of generative AI training datasets.

Near-term harms first

David Rolnick

McGill / Mila; Climate Change AI co-founder

McGill assistant professor and Mila core faculty; co-founder of Climate Change AI. Co-author of the influential 'Tackling Climate Change with Machine Learning' (2019) paper.

Differential technology

Daniel Susskind

Oxford / King's College London; AI and work economist

Oxford economist; senior research associate at King's College London. Author of 'A World Without Work' (2020) and co-author with his father, Richard Susskind, of 'The Future of the Professions' (2015).

Near-term harms first

Anton Korinek

UVA economist; AI and inequality

University of Virginia professor of economics; senior fellow at Brookings. Has produced influential work on AI's macroeconomic implications and on transformative AI scenarios.

Near-term harms first

Richard Susskind

Tech-and-law thinker; 'Future of the Professions'

British author and IT advisor to the Lord Chief Justice of England and Wales; pioneer of 'online courts' and frequent speaker on AI's effect on legal services.

Techno-optimism

Nathan Lambert

Allen Institute for AI; 'Interconnects' newsletter

Senior research scientist at the Allen Institute for AI (Ai2) and author of the widely read 'Interconnects' newsletter. Co-leads Ai2's open-source post-training research and publishes detailed analyses of frontier-lab releases.

Open source

Ali Farhadi

Allen Institute for AI CEO

CEO of the Allen Institute for AI since 2023; previously a senior director at Apple. Computer-vision researcher (YOLO; UW) and longtime advocate of open-source frontier models.

Open source

Chip Huyen

Author of 'Designing Machine Learning Systems'

ML engineer and author whose work on production ML systems and LLM evaluation has shaped industry practice. Previously co-founded Claypot AI; ex-NVIDIA, ex-Snorkel.

Evals-driven

Ronen Eldan

Microsoft Research; 'TinyStories' author; mathematician

Microsoft Research mathematician; co-author of 'TinyStories' (2023), which showed that small language models trained on synthetic children's stories can produce coherent text, reframing what 'small' models can do.

AI skeptic

Henry Kissinger

Former U.S. Secretary of State; co-author 'The Age of AI'

Former U.S. Secretary of State (1973–77); died 2023. Co-authored 'The Age of AI: And Our Human Future' (2021) with Eric Schmidt and Daniel Huttenlocher, framing AI as a category-shifting transformation in the structure of knowledge.

International treaty

Mike Knoop

Co-founder ARC Prize; ex-Zapier

Co-founder of Zapier and, with François Chollet, of the 'ARC Prize', a public competition built around the ARC-AGI benchmark to reward systems that demonstrate genuine generalization rather than scaled pattern matching.

AI skeptic

Yuntao Bai

Anthropic; Constitutional AI co-author

Anthropic researcher; co-lead author of the Constitutional AI paper, which introduced principle-guided training that uses reinforcement learning from AI feedback (RLAIF) to achieve harmlessness.

Constitutional AI

Trenton Bricken

Anthropic mechanistic interpretability

Anthropic researcher whose work on sparse autoencoders, attention dynamics, and dictionary learning has been central to the mechanistic interpretability program.

Interpretability bet

Catherine Olsson

Anthropic; ex-OpenAI; AI safety community organizer

Anthropic researcher and longtime fixture of the AI safety research community. Co-author of OpenAI's 'AI and Compute' analysis. Was a longtime safety advocate at Google Brain and Open Philanthropy.

Alignment first

Adam Jermyn

Anthropic; previously astrophysics

Anthropic researcher whose path from theoretical astrophysics to AI safety is widely cited as a model for cross-field switching. Researches deceptive alignment risks.

Alignment first

Lukas Finnveden

Open Philanthropy; AI safety analyst

Open Philanthropy researcher whose detailed analyses of AI takeoff dynamics, the exhaustion of training data, and alignment training methods have been widely cited in EA circles.

Alignment first

Christopher Manning

Stanford NLP director; foundation models

Stanford professor of computer science and linguistics; director of the Stanford AI Lab. Co-led the Center for Research on Foundation Models. Co-author of 'Foundations of Statistical Natural Language Processing' (1999, with Hinrich Schütze) and 'Introduction to Information Retrieval' (2008).

Alignment first

Garry Kasparov

Former world chess champion; 'Deep Thinking' author

Former world chess champion; in 1997 lost a match against IBM's Deep Blue, an inflection moment for public perception of AI. Author of 'Deep Thinking: Where Machine Intelligence Ends and Human Creativity Begins' (2017).

Techno-optimism

Hannah Fry

Cambridge mathematician; 'Hello World' author

Mathematician and broadcaster; Cambridge professor (from 2024) and author of 'Hello World: How to be Human in the Age of the Machine' (2018). Frequent BBC presenter on algorithms and decision-making.

Near-term harms first

Tim Urban

Wait But Why; viral AI explainer

Author of the Wait But Why blog. His 2015 two-part series on superintelligence reached an order-of-magnitude wider audience than any prior writing on AI safety and shaped the public picture for years.

Existential primacy

Lila Ibrahim

DeepMind COO; AI ethics governance

Chief Operating Officer of Google DeepMind. Previously president of Coursera. Helped institutionalize DeepMind's Ethics & Society and AI Safety teams.

RSP-style commitments

Sam Charrington

Host of The TWIML AI Podcast

Founder and host of TWIML (This Week in Machine Learning & AI), one of the longest-running independent technical AI podcasts. Has interviewed hundreds of researchers from across the alignment, capability, and applications spectrum.

Evals-driven

Tomek Korbak

UK AI Security Institute; ex-Anthropic; pretraining alignment

Researcher at the UK AI Security Institute (AISI); previously at Anthropic. Doctoral work on pretraining-time alignment via behaviour-cloning and conditional control.

Alignment first

Fabien Roger

Anthropic alignment researcher; control evaluations

Anthropic alignment researcher whose work on AI control, designing evaluations to test whether oversight holds even when models are actively trying to subvert it, has been widely cited in safety circles.

Evals-driven

Marc Raibert

Boston Dynamics founder; AI Institute executive director

Founder of Boston Dynamics and now executive director of the AI Institute (Hyundai-backed). Veteran roboticist with views shaped by decades of building physical machines that have to behave in the real world.

AI skeptic

Anjney Midha

Andreessen Horowitz general partner; AI investor

General partner at Andreessen Horowitz focused on AI infrastructure and applications. Previously founded Ubiquity6 (acquired by Niantic). Board member of Mistral AI and others.

Open source

Ozzie Gooen

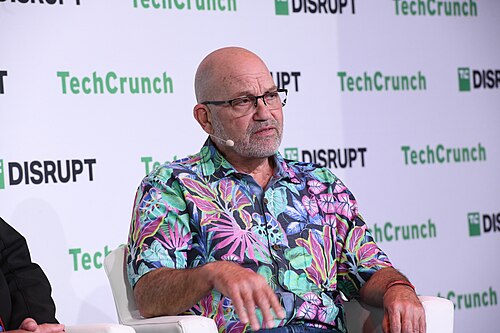

Quantified Uncertainty Research Institute founder