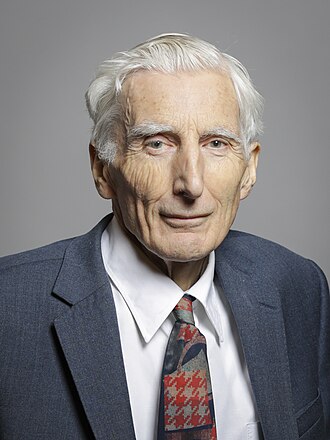

Martin Rees

Astronomer Royal; CSER co-founder

Former Astronomer Royal who co-founded the Centre for the Study of Existential Risk at Cambridge with Huw Price and Jaan Tallinn. Frames AI alongside bioengineering as the most serious civilisational-scale risks this century.

Profile

expertise

External-domain expert

Recognised expert outside AI (philosophy, economics, biology, journalism) who weighs in on AI consequences from that vantage.

UK Astronomer Royal. Co-founded Cambridge Centre for the Study of Existential Risk. Cosmologist; engages AI through x-risk lens.

recognition

Household name

Name recognition outside the AI/CS community. Is featured by mainstream press, has a Wikipedia page in many languages, has published a bestseller, or holds a position the lay public knows.

Member of the House of Lords; mainstream UK press regular.

vintage

Pre-deep-learning

Active before AlexNet. The existential-risk frame matures (FHI, OpenPhil, EA). Public AI commentary still rare; deep learning not yet dominant.

CSER co-founded ~2012. Astronomer Royal long before. Pre-deep-learning x-risk frame.

Hand-classified. See the board for the criteria and the full grid.

Strategy positions

Existential primacy: endorses

Extinction/disempowerment risk overrides ordinary cost-benefit.

Argues AI is one of a small set of 21st-century technologies with genuine civilisational-scale downside risk.

“Since we can't understand what's going on inside them, we have to be cautious about handing over power to them.”

Closest strategy neighbours

By Jaccard overlap.

Other people whose strategy tags overlap with Martin Rees's. Overlap is on tag identity, not stance; opposites can show up if they reference the same tags.
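A minimal sketch of how an overlap score of this kind could be computed, assuming each person's record is a mapping from strategy tag to stance. The function name, the tag slug, and the second record are hypothetical illustrations and are not taken from the site's actual data or code.

```python
def jaccard(a: set, b: set) -> float:
    """Jaccard overlap |A intersect B| / |A union B|; 0.0 when both sets are empty."""
    union = a | b
    if not union:
        return 0.0
    return len(a & b) / len(union)


# Hypothetical records: strategy tag -> stance.
rees = {"existential-primacy": "endorses"}
critic = {"existential-primacy": "rejects"}  # hypothetical opposing record

# Only the tag names (the dict keys) are compared, so the stance is ignored.
score = jaccard(set(rees), set(critic))
print(score)
```

Because only tag identity enters the score, the two records above overlap fully even though their stances are opposed, which is why opposites can appear among the closest neighbours.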

Record last updated 2026-04-24.