person

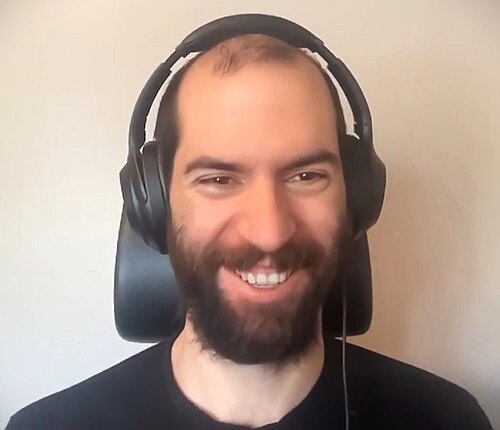

Nicholas Carlini

Anthropic adversarial-ML researcher; ex-Google Brain

Adversarial machine learning researcher, widely cited for work on memorization, jailbreak, and privacy attacks on large models. Argues that current LLM safety is unusually fragile compared with mature security fields.

Current: Member of Technical Staff, Anthropic

Past: Research Scientist, Google Brain / DeepMind (2018–2024)

Strategy positions

Security mindset: endorses

Treat safety as adversarial security; assume systems break under attack.

Argues ML systems are routinely broken by simple attacks and that the field treats safety claims with insufficient adversarial scrutiny.

I think the difficulty of attacking machine learning models is grossly overestimated, and the difficulty of defending them grossly underestimated.

Closest strategy neighbours

By Jaccard overlap

Other people whose strategy tags overlap with Nicholas Carlini's. Overlap is on tag identity, not stance; opposites can show up if they reference the same tags.
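For readers unfamiliar with the measure, here is a minimal sketch of how a Jaccard overlap on tag sets could be computed. It is an illustration only, not this site's actual implementation: the function names, the tag identifiers, and the people "Person A" and "Person B" are all hypothetical. Because the sets hold tag identities and nothing about stance, two people who take opposite positions on the same tag still score as close neighbours.

```python
def jaccard(a: set[str], b: set[str]) -> float:
    """Jaccard overlap |A & B| / |A | B|; defined as 0.0 when both sets are empty."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def neighbours(name: str, tags: dict[str, set[str]]) -> list[tuple[str, float]]:
    """Rank everyone except `name` by Jaccard overlap with `name`'s tag set."""
    target = tags[name]
    scored = [(other, jaccard(target, t)) for other, t in tags.items() if other != name]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# Hypothetical data: tag identifiers and the other two people are made up.
# Stance (endorses / opposes) is deliberately not part of the set.
tags = {
    "Nicholas Carlini": {"security-mindset"},
    "Person A": {"security-mindset", "example-tag-x"},
    "Person B": {"example-tag-y"},
}

ranking = neighbours("Nicholas Carlini", tags)
```

In this sketch "Person A" ranks first because one of the two distinct tags is shared, whether or not Person A endorses it.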

Record last updated 2026-04-25.