person

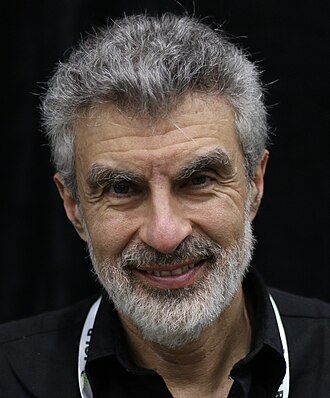

Yoshua Bengio

Turing Award laureate; scientific chair of the International AI Safety Report

Switched from deep-learning capability research to full-time AI safety work after GPT-4. Testified to the US Senate in 2023 about loss-of-control risk and now leads the international scientific AI safety report. Supports compute governance, liability, and a conditional pause.

Profile

expertise

Deep technical

Sustained peer-reviewed contribution to ML, alignment, interpretability, or safety techniques. Could review a frontier paper.

Turing Award 2018 with Hinton and LeCun. Most-cited computer scientist in 2022. Co-author of foundational deep-learning textbook. Now leads International AI Safety Report and runs Mila.

recognition

Household name

Name recognition outside the AI/CS community. Featured by mainstream press, a Wikipedia page in many languages, a published bestseller, or holds a position the lay public knows.

Public face of the AI safety mainstream. Repeated NYT, FT, BBC coverage. Convened by UN Secretary-General; commissioned to lead the International AI Safety Report.

vintage

Symbolic era

Career started in the GOFAI / expert-systems / early-rationalist period. Vinge's 1993 Singularity, MIRI founded 2000, Bostrom and Yudkowsky writing.

PhD 1991 (McGill). Worked through the symbolic→connectionist transition. Co-creator of the deep-learning era; his foundational priors precede it.

Hand-classified. See the board for the criteria and the full grid.

p(doom)

- 20% · 2023-07-15

Definition used: Probability of AI catastrophe (reported in ABC News piece).

What's your p(doom)? AI researchers worry catastrophe · ABC News

Timelines

Human-level AI 2028–2043

stated 2023-07-25

My testimony in front of the U.S. Senate · US Senate

Strategy positions

Existential primacy · endorses

Extinction/disempowerment risk overrides ordinary cost-benefit

Signed the CAIS Statement on AI Risk and argues loss-of-control risk is serious and unresolved.

“No one currently knows how to create advanced AI that reliably follows the intent of its developers.”

Context: Written testimony to the US Senate Judiciary Subcommittee on Privacy, Technology and the Law.

“There is a risk of losing control over AI with powerful capabilities, a risk we have yet to learn how to mitigate. If those in control of AI do not understand and manage this risk, it could jeopardize all of humanity.”

Governance first · endorses

Lead with regulation, treaties, liability regimes

Pushes for regulatory regimes, mandatory safety evaluations, and international coordination; has floated a 'humanity defense organization'.

“We are creating entities that may be smarter than us, pursuing goals that may not align with ours. That's the risk.”

Context: TED2025 talk 'The Catastrophic Risks of AI, and a Safer Path'.

“We need a humanity defense organization that is looking out specifically for existential risk from AI.”

Closest strategy neighbours

by Jaccard overlap

Other people whose strategy tags overlap with Yoshua Bengio's. Overlap is on tag identity, not stance; opposites can show up if they reference the same tags.
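The page does not say exactly how the overlap is computed. As a rough sketch, assuming it is the standard Jaccard index (shared tags divided by all tags used by either person) taken over tag identifiers only, the ranking might look like the following. The function names, the tag slugs, and the "Person A/B/C" records are hypothetical and purely illustrative; only the two tag names for Yoshua Bengio come from this page.

```python
def jaccard(a, b):
    """Jaccard index |a ∩ b| / |a ∪ b| of two sets; 0.0 if both are empty."""
    union = a | b
    if not union:
        return 0.0
    return len(a & b) / len(union)


def closest_neighbours(target, profiles, k=3):
    """Rank other profiles by Jaccard overlap of their tag identifiers.

    `profiles` maps a name to a dict of {tag: stance}. Only the dict keys
    (tag identity) are compared; the stance values are deliberately ignored.
    """
    target_tags = set(profiles[target])
    scores = [
        (name, jaccard(target_tags, set(tags)))
        for name, tags in profiles.items()
        if name != target
    ]
    # Highest overlap first; ties broken alphabetically for a stable order.
    return sorted(scores, key=lambda pair: (-pair[1], pair[0]))[:k]


# Hypothetical records: tag slugs and the Person A/B/C entries are made up.
profiles = {
    "Yoshua Bengio": {"existential-primacy": "endorses",
                      "governance-first": "endorses"},
    "Person A": {"existential-primacy": "rejects",
                 "governance-first": "rejects"},
    "Person B": {"governance-first": "endorses",
                 "open-weights": "endorses"},
    "Person C": {"acceleration": "endorses"},
}

for name, score in closest_neighbours("Yoshua Bengio", profiles):
    print(f"{name}: {score:.2f}")
```

In this made-up data, Person A rejects both tags yet ranks first with an overlap of 1.0, which illustrates why opposites can appear among the closest neighbours when stance is not part of the comparison.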

Record last updated 2026-04-24.