person

Sam Altman

CEO of OpenAI

Argues government intervention is necessary to mitigate risks from increasingly powerful AI, while running the most visible frontier lab and framing AI as 'the most important technology in human history'. Signatory to the Statement on AI Risk.

Profile

expertise

Policy / meta

Specialises in AI policy, regulation, governance, philanthropy, or movement strategy. Reads the technical literature but does not produce it.

CEO of OpenAI. Not a research scientist; he sets strategy, capital, and policy direction. Deep operational knowledge of frontier deployment and lab incentives.

recognition

Household name

Name recognition outside the AI/CS community. Featured by mainstream press, a Wikipedia page in many languages, a published bestseller, or holds a position the lay public knows.

Defining public-face figure of the AI era. Covered globally; testified before the US Senate; involved in Saudi sovereign-fund and trillion-dollar capital negotiations.

vintage

Scaling era

Worldview formed during GPT-2/3, scaling laws, Anthropic's founding. Pre-ChatGPT but post-deep-learning. The 'scale is all you need' debate is live.

Co-founded OpenAI in 2015. His framing of AI as a strategic-capital problem dates from this era; before OpenAI he was YC president, not an AI figure.

Hand-classified. See the board for the criteria and the full grid.

Strategy positions

Governance first · endorses

Lead with regulation, treaties, liability regimes

Explicitly asked the Senate to regulate AI including via licensing frontier models and creating an oversight agency.

“My worst fears are that we cause significant, we, the field, the technology, the industry, cause significant harm to the world. I think if this technology goes wrong, it can go quite wrong.”

Context: US Senate Judiciary Subcommittee on Privacy, Technology and the Law, oversight of AI hearing.

“I think if this technology goes wrong, it can go quite wrong, and we want to be vocal about that.”

Context: Lex Fridman Podcast #367, widely viewed framing of Altman's public safety stance.

Existential primacy · endorses

Extinction/disempowerment risk overrides ordinary cost-benefit

Signatory to the Statement on AI Risk.

“Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war.”

Closest strategy neighbours

by Jaccard overlap

Other people whose strategy tags overlap with Sam Altman's. Overlap is on tag identity, not stance; opposites can show up if they reference the same tags.

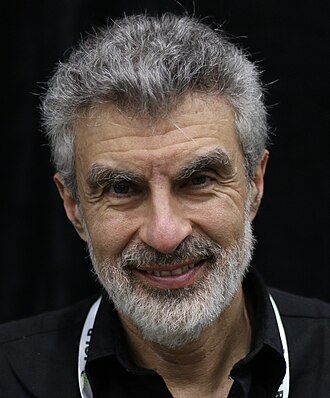

Yoshua Bengio

shared 2 · J=1.00

Turing Award laureate; scientific chair of the International AI Safety Report
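The "shared 2 · J=1.00" figure above can be reproduced with a minimal sketch of Jaccard overlap on tag sets, i.e. the size of the intersection divided by the size of the union. The tag slugs and the `jaccard` helper below are hypothetical names chosen for illustration, and the second tag set is inferred from the displayed score rather than taken from the site's data. Stance (endorses or opposes) is deliberately left out, matching the note that overlap is on tag identity only.

```python
def jaccard(a: set[str], b: set[str]) -> float:
    """Jaccard overlap: |A ∩ B| / |A ∪ B|, defined here as 0.0 for two empty sets."""
    union = a | b
    if not union:
        return 0.0
    return len(a & b) / len(union)


# Hypothetical tag slugs for the two strategy positions listed on this profile.
altman = {"governance-first", "existential-primacy"}
# Assumed: two shared tags with J=1.00 implies the same two tags and no others.
bengio = {"governance-first", "existential-primacy"}

shared = len(altman & bengio)
print(f"shared {shared} · J={jaccard(altman, bengio):.2f}")
```

Because only tag identity enters the sets, two people who take opposite stances on the same tags would still score 1.00 under this sketch.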

Record last updated 2026-04-24.