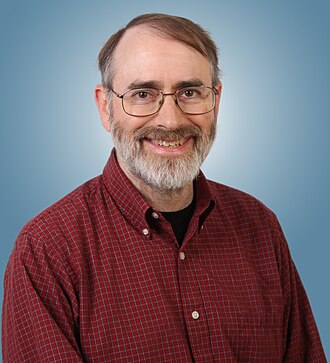

person

Thomas Dietterich

Oregon State emeritus; AAAI past president

Distinguished AI researcher and former AAAI president. Has argued AI safety should focus on everyday reliability failures, not extinction scenarios.

Profile

expertise

Deep technical

Sustained peer-reviewed contribution to ML, alignment, interpretability, or safety techniques. Could review a frontier paper.

Oregon State emeritus. Past AAAI president. Long ML publication record; engages with safety from a technical-pragmatist view.

recognition

Field-leading

Widely known inside the AI and AI-safety community. Appears repeatedly in top venues, podcasts, or governance forums. Not a household name to outsiders.

Recognised in academic ML; less coverage in the mainstream press.

vintage

Symbolic era

Career started in the GOFAI / expert-systems / early-rationalist period. Vinge's 1993 Singularity, MIRI founded 2000, Bostrom and Yudkowsky writing.

Oregon State from 1985. Long ML career predates deep learning. AAAI president; recognised across both eras.

Hand-classified. See the board for the criteria and the full grid.

Strategy positions

AI skeptic

mixed

AGI risk narratives overstated; real harms are mundane and current

Argues mundane reliability failures, not superintelligence takeover, are the real AI risk.

“The biggest risk is that those algorithms may not always work. We need to be conscious of this risk and create systems that can still function safely even when the AI components commit errors.”

Near-term harms first

endorses

Documented present harms outweigh speculative existential narratives

Argues the field over-weights existential risk relative to documented harms from deployed systems and that solid engineering for robustness, validation, and oversight does most of the safety work.

The risk of superintelligent AI taking over the world is much less concerning than the risk of dumb AI being deployed in safety-critical systems without proper validation.

Closest strategy neighbours

by Jaccard overlap

Other people whose strategy tags overlap with Thomas Dietterich's. Overlap is on tag identity, not stance; opposites can show up if they reference the same tags.
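
As a rough illustration of how an overlap score of this kind is typically computed, the sketch below applies the standard Jaccard index (size of the intersection divided by size of the union) to two sets of strategy tags. The tag slugs, the `other` profile, and the choice to return 0.0 for two empty sets are assumptions made for the example, not details taken from this site.

```python
def jaccard(a: set[str], b: set[str]) -> float:
    """Jaccard index |A & B| / |A | B|; returns 0.0 when both sets are empty (assumed convention)."""
    union = a | b
    if not union:
        return 0.0
    return len(a & b) / len(union)


# Hypothetical tag slugs. Only tag identity is compared; the stance attached
# to each tag (endorses, mixed, ...) is deliberately left out of the sets.
dietterich = {"ai-skeptic", "near-term-harms-first"}
other = {"near-term-harms-first", "pause-advocacy"}

score = jaccard(dietterich, other)  # one shared tag out of three distinct tags
print(round(score, 3))
```

Because stance is not part of the sets, two people who take opposite positions on the same tags would still score as close neighbours under this measure.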

Record last updated 2026-04-25.