strategy tag

Near-term harms first.

Documented present harms outweigh speculative existential narratives

also known as: AI ethics

stated endorsers: 36 (no opposers yet)

profiled endorsers: 1 (248 on the board total)

endorser p(doom): no estimates on record

quotes by endorsers: 36 (just for this tag)

People on the record.

36

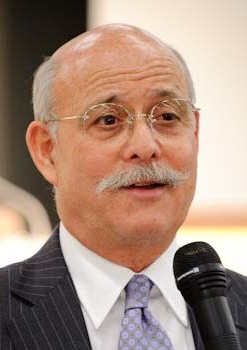

Adam Grant

Wharton organizational psychologist

Argues AI's effects on knowledge work depend on whether organizations adopt it as a substitute for human judgment or as scaffolding for it; treats this as a managerial choice, not a technological inevitability.

AI does not have to replace expertise; it can extend it. The question is whether managers treat AI tools as a way to deskill workers or as a way to give them leverage.

Anna Lauren Hoffmann

UW iSchool; 'data violence' theorist

Argues 'fairness' framings underweight the concept of violence done through information systems; calls for moral language adequate to the structural harms produced by data-driven systems.

Data violence describes the cumulative harm produced through everyday operations of data systems, not the spectacular event but the continuous structural injury.

Anton Korinek

UVA economist; AI and inequality

Argues AI's impact on the labor share of income is the central macroeconomic concern, and that policy needs to start preparing for transformative scenarios where labor becomes a smaller fraction of value.

If AI eventually substitutes for most cognitive labor, traditional macroeconomic models break down: wages no longer track productivity, and political economy stops resembling anything we have institutional experience with.

Brian Merchant

Tech writer; 'Blood in the Machine' author

Argues AI's labor effects are following a historical pattern that the original Luddites resisted intelligently; calls for organized labor responses rather than passive acceptance.

The Luddites were not against technology. They were against being immiserated by it. The pattern repeats with AI, and so should the resistance.

Clay Shirky

NYU emeritus; 'Here Comes Everybody', 'Cognitive Surplus'

Argues universities have no plan for AI's effect on student writing and assessment; current institutional inertia means policy will be set by individual instructors making contradictory choices.

Higher education has not yet absorbed the fact that the writing it requires of students no longer requires the students. The institutional response is being made one syllabus at a time, with no coordination.

Cynthia Breazeal

MIT Media Lab; social robotics; founder of Jibo

Argues AI literacy must reach K–12 to give the next generation agency over AI rather than leaving design choices solely to labs and platforms.

AI literacy needs to be a basic skill for all students, not just future computer scientists. The technology is reshaping every domain they will inhabit.

Daniel Susskind

Oxford / King's College London; AI and work economist

Argues we are heading toward 'technological unemployment', not because AI will eliminate all jobs, but because it will reduce demand for human labor across enough domains that distribution becomes the central political question.

The threat of technological unemployment is real. As task encroachment by machines accelerates, the question is no longer whether to redistribute, but how.

Edward Tian

GPTZero founder

Argues educational and journalistic systems urgently need AI-text detection to maintain trust; founded GPTZero specifically as a response to ChatGPT's release.

I built GPTZero because I think there's something fundamentally important about preserving humanity's ability to know what was written by humans.

Esther Dyson

Wellville chair; long-time tech investor and futurist

Argues AI's most damaging effects are mediated through advertising and engagement business models; safety must address those incentive structures, not just model capabilities.

AI is downstream from incentives. As long as the business models reward engagement and addiction over user welfare, AI will amplify the worst tendencies of the existing system.

Gemma Galdon Clavell

Eticas Foundation founder; algorithmic audits

Argues independent third-party audits, not lab self-attestation, are how AI systems should be regulated; pioneered audit methodologies for government and platform AI.

Audit is not a one-time exercise. It is a process of continuous accountability that needs legal teeth and independent practitioners.

Genevieve Bell

ANU; vice-chancellor; cultural anthropologist

Argues AI deployment is fundamentally a cultural and institutional question that current technical framings miss; calls for cyber-physical-social systems thinking as an alternative.

AI is best understood as a cyber-physical-social system. The technology, the infrastructure, and the cultural practices around it are the unit of analysis.

Hannah Fry

Cambridge mathematician; 'Hello World' author

Argues algorithmic decision-making in finance, criminal justice, and healthcare is the high-stakes ground for AI ethics; pushes back on framings that treat the question as primarily about future AGI.

Algorithms are already making decisions that change lives. The question of how much trust to give them is one we have to answer now, not in some future when AI is much more capable.

Holly Herndon

Musician; 'Holly+' digital twin and Spawning

Argues artists should hold property and consent rights over AI training; co-built Spawning's 'Have I Been Trained?' tool to make this possible at scale.

AI is being trained on artists' work without consent or compensation. We built Spawning so artists can see what's been used and opt out, individually and collectively.

Ifeoma Ajunwa

Emory Law; workplace AI scholar

Argues workplace algorithmic management is a primary surface for AI harm and that labor law and civil-rights law should govern it; AGI debates distract from where harm is happening.

Algorithmic management transforms workers into quantified objects subject to surveillance and discipline. The legal regime for this is incomplete and increasingly inadequate.

Inioluwa Deborah Raji

Mozilla fellow; algorithmic audit researcher

Argues system-level audits, rather than dataset audits or model cards alone, are required to capture real-world harms; supports legal frameworks that mandate access to deployed models.

Algorithmic audits are most effective when they look at the entire sociotechnical system, including the people, processes, and incentives surrounding the deployed model.

James Vincent

Senior reporter, The Verge

Argues AI hype outpaces capability and that practical reporting on what models actually do, and fail to do, is more useful than amplifying lab claims.

Half my job covering AI is checking whether the demo is the same thing as the product. Often it is not, and the gap between them is where the actual story sits.

Jasmine Sun

Tech writer; Substack contributor on AI culture

Argues both x-risk and tech-criticism camps under-weight the social and political effects of AI on knowledge work and the texture of public conversation.

What we should be asking is not whether AI will be 'aligned' or 'open', but what kind of cultural infrastructure we want to build with it. Tech and policy debates have largely ignored that question.

Jeremy Rifkin

Foundation on Economic Trends president; futurist

Argued in 1995 that automation would dramatically reduce demand for human labor; updates this thesis to argue AI is the long-delayed continuation of that trajectory.

We are in the early stages of a long economic transition where machines are progressively replacing the human workforce in every sector. AI is the latest and most consequential chapter.

Jonathan Stray

Berkeley CHAI; AI in journalism and recommender systems

Argues recommender-system harms are a primary AI safety question; deployed engagement-maximizing recommenders may already be the highest-impact AI in the world.

Recommender systems are the largest deployed AI systems in the world. If we get them wrong, we are getting AI safety wrong at scale already, before any AGI shows up.

Julia Angwin

Investigative journalist; ex-ProPublica; The Markup founder

Argues algorithmic systems already cause large-scale harm in criminal justice, hiring, and credit; calls for adversarial journalism and audit infrastructure rather than corporate self-policing.

“Machine bias: there's software used across the country to predict future criminals. And it's biased against blacks.”

Madhumita Murgia

Financial Times AI editor; 'Code Dependent' author

Argues the human cost of AI's data and moderation pipelines is invisible by design and that surfacing it is essential to honest debate about AI governance.

Behind every smooth chatbot is an army of underpaid workers cleaning, labelling, and moderating data, often in the Global South. AI dependency is also dependence on this hidden workforce.

Mark Riedl

Georgia Tech; AI ethics and storytelling

Argues the AI policy conversation should center documented sociotechnical harms (labor, copyright, surveillance, hallucination harms) rather than speculative existential scenarios.

AI hype is a strategy to make people fearful so they will accept governance regimes that benefit incumbents. Real harms are present-tense and unevenly distributed.

Melissa Heikkilä

Financial Times AI correspondent (ex-MIT Tech Review)

Argues AI's labor and bias harms are real and disproportionately affect Global Majority populations; pushes back on narratives that center only existential risk.

The conversation about AI harms is dominated by people in San Francisco. The harms themselves are most often felt by people very far from there.

Meredith Broussard

NYU journalism prof; 'Artificial Unintelligence', 'More Than a Glitch'

Argues AI failures are structural rather than glitches; calls for regulatory enforcement of audit requirements over voluntary lab self-governance.

What we call AI bias is more than a glitch. It is the predictable outcome of using historical data to make decisions in the present.

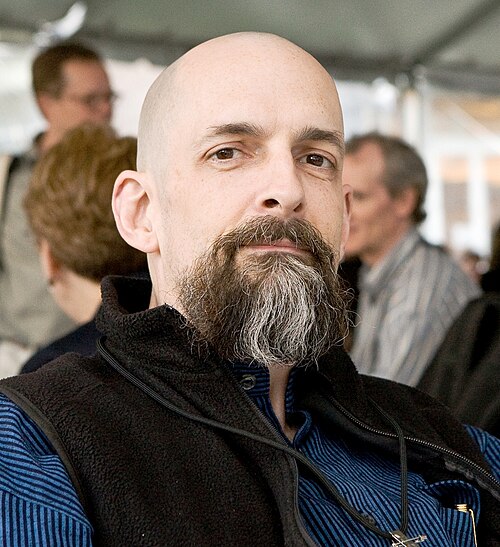

Neal Stephenson

Sci-fi novelist; Snow Crash, Cryptonomicon, Anathem, Termination Shock

Argues the most consequential AI risks may be social and epistemological rather than existential; warns against AI-mediated 'editing of reality' that destabilizes shared truth.

The most disturbing thing about generative AI is not that it might become superintelligent, but that it might make it impossible for humans to share a common factual reality.

Niloofar Mireshghallah

Carnegie Mellon postdoc; LLM privacy

Argues LLMs systematically violate users' contextual privacy norms in ways that current alignment work does not address; privacy is a first-order alignment problem.

Models leak the wrong information to the wrong audiences. Solving this requires teaching them norms about which audiences should hear which kinds of information, a contextual integrity problem.

Nita A. Farahany

Duke Law; 'The Battle for Your Brain'

Argues cognitive liberty, the right to mental privacy and self-determination over one's own thoughts, must be enshrined as a foundational right in the AI/neurotech era.

Cognitive liberty is the right to mental self-determination. Without it, AI and neurotech turn the brain itself into the next frontier for surveillance and manipulation.

Paris Marx

Tech Won't Save Us host

Argues mainstream coverage of AI accepts industry framing too readily; pushes back on both x-risk and corporate techno-optimism in favour of focus on labour, environment, and material costs.

Both AI doomers and AI accelerationists want you to believe the technology is more capable than it is. The first sells regulation, the second sells stock. Neither is talking about what AI actually does to workers and the environment.

Seda Gürses

TU Delft; computational law and privacy

Argues 'AI safety' framings collapse when separated from the data extraction and labour systems that produce models; advocates regulating those substrates rather than the models alone.

There is no way to talk about AI safety without talking about how the data, the labour, and the infrastructure that produce these systems are governed.

Stéphanie Hare

Tech researcher; 'Technology Is Not Neutral' author

Argues every technology embeds choices about whose interests it serves; AI systems are not neutral and must be evaluated against the political economy of their deployment.

“Technology is not neutral. Every choice about what to build, what to ignore, and what to deploy is a political choice.”

Steven Levy

Wired editor at large; long-time tech historian

Brings a historian's perspective: argues each new wave of computing has been heralded as transformative, and that AI is similar: real and overstated at the same time.

I have covered every major computing inflection since the personal computer. Each one was heralded as world-changing; some were, some were not. The pattern with AI is closer to the former.

Tegan Maharaj

HEC Montréal; ML safety, ecology, and ethics

Argues ML safety must integrate ecological, economic, and labor harms, not only model misalignment, into a single research agenda.

Concrete safety problems are environmental, social, and political at the same time as they are technical. The boundary the field draws around 'safety' is itself a normative choice.

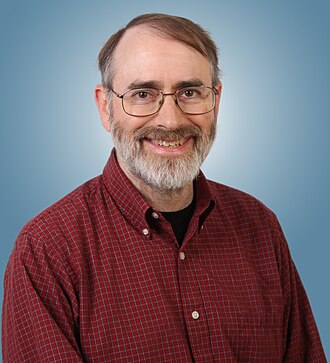

Thomas Dietterich

Oregon State emeritus; AAAI past president

Argues the field over-weights existential risk relative to documented harms from deployed systems and that solid engineering for robustness, validation, and oversight does most of the safety work.

The risk of superintelligent AI taking over the world is much less concerning than the risk of dumb AI being deployed in safety-critical systems without proper validation.

Trevor Paglen

Artist; AI surveillance and computer vision

Argues computer vision systems encode racialized and political assumptions through their training data, and that revealing these assumptions through art is part of the political work.

Computer vision doesn't see the world neutrally. It sees through the categories its training data inherited, and those categories are political all the way down.

Vivek Wadhwa

Tech writer and ex-academic; serial AI critic

Argues AI hype obscures concentrated power and labor disruption; calls for global coordination and public-interest research investment as the alternative to lab-led regulation.

We are letting Silicon Valley write the rules for technologies that will reshape every other industry. That is not how democracies are supposed to work.

Will Douglas Heaven

MIT Technology Review senior AI editor

Editorial work consistently treats AI hype claims with skepticism while taking the underlying technical advances seriously; frames safety reporting around concrete failure modes rather than meta-debates.

The challenge in covering AI is that the technology is moving faster than the framework we use to evaluate claims about it. Our job is to slow down enough to test what is actually being demonstrated.