person

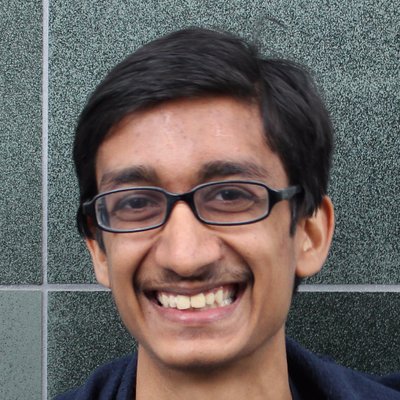

Rohin Shah

Alignment researcher at Google DeepMind

Author of the Alignment Newsletter (2018–2022) and now a DeepMind alignment researcher. Provides measured, inside-view assessments that sit between Yudkowskian pessimism and LeCunian dismissal.

Profile

expertise

Deep technical

Sustained peer-reviewed contribution to ML, alignment, interpretability, or safety techniques. Could review a frontier paper.

Google DeepMind alignment lead. Author of the Alignment Newsletter. Long publication record on inverse reward design and scalable oversight.

recognition

Established

Reliable, recognised voice within their specific subfield. Cited and invited but not central to general AI discourse.

Recognised inside AI safety; lower public profile.

vintage

Deep-learning rise

Came up post-AlexNet. ImageNet, AlphaGo, transformer paper. DeepMind, Google Brain, FAIR establish the modern lab template.

PhD at UC Berkeley (CHAI); Alignment Newsletter from 2018. Career bridges the deep-learning rise and the scaling era.

Hand-classified. See the board for the criteria and the full grid.

Strategy positions

Alignment first: endorses

Solve technical alignment before capability thresholds close.

Works on mechanistic interpretability, scalable oversight, and specification research within a frontier lab.

I think it's more likely than not that we can develop useful AI systems that are meaningfully aligned, but I'd place substantial probability on catastrophic outcomes.

Closest strategy neighbours

By Jaccard overlap.

Other people whose strategy tags overlap with Rohin Shah's. Overlap is computed on tag identity, not stance, so people holding opposite positions can show up if they reference the same tags.
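As a rough illustration of how a Jaccard ranking over strategy tags might work, here is a minimal sketch. The names `jaccard`, `neighbours`, `person_a`, and all tag values are hypothetical and not taken from the site's data or code; only the Jaccard formula itself (intersection size divided by union size) is standard.

```python
def jaccard(a: set[str], b: set[str]) -> float:
    """Jaccard overlap |A & B| / |A | B|; defined here as 0.0 when both sets are empty."""
    union = a | b
    if not union:
        return 0.0
    return len(a & b) / len(union)


# Hypothetical tag sets. Only tag identity is stored, not whether the
# person endorses or opposes the tag, so stance cannot affect the score.
tags = {
    "person_a": {"alignment-first", "interpretability"},
    "person_b": {"alignment-first", "pause"},
    "person_c": {"open-source"},
}


def neighbours(target: str, tags: dict[str, set[str]]) -> list[tuple[str, float]]:
    """Rank everyone except `target` by Jaccard overlap, highest first."""
    base = tags[target]
    scored = [(name, jaccard(base, t)) for name, t in tags.items() if name != target]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


ranked = neighbours("person_a", tags)
```

Because the score depends only on which tags two people share, someone who opposes "alignment-first" would rank exactly as high as someone who endorses it, which matches the caveat above.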

Record last updated 2026-04-24.