person

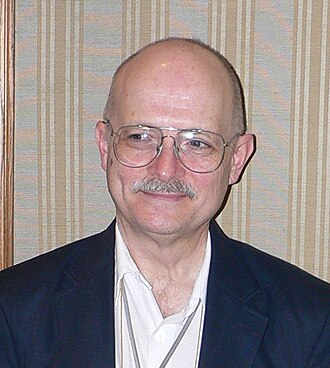

Vernor Vinge

Science-fiction author who coined 'technological singularity' (1944–2024)

Mathematician and SF author whose 1993 NASA paper 'The Coming Technological Singularity' argued that superhuman intelligence would arrive by 2030 and end the human era as we know it. A founding formal statement of what later became AGI discourse.

Profile

expertise

External-domain expert

Recognised expert outside AI (philosophy, economics, biology, journalism) who weighs in on AI consequences from that vantage.

Mathematician and SF author (1944–2024). Coined modern 'singularity' usage in his 1993 essay. Not a technical AI contributor.

recognition

Household name

Name recognition outside the AI/CS community. Featured by mainstream press, a Wikipedia page in many languages, a published bestseller, or holds a position the lay public knows.

Hugo-Award-winning SF author; widely cited in singularity discourse.

vintage

Symbolic era

Career started in the GOFAI / expert-systems / early-rationalist period. Markers of the era: Vinge's 1993 Singularity essay, MIRI's founding in 2000, and the early writing of Bostrom and Yudkowsky.

1944–2024. Singularity essay 1993. Pre-deep-learning singularity frame.

Hand-classified. See the board for the criteria and the full grid.

Strategy positions

Existential primacy: endorses

Extinction/disempowerment risk overrides ordinary cost-benefit analysis.

Argued the intelligence-explosion framing decades before it was mainstream. Estimated that superhuman AI would arrive between 2005 and 2030.

“Within thirty years, we will have the technological means to create superhuman intelligence. Shortly after, the human era will be ended.”

Closest strategy neighbours

By Jaccard overlap

Other people whose strategy tags overlap with Vernor Vinge's. Overlap is on tag identity, not stance; opposites can show up if they reference the same tags.
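As a rough illustration of how a Jaccard-overlap ranking like this could work, the sketch below scores two tag sets as the size of their intersection divided by the size of their union. The tag slugs, the `Person A` / `Person B` entries, and the function names are hypothetical placeholders, not the site's actual data or implementation. Stance (endorses, rejects, and so on) is deliberately left out of the comparison, matching the note that overlap is on tag identity only.

```python
def jaccard(a: set[str], b: set[str]) -> float:
    """Jaccard overlap |A & B| / |A | B|; defined as 0.0 when both sets are empty."""
    union = a | b
    if not union:
        return 0.0
    return len(a & b) / len(union)


def closest_neighbours(
    target: str, profiles: dict[str, set[str]], top_n: int = 3
) -> list[tuple[str, float]]:
    """Rank every other profile by Jaccard overlap with the target's tags."""
    scores = [
        (name, jaccard(profiles[target], tags))
        for name, tags in profiles.items()
        if name != target
    ]
    # Highest overlap first; ties broken alphabetically for a stable order.
    scores.sort(key=lambda pair: (-pair[1], pair[0]))
    return scores[:top_n]


# Hypothetical tag sets: only tag identity is stored, not stance.
profiles = {
    "Vernor Vinge": {"existential-primacy"},
    "Person A": {"existential-primacy", "some-other-tag"},
    "Person B": {"unrelated-tag"},
}

for name, score in closest_neighbours("Vernor Vinge", profiles):
    print(f"{name}: {score:.2f}")
```

Because stance is ignored, someone who rejects a tag that Vinge endorses would score the same as someone who endorses it, which is why opposites can appear among the neighbours.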

Record last updated 2026-04-24.