person

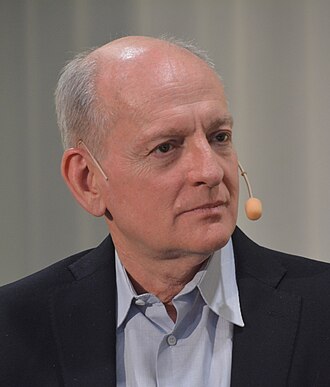

Stuart Russell

Co-author of the standard AI textbook; leading critic of the 'standard model' of AI

Argues that the field's default paradigm (building systems that optimise fixed objectives) is dangerously misguided, and proposes instead that AI systems be uncertain about human preferences and defer to humans by construction. Author of Human Compatible (2019).

Profile

expertise

Deep technical

Sustained peer-reviewed contribution to ML, alignment, interpretability, or safety techniques. Could review a frontier paper.

Co-author of the standard AI textbook 'Artificial Intelligence: A Modern Approach' (used by 1500+ universities). UC Berkeley professor. Decades of AI research; founded the Center for Human-Compatible AI.

recognition

Household name

Name recognition outside the AI/CS community. Has been featured by mainstream press, has a Wikipedia page in many languages, has published a bestseller, or holds a position the lay public knows.

Human Compatible (2019) was a New York Times notable book. Frequent congressional testimony, BBC Reith Lectures 2021.

vintage

Symbolic era

Career started in the GOFAI / expert-systems / early-rationalist period. Vinge's 1993 Singularity, MIRI founded 2000, Bostrom and Yudkowsky writing.

PhD 1986 (Stanford). Russell & Norvig textbook 1995. Became an active AI safety voice ~2014 with the FLI letter; his priors are GOFAI-rooted.

Hand-classified. See the board for the criteria and the full grid.

Strategy positions

Alignment first · endorses

Solve technical alignment before capability thresholds close

Frames alignment as the central technical problem; proposes a redesign around assistance games where AI is uncertain about its objective.

The standard model, in which the primary definition of success is getting better and better at achieving rigid human-specified goals, is dangerously misguided.

Context: Core argument of Human Compatible.

“You can't fetch the coffee if you're dead.”

Context: Used to illustrate why sufficiently goal-directed AI will resist being switched off.

Existential primacy · endorses

Extinction/disempowerment risk overrides ordinary cost-benefit

Signatory to the CAIS Statement on AI Risk.

“Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war.”

Closest strategy neighbours

by Jaccard overlap

Other people whose strategy tags overlap with Stuart Russell's. Overlap is on tag identity, not stance; opposites can show up if they reference the same tags.

Joseph Carlsmith

shared 2 · J=1.00

Open Philanthropy researcher; 'Is Power-Seeking AI an Existential Risk?'

Nick Bostrom

shared 2 · J=0.67

Author of Superintelligence; founded Oxford's Future of Humanity Institute
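
The J values above can be reproduced with a minimal sketch, assuming the site uses the standard Jaccard index (shared tags divided by the union of both tag sets). The tag slugs and the `third_tag` placeholder are hypothetical and are not taken from the site's data; stance (endorses or opposes) is deliberately left out, since overlap is computed on tag identity only.

```python
def jaccard(a: set[str], b: set[str]) -> float:
    """Jaccard index |A ∩ B| / |A ∪ B|; defined here as 0.0 when both sets are empty."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


# Hypothetical tag slugs for illustration only.
russell = {"alignment_first", "existential_primacy"}

# A profile carrying exactly the same two tags.
same_two = {"alignment_first", "existential_primacy"}

# A profile carrying the same two tags plus one further (unnamed) tag.
one_extra = {"alignment_first", "existential_primacy", "third_tag"}

print(f"{jaccard(russell, same_two):.2f}")   # 2 shared / 2 in union
print(f"{jaccard(russell, one_extra):.2f}")  # 2 shared / 3 in union
```

Under this assumption, two shared tags with no extras give J=1.00, and two shared tags plus one extra tag on the other profile give J=0.67, which is consistent with the two entries listed above.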

Record last updated 2026-04-24.