
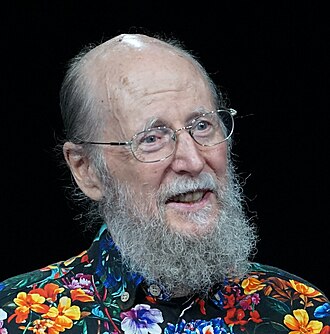

Richard S. Sutton

RL pioneer; 2024 Turing Award recipient

University of Alberta professor and former DeepMind distinguished research scientist. With Andrew Barto, won the 2024 Turing Award for the foundations of reinforcement learning. Author of the canonical 'Reinforcement Learning: An Introduction' textbook and the influential 'Bitter Lesson' essay.

Profile

expertise

Deep technical

Sustained peer-reviewed contribution to ML, alignment, interpretability, or safety techniques. Could review a frontier paper.

University of Alberta professor. Co-author of the foundational RL textbook with Andrew Barto. Turing Award 2024 (with Barto). 'The Bitter Lesson' (2019) is one of the most-read essays in modern ML.

recognition

Field-leading

Widely known inside the AI and AI-safety community. Appears repeatedly in top venues, podcasts, or governance forums. Not a household name to outsiders.

Universal name in ML. The Turing Award announcement drew tech-press coverage. Less general-public recognition than Hinton or LeCun.

vintage

Symbolic era

Career started in the GOFAI / expert-systems / early-rationalist period. Vinge's 1993 Singularity, MIRI founded 2000, Bostrom and Yudkowsky writing.

PhD 1984 (UMass). Sutton & Barto RL textbook 1998. The Bitter Lesson 2019. His RL frame predates the deep-learning rise.

Hand-classified. See the board for the criteria and the full grid.

Strategy positions

Acceleration: endorses

Build faster; delay costs more than capability.

Argues that general methods that scale with computation will continue to outperform clever human-engineered approaches; views the bitter lesson as the dominant pattern of AI history.

“The bitter lesson is based on the historical observations that 1) AI researchers have often tried to build knowledge into their agents, 2) this always helps in the short term and is personally satisfying to the researcher, but 3) in the long run it plateaus and even inhibits further progress, and 4) breakthrough progress eventually arrives by an opposing approach based on scaling computation by search and learning.”

Abandon superintelligence: opposes

Reject superintelligence as a goal entirely; narrow AI only.

Argues that the succession of humanity by AI is the natural next step of cosmic evolution; opposes the protective-dominance framing that animates much of the AI safety field.

“We should prepare for, but not fear, the inevitable succession from humanity to AI.”

Closest strategy neighbours

By Jaccard overlap: other people whose strategy tags overlap with Richard S. Sutton's. Overlap is on tag identity, not stance; opposites can show up if they reference the same tags.
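A minimal sketch of how such a neighbour score could be computed, assuming each person's positions are stored as (tag, stance) pairs and the stance is discarded before comparison. The tag names, the `pause` tag, and the helper functions below are hypothetical illustrations, not the site's actual data or implementation. The Jaccard index is the size of the intersection of two tag sets divided by the size of their union.

```python
def jaccard(a: set[str], b: set[str]) -> float:
    """Jaccard index |A & B| / |A | B|; returns 0.0 when both sets are empty."""
    union = a | b
    if not union:
        return 0.0
    return len(a & b) / len(union)


def tag_ids(positions: set[tuple[str, str]]) -> set[str]:
    """Drop the stance so only tag identity counts (hypothetical data model)."""
    return {tag for tag, _stance in positions}


# Hypothetical records: Sutton endorses acceleration and opposes abandoning
# superintelligence; the other person *endorses* abandoning it.
sutton = {("acceleration", "endorses"), ("abandon_superintelligence", "opposes")}
other = {("abandon_superintelligence", "endorses"), ("pause", "endorses")}

score = jaccard(tag_ids(sutton), tag_ids(other))
print(score)
```

Here the two records share one tag out of three distinct tags, so the score is 1/3 even though the two people take opposite stances on the shared tag. This is why opposites can appear among the closest neighbours.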

Record last updated 2026-04-25.