strategy tag

Acceleration.

Build faster; the cost of delay outweighs the risk of capability

also known as: e/acc, effective accelerationism

stated endorsers: 14 (+15 tentative · 0 oppose)

profiled endorsers: 7 (248 on the board total)

endorser mean p(doom): 5% (n=1 · median 5%)

quotes by endorsers: 15 (just for this tag)

principal voices

Highest-recognition profiled endorsers, with ties broken by quote count. Inclusion is not an endorsement of the position; it reflects who the discourse turns to when the bet is debated.

Marc Andreessen · Household name

JD Vance · Household name

Donald Trump · Household name

David Sacks · Household name

Vivek Ramaswamy · Household name

where the endorsers sit on the board

7 of 248 profiled · 3% of the board

| expertise ↓ · recognition → | Household name | Field-leading | Established | Emerging |
|---|---|---|---|---|
| Frontier builder | · | · | · | · |
| Deep technical | · | · | · | |
| Applied technical | · | · | · | · |
| Policy / meta | · | · | · | |
| External-domain expert | · | · | · | · |
| Commentator | · | · | · | |

Each face is one profiled person, and cell shading deepens with endorser density. Faces marked × are profiled opposers: same tier, opposite position. Empty cells mark tier combinations the field has not produced for this bet.

Tier mix counts only endorsers (endorses, mixed, conditional, evolved-toward).

expertise mix of endorsers · 7 profiled of 14

recognition mix of endorsers

vintage mix · n=7 of 7 profiled with era assigned

Vintage is the era in which a person's AI worldview formed, ranging from pioneer to post-ChatGPT. A bet held mostly by post-ChatGPT entrants is in a different epistemic state from one held by pre-deep-learning veterans.

People on the record.

29 · 15 tentative

Brian Chau

Executive Director of Alliance for the Future

Leads an organized lobbying counterweight to AI safety policy in Washington; frames pause and safety advocates as doomers.

Regulation of AI is regulation of inference. Regulation of inference is regulation of thought.

David Luan

Amazon; ex-Adept co-founder

Argues that agentic AI (systems that take actions on the user's behalf) is the next major capability surface, and that progress here will reshape every productivity tool.

We believe the next decade of AI is action, not just text generation. Adept's bet was that the agents that take real-world action will be the most consequential AI systems.

David Sacks

White House AI & Crypto Czar (2025); VC

Advocates for aggressive US deregulation of frontier AI; framed the Biden executive order as burdensome and anti-competitive.

“We've got to let the private sector cook.”

Donald Trump

US President (2017–2021, 2025–)

Explicit acceleration framing: rescinded prior AI safety-oriented orders and launched large-scale compute investment.

Stargate is a new American company that will invest $500 billion, at least, in AI infrastructure.

Eric Jang

1X Technologies VP of AI; ex-Google Brain

Argues that end-to-end neural network policies for humanoids, rather than classical pipelines, are the path to general-purpose physical AI, and that capability progress will follow the same scaling pattern as in language models.

Humanoid robots running large neural network policies are the embodied analogue of GPT-style language models. The same scaling laws that produced reasoning in language are starting to produce skill acquisition in physical action.

Guillaume Verdon

Founder of Extropic; aka 'Beff Jezos', founder of the e/acc movement

Frames accelerating compute and AI capability as aligned with the thermodynamic direction of life.

Effective accelerationism wants to propel humanity up the Kardashev gradient.

Context: Lex Fridman podcast episode 407.

JD Vance

US Vice President; AI 'opportunity, not safety' advocate

Publicly rejected safety-first framings at the Paris AI Action Summit; aligned US policy with acceleration.

“I'm not here this morning to talk about AI safety... I'm here to talk about AI opportunity.”

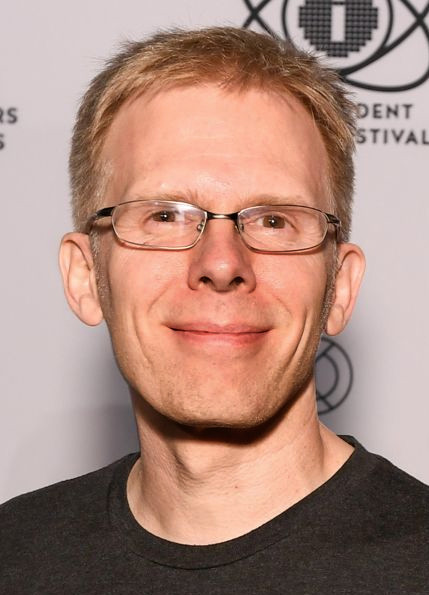

John Carmack

Keen Technologies founder; ex-Oculus CTO

Argues AGI is a tractable engineering problem with current architectures; founded Keen on the thesis that a small team with focused effort can make meaningful progress on general intelligence.

I think a single individual could probably do the entire AGI training pipeline. The bottleneck is not budget or compute, it is engineering insight.

Marc Andreessen

Co-founder of Andreessen Horowitz; author of The Techno-Optimist Manifesto

Argues any deceleration of AI costs lives via foregone medical and scientific progress.

“Any deceleration of AI will cost lives. Deaths that were preventable by the AI that was prevented from existing is a form of murder.”

Context: The Techno-Optimist Manifesto.

“We believe that we are, have been, and will always be the masters of technology, not mastered by technology.”

Mike Solana

Pirate Wires founder; tech contrarian

Frames AI safety advocates as captured by political and economic incumbency. Pro-acceleration cultural voice.

The 'AI is going to kill us' people are remarkably aligned with the 'AI should be regulated by us' people. Convenient.

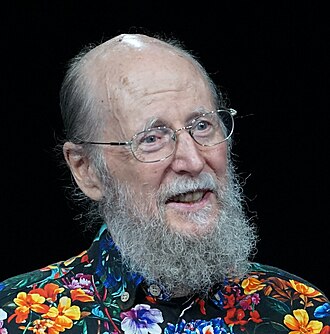

Richard S. Sutton

RL pioneer; 2024 Turing Award recipient

Argues general methods that scale with computation will continue to outperform clever human-engineered approaches; views the bitter lesson as the dominant pattern of AI history.

“The bitter lesson is based on the historical observations that 1) AI researchers have often tried to build knowledge into their agents, 2) this always helps in the short term and is personally satisfying to the researcher, but 3) in the long run it plateaus and even inhibits further progress, and 4) breakthrough progress eventually arrives by an opposing approach based on scaling computation by search and learning.”

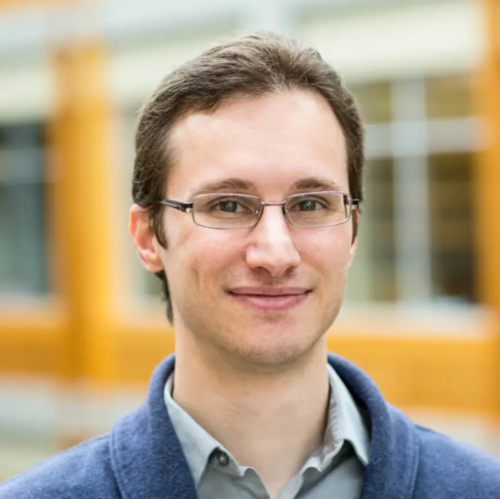

Sébastien Bubeck

OpenAI; lead author of 'Sparks of AGI' paper

Argues GPT-4 already exhibits early AGI behaviors and that capability progress will continue rapidly; less explicit on safety strategy.

“We contend that GPT-4 could reasonably be viewed as an early (yet still incomplete) version of an artificial general intelligence (AGI) system.”

Sergey Levine

UC Berkeley; robot learning, deep RL

Argues physical-world robotics is the bottleneck to general AI usefulness; less explicit on x-risk strategy but views capability progress as the priority.

If we want machines that can do useful things in the physical world, we need to scale up real-world data and self-supervision. Internet text gets you far, but not into a kitchen.

Vivek Ramaswamy

Former US presidential candidate; AI deregulation advocate

Argues against AI regulation; frames AI safety advocacy as a form of regulatory capture.

AI regulation is a Trojan horse for incumbent protection.

tentative · 15

Below are entries flagged tentative: assignments inferred from a passing remark, hype quote, or paper abstract rather than a clear strategy statement. They are shown as dashed cards on the board so that a stronger primary source can replace them later.

Aditya Ramesh

OpenAI DALL·E creator

Pioneered the unification of text and image generation in single foundation models; positioned as a capability-driven researcher more than a public safety voice.

DALL·E 2 generates more realistic and accurate images with 4x greater resolution. The visual reasoning that emerges from large multimodal training continues to surprise us.

Albert Gu

CMU; Mamba and structured state-space models

Argues structured state-space models can scale to language with linear time and memory, breaking the quadratic attention bottleneck and reshaping where capability research is going.

“We propose Mamba, a selective state space model that achieves Transformer-level performance with linear scaling in sequence length.”

Alec Radford

OpenAI; lead author of GPT, Whisper, CLIP

Public statements are rare; positions inferred from research output emphasize scaling generative pretraining and unifying modalities into a single representation.

“We demonstrate that large gains on these tasks can be realized by generative pre-training of a language model on a diverse corpus of unlabeled text.”

Ashish Vaswani

Co-founder of Essential AI; lead author of 'Attention Is All You Need'

Position inferred from career trajectory (Transformer architect, Essential AI co-founder building frontier tooling); no public statement on AI strategy is on record. The quote below is from the Transformer paper and is technical, not strategic.

“We propose a new simple network architecture, the Transformer, based solely on attention mechanisms, dispensing with recurrence and convolutions entirely.”

Charlie Snell

UC Berkeley; LLM efficiency and inference compute

Argues inference-time compute is a separable axis of capability scaling that has been underweighted; smaller models with more 'thinking' can match larger ones on hard problems.

Test-time compute can be more effective than scaling model size for certain reasoning tasks. The trade-off between training-time and test-time scaling is far richer than headline metrics suggest.

Denny Zhou

Google DeepMind; reasoning team lead

Argues that reasoning (via chain-of-thought, self-consistency, and tree-of-thought) is the next major capability surface beyond raw scale; leads DeepMind work on this.

Reasoning is one of the most important capabilities of LLMs. Chain-of-thought is the simplest demonstration that scale plus reasoning prompts unlocks much more than either alone.

Jakob Uszkoreit

Inceptive co-founder; Transformer co-author

Position inferred from work on Transformer-derived RNA design; no explicit AI-strategy statement on record. Quote describes the technical bet, not the strategic one.

We're using the same architecture that powers language models to design RNA medicines. The substrate matters, but the underlying learning machinery generalizes.

Jason Wei

OpenAI; chain-of-thought prompting

Argues scaling and emergent capabilities are the primary axis of AI progress; views capability research as the engine of useful applications.

“Chain-of-thought prompting elicits reasoning in large language models. We find that scaling matters: chain-of-thought is an emergent ability of scale.”

John von Neumann

Mathematician; early originator of the technological-singularity idea (1903–1957)

Anticipated that the accelerating pace of technological progress would reach an essential singularity beyond which human affairs as we know them could not continue. Treated this as descriptive rather than prescriptive.

“The accelerating progress of technology and changes in the mode of human life give the appearance of approaching some essential singularity in the history of the race beyond which human affairs, as we know them, could not continue.”

Context: Reported by Stanislaw Ulam in his 1958 obituary of von Neumann; widely cited as the first articulation of a technological singularity.

Niki Parmar

Co-founder of Essential AI; Transformer co-author

Position inferred from being a Transformer co-author and Essential AI co-founder; no public statement on AI strategy is on record. The quote below is from the Transformer paper and is technical, not strategic.

“Multi-head attention allows the model to jointly attend to information from different representation subspaces at different positions.”

Oriol Vinyals

Google DeepMind; Gemini technical lead

Position inferred from research portfolio (AlphaStar, Gemini lead): capability scaling is the implicit research bet. No primary-source AI-strategy statement is on record.

Our agent, AlphaStar, learned by playing against itself, scaling self-play to a domain at the edge of professional human ability.

Prafulla Dhariwal

OpenAI; GPT-4o lead

Architect of OpenAI's unified multimodal flagship; argues unified end-to-end models will replace pipelined modality-specific systems.

GPT-4o is our new flagship model that can reason across audio, vision, and text in real time. The end-to-end training over modalities is what unlocks low-latency interaction.

Stefano Ermon

Stanford; generative models pioneer

Pioneered score-based generative models that became the technical backbone of diffusion-driven image, audio, and video synthesis; views capability research as essential to safe deployment.

Score-based generative models learn the gradient of the log data distribution. The framework unifies many seemingly disparate generative model families and underpins modern diffusion models.

Tim Brooks

Google DeepMind Veo; ex-OpenAI Sora research lead

Argues that video generation is on a trajectory similar to language modeling, with qualitative improvements every few months, and that the next bottleneck will be control rather than fidelity.

Sora generates video by predicting future frames from a sequence of input frames. This formulation lets us scale data and compute in the same way that text models do.

Tri Dao

Princeton; Together AI; FlashAttention and Mamba

Argues that throughput and efficiency improvements, not new architectures alone, are doing most of the heavy lifting in capability progress; builds Together AI's open infrastructure around this thesis.

FlashAttention computes attention with no approximation in linear memory, by being aware of GPU memory hierarchy. The same engineering carefulness can keep delivering capability for years.