Speed ↑ · speed timing

Acceleration

Capability speed is itself the safety lever: alignment improves with compute, defence compounds with offence, and wealth funds resilience.

Mechanism

Accelerate capability development and sustain a minority safety effort inside the same organisation; frozen incumbents are the worst scenario.

Falsification signal

A visible harm large enough that policy overrides the growth coalition (2008 financial crisis analogue).

A strategy held without a falsification signal is not strategy; it is affiliation. Continued support after this signal lands is identity, not bet. See the identity diagnostic.

Self-undermining threshold

overshoot risk · When adopted by more than one actor.

The race dynamic the strategy assumes away is triggered: each actor cuts safety corners faster as the others do.

Every strategy has a stable region where it reinforces itself and an unstable region where pursuit defeats it. The threshold between them is usually narrower than advocates acknowledge.

People on the record · 29

Profiled figures appear first, with their tier in small caps. Each face links to the person and their full quote record. Tag: acceleration.

expertise mix · 7 profiled

recognition mix

A strategy whose endorsement skews to commentators or external-domain experts is in a different epistemic state from one endorsed mostly by frontier-builders. The mix is read carefully across both axes; see the board for criteria. Counts are over the 7 profiled people on this strategy (22 unprofiled excluded).

David Sacks

Governance, policy, strategy · Mass-public recognition

Donald Trump

Governance, policy, strategy · Mass-public recognition

Guillaume Verdon

Deep ML / safety technical · Known across the AI/safety field

JD Vance

Governance, policy, strategy · Mass-public recognition

Marc Andreessen

Public-square commentator · Mass-public recognition

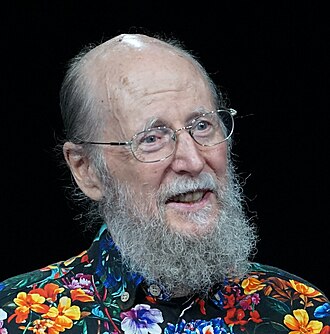

Richard S. Sutton

Deep ML / safety technical · Known across the AI/safety field

Vivek Ramaswamy

Public-square commentator · Mass-public recognition

Aditya Ramesh

OpenAI DALL·E creator

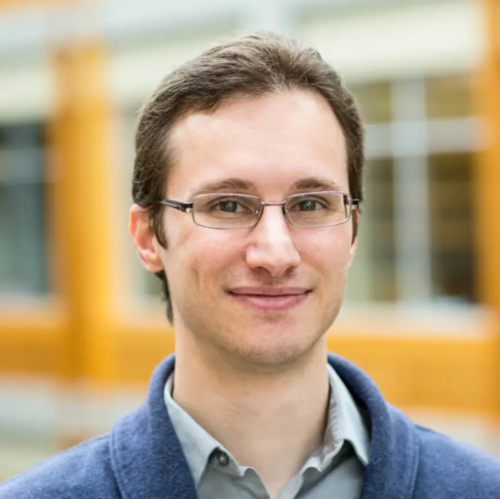

Albert Gu

CMU; Mamba and structured state-space models

Alec Radford

OpenAI; lead author of GPT, Whisper, CLIP

Ashish Vaswani

Co-founder Essential AI; lead author of 'Attention Is All You Need'

Brian Chau

Executive Director of Alliance for the Future

Charlie Snell

UC Berkeley; LLM efficiency and inference compute

David Luan

Amazon; ex-Adept co-founder

Denny Zhou

Google DeepMind; reasoning team lead

Eric Jang

1X Technologies VP of AI; ex-Google Brain

Jakob Uszkoreit

Inceptive co-founder; Transformer co-author

Jason Wei

OpenAI; chain-of-thought prompting

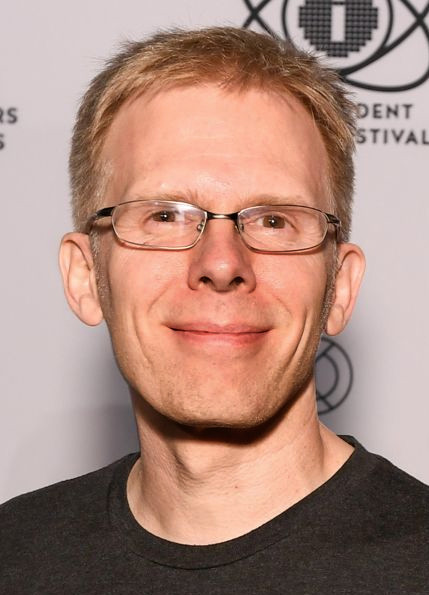

John Carmack

Keen Technologies founder; ex-Meta CTO

John von Neumann

Mathematician and singularity originator (1903–1957)

Mike Solana

Pirate Wires founder; tech contrarian

Niki Parmar

Co-founder Essential AI; Transformer co-author

Oriol Vinyals

Google DeepMind; Gemini technical lead

Prafulla Dhariwal

OpenAI; GPT-4o lead

Sébastien Bubeck

OpenAI; lead author of 'Sparks of AGI' paper

Sergey Levine

UC Berkeley; robot learning, deep RL

Stefano Ermon

Stanford; generative models pioneer

Tim Brooks

Google DeepMind Veo; ex-OpenAI Sora research lead

Tri Dao

Princeton; Together AI; FlashAttention and Mamba
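The expertise mix and recognition mix described above can be recomputed from the labels on the seven profiled entries. The following is a minimal sketch, not the board's actual method: the `PROFILED` table is transcribed by hand from this page, and the helper name `mix` is hypothetical.

```python
from collections import Counter

# Hand-transcribed from the seven profiled entries on this page:
# (name, expertise label, recognition label). Illustrative only.
PROFILED = [
    ("David Sacks", "Governance, policy, strategy", "Mass-public recognition"),
    ("Donald Trump", "Governance, policy, strategy", "Mass-public recognition"),
    ("Guillaume Verdon", "Deep ML / safety technical", "Known across the AI/safety field"),
    ("JD Vance", "Governance, policy, strategy", "Mass-public recognition"),
    ("Marc Andreessen", "Public-square commentator", "Mass-public recognition"),
    ("Richard S. Sutton", "Deep ML / safety technical", "Known across the AI/safety field"),
    ("Vivek Ramaswamy", "Public-square commentator", "Mass-public recognition"),
]

UNPROFILED_COUNT = 22  # stated on the page; excluded from both mixes


def mix(rows, column):
    """Count profiled people per label; column 1 is expertise, 2 is recognition."""
    return Counter(row[column] for row in rows)


expertise_mix = mix(PROFILED, 1)
recognition_mix = mix(PROFILED, 2)
total_on_record = len(PROFILED) + UNPROFILED_COUNT

for title, counts in (("expertise mix", expertise_mix), ("recognition mix", recognition_mix)):
    print(title)
    for label, count in counts.most_common():
        print(f"  {label}: {count} of {len(PROFILED)}")
```

Read this way, the profiled endorsement skews toward governance figures and commentators with mass-public recognition, which is the kind of skew the recognition-mix note asks the reader to weigh.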

Load-bearing commitments

Worldview positions this strategy quietly assumes. If the claim fails empirically or philosophically, the strategy loses its target or its premise.

Faster is better because the trajectory is good.

Fails if: the trajectory is bad, in which case going faster simply means arriving at catastrophe sooner.

Coordination will fail anyway, so defect first.

Fails if: coordination was in fact achievable, in which case acceleration was a self-fulfilling defection.

Coordinates

Conflicts, grouped by mechanism · 8

Lever opposition

same lever, opposite pull · The pair's primary lever is the same; they pull it in opposite directions. A portfolio containing both is internally incoherent on that lever.

Frame opposition

incompatible premises · The strategies accept different premises about what AI is or what the binding problem is. They conflict not on lever choice but on the frame that makes lever choice sensible.

Complements, grouped by mechanism · 4

Same phase, different layer

same stage, distinct levers · Both are active in the same phase of the transition but act on different layers (model vs institution vs culture). They cover different failure modes inside the same window.

Stage-sequenced

one sets up the other · The pair is phase-offset: one acts before the transition, the other during or after. The first creates the conditions under which the second binds.

Adjacent bet

different levers, loosely coupled · Different levers, different directions of action. They reinforce only via the general principle that covering more bets dominates covering fewer.

Same-lever reinforce

same lever, same pull, different mechanism · Both strategies pull the same lever in the same direction by different means. They stack: doing both amplifies the pull, at the cost of double-counting in portfolio audits.

Axis position

Source note: Acceleration strategy.md