Scope ↓ · speed timing

Abandon superintelligence

The risk from superintelligence is unbounded while the value forgone by abandoning it is bounded, and permanent global coordination against the technology is feasible enough to be worth attempting.

Mechanism

A civilizational commitment to permanently forgo AI above some capability threshold, analogous to cloning moratoria or the Biological Weapons Convention (BWC).

If it succeeds: what binds next

The civilizational moratorium holds indefinitely. The binding problem becomes enforcement across generations, because the payoff from defecting grows with the accumulated capability forgone.

A strategy that produces a worse next problem than the one it solved has not done durable work.

People on the record

6 people on record. Profiled figures appear first, each with their tier. Tag: abandon-superintelligence.

expertise mix · 4 profiled

recognition mix

A strategy whose endorsement skews toward commentators or experts from other domains is in a different epistemic state from one endorsed mostly by frontier-builders. Read the mix across both axes (expertise and recognition); see the board for the criteria. Counts cover the 4 profiled people on this strategy (2 unprofiled people are excluded).

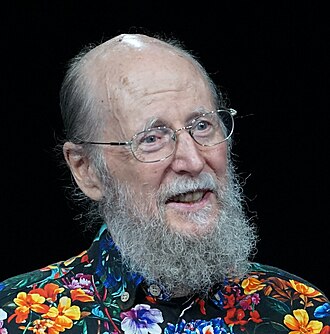

Bill Joy

Deep ML / safety technical · Mass-public recognition

Richard S. Sutton

Deep ML / safety technical · Known across the AI/safety field

Roman Yampolskiy

Deep ML / safety technical · Known across the AI/safety field

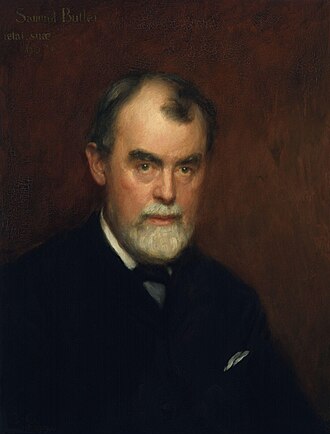

Samuel Butler

Expert in another field · Known across the AI/safety field

Avi Loeb

Harvard astrophysicist; Galileo Project director

Hans Moravec

Robotics pioneer (1948–); author of 'Mind Children'

Coordinates

Conflicts, grouped by mechanism

Frame opposition (3)

Incompatible premises. The strategies accept different premises about what AI is or what the binding problem is. They conflict not on lever choice but on the frame that makes lever choice sensible.

Complements, grouped by mechanism

Same-lever reinforce (4)

Same lever, same pull, different mechanism. Both strategies pull the same lever in the same direction by different means. They stack: doing both amplifies the pull, at the cost of double-counting in portfolio audits.

Shared authority

Same legitimacy source. Different levers, same legitimacy source (democratic, state, technical, market). The pair hangs together under one kind of authority; it stands or falls with that authority.

Same-side diversification

Same side, different lever. Both act on the same side (AI or world) but pull distinct levers. They cover several failure modes on that side while leaving the other side uncovered.

Same-lever twins (4)

Both use the same lever in the same direction. Usually redundant inside a portfolio: each dollar or unit of effort buys only one lever pull, even if two strategies are named.

Axis position

Source note: Abandon superintelligence strategy.md