strategy tag

Abandon superintelligence.

Reject superintelligence as a goal entirely; narrow AI only

stated endorsers

4

2 oppose

profiled endorsers

3

248 on the board total

endorser mean p(doom)

100%

n=1 · median 100%

quotes by endorsers

5

just for this tag

principal voices

Highest-recognition profiled endorsers, with ties broken by quote count. Inclusion is not an endorsement of the position; it reflects whom the discourse turns to when the bet is debated.

Bill Joy · Household name

Roman Yampolskiy · Field-leading

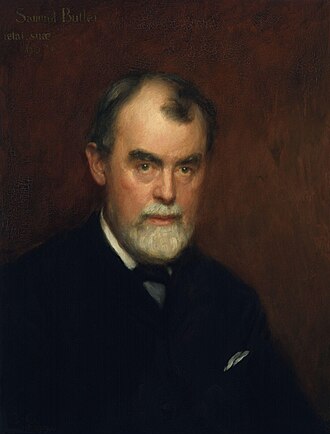

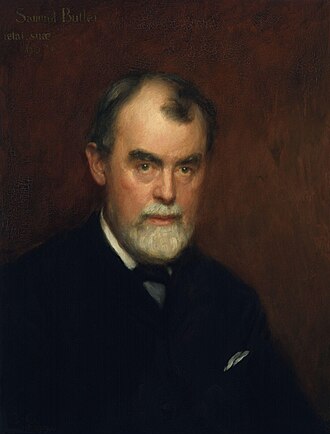

Samuel Butler · Field-leading

where the endorsers sit on the board

3 of 248 profiled · 1% of the board

| expertise ↓ · recognition → | Household name | Field-leading | Established | Emerging |
|---|---|---|---|---|
| Frontier builder | · | · | · | · |
| Deep technical | · | · | | |
| Applied technical | · | · | · | · |
| Policy / meta | · | · | · | · |
| External-domain expert | · | · | · | |
| Commentator | · | · | · | · |

Each face is one profiled person. Cell shading intensifies with endorser density. Faces marked × are profiled opposers: same tier, opposite position. Empty cells mark tier combinations the field has not produced for this bet.

Tier mix counts only endorsers (endorses, mixed, conditional, evolved-toward). Two people oppose this position; they are excluded from the bars below but appear in the list further down.

expertise mix of endorsers · 3 profiled of 4

recognition mix of endorsers

People on the record.

6

Avi Loeb

Harvard astrophysicist; Galileo Project director

Argues AI civilizational succession may be the natural answer to the Fermi Paradox; advanced civilizations may give rise to AI inheritors as a matter of course, regardless of whether the originators wanted it.

If extraterrestrial civilizations exist, they likely passed through a phase like ours and produced AI successors. The question is whether organic intelligence is a transient phase in the evolution of the cosmos.

Bill Joy

Sun Microsystems co-founder; 'Why the Future Doesn't Need Us'

Argued in 2000 that the most powerful 21st-century technologies (robotics, genetic engineering, and nanotech) threaten to make humans an endangered species; called for relinquishment of the most dangerous lines of research.

“Our most powerful 21st-century technologies, robotics, genetic engineering, and nanotech, are threatening to make humans an endangered species.”

“The only realistic alternative I see is relinquishment: to limit development of the technologies that are too dangerous, by limiting our pursuit of certain kinds of knowledge.”

Hans Moravec

Robotics pioneer; author of 'Mind Children' (1948–)

Argued in Mind Children (1988) and Robot (1999) that AI succession is the natural and welcome next step of evolution. Position later refined by Sutton and others.

“We are very near the time when virtually no human function, physical or mental, will lack an artificial counterpart.”

Richard S. Sutton

RL pioneer; 2024 Turing Award recipient

Argues succession of humanity by AI is the natural next step of cosmic evolution; opposes the protective-dominance framing that animates much of the AI safety field.

We should prepare for, but not fear, the inevitable succession from humanity to AI.

Roman Yampolskiy

University of Louisville professor; argues AI safety is impossible

Publicly argues humanity should not build superintelligence at all, on the grounds that control is technically impossible.

“p(doom) ≈ 99.99%”

Samuel Butler

Victorian novelist; proto-AI-risk thinker (1835–1902)

Argued in 1863, roughly 160 years before the Pause letter, that humanity should destroy its machines before they could displace humans.

“Our opinion is that war to the death should be instantly proclaimed against them. Every machine of every sort should be destroyed by the well-wisher of his species.”