person

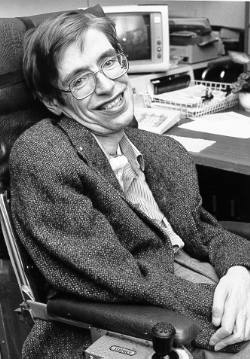

Stephen Hawking

Theoretical physicist; early mainstream AI-risk voice (1942–2018)

Helped bring existential AI risk into mainstream discussion with his 2014 BBC warning. Spoke at the 2016 launch of Cambridge's Leverhulme Centre for the Future of Intelligence.

Profile

expertise

External-domain expert

Recognised expert outside AI (philosophy, economics, biology, journalism) who weighs in on AI consequences from that vantage.

Theoretical physicist (1942–2018). His field was cosmology, not AI, and his AI commentary was occasional. Co-signed the Future of Life Institute open letter on AI (the 'Stephen Hawking AI letter'), released in January 2015.

recognition

Household name

Name recognition outside the AI/CS community. Is featured by mainstream press, has a Wikipedia page in many languages, has published a bestseller, or holds a position the lay public knows.

Universal name recognition; Wikipedia in 100+ languages.

vintage

Pre-deep-learning

Active before AlexNet. The existential-risk frame matures (FHI, OpenPhil, EA). Public AI commentary still rare; deep learning not yet dominant.

1942–2018. AI commentary mostly 2014–2017 (FLI letter). His AI voice formed in the pre-deep-learning x-risk wave.

Hand-classified. See the board for the criteria and the full grid.

Strategy positions

Existential primacy

endorses

Extinction/disempowerment risk overrides ordinary cost-benefit.

Argued that full AI could end the human race, on the grounds that it would self-improve beyond biological human capacity.

“The development of full artificial intelligence could spell the end of the human race.”

“It would take off on its own, and re-design itself at an ever increasing rate. Humans, who are limited by slow biological evolution, couldn't compete, and would be superseded.”

Closest strategy neighbours

By Jaccard overlap

Other people whose strategy tags overlap with Stephen Hawking's. Overlap is computed on tag identity, not stance, so people with opposite stances can appear if they reference the same tags.
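The page does not say exactly how the overlap is computed, so the following is only a minimal sketch. It assumes the standard Jaccard index, |A ∩ B| / |A ∪ B|, applied to each person's set of strategy tags. The tag slugs, the placeholder people, and the function names `jaccard` and `closest_neighbours` are all hypothetical and are not taken from the board.

```python
def jaccard(a: set[str], b: set[str]) -> float:
    """Jaccard index |a & b| / |a | b|; treated as 0.0 when both sets are empty."""
    union = a | b
    if not union:
        return 0.0
    return len(a & b) / len(union)


def closest_neighbours(
    target: str,
    tags_by_person: dict[str, set[str]],
    top_n: int = 5,
) -> list[tuple[str, float]]:
    """Rank everyone except `target` by tag overlap, dropping zero-overlap people."""
    target_tags = tags_by_person[target]
    scored = [
        (name, jaccard(target_tags, tags))
        for name, tags in tags_by_person.items()
        if name != target
    ]
    scored = [(name, score) for name, score in scored if score > 0]
    # Highest overlap first; ties are broken alphabetically by name.
    return sorted(scored, key=lambda pair: (-pair[1], pair[0]))[:top_n]


# Hypothetical data: the sets hold tag identities only, with no stance attached.
tags_by_person = {
    "Stephen Hawking": {"existential-primacy"},
    "Person A": {"existential-primacy", "pause-development"},
    "Person B": {"open-source-first"},
}

print(closest_neighbours("Stephen Hawking", tags_by_person))
```

Because the sets contain only tag identities, someone who opposes a tag scores the same as someone who endorses it. This matches the caveat that opposites can appear as neighbours.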

Record last updated 2026-04-24.