Control mechanism · AI artefact

Alignment first

Technical alignment is solvable before critical capability thresholds close, and aligned systems compose safely into aligned populations.

Mechanism

Invest primarily in interpretability, scalable oversight, and post-training methods so AI does what principals intend.

What this name has meant

vintage drift · The name is stable; the content has shifted. A reader who acts on the label without asking which vintage is meant risks arguing with a position nobody currently holds.

Solving the principal–agent problem for arbitrary specified values. A research agenda against future agentic systems.

Training models to produce helpful, honest, harmless outputs via RLHF and constitutional methods. Current alignment practice absorbs this label.

If it succeeds: what binds next

Aligned frontier AI exists. Principals now choose what to align to, and operator legitimacy becomes the binding constraint. The race is over who gets to be the principal.

A strategy that produces a worse next problem than the one it solved has not done durable work.

Falsification signal

Interpretability and oversight methods stop scaling with model capability: stronger models become less, rather than more, inspectable.

A strategy held without a falsification signal is not strategy; it is affiliation. Continued support after this signal lands is identity, not bet. See the identity diagnostic.

Self-undermining threshold

overshoot risk · When alignment investment hollows out institutional capacity.

Concentrating talent and funding into alignment produces a shortage of the democratic and institutional capacity an aligned superintelligence would land into. Solved alignment, dysfunctional substrate.

Every strategy has a stable region where it reinforces itself and an unstable region where pursuit defeats it. The threshold between them is usually narrower than advocates acknowledge.

People on the record

103 · Profiled figures appear first, with their tier in small caps. Each face links to the person and their full quote record. Tag: alignment-first.

[Charts: expertise mix and recognition mix · 29 profiled]

A strategy whose endorsement skews to commentators or external-domain experts is in a different epistemic state from one endorsed mostly by frontier-builders. The mix is read carefully across both axes; see the board for criteria. Counts are over the 29 profiled people on this strategy (74 unprofiled excluded).

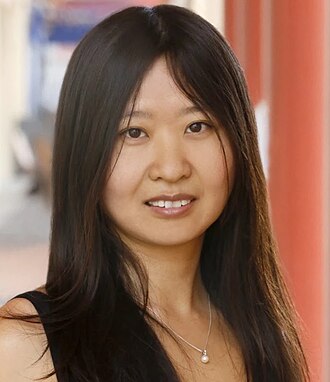

Ajeya Cotra

Governance, policy, strategy · Recognised inside subfield

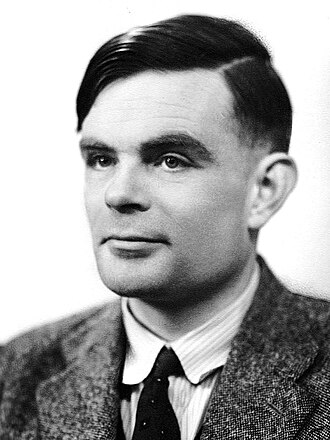

Alan Turing

Deep ML / safety technical · Mass-public recognition

Anca Dragan

Builds frontier systems · Known across the AI/safety field

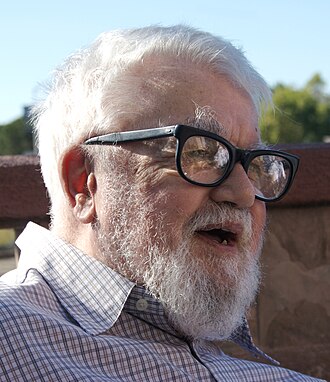

Andrew G. Barto

Deep ML / safety technical · Known across the AI/safety field

Brian Christian

Expert in another field · Known across the AI/safety field

Buck Shlegeris

Deep ML / safety technical · Recognised inside subfield

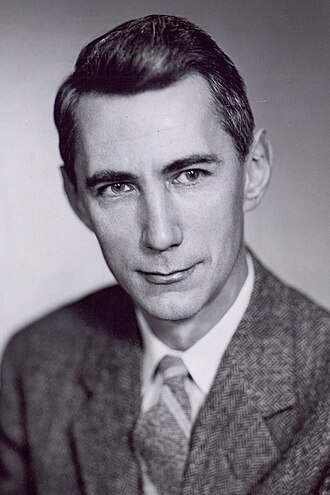

Claude Shannon

Deep ML / safety technical · Mass-public recognition

Daniel Dewey

Deep ML / safety technical · Recognised inside subfield

Doris Tsao

Expert in another field · Known across the AI/safety field

Evan Hubinger

Deep ML / safety technical · Recognised inside subfield

Iyad Rahwan

Deep ML / safety technical · Known across the AI/safety field

Jan Leike

Builds frontier systems · Known across the AI/safety field

John McCarthy

Deep ML / safety technical · Mass-public recognition

John Schulman

Builds frontier systems · Known across the AI/safety field

Joseph Carlsmith

Governance, policy, strategy · Known across the AI/safety field

Marvin Minsky

Deep ML / safety technical · Mass-public recognition

Nick Bostrom

Governance, policy, strategy · Mass-public recognition

Norbert Wiener

Expert in another field · Mass-public recognition

Owain Evans

Deep ML / safety technical · Recognised inside subfield

Paul Christiano

Builds frontier systems · Known across the AI/safety field

Richard Ngo

Deep ML / safety technical · Recognised inside subfield

Rob Miles

Applied or adjacent technical · Known across the AI/safety field

Rohin Shah

Deep ML / safety technical · Recognised inside subfield

Ryan Greenblatt

Deep ML / safety technical · Recognised inside subfield

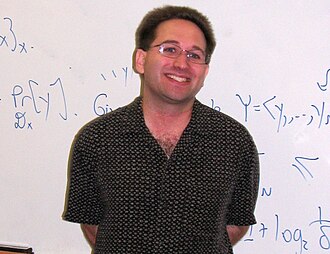

Scott Aaronson

Deep ML / safety technical · Known across the AI/safety field

Stuart Armstrong

Deep ML / safety technical · Recognised inside subfield

Stuart Russell

Deep ML / safety technical · Mass-public recognition

Victoria Krakovna

Deep ML / safety technical · Recognised inside subfield

Wei Dai

Deep ML / safety technical · Recognised inside subfield

Aaron Courville

Université de Montréal; Deep Learning textbook co-author

Adam Jermyn

Anthropic; previously astrophysics

Adam Kalai

Microsoft Research; AI fairness and safety

Agnes Callard

University of Chicago philosopher; aspiration theorist

Alex Irpan

Google Brain alumnus; Sorta Insightful blog

Alex Pan

Berkeley CHAI; reward hacking

Alex Turner

DeepMind alignment researcher; shard theory co-originator

67 more on the record. See the full tag page: alignment-first

Load-bearing commitments

Worldview positions this strategy quietly assumes. If the claim fails empirically or philosophically, the strategy loses its target or its premise.

Principals have determinate values AI can learn.

Fails if: values are contested or constructed. The strategy then loses its target.

AI is a tool with controllable properties.

Fails if: AI has emergent agency. The tool frame then breaks down and alignment becomes negotiation.

Coordinates

Conflicts, grouped by mechanism

0 · No strict conflicts catalogued. This strategy pulls a lever that nothing else pulls in the opposite direction.

Complements, grouped by mechanism

5 complements catalogued.

Cross-side bridge

one AI-side, one world-side · One acts on the model, the other on institutions or culture. The bridge hedges against both artefact-level and substrate-level failure.

Adjacent bet

different levers, loosely coupled · Different levers, different directions of action. They reinforce only via the general principle that covering more bets dominates covering fewer.

Stage-sequenced

one sets up the other · The pair is phase-offset: one acts before the transition, the other during or after. The first creates the conditions under which the second binds.

Same phase, different layer

same stage, distinct levers · Both are active in the same phase of the transition but act on different layers (model vs institution vs culture). They cover different failure modes inside the same window.

Same-lever twins

5 · Both use the same lever in the same direction. Usually redundant inside a portfolio: each dollar or effort unit only buys one lever pull, even if two strategies are named.

Axis position

Source note: Alignment first strategy.md