Institutional capacity ↑ · institutional

Governance first

Institutional capacity is the binding constraint; without it no technical success prevents misuse, capture, or concentration.

Mechanism

Build licensing, liability, audits, independent evaluation, and international coordination to supervise AI before it becomes ungovernable.

What this name has meant

vintage drift

The name is stable; the content has shifted. A reader who acts on the label without asking which vintage is meant risks arguing with a position nobody currently holds.

Passing substantive AI legislation.

Often means standard-setting at safety institutes plus international declarations, which is closer to voluntary restraint with state endorsement.

If it succeeds: what binds next

Functional regulatory infrastructure exists. The regulator now has to make substantive decisions under the same empirical uncertainty that justified creating it in the first place.

A strategy that produces a worse next problem than the one it solved has not done durable work.

Falsification signal

Enacted regulations cover less than 20% of frontier compute by some date, or institutional capture moves faster than capacity building.
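The coverage half of this signal is simple arithmetic: the share of frontier compute that falls under enacted regulation, compared with the 20% threshold. A minimal sketch follows, assuming compute can be tallied in blocks and each block marked as covered or not. The names `ComputeBlock`, `coverage_share`, and `signal_fired` are hypothetical, and the figures are made-up placeholders, not measurements.

```python
from dataclasses import dataclass

# The 20% threshold is taken from the falsification signal in the text.
THRESHOLD = 0.20


@dataclass(frozen=True)
class ComputeBlock:
    """A slice of frontier compute (hypothetical unit of accounting)."""
    name: str        # illustrative label only
    compute: float   # frontier compute, in any consistent unit
    regulated: bool  # True if enacted regulation covers this block


def coverage_share(blocks):
    """Fraction of total frontier compute covered by enacted regulation."""
    total = sum(b.compute for b in blocks)
    if total <= 0:
        raise ValueError("total frontier compute must be positive")
    covered = sum(b.compute for b in blocks if b.regulated)
    return covered / total


def signal_fired(blocks, threshold=THRESHOLD):
    """True when coverage falls below the threshold (the signal lands)."""
    return coverage_share(blocks) < threshold


# Placeholder figures for illustration only.
blocks = [
    ComputeBlock("A", 60.0, regulated=False),
    ComputeBlock("B", 25.0, regulated=False),
    ComputeBlock("C", 15.0, regulated=True),
]

print(f"coverage: {coverage_share(blocks):.0%}, signal fired: {signal_fired(blocks)}")
```

The sketch leaves out the "by some date" deadline and the capture-versus-capacity comparison, since the text specifies neither a date nor a measure for either rate.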

A strategy held without a falsification signal is not strategy; it is affiliation. Continued support after this signal lands is identity, not bet. See the identity diagnostic.

Self-undermining threshold

overshoot risk

When pursued through national regulation without international coordination.

Uncoordinated regulation produces regulatory capture opportunities in each jurisdiction. Captured regulators then accelerate the concentration the strategy was supposed to prevent.

Every strategy has a stable region where it reinforces itself and an unstable region where pursuit defeats it. The threshold between them is usually narrower than advocates acknowledge.

Historical analogue

Aviation · FAA / equivalent

Every strategy inherits a plausible ceiling from its precedent. The analogue conditions the realistic reach.

Produced

Industry-wide standard practice; airworthiness oversight.

Did not produce

Slow on emerging categories (drones); certification capture risk.

Addresses 2 failure scenarios

People on the record

252

Profiled figures appear first, with their tier in small caps. Each face links to the person and their full quote record. Tag: governance-first.

expertise mix · 53 profiled

recognition mix

A strategy whose endorsement skews to commentators or external-domain experts is in a different epistemic state from one endorsed mostly by frontier-builders. The mix is read carefully across both axes; see the board for criteria. Counts are over the 53 profiled people on this strategy (199 unprofiled excluded).

Abeba Birhane

Deep ML / safety technical · Known across the AI/safety field

Allan Dafoe

Governance, policy, strategy · Known across the AI/safety field

Alondra Nelson

Governance, policy, strategy · Known across the AI/safety field

Amartya Sen

Expert in another field · Mass-public recognition

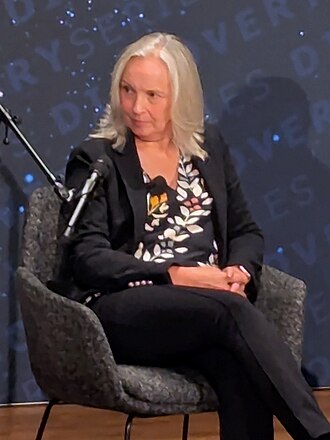

Amy Zegart

Governance, policy, strategy · Known across the AI/safety field

Andrew Yang

Governance, policy, strategy · Mass-public recognition

Bret Taylor

Governance, policy, strategy · Mass-public recognition

Carl Benedikt Frey

Expert in another field · Known across the AI/safety field

Cathy O'Neil

Applied or adjacent technical · Mass-public recognition

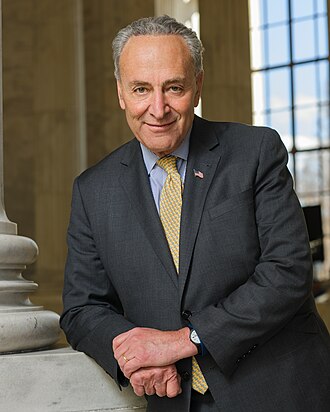

Chuck Schumer

Governance, policy, strategy · Mass-public recognition

Daron Acemoglu

Expert in another field · Mass-public recognition

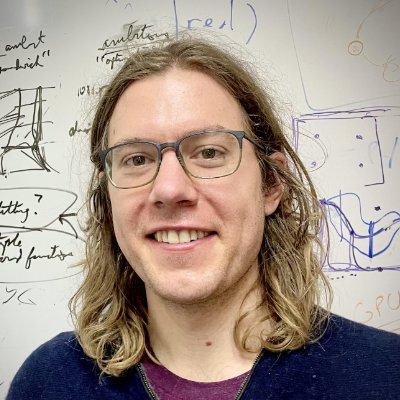

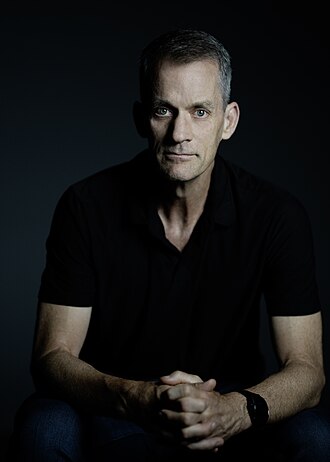

David Krueger

Deep ML / safety technical · Recognised inside subfield

Demis Hassabis

Builds frontier systems · Mass-public recognition

Edward Felten

Deep ML / safety technical · Known across the AI/safety field

Evan Williams

Governance, policy, strategy · Mass-public recognition

Frank Pasquale

Governance, policy, strategy · Known across the AI/safety field

Gary Marcus

Deep ML / safety technical · Mass-public recognition

Gillian Hadfield

Governance, policy, strategy · Known across the AI/safety field

Helen Toner

Governance, policy, strategy · Known across the AI/safety field

Holden Karnofsky

Governance, policy, strategy · Known across the AI/safety field

Jack Clark

Governance, policy, strategy · Known across the AI/safety field

Jason Matheny

Governance, policy, strategy · Known across the AI/safety field

Jeff Dean

Builds frontier systems · Known across the AI/safety field

Jen Easterly

Governance, policy, strategy · Known across the AI/safety field

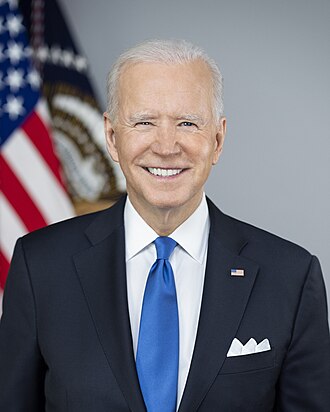

Joe Biden

Governance, policy, strategy · Mass-public recognition

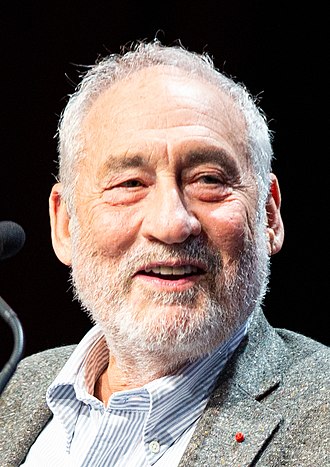

Joseph Stiglitz

Expert in another field · Mass-public recognition

Joy Buolamwini

Deep ML / safety technical · Mass-public recognition

Kamala Harris

Governance, policy, strategy · Mass-public recognition

Kara Swisher

Governance, policy, strategy · Mass-public recognition

Kate Darling

Expert in another field · Known across the AI/safety field

Luciano Floridi

Governance, policy, strategy · Known across the AI/safety field

MacKenzie Scott

Governance, policy, strategy · Mass-public recognition

Margaret Mitchell

Deep ML / safety technical · Known across the AI/safety field

Maria Ressa

Expert in another field · Mass-public recognition

Mireille Hildebrandt

Governance, policy, strategy · Recognised inside subfield

Mustafa Suleyman

Builds frontier systems · Mass-public recognition

216 more on the record. See the full tag page: governance-first

Coordinates

Conflicts, grouped by mechanism

0

No strict conflicts catalogued. This strategy pulls a lever that nothing else pulls in the opposite direction.

Complements, grouped by mechanism

5

Same-lever reinforce

same lever, same pull, different mechanism

Both strategies pull the same lever in the same direction by different means. They stack: doing both amplifies the pull, at the cost of double-counting in portfolio audits.

Cross-side bridge

one AI-side, one world-side

One acts on the model, the other on institutions or culture. The bridge hedges against both artefact-level and substrate-level failure.

Same-side diversification

same side, different lever

Both act on the same side (AI or world) but pull distinct levers. They cover several failure modes on that side while leaving the other side uncovered.

Same phase, different layer

same stage, distinct levers

Both are active in the same phase of the transition but act on different layers (model vs institution vs culture). They cover different failure modes inside the same window.

Same-lever twins

7

Both use the same lever in the same direction. Usually redundant inside a portfolio: each dollar or effort unit only buys one lever pull, even if two strategies are named.

Axis position

Source note: Governance first strategy.md