Institutional capacity ↑ · institutional

Arms control treaty

Sovereigns accept binding constraints faster when they negotiate them directly than when the constraints are delegated to agencies; the historical base rate for durable restraint is treaty-based.

Mechanism

Bind AI via bilateral or multilateral treaty (SALT / START / BWC / CWC model) with verification obligations and dispute resolution.

Falsification signal

Signatories cannot enforce the treaty domestically (the BWC pattern).

A strategy held without a falsification signal is not strategy; it is affiliation. Continued support after this signal lands is identity, not bet. See the identity diagnostic.

Historical analogue

Nuclear · SALT / START

Every strategy inherits a plausible ceiling from its precedent. The analogue conditions the realistic reach.

Produced

No state-to-state nuclear use in 80 years, modest proliferation control.

Did not produce

Did not prevent the emergence of nine nuclear-armed states. The BWC analogue, which lacked verification, fared worse.

People on the record (18)

Profiled figures appear first, with their tier in small caps. Each face links to the person and their full quote record. Tag: international-treaty.

Fumio Kishida

Governance, policy, strategy · Mass-public recognition

Yi Zeng

Deep ML / safety technical · Known across the AI/safety field

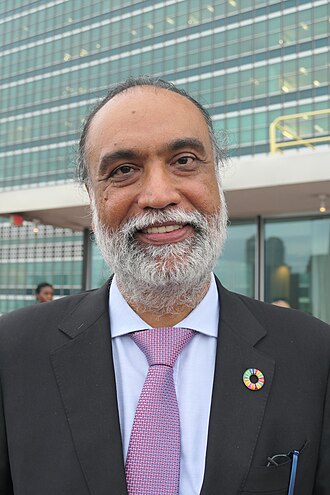

Amandeep Singh Gill

UN Secretary-General's Envoy on Technology

Angela Kane

Former UN High Representative for Disarmament Affairs

Anja Kaspersen

UN senior fellow; disarmament diplomat

Bletchley Declaration Signatories

First international AI Safety Summit signatories (2023)

Brian Tse

Founder of Concordia AI; China AI safety

Gabriela Ramos

UNESCO Assistant Director-General for Social and Human Sciences

Henry Kissinger

Former U.S. Secretary of State; co-author 'The Age of AI'

Isabella Wilkinson

Chatham House international affairs AI researcher

Jeffrey Ding

George Washington University; ChinAI newsletter

Jon Bateman

Carnegie senior fellow; AI and cyber strategy

Kati Suominen

Founder of Nextrade Group; AI trade policy

Robert Trager

Oxford Martin AI governance scholar

Sara Hossein

International AI law scholar

Toby Walsh

UNSW Sydney; AI safety advocate

Victor Gao

Chinese diplomat; AI dialogue participant

Yuhwen Yang

Carnegie Endowment China AI research

Load-bearing commitments

Worldview positions this strategy quietly assumes. If the claim fails empirically or philosophically, the strategy loses its target or its premise.

Arms control is tractable and enforceable for AI-like technology.

Fails if: verification is impossible, in which case the treaty is a declaration rather than a constraint.

Coordinates

Conflicts, grouped by mechanism (1)

Frame opposition

incompatible premises

The strategies accept different premises about what AI is or what the binding problem is. They conflict not on lever choice but on the frame that makes lever choice sensible.

Complements, grouped by mechanism (5)

Same phase, different layer

same stage, distinct levers

Both are active in the same phase of the transition but act on different layers (model vs institution vs culture). They cover different failure modes inside the same window.

Cross-side bridge

one AI-side, one world-side

One acts on the model, the other on institutions or culture. The bridge hedges against both artefact-level and substrate-level failure.

Same-lever reinforce

same lever, same pull, different mechanism

Both strategies pull the same lever in the same direction by different means. They stack: doing both amplifies the pull, at the cost of double-counting in portfolio audits.

Stage-sequenced

one sets up the other

The pair is phase-offset: one acts before the transition, the other during or after. The first creates the conditions under which the second binds.

Same-lever twins (8)

Both use the same lever in the same direction. Usually redundant inside a portfolio: each dollar or effort unit only buys one lever pull, even if two strategies are named.

Axis position

Source note: Arms control treaty strategy.md