Control mechanism · AI artefact

Interpretability first

Mechanistic understanding is a precondition for reliable oversight; behavioural evaluation without interpretability cannot rule out deceptive alignment.

Mechanism

Scale mechanistic interpretability on frontier models so training, evaluation, and governance decisions read internal structure, not just behaviour.

If it succeeds: what binds next

Models become legible. Legibility itself then becomes both an asset and a target: interpretability tools turn into security-sensitive infrastructure.

A strategy that produces a worse next problem than the one it solved has not done durable work.

Falsification signal

Leading labs cannot produce mechanistic explanations of their own frontier models within two to three years of release.

A strategy held without a falsification signal is not a strategy; it is affiliation. Continued support after this signal lands is identity, not a bet. See the identity diagnostic.

Self-undermining threshold

Overshoot risk: safety talent concentrates in interpretability, away from governance and resilience.

If interpretability then fails to scale, the adjacent strategy families that were stripped of talent will have had no time to mature as alternatives.

Every strategy has a stable region where it reinforces itself and an unstable region where pursuit defeats it. The threshold between them is usually narrower than advocates acknowledge.

Addresses 1 failure scenario

People on the record

15 people on the record. Profiled figures appear first, each with their tier. Tag: interpretability-bet.

Expertise mix and recognition mix · 4 profiled

A strategy whose endorsement skews toward commentators or external-domain experts is in a different epistemic state from one endorsed mostly by frontier-builders. The mix should be read across both axes, expertise and recognition; see the board for criteria. Counts cover the 4 profiled people on this strategy (the 11 unprofiled people are excluded).

Chris Olah

Builds frontier systems · Known across the AI/safety field

Connor Leahy

Deep ML / safety technical · Known across the AI/safety field

Cynthia Rudin

Deep ML / safety technical · Known across the AI/safety field

Neel Nanda

Deep ML / safety technical · Recognised inside subfield

Asma Ghandeharioun

Google DeepMind; 'Patchscopes' for LLM interpretability

David Bau

Northeastern; mechanistic interpretability of LLMs

Fernanda Viégas

Harvard; ex-Google PAIR; data visualization

Jacob Andreas

MIT NLP; language models as belief reports

John Wentworth

Independent alignment researcher; natural abstractions

Lucius Bushnaq

Apollo Research; mechanistic interpretability

Martin Wattenberg

Harvard; ex-Google PAIR; visualization for ML

Rich Caruana

Microsoft Research; interpretable ML

Roger Grosse

U Toronto; Anthropic; influence functions for LLMs

Trenton Bricken

Anthropic; mechanistic interpretability

Tristan Hume

Anthropic; mechanistic interpretability

Coordinates

Conflicts, grouped by mechanism

No strict conflicts catalogued (0). This strategy pulls a lever that nothing else pulls in the opposite direction.

Complements, grouped by mechanism

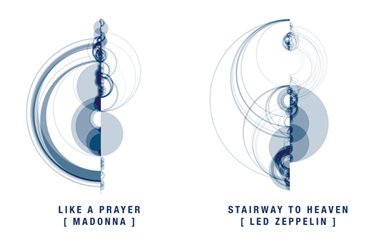

Same-lever reinforce (5)

Same lever, same pull, different mechanism. Both strategies pull the same lever in the same direction by different means. They stack: doing both amplifies the pull, at the cost of double-counting in portfolio audits.

Adjacent bet

Different levers, loosely coupled. The strategies act on different levers, in different directions. They reinforce each other only through the general principle that covering more bets dominates covering fewer.

Cross-side bridge

One AI-side, one world-side. One acts on the model, the other on institutions or culture. The bridge hedges against both artefact-level and substrate-level failure.

Same-lever twins (2)

Both strategies use the same lever in the same direction, so they are usually redundant inside a portfolio: each dollar or unit of effort buys only one lever pull, even if two strategies are named.


Source note: Interpretability first strategy.md