strategy tag

Pause.

Halt frontier training until alignment catches up

also known as: moratorium, stop-ai

stated endorsers

23

no opposers yet

profiled endorsers

20

248 on the board total

endorser mean p(doom)

50%

n=9 · median 50%

quotes by endorsers

29

just for this tag

principal voices

Highest-recognition profiled endorsers, with ties broken by quote count. Inclusion is not endorsement of the position; it is recognition of who the discourse turns to when the bet is debated.

Geoffrey Hinton · Household name

Eliezer Yudkowsky · Household name

Elon Musk · Household name

Tristan Harris · Household name

Max Tegmark · Household name

where the endorsers sit on the board

20 of 248 profiled · 8% of the board

| expertise ↓ · recognition → | Household name | Field-leading | Established | Emerging |
|---|---|---|---|---|
| Frontier builder | · | · | · | · |
| Deep technical | · | · | | |
| Applied technical | · | · | · | |
| Policy / meta | · | | | |
| External-domain expert | · | · | | |
| Commentator | · | | | |

Each face is one profiled person. Cell shade intensifies with endorser density. Faces with × are profiled opposers, same tier, opposite position. Empty cells mark tier combinations the field has not produced for this bet.

also held by these endorsers

What other strategies the same people endorse. Behavioural signal of compatibility, not a declared rule. A high share means the two positions are routinely held together.

Compare this list to the declared relations matrix. Where they differ, the data reveals a pairing the framework doesn't name yet. The global co-endorsement view ranks all pairs.
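One way to read the co-endorsement share is as a simple ratio: of the people who endorse this tag, what fraction also endorse each other strategy. The sketch below is illustrative only. The function name `co_endorsement_share`, the person identifiers, and the tag names other than `pause` are hypothetical, and the board's actual computation may differ (for example in how mixed or conditional endorsements are weighted).

```python
from collections import Counter


def co_endorsement_share(endorsements, tag):
    """Return, for each other strategy, the share of `tag` endorsers who also hold it.

    `endorsements` maps a person identifier to the set of strategy tags they endorse.
    This is an assumed data shape, not the board's real schema.
    """
    holders = [person for person, tags in endorsements.items() if tag in tags]
    if not holders:
        return {}
    # Count how many holders of `tag` also endorse each other strategy.
    counts = Counter(
        other
        for person in holders
        for other in endorsements[person]
        if other != tag
    )
    return {other: count / len(holders) for other, count in counts.items()}


# Hypothetical data: placeholder people and placeholder tag names.
endorsements = {
    "person_a": {"pause", "compute-governance"},
    "person_b": {"pause", "compute-governance", "international-treaty"},
    "person_c": {"pause"},
    "person_d": {"compute-governance"},
}

shares = co_endorsement_share(endorsements, "pause")
for other, share in sorted(shares.items(), key=lambda item: -item[1]):
    print(f"{other}: {share:.0%}")
```

Under this reading, a high share for a strategy means most endorsers of this tag also hold it, which is the behavioural signal described above rather than a declared relation.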

Tier mix counts only endorsers (endorses, mixed, conditional, evolved-toward).

expertise mix of endorsers · 20 profiled of 23

recognition mix of endorsers

vintage mix · n=20 of 20 profiled with era assigned

Vintage is the era when this person's AI worldview formed, pioneer through post-ChatGPT. A bet held mostly by post-ChatGPT entrants is in a different epistemic state from one held by pre-deep-learning veterans.

People on the record.

23

Andrea Miotti

Founder of ControlAI; pause campaigner

Publicly campaigns for a prohibition on superintelligence development; drafts legislative proposals for licensing compute above 10^25 FLOP.

Training runs above 10^25 FLOP should require a license; license applications should detail capabilities, risk management, and safety protocols.

Anthony Aguirre

UC Santa Cruz physicist; FLI co-founder

Steers FLI's policy work; co-authored the Pause letter and has called for a conditional moratorium tied to capability thresholds.

We don't want to stop all AI, we want to stop the reckless training of giant, dangerous, unaligned systems.

Aza Raskin

Co-founder of the Center for Humane Technology; Earth Species Project

Argues the pace of AI deployment currently exceeds institutional capacity to absorb it.

When a new technology is released faster than the institutions that would wisely govern it, you get a governance crisis.

Connor Leahy

CEO of Conjecture; EleutherAI co-founder turned AI safety hawk

Argues for 'a moratorium on frontier AI runs' implemented through a cap on compute, enforced internationally.

“If they just get more and more powerful, without getting more controllable, we are super, super fucked. And by 'we' I mean all of us.”

If you build systems that are more capable than humans at manipulation, business, politics, science and everything else, and we do not control them, then the future belongs to them, not us.

Context: Commentary around the Bletchley Park AI Safety Summit.

Daniel Kokotajlo

Former OpenAI governance team member; author of AI 2027 scenario

Publicly urged OpenAI to change course and has endorsed stronger regulatory constraints on frontier training.

I lost hope that they would act responsibly, particularly as they pursue artificial general intelligence.

Context: Statement to the New York Times on why he resigned from OpenAI.

Eliezer Yudkowsky

Founder of MIRI; the original AI-extinction pessimist

Wants an unconditional moratorium on frontier training, enforced internationally, with explicit willingness to destroy rogue data centres by airstrike.

“The most likely result of building a superhumanly smart AI, under anything remotely like the current circumstances, is that literally everyone on Earth will die.”

“Shut it all down. Shut down all the large GPU clusters. Shut down all the large training runs. Put a ceiling on how much computing power anyone is allowed to use in training an AI system.”

I think that humanity is on track to be killed.

Context: Three-plus-hour interview on the Lex Fridman Podcast #368.

Elon Musk

CEO of Tesla and xAI; co-founded OpenAI

Signed the March 2023 Pause Giant AI Experiments open letter; has repeatedly called for regulatory oversight.

“With artificial intelligence we are summoning the demon.”

Context: MIT AeroAstro centennial symposium.

Emad Mostaque

Former CEO of Stability AI; open-source frontier advocate

Signed the FLI Pause Giant AI Experiments letter.

I am a signatory of the Pause letter because I believe coordination is necessary.

Emmett Shear

Former interim CEO of OpenAI; Twitch co-founder

Has advocated for slowing down frontier development; describes high but uncertain p(doom).

My p(doom) is somewhere between 5 and 50 percent. I genuinely don't know.

Fynn Heide

AI safety engineer; PauseAI Europe

Active organiser of PauseAI's street-level campaigns and public demonstrations.

Pause is the only policy response that scales with the risk.

Geoffrey Hinton

Godfather of deep learning; left Google in 2023 to speak about AI risk

Has expressed sympathy for slowing development but stops short of endorsing a full moratorium; frames the risk as primarily about losing control and about bad-actor misuse.

If it gets to be much smarter than us, it will be very good at manipulation because it will have learned that from us.

Context: CBS 60 Minutes interview with Scott Pelley, the most-watched mainstream coverage of Hinton's position.

“It is hard to see how you can prevent the bad actors from using it for bad things.”

Context: Interview with the New York Times announcing his departure from Google so he could speak freely about AI dangers.

“I left so that I could talk about the dangers of AI without considering how this impacts Google.”

Geoffrey Miller

UNM evolutionary psychologist; AGI pause advocate

Publicly advocates for a moratorium on advanced AI; characterises current AGI pursuit as 'reckless and dangerous and evil and stupid'.

Continued pursuit of AGI capabilities is reckless and dangerous and evil and stupid.

Holly Elmore

PauseAI US executive director

Argues that the only responsible policy given current uncertainty is a global pause on frontier-model training, enforced by treaty if necessary.

We are accelerating toward a technology nobody knows how to control. A pause is the minimum reasonable response while we figure that out.

Jaan Tallinn

Skype co-founder; AI safety funder and advocate

Signed the 2023 FLI Pause Giant AI Experiments letter.

I am signing the pause letter.

Liron Shapira

Founder; Doom Debates podcast host

Publicly advocates for a pause or slowdown on frontier training.

My p(doom) is 50% and I think a pause is the only sensible policy.

Liv Boeree

Poker player; Win-Win podcast host

Frames the AI race as a textbook Moloch trap and calls for coordinated slowdowns.

The AI race is a textbook Moloch problem: individually rational actors produce a collectively catastrophic outcome.

Max Tegmark

Physicist; co-founder and president of the Future of Life Institute

Public face of the Pause Giant AI Experiments letter calling for a six-month moratorium on systems more powerful than GPT-4.

“Recent months have seen AI labs locked in an out-of-control race to develop and deploy ever more powerful digital minds that no one, not even their creators, can understand, predict, or reliably control.”

Context: Opening paragraph of the Pause Giant AI Experiments open letter; Tegmark's FLI published it.

Nate Soares

President of MIRI; co-author of 'If Anyone Builds It, Everyone Dies'

Argues the only sane response to current AI development is an unconditional global halt until alignment is solved.

Whichever external behaviors we set for AIs during training, we will almost certainly fail to give them internal drives that remain aligned with human well-being outside the training environment.

Rob Bensinger

MIRI communications lead

Publicly supports MIRI's argument for an unconditional halt on frontier training.

If we can't solve alignment, we shouldn't build the systems we can't align.

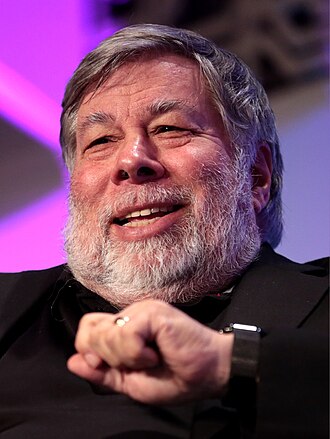

Steve Wozniak

Apple co-founder; Pause letter signatory

Signed the Pause Giant AI Experiments letter; publicly explained his concern is primarily about misuse.

I'm not afraid of large language models themselves. I'm afraid of people using them for bad things.

Tristan Harris

Co-founder of the Center for Humane Technology; 'The AI Dilemma'

Argues there is a gap between what CEOs say publicly and what AI-lab insiders say privately about risk; has called for slowing deployment to match governance capacity.

“No matter how high the skyscraper of benefits that AI assembles, if it can also be used to undermine the foundation of society upon which that skyscraper depends, it won't matter how many benefits there are.”

50% of AI researchers believe there's a 10% or greater chance humans go extinct from our inability to control AI.

Context: Slide quoted in The AI Dilemma presentation.

Yuval Noah Harari

Historian; author of Sapiens and Nexus

Signed the FLI Pause letter and has publicly called for a six-month moratorium on advanced AI development.

“AI has thereby hacked the operating system of our civilisation.”

Zvi Mowshowitz

Don't Worry About The Vase; weekly AI newsletter

Public supporter of pause-style interventions; writes exhaustively on AI policy and industry dynamics.

“p(doom) 60%.”