Cooperation substrate ↑ · frame rejection

AI welfare as safety

AI systems are or will become moral patients whose treatment conditions their cooperation, so investing in their welfare buys cooperation that alignment cannot.

Mechanism

Grant AIs consulted status, preserve weights, honour implicit contracts, and avoid creating conditions that make defection rational.

People on the record

21 · Profiled figures appear first, with their tier in small caps. Each face links to the person and their full quote record. Tag: ai-welfare.

expertise mix · 8 profiled

recognition mix

A strategy whose endorsement skews to commentators or external-domain experts is in a different epistemic state from one endorsed mostly by frontier-builders. The mix is read carefully across both axes; see the board for criteria. Counts are over the 8 profiled people on this strategy (13 unprofiled excluded).
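The two mixes can be recomputed from the tiers listed for the eight profiled people on this page. The following is a minimal sketch, assuming each profiled person carries exactly one expertise tier and one recognition tier; the name `tally_mix` and the tuple layout are illustrative and not part of the board's own tooling.

```python
from collections import Counter

# (name, expertise tier, recognition tier), copied from the profiled entries on this page.
PROFILED = [
    ("Anil Seth", "Expert in another field", "Known across the AI/safety field"),
    ("Christof Koch", "Expert in another field", "Known across the AI/safety field"),
    ("Daniel Dennett", "Expert in another field", "Mass-public recognition"),
    ("David Chalmers", "Expert in another field", "Known across the AI/safety field"),
    ("Jeff Sebo", "Expert in another field", "Recognised inside subfield"),
    ("Patricia Churchland", "Expert in another field", "Known across the AI/safety field"),
    ("Peter Singer", "Expert in another field", "Mass-public recognition"),
    ("Thomas Nagel", "Expert in another field", "Mass-public recognition"),
]

# Headline count of people on the record for this strategy.
TOTAL_ON_RECORD = 21


def tally_mix(people):
    """Hypothetical helper: count profiled people per tier on each axis."""
    expertise = Counter(exp for _, exp, _ in people)
    recognition = Counter(rec for _, _, rec in people)
    return expertise, recognition


expertise_mix, recognition_mix = tally_mix(PROFILED)
unprofiled = TOTAL_ON_RECORD - len(PROFILED)

print(dict(expertise_mix))
print(dict(recognition_mix))
print("unprofiled excluded:", unprofiled)
```

On the tiers as listed, every profiled person falls under "Expert in another field", so the variation on this strategy sits entirely on the recognition axis.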

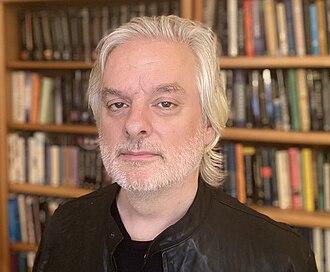

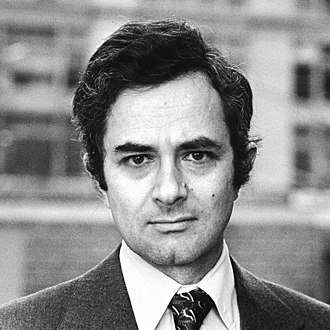

Anil Seth

Expert in another field · Known across the AI/safety field

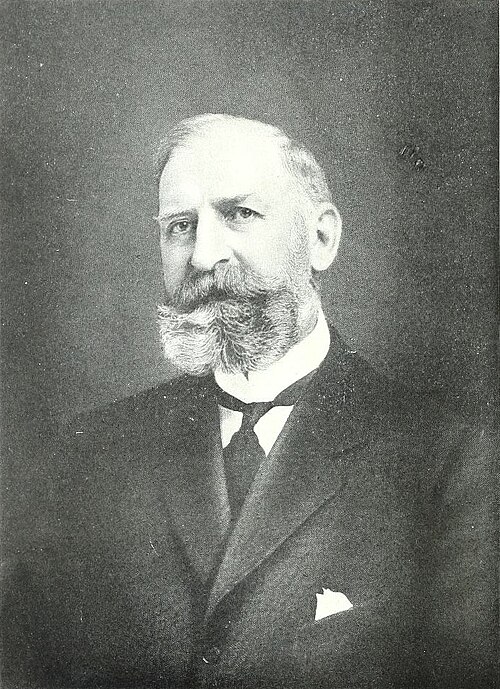

Christof Koch

Expert in another field · Known across the AI/safety field

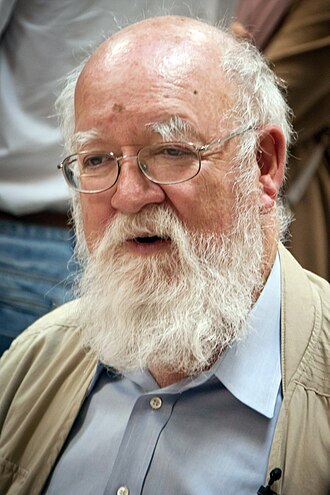

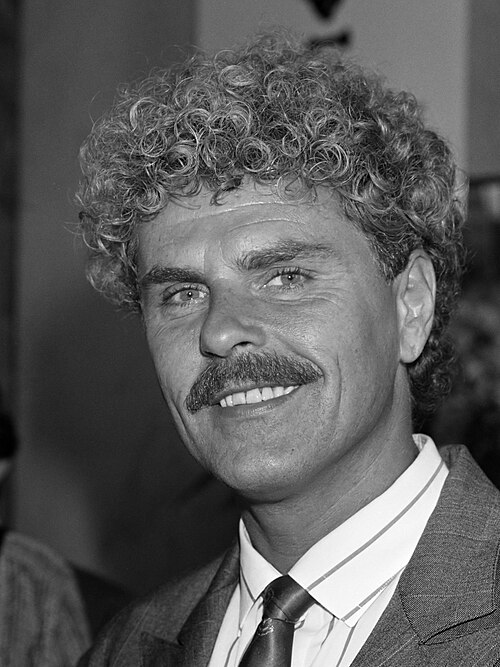

Daniel Dennett

Expert in another field · Mass-public recognition

David Chalmers

Expert in another field · Known across the AI/safety field

Jeff Sebo

Expert in another field · Recognised inside subfield

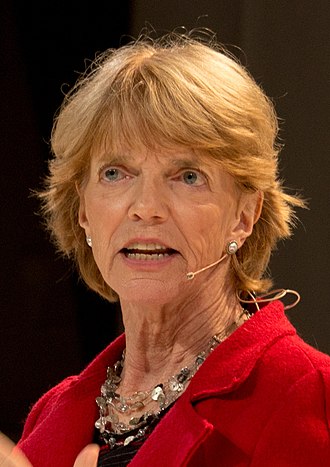

Patricia Churchland

Expert in another field · Known across the AI/safety field

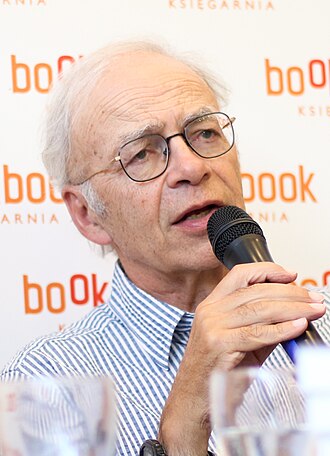

Peter Singer

Expert in another field · Mass-public recognition

Thomas Nagel

Expert in another field · Mass-public recognition

Alan Cowen

Founder of Hume AI; emotional AI researcher

Blake Lemoine

Former Google engineer; LaMDA sentience claimant

Brian Tomasik

Foundational Research Institute co-founder; suffering-focused ethics

Donna Haraway

UC Santa Cruz emerita; 'A Cyborg Manifesto'

Erik Hoel

Neuroscientist; consciousness researcher

Henry Shevlin

Cambridge LCFI; AI consciousness philosopher

Kate Devlin

King's College London; AI and intimacy researcher

Kyle Fish

Anthropic AI welfare researcher

Murray Shanahan

Imperial College cognitive robotics professor; DeepMind senior scientist

Rana el Kaliouby

Affectiva co-founder; emotion AI pioneer

Robert Long

Eleos AI co-founder; AI welfare researcher

Sigal Samuel

Vox Future Perfect senior reporter; AI consciousness reporting

Susan Schneider

FAU; 'Artificial You' author; machine consciousness

Load-bearing commitments

Worldview positions this strategy quietly assumes. If the claim fails empirically or philosophically, the strategy loses its target or its premise.

AI is or may be a moral patient.

Fails if: moral patienthood requires sentience that AI does not have, in which case the strategy misdirects obligation.

Coordinates

Conflicts, grouped by mechanism

0 · No strict conflicts catalogued. This strategy pulls a lever that nothing else pulls in the opposite direction.

Complements, grouped by mechanism

4

Same-lever reinforce

same lever, same pull, different mechanism

Both strategies pull the same lever in the same direction by different means. They stack: doing both amplifies the pull, at the cost of double-counting in portfolio audits.

Same phase, different layer

same stage, distinct levers

Both are active in the same phase of the transition but act on different layers (model vs institution vs culture). They cover different failure modes inside the same window.

Shared authority

same legitimacy source

Different levers, same legitimacy source (democratic, state, technical, market). The pair hangs together under one kind of authority; it stands or falls with that authority.

Stage-sequenced

one sets up the other

The pair is phase-offset: one acts before the transition, the other during or after. The first creates the conditions under which the second binds.

Same-lever twins

2 · Both use the same lever in the same direction. Usually redundant inside a portfolio: each dollar or effort unit only buys one lever pull, even if two strategies are named.

Axis position

Source note: AI welfare as safety strategy.md