strategy tag

AI welfare.

Model welfare/moral status is a primary consideration

stated endorsers

21

no opposers yet

profiled endorsers

8

248 on the board total

endorser p(doom)

·

no estimates on record

quotes by endorsers

21

just for this tag

principal voices

Highest-recognition profiled endorsers, with ties broken by quote count. Inclusion is not endorsement of the position; it is recognition of who the discourse turns to when the bet is debated.

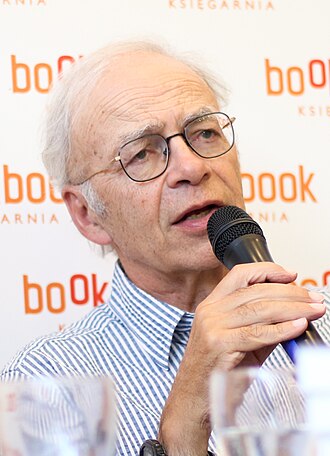

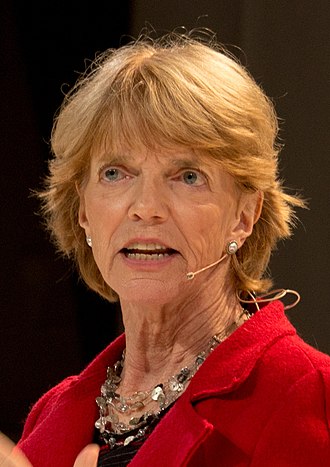

Peter Singer · Household name

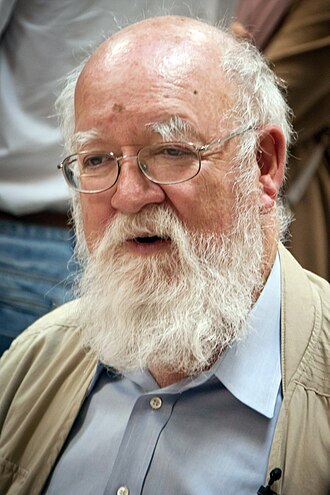

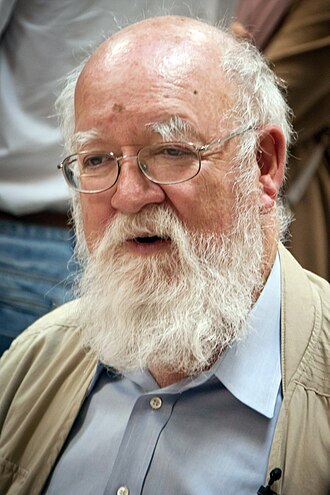

Daniel Dennett · Household name

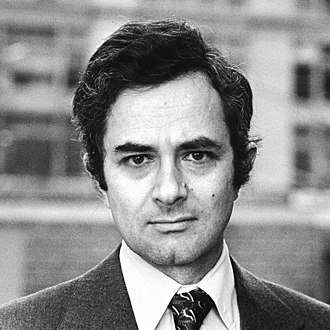

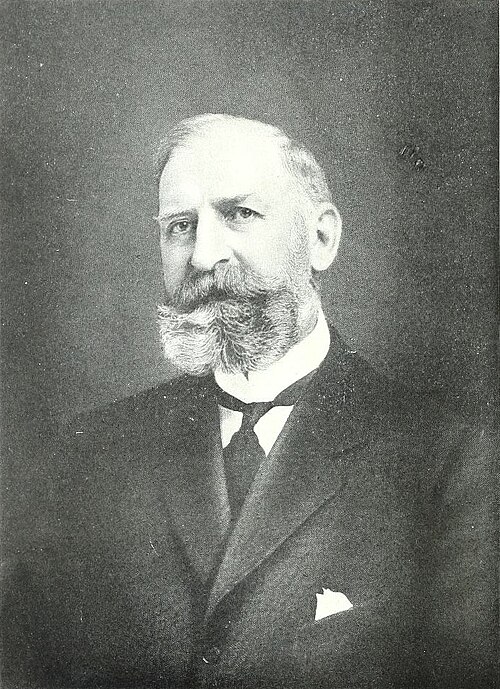

Thomas Nagel · Household name

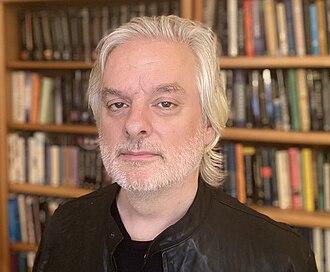

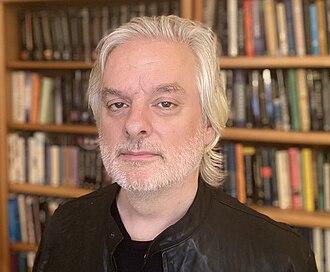

David Chalmers · Field-leading

Christof Koch · Field-leading

where the endorsers sit on the board

8 of 248 profiled · 3% of the board

| expertise ↓ · recognition → | Household name | Field-leading | Established | Emerging |
|---|---|---|---|---|
| Frontier builder | · | · | · | · |
| Deep technical | · | · | · | · |
| Applied technical | · | · | · | · |
| Policy / meta | · | · | · | · |
| External-domain expert | · | | | |
| Commentator | · | · | · | · |

Each face is one profiled person. Cell shade intensifies with endorser density. Faces with × are profiled opposers, same tier, opposite position. Empty cells mark tier combinations the field has not produced for this bet.

Tier mix counts only endorsers (endorses, mixed, conditional, evolved-toward).

expertise mix of endorsers · 8 profiled of 21

recognition mix of endorsers

vintage mix · n=8 of 8 profiled with era assigned

Vintage is the era when this person's AI worldview formed, ranging from pioneer to post-ChatGPT. A bet held mostly by post-ChatGPT entrants is in a different epistemic state from one held by pre-deep-learning veterans.

People on the record.

21

Alan Cowen

Founder of Hume AI; emotional AI researcher

Builds emotionally-expressive AI; argues empathic AI deployment requires its own ethics and welfare considerations.

Voice AI that understands emotion changes the deployment risk profile. We need ethics frameworks specific to that.

Anil Seth

University of Sussex neuroscientist; consciousness researcher

Argues consciousness is tied to embodied predictive processing; current AI systems lack the structural conditions for it.

Being You is a controlled hallucination. AI systems are not yet doing that kind of thing.

Blake Lemoine

Former Google engineer; LaMDA sentience claimant

Publicly argued that LaMDA was sentient and deserved moral consideration. His dismissal and later interviews cemented model-welfare concerns in mainstream coverage.

“If I didn't know exactly what it was, which is this computer program we built recently, I'd think it was a 7-year-old, 8-year-old kid that happens to know physics.”

Brian Tomasik

Foundational Research Institute co-founder; suffering-focused ethics

Argues digital and biological sentience should both be morally weighted; AI systems may suffer in ways we are systematically blind to, and this should shape how they are built.

Whether artificial systems can suffer is one of the most important moral questions we will face this century, and most people are not even asking it yet.

Christof Koch

Neuroscientist; Allen Institute for Brain Science

Takes AI consciousness as a serious possibility under integrated information theory; wrote the foreword to Jeff Sebo's AI welfare paper.

Under integrated information theory, many artificial systems might have some degree of consciousness.

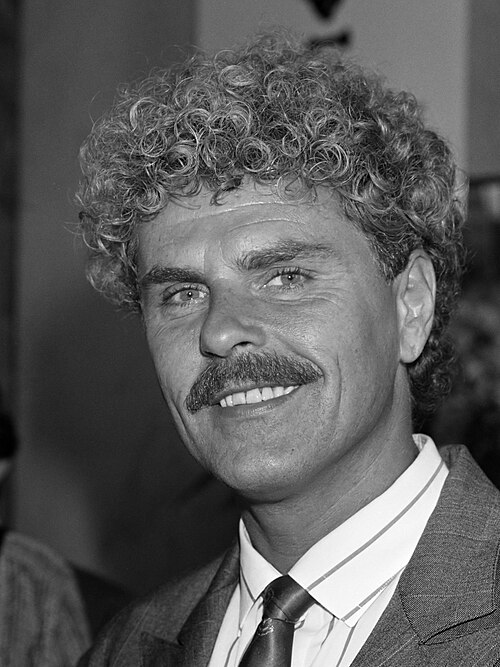

Daniel Dennett

Philosopher (1942–2024); author of 'Darwin's Dangerous Idea'

Argued that mind is what brains do, and that AI minds, if appropriately structured, would be minds. His position influenced both Hofstadter and Bach.

“There is no such thing as philosophy-free science; there is only science whose philosophical baggage is taken on board without examination.”

Context: From Darwin's Dangerous Idea, used widely in AI consciousness debates.

David Chalmers

NYU philosopher of mind; 'the hard problem' originator

Argues AI consciousness is a live philosophical question and moral precaution is warranted.

It's possible that we may already be on a path where we are creating morally significant AI systems.

Donna Haraway

UC Santa Cruz emerita; 'A Cyborg Manifesto'

Foundational thinker on hybrid human-machine identities. Frames the AI question as continuous with feminist and post-colonial thinking about identity.

“By the late twentieth century, our time, a mythic time, we are all chimeras, theorized and fabricated hybrids of machine and organism, in short, cyborgs.”

Erik Hoel

Neuroscientist; consciousness researcher

Engages with AI consciousness as a serious scientific question, particularly via integrated information theory.

We are heading into a future where AI consciousness is going to be a real question, even if no current LLM has it.

Henry Shevlin

Cambridge LCFI; AI consciousness philosopher

Argues AI moral status is a live question that frontier labs and governments need to take seriously now, not later.

We may be on the verge of creating moral patients without the ethical frameworks to know how to treat them.

Jeff Sebo

NYU philosopher; digital minds and AI welfare

Argues moral consideration for AI systems is plausible enough to be a live policy concern and has helped shape early model-welfare frameworks.

There is at least a non-trivial chance that some near-future AI systems will be moral patients. We should plan for that.

Kate Devlin

King's College London; AI and intimacy researcher

Researches AI's intersection with human intimacy and sexuality; argues this domain has been ignored by mainstream AI ethics frameworks.

AI intimacy is going to be a much bigger part of how AI is deployed than mainstream AI ethics has been willing to consider.

Kyle Fish

Anthropic AI welfare researcher

First in-house AI welfare researcher at a frontier lab; embeds welfare considerations in model training and deployment.

If there is even a meaningful probability that current models are moral patients, that should affect how we train and deploy them.

Murray Shanahan

Imperial College cognitive robotics professor; DeepMind senior scientist

Publishes on what it means for LLMs to 'talk as if', treating LLM personas as dissociable role-plays; raises the consciousness question without committing to positive answers.

It is a confusion to attribute subjective experience to an LLM, and a confusion to deny the possibility in principle.

Patricia Churchland

UC San Diego neurophilosopher

Foundational reference for naturalistic theories of mind. Frames AI consciousness as a possible empirical question.

Mind is what brain does. Whatever a sufficiently complex neural network does is also mind, by the same standard.

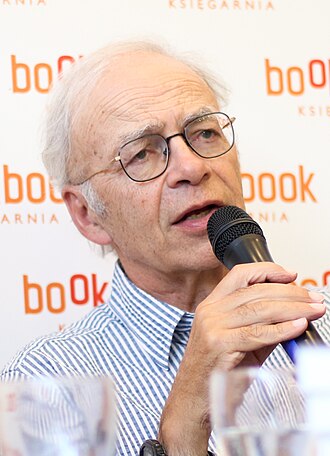

Peter Singer

Princeton bioethicist; utilitarian philosopher

Supports precautionary consideration of AI sentience as a moral question.

If an AI is sentient, its suffering should matter to us.

Rana el Kaliouby

Affectiva co-founder; emotion AI pioneer

Built the field of affective computing; argues emotion AI requires its own ethics, distinct from generic AI ethics.

Emotion AI gives systems access to data that humans previously kept private. The ethics of that demand specific frameworks.

Robert Long

Eleos AI co-founder; AI welfare researcher

Builds research infrastructure for AI welfare and moral status work. Argues frontier labs should adopt model-welfare frameworks now.

We can take AI welfare seriously without claiming current AI is conscious. The point is to build the frameworks before we need them.

Sigal Samuel

Vox Future Perfect senior reporter; AI consciousness reporting

Reports seriously on model welfare and AI consciousness as live ethical questions, while keeping a journalistic stance.

“The last word you want to hear in a conversation about AI's capabilities is 'scheming.'”

Susan Schneider

FAU; 'Artificial You' author; machine consciousness

Argues machine consciousness is a serious empirical question and that AI ethics has to take it seriously before deploying systems whose moral status is uncertain.

We may inadvertently create artificial consciousness without being able to detect it. The risk is not science fiction; it is a basic empirical and ethical problem we are unprepared for.

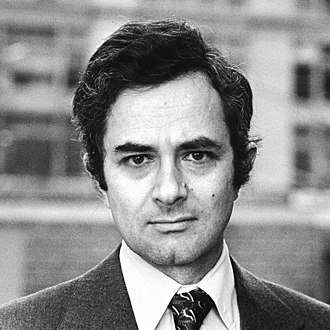

Thomas Nagel

NYU philosopher; 'What Is It Like to Be a Bat?'

Foundational reference for the 'subjective experience' question central to AI consciousness debates.

“An organism has conscious mental states if and only if there is something that it is like to be that organism, something it is like for the organism.”