strategy tag

Interpretability bet.

Mechanistic interpretability is necessary and sufficient to know models are safe

stated endorsers

14

1 oppose

profiled endorsers

3

248 on the board total

endorser p(doom)

·

no estimates on record

quotes by endorsers

14

just for this tag

principal voices

Highest-recognition profiled endorsers, with ties broken by quote count. Inclusion is not endorsement of the position; it is recognition of who the discourse turns to when the bet is debated.

Cynthia Rudin · Field-leading

Chris Olah · Field-leading

Neel Nanda · Established

where the endorsers sit on the board

3 of 248 profiled · 1% of the board

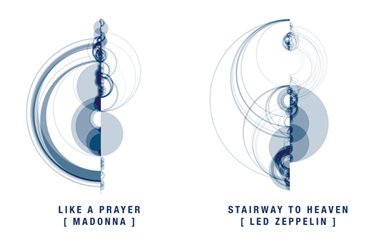

| expertise ↓ · recognition → | Household name | Field-leading | Established | Emerging |
|---|---|---|---|---|
| Frontier builder | · | · | · | |
| Deep technical | · | · | | |
| Applied technical | · | · | · | · |
| Policy / meta | · | · | · | · |
| External-domain expert | · | · | · | · |
| Commentator | · | · | · | · |

Each face is one profiled person. Cell shade intensifies with endorser density. Faces with × are profiled opposers, same tier, opposite position. Empty cells mark tier combinations the field has not produced for this bet.

Tier mix counts only endorsers (endorses, mixed, conditional, evolved-toward). 1 person opposes this position; they are not in the bars below but appear in the list further down.

expertise mix of endorsers · 3 profiled of 14

recognition mix of endorsers

People on the record.

15

Asma Ghandeharioun

Google DeepMind; 'Patchscopes' for LLM interpretability

Argues language models can be turned into interpretability tools for themselves; reframes mechanistic interpretation as a translation problem between hidden states and natural language.

“Patchscopes leverage the model's own ability to generate text to inspect its hidden representations, unifying many prior interpretability methods.”

Chris Olah

Anthropic interpretability co-founder; inventor of modern mech interp

Frames mechanistic interpretability as the tool most likely to let us verify whether a model's cognition matches its stated goal.

I'm most optimistic about safety paths that give us some kind of detailed mechanistic understanding of neural networks.

Connor Leahy

CEO of Conjecture; EleutherAI co-founder turned AI safety hawk

Argues current alignment approaches, including interpretability-only bets, are not sufficient; is sometimes explicitly pessimistic about the research path.

“The truth is, I do not know how to build an aligned system and I don't even know where to start.”

Cynthia Rudin

Duke professor; interpretable ML pioneer

Argues for inherently interpretable models over post-hoc explanations, a different flavour of interpretability than the mechanistic-interpretability school.

“Stop explaining black box machine learning models for high-stakes decisions and use interpretable models instead.”

David Bau

Northeastern; mechanistic interpretability of LLMs

Argues mechanistic interpretability is making rapid progress in localizing and editing knowledge inside transformer weights; views this as a foundation for safety oversight.

“Factual associations in GPT correspond to localized, directly editable computations in mid-layer feed-forward modules.”

Fernanda Viégas

Harvard; ex-Google PAIR; data visualization

Argues human–AI interaction is best designed when people can see and steer model internals; co-led major industry investments in this approach at Google PAIR before moving to Harvard.

Interactive visualizations turn opaque models into objects we can think with. That is the path to AI that humans can actually verify and shape.

Jacob Andreas

MIT NLP; language models as belief reports

Argues language models develop richer internal structure than behavior alone reveals; mechanistic and probing techniques are required to understand what they 'believe'.

Language models contain structured representations of the agents and situations described in their inputs. Reading those representations is closer to ethnography than to prompt engineering.

John Wentworth

Independent alignment researcher; natural abstractions

Argues alignment requires identifying the abstractions a model converges on; if these match human concepts, training-time supervision becomes far more reliable.

The natural abstractions hypothesis is roughly: a wide variety of cognitive systems will converge to use the same high-level abstractions for reasoning about the world.

Lucius Bushnaq

Apollo Research; mech interp

Argues interpretability tools are most valuable when explicitly designed to detect deceptive or strategic behaviours in models, not just to characterize benign features.

Interpretability that only finds nice features misses the alignment-relevant ones. We need methods designed to surface the deceptive behaviours we are most worried about.

Martin Wattenberg

Harvard; ex-Google PAIR; visualization for ML

Argues visualization is a primary research method for understanding modern neural networks, not a presentation layer, and that the field's safety guarantees rise and fall with the depth of that understanding.

If we can't see what models are doing, we can't trust them. Visualization is fundamental to building justified confidence in ML systems.

Neel Nanda

Mechanistic interpretability team lead at Google DeepMind

Advocates mechanistic interpretability as a scalable safety tool; also writes accessible tutorials to grow the research field.

Interpretability is, I think, the most promising general-purpose alignment approach.

Rich Caruana

Microsoft Research; interpretable ML

Argues high-stakes ML applications (health, criminal justice, finance) should default to interpretable models that practitioners can audit by hand, not opaque deep nets.

Black-box models are not appropriate for high-stakes decisions. We have interpretable models that match black-box accuracy in many of these domains; using them is a matter of choice, not capability.

Roger Grosse

U Toronto; Anthropic; influence functions for LLMs

Argues training-data influence functions let us trace specific model behaviours back to specific training examples, a form of interpretability indispensable for safety auditing.

We scale influence functions to language models with billions of parameters. The result is a tool for tracing what the model 'learned' from what it saw, at production scale.

Trenton Bricken

Anthropic mechanistic interpretability

Argues sparse-autoencoder-style decomposition of model activations into monosemantic features is a tractable path to making large models comprehensible enough to oversee.

We use a sparse autoencoder to decompose a small language model's MLP activations into monosemantic features, and we find that the resulting features can be interpreted, controlled, and used to track model behaviour.

Tristan Hume

Anthropic mechanistic interpretability

Argues sparse-autoencoder scaling can characterize what large frontier models 'see' in a way that makes external safety claims testable rather than aspirational.

We extracted millions of features from Claude 3 Sonnet using sparse autoencoders. The features map to specific concepts, including ones relevant to safety, like power-seeking behaviour and deception.