strategy tag

International treaty.

Arms-control-style treaty on frontier training or deployment

also known as: arms control

stated endorsers: 18 (no opposers yet)

profiled endorsers: 2 (248 on the board total)

endorser p(doom): no estimates on record

quotes by endorsers: 18 (just for this tag)

People on the record.

18

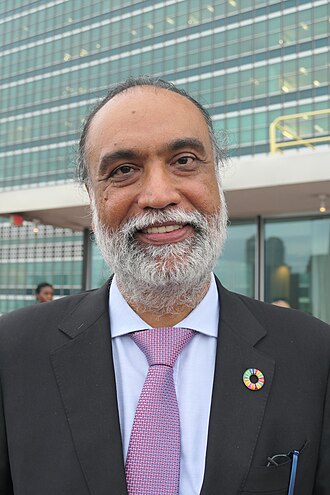

Amandeep Singh Gill

UN Secretary-General's Envoy on Technology

Leads UN-level AI coordination; argues AI governance needs global institutions built around inclusivity and scientific input.

International governance of AI must reflect all voices, not just the loudest national security voices.

Angela Kane

Former UN High Representative for Disarmament Affairs

Argues the disarmament-treaty playbook should be applied to AI; signed the CAIS statement.

“Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war.”

Anja Kaspersen

UN senior fellow; disarmament diplomat

Argues AI requires arms-control-style international verification mechanisms.

We built verification infrastructure for chemical and nuclear weapons. We can build it for AI, but only if we decide to.

Bletchley Declaration Signatories

First international AI Safety Summit signatories (2023)

First international statement acknowledging frontier AI risk as requiring cross-national coordination.

“There is potential for serious, even catastrophic, harm, either deliberate or unintentional, stemming from the most significant capabilities of these AI models.”

Brian Tse

Founder of Concordia AI; China AI safety

Builds dialogue capacity between Western and Chinese AI safety communities; argues productive US-China AI safety cooperation is feasible.

There is a real Chinese AI safety research community. Western governance conversations often miss it entirely.

Fumio Kishida

Former Prime Minister of Japan (2021–2024); Hiroshima AI Process architect

Architect of the Hiroshima AI Process; pushed for Global South inclusion in international AI governance.

“Japan will take the lead in establishing international rule-making that will enable the entire international community, including the Global South, to enjoy the benefit of safe, secure, and trustworthy generative AI.”

Gabriela Ramos

UNESCO Assistant Director-General for Social and Human Sciences

Led UNESCO's Recommendation on the Ethics of Artificial Intelligence, the first global agreement on AI ethics, adopted unanimously by 193 member states in November 2021.

“Decisions impacting millions of people should be fair, transparent and contestable. These new technologies must help us address the major challenges in our world today.”

Henry Kissinger

Former U.S. Secretary of State; co-author 'The Age of AI'

Argued AI requires a new diplomatic framework comparable in scale to nuclear arms control; called for U.S.-China dialogue specifically on AI's strategic implications.

We need to start serious discussions about how to keep AI from running off into territories nobody wants to go. The challenge is not technological, it is the absence of any framework for international agreement.

Isabella Wilkinson

Chatham House international affairs AI researcher

Argues that international coordination on AI, modelled on nuclear non-proliferation institutions, is the missing layer in AI governance.

AI governance needs an IAEA-equivalent for frontier training.

Jeffrey Ding

George Washington University; ChinAI newsletter

Argues the diffusion of general-purpose AI capabilities across great-power blocs makes coordination (rather than racing) the more accurate frame for U.S.–China competition.

Whichever country gets superior AI is not necessarily the one that develops it first. The diffusion phase, how fast and broadly capabilities spread, matters more than the leading edge.

Jon Bateman

Carnegie senior fellow; AI and cyber strategy

Argues the U.S.–China AI relationship needs structured dialogue mechanisms, including arms-control-style confidence-building measures, before crisis dynamics force the issue.

The U.S. and China need a Biden-Xi-style dialogue track on AI specifically, not because we will agree on values, but because we cannot afford to crisis-manage on top of misperception.

Kati Suominen

Founder of Nextrade Group; AI trade policy

Argues AI governance should be integrated with trade-and-investment frameworks (USMCA, WTO).

Putting AI governance outside trade frameworks creates two regimes that will collide. Better to integrate them.

Robert Trager

Oxford Martin AI governance scholar

Argues verifiable compute accounting is the key primitive for any international AI treaty.

Verifiable compute accounting is the missing primitive for international AI governance. Without it, treaties have nothing to check.

Sara Hossein

International AI law scholar

Argues existing international human-rights frameworks can be extended to govern AI without inventing new institutions from scratch.

We already have human rights law. It applies to AI. What we are missing is enforcement infrastructure.

Toby Walsh

UNSW Sydney; AI safety advocate

Argues lethal autonomous weapons must be banned by international treaty, modeled on the 1990s ban on blinding lasers; co-organized the 'killer robots' open letter, which gathered thousands of researcher signatures.

Killer robots will become the third revolution in warfare, after gunpowder and nuclear arms. They will fundamentally lower the threshold for armed conflict.

Victor Gao

Chinese diplomat; AI dialogue participant

Participates in US-China AI safety dialogues; publicly advocates for great-power coordination on AI.

The United States and China must find a way to cooperate on AI safety even as they compete economically.

Yi Zeng

Chinese Academy of Sciences; Brain-inspired Cognitive AI Lab director

Participant in US-China track II dialogues on AI safety.

AI safety should not be an area of geopolitical competition; it is a global public good.

Yuhwen Yang

Carnegie Endowment China AI research

Reports on the Chinese AI ecosystem using sources U.S. analysts often miss; argues mutual understanding is necessary for any international AI agreement.

Western AI policy debates often misread Chinese AI policy because they read English summaries rather than primary Chinese sources.