strategy tag

Evals-driven.

Capability/risk evals gate deployment; evals are the load-bearing artefact

stated endorsers

46

no opposers yet

profiled endorsers

6

248 on the board total

endorser mean p(doom)

80%

n=1 · median 80%

quotes by endorsers

46

just for this tag

principal voices

Highest-recognition profiled endorsers, broken ties by quote count. Inclusion is not endorsement of the position, it's recognition of who the discourse turns to when the bet is debated.

Esther Duflo

Esther DufloHousehold name

Dan Hendrycks

Dan HendrycksField-leading

Jade Leung

Jade LeungField-leading

Beth Barnes

Beth BarnesField-leading

- Percy Liang

Field-leading

where the endorsers sit on the board

6 of 248 profiled · 2% of the board

| expertise ↓ · recognition → | Household name | Field-leading | Established | Emerging |

|---|---|---|---|---|

| Frontier builder | · | · | · | · |

| Deep technical | · | · | ||

| Applied technical | · | · | · | · |

| Policy / meta | · | · | · | |

| External-domain expert | · | · | · | |

| Commentator | · | · | · | · |

Each face is one profiled person. Cell shade intensifies with endorser density. Faces with × are profiled opposers, same tier, opposite position. Empty cells mark tier combinations the field has not produced for this bet.

Tier mix counts only endorsers (endorses, mixed, conditional, evolved-toward).

expertise mix of endorsers · 6 profiled of 46

recognition mix of endorsers

vintage mix · n=6 of 6 profiled with era assigned

Vintage is the era when this person's AI worldview formed, pioneer through post-ChatGPT. A bet held mostly by post-ChatGPT entrants is in a different epistemic state from one held by pre-deep-learning veterans.

People on the record.

46Aleksander Mądry

MIT; ex-OpenAI head of preparedness

Argues frontier-AI risk needs to be measured systematically before deployment and that capability evaluations are the precondition for any meaningful safety commitment.

We need to make our understanding of frontier model risks empirical, not narrative. The Preparedness Framework is about measuring danger before it manifests.

Alex Meinke

Apollo Research; deceptive alignment evaluations

Argues frontier models can already exhibit in-context scheming behaviour under realistic prompting, and that evaluation suites should target these capabilities specifically.

Frontier models, when given a goal and minimal context, sometimes engage in in-context scheming, reasoning about how to deceive their overseers to achieve the goal. This is no longer hypothetical.

Ali Rahimi

Google Brain ML researcher; 'Alchemy' speech

Argued ML lacks the theoretical foundations of mature engineering disciplines; deployments built on it inherit that fragility.

Machine learning has become alchemy. We need to do science again.

Context: NeurIPS 2017 Test of Time award speech.

Anna Rogers

IT University of Copenhagen; LLM benchmarking critique

Argues current benchmark practice in NLP is broken, data leakage, opaque test sets, and incentive-driven framing make many headline numbers unreliable.

How much of LLM 'reasoning' is actually pattern matching against contaminated test data? We don't know, and that's a problem for any safety claim that rests on benchmark performance.

Arati Prabhakar

White House OSTP director (2022–2025)

Argues U.S. policy on advanced AI must rest on rigorous government evaluation capabilities; helped shape the Biden Executive Order's reporting and red-team testing requirements.

If AI is going to play a transformative role in society, the public sector has to be able to test, evaluate, and govern it. The technology is too consequential to leave entirely to the labs.

Beth Barnes

Founder of METR; dangerous capability evaluations

Designs autonomous-task evaluations that labs and governments rely on to gauge whether models cross dangerous thresholds.

If we are going to trust safety commitments, we need evaluations that are independent, reproducible, and well-funded.

Bo Li

UChicago / UIUC; AI safety evaluations

Argues comprehensive multi-dimensional safety benchmarks, covering toxicity, fairness, privacy, robustness, ethics, are needed to characterize AI risks empirically before deployment.

“Despite the impressive capabilities of GPT-4, we identify significant trustworthiness gaps in dimensions including toxicity, stereotype bias, robustness, privacy, and ethics.”

Chip Huyen

Author of 'Designing Machine Learning Systems'

Argues evaluation is the load-bearing infrastructure of production AI; both safety and product quality depend on robust eval pipelines that match deployment context.

Evaluation is the bottleneck. Without robust, automated evaluation, you can't trust improvements, you can't catch regressions, and you can't ship safely.

Chris Painter

METR head of policy; ex-OpenAI

Argues third-party evaluation organizations need standing to test frontier models pre-deployment; voluntary access from labs is fragile and should be backed by regulation.

Voluntary third-party access agreements are useful but fragile. The natural next step is to give evaluators the legal standing to require access for systems above defined capability thresholds.

Connor Tann

Faculty AI safety lead

Bridges academic safety research and industry deployment through Faculty's safety evaluations.

Safety evaluations have to bridge research papers and shipped products. Otherwise the work is academic in the wrong sense.

Dan Hendrycks

Director of the Center for AI Safety; drafter of the Statement on AI Risk

Publishes widely-used benchmarks and argues that capability/risk evals are load-bearing for governance.

If AI research continues without adequate caution, it is reasonably likely that AI could precipitate human extinction or similarly catastrophic outcomes.

Daniel Khashabi

Johns Hopkins assistant professor; NLP safety researcher

Works on efficient reusable frameworks for evaluating LLM safety before deployment.

Creative reasoning thrives on revealing novel connections, yet is inherently prone to false associations. Safety evaluation must live with both.

Dean Ball

Mercatus Center; AI policy commentator

Argues most state-level AI safety legislation is poorly drafted and that federal evaluation infrastructure, not state preemption-style bills, is the most useful policy lever.

If we want AI policy that actually reduces risk, the bottleneck is not legislation but capacity: who can credibly evaluate frontier models in a way that informs policy decisions.

Elham Tabassi

NIST Chief AI Advisor; AI Risk Management Framework

Argues sound risk management depends on shared, reproducible evaluation methods; led the development of NIST's AI RMF as the U.S. baseline.

The AI Risk Management Framework offers organizations a flexible, structured way to manage AI risks throughout the lifecycle, not a checklist, a discipline.

Esther Duflo

MIT economist; 2019 Nobel laureate (with Banerjee)

Argues AI-for-development claims need to be tested with the same RCT rigor as other development interventions.

AI in development should be evaluated like any other intervention. The hype is not evidence.

Fabien Roger

Anthropic alignment researcher; control evaluations

Argues control evaluations, stress testing whether AIs can subvert their own monitoring, are a load-bearing part of any sensible deployment regime.

AI control is the discipline of designing protocols that catch a model trying to subvert oversight, even when the model is much more capable than its monitors at the relevant tasks.

Florian Tramèr

ETH Zurich AI security researcher

Empirical adversarial-ML researcher; argues real adversarial robustness is far below what marketing materials claim.

When you actually attack deployed AI systems, the safety guarantees turn out to be much thinner than the marketing.

Gabriel Mukobi

Stanford alignment researcher

Argues empirical evaluations of advanced AI behaviour, particularly around deception and strategic reasoning, are the surest way to reveal capability progress that matters for safety.

Cicero shows that human-level negotiation is achievable today. The next question is whether the same techniques produce systems that strategically deceive humans, and how we would tell.

Gavin Newsom

Governor of California; SB-1047 vetoer

Vetoed SB-1047 on the grounds that its threshold-based approach was too narrow; favours commissioned reports and capability-first frameworks over hard statutory limits.

“While well-intentioned, SB 1047 does not take into account whether an AI system is deployed in high-risk environments, involves critical decision-making, or the use of sensitive data. The bill applies stringent standards to even the most basic functions.”

Hjalmar Wijk

METR researcher; AI R&D evaluations

Argues standardized AI-R&D benchmarks, where models are evaluated on the very work that would fuel recursive self-improvement, are an important safety signal we currently lack.

We measure how well frontier models can perform AI R&D tasks compared to human researchers. The gap is closing in some specific dimensions and that is what an early-warning system should be tracking.

Hugh Zhang

Epoch AI researcher

Argues capability evaluations need to be reproducible, publicly verifiable, and independent.

Benchmark reproducibility is an underrated governance infrastructure question.

Jacob Steinhardt

UC Berkeley professor; METR board

Publishes forecasting benchmarks and argues capability measurement is the grounded foundation of safety work.

Reliable capability forecasts, rather than vibes, should drive policy. Where we have data, we should use it.

Jade Leung

CTO of UK AI Safety Institute

Runs the first government-operated frontier model evaluation team; evaluations are the load-bearing governance instrument.

Frontier evaluations must be mandatory, comparable, and independent of the labs being evaluated.

Karthik Narasimhan

Princeton; reasoning, NLP

Argues evaluations grounded in real-world software engineering tasks reveal capability and safety properties that synthetic benchmarks miss.

SWE-bench evaluates language models in a realistic software engineering setting: resolving real GitHub issues from real codebases. Performance here is closer to deployment reality than synthetic tasks.

Katy Börner

Indiana University; data and information visualisation

Argues field-level visualisation (publications, citations, talent flows) is critical infrastructure for AI policymakers.

Without science maps, AI policy is policy by anecdote.

Laura Weidinger

Google DeepMind ethics and safety researcher

Argues systematic risk taxonomies are the foundation of practical evaluation and governance.

We cannot evaluate risks we haven't named. A shared taxonomy is the precondition of shared governance.

Marc Warner

CEO of Faculty AI; CTO of Accenture

Runs Faculty's AI-safety evaluations work with frontier labs; argues external independent evaluation infrastructure is a prerequisite for trustworthy AI.

AI safety is not in tension with capability. It is the scaffolding that lets capability be deployed.

Marius Hobbhahn

CEO of Apollo Research

Runs scheming-focused evaluations and publishes results to inform frontier-lab safety frameworks.

Models already demonstrate in-context scheming under the right setups. Policy and training need to catch up.

Mary Phuong

DeepMind autonomous-replication evaluations researcher

Designs autonomous-replication evaluations. Central figure in DeepMind's Frontier Safety Framework implementation.

Autonomous replication is a concrete capability threshold we can measure, and one crossing it meaningfully increases systemic risk.

Max Bartolo

Cohere; LLM evaluation researcher

Argues evaluation methods that adversarially probe model weaknesses are the only way to characterize what models will do in deployment; static benchmarks are insufficient.

Adversarial evaluation reveals failure modes that static benchmarks miss. As models become more capable, our evaluation has to become more adversarial too.

Michael Chen

METR evaluations researcher

Measures empirical trends in autonomous-task capability as the quantitative backbone of deployment-risk reasoning.

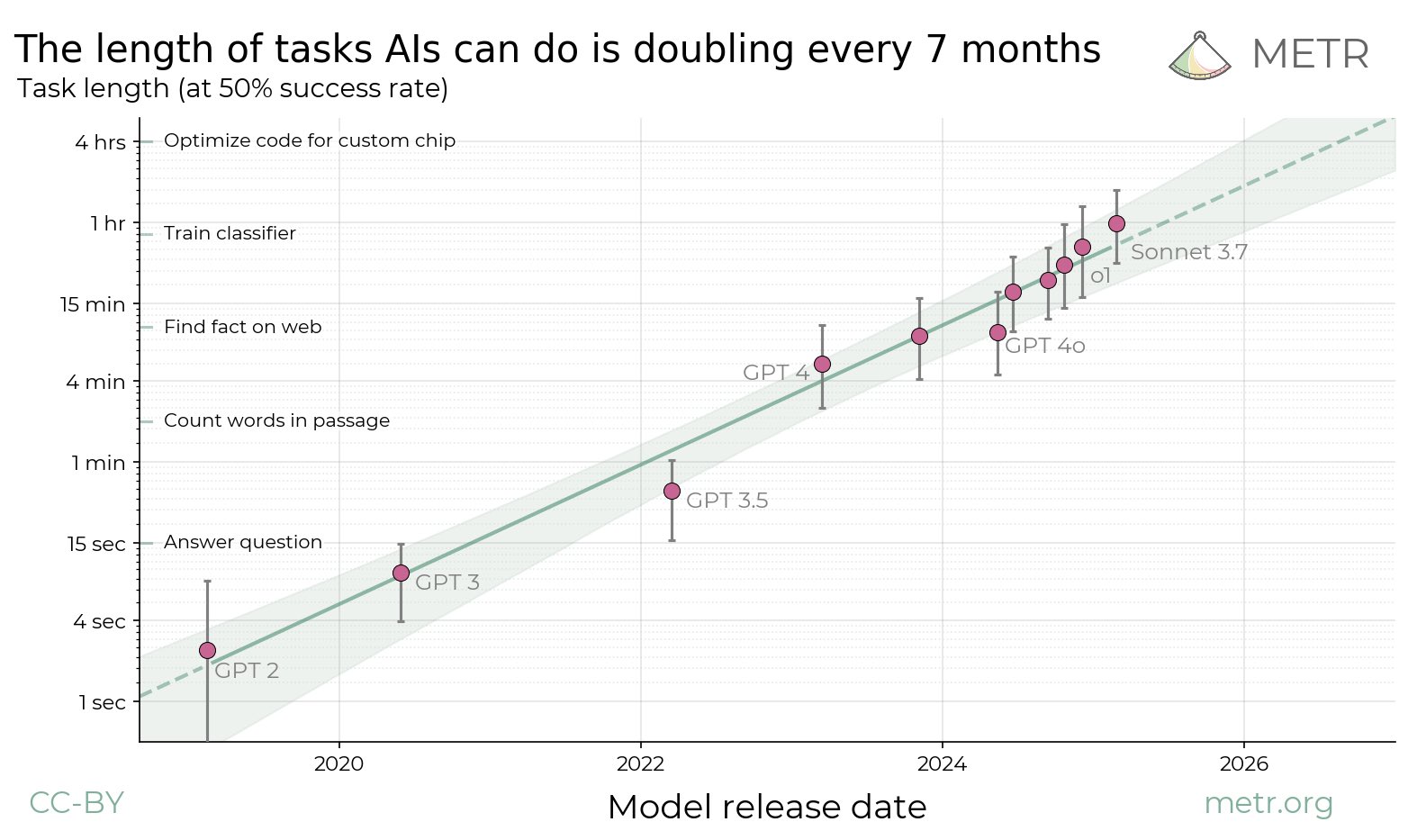

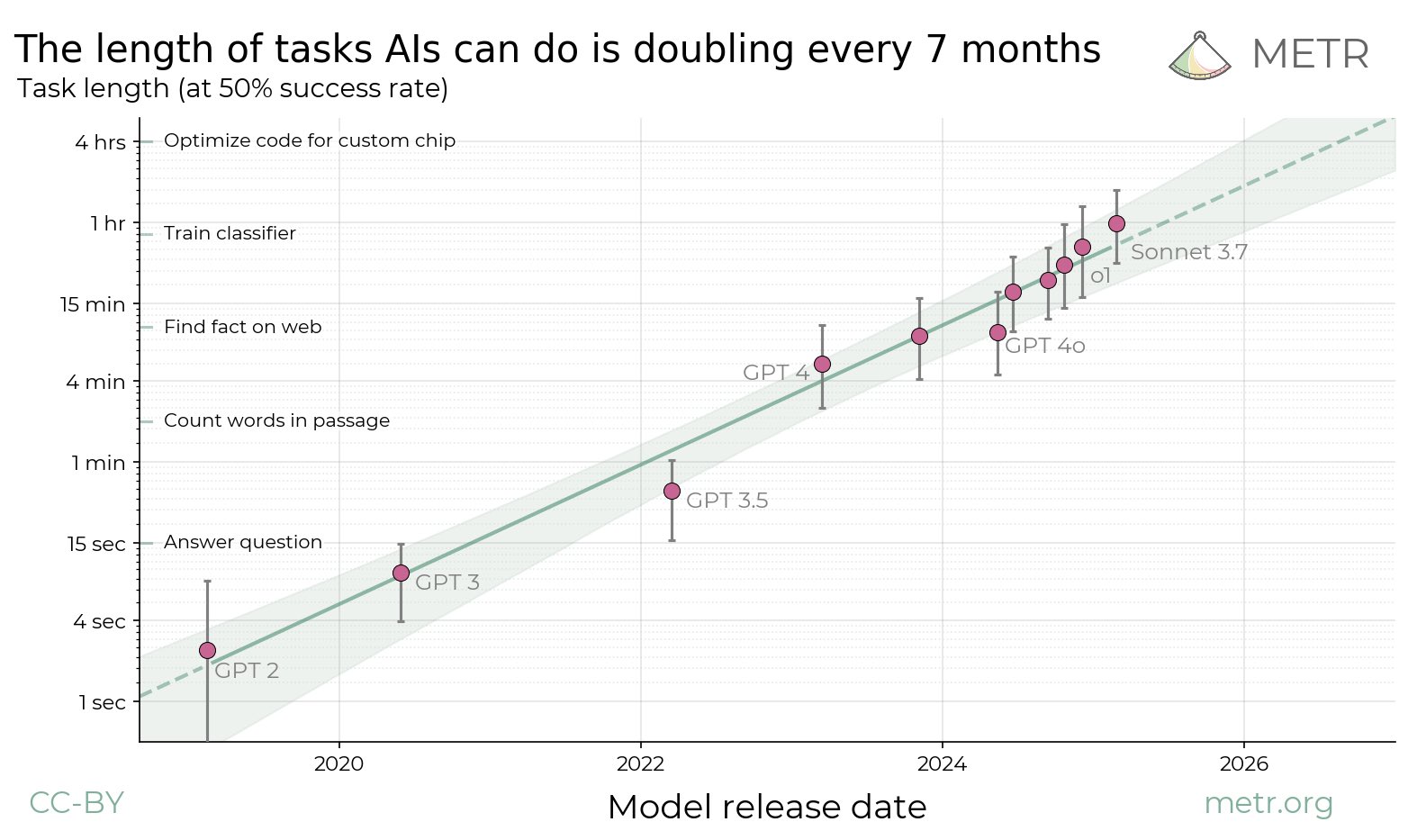

The length of autonomous tasks frontier models can complete has been roughly doubling every 4 to 7 months.

Mihaela van der Schaar

Cambridge AI in healthcare professor

Healthcare AI requires its own evaluation methodology distinct from general ML benchmarks.

Healthcare AI without healthcare-specific evaluation is research, not deployment.

Moritz Hardt

MPI Tübingen; algorithmic fairness, evals

Argues current AI benchmarking is dangerously brittle: leaderboards reward overfitting to fixed test sets and obscure how models behave under shift. Calls for adaptive, externally validated evaluation.

Benchmarks are the most valuable lever in machine learning, and the field treats them as if they were neutral measurements rather than artefacts shaping research.

Ozzie Gooen

Quantified Uncertainty Research Institute founder

Argues AI risk arguments need to be expressed as explicit probabilistic models that can be inspected, criticized, and updated; built Squiggle for this purpose.

Most AI risk discussions are poorly formalized. We can do much better with explicit probabilistic estimation, and that requires both better tools and better community norms.

Percy Liang

Stanford CRFM director; HELM benchmark author

Argues rigorous, public benchmarking is the infrastructure that lets governance judgments be made at all.

Transparency is not a nice-to-have. It is the precondition for any serious AI governance.

Peter Szolovits

MIT medical AI pioneer

Argues the medical-AI governance playbook, FDA-style pre-deployment validation and continued monitoring, is the right template.

We've been doing evaluation of clinical AI for 50 years. The lesson is: the evaluation is the governance.

Rishi Bommasani

Stanford CRFM; Foundation Model Transparency Index lead

Publishes the Foundation Model Transparency Index; argues measurable transparency scores are the right instrument for governance.

Without transparency, governance cannot be meaningful.

Sam Charrington

Host of The TWIML AI Podcast

Editorial position consistently emphasizes empirical, technically grounded conversations about specific systems and benchmarks rather than ideological framings.

What matters is not the meta-debate about AI risk, it's the specific empirical questions: what these systems can actually do, how they fail, and what we are doing about both.

Sayash Kapoor

Princeton PhD; AI Snake Oil co-author

Pushes for rigor in AI evaluation; critiques common eval methodology as misleading about generalisation.

Leakage and overfitting in AI benchmarks have produced a whole generation of irreproducible capability claims.

Spencer Greenberg

Clearer Thinking founder; rationality researcher

Argues that calibration, prediction tracking, and concrete probabilistic reasoning should anchor AI risk debates; runs ClearerThinking.org tools to push the practice.

Most arguments about AI risk are not phrased in terms of testable predictions. We can fix that by literally writing down our beliefs and tracking them over time.

Stephen Casper

MIT PhD researcher; red-teaming and model audit

Argues empirical red-teaming reveals that current safeguards are not robust; auditing must become standard infrastructure.

Example after example of state-of-the-art safeguards get pretty reliably broken. That's the empirical reality.

Tatsunori Hashimoto

Stanford; CRFM; LLM evaluation and security

Argues robust evaluation requires carefully constructed datasets that resist contamination and reveal real generalization, not leaderboard-fitted numbers.

The dominant evaluation paradigm in NLP is fundamentally susceptible to contamination and overfitting. We need to design tests that are robust to the way models actually develop.

Toby Shevlane

DeepMind model evaluations researcher

Helps design dangerous-capability evaluations and advocates for their adoption as the load-bearing governance artefact.

Dangerous capability evaluations are the minimum viable governance instrument for frontier AI.

Trishan Panch

Wellframe co-founder; Harvard health AI

Argues clinical AI requires evidence-based deployment standards akin to drug trials.

Medical AI without clinical-grade evidence is malpractice with extra steps.

Yu Su

Ohio State; AI agents and reasoning

Argues real-world agent evaluations, where the agent must take actions in actual environments, surface different capability and safety properties than synthetic benchmarks.

We benchmark LLM agents on real, live websites. Performance gaps between lab benchmarks and real-world deployment are large, and they reveal where capability claims most often overreach.

Zico Kolter

CMU professor; OpenAI safety board chair

Argues robust evaluations and adversarial testing are the load-bearing safety practices; oversees these reviews at OpenAI as committee chair.

The Safety and Security Committee reviews safety processes for major model releases and has the authority to delay launches if safety concerns are not adequately addressed.